Introduction

In this big data era, data is being generated at an incredible rate. From our smartphones and fitness trackers to large-scale business operations, the amount of data produced every second is staggering. With this flood of data, organizations face the challenge of processing, analyzing, and making sense of it all.

In this article, we will explore the challenges of data streaming in the era of big data. We will discuss how cloud-based platforms can help manage this complexity while also highlighting the cost implications that come with them. Additionally, we will introduce innovative strategies for optimizing data flow architecture, including Sedai's autonomous optimization solutions.

The Era of Big Data

Big data is a term that describes the enormous volume of data generated every day. This data comes from various sources, such as social media, sensors, and online transactions. As technology advances, the amount of data we produce continues to multiply.

We often do not realize how much data we create in our daily lives. When we use our smartphones to check email or track fitness goals, we contribute to this vast pool of information, and every click, message, and step adds to it.

Many industries benefit from big data. For example, in banking, advanced systems can detect fraudulent transactions in real time. In healthcare, artificial intelligence helps researchers analyze complex data and develop new treatments faster. These examples show how big data can lead to better decision-making and improved services.

While big data is a game-changer, it also comes with significant challenges:

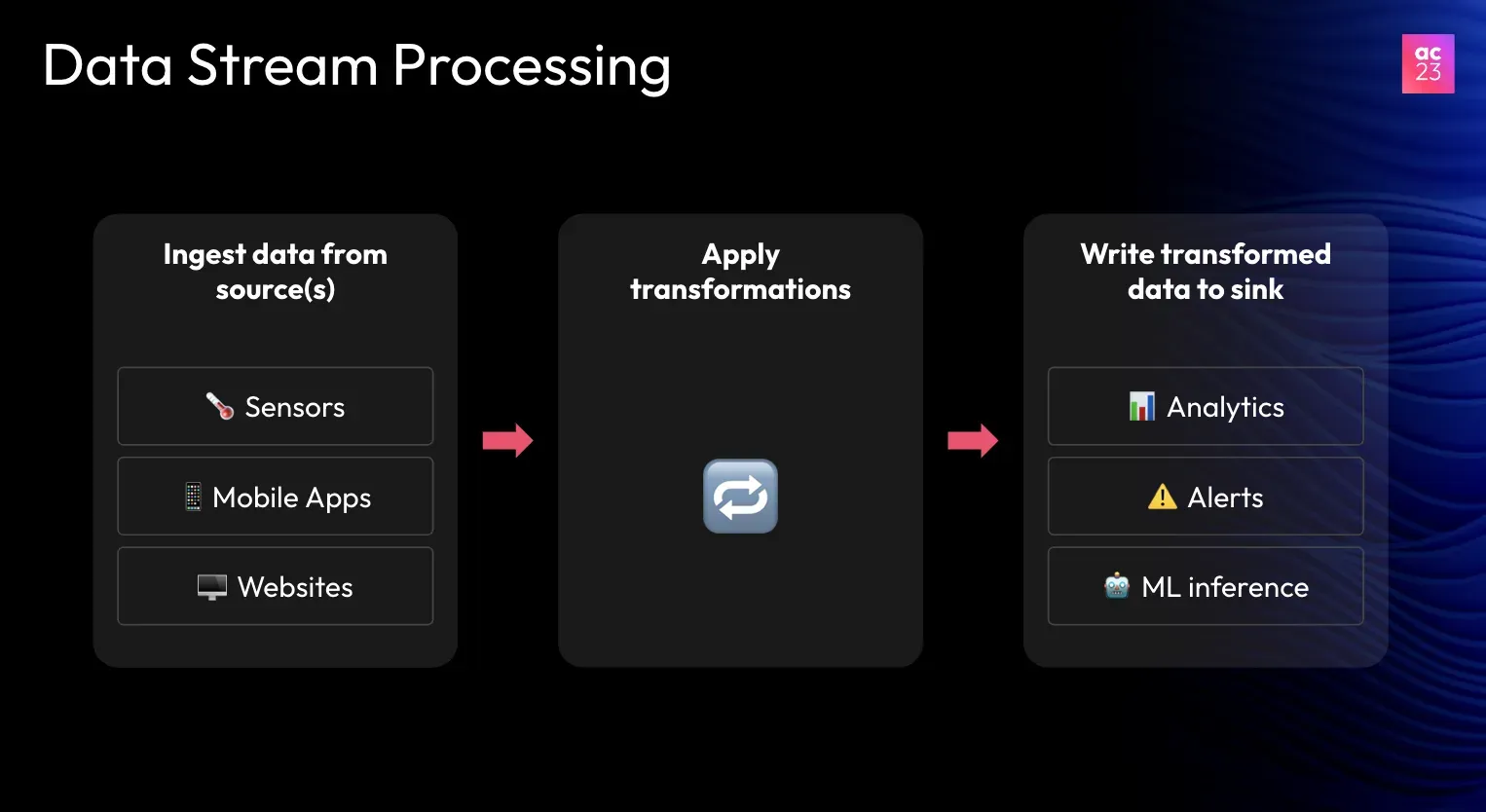

- Massive Data Influx: The data generated from devices, social media, and web applications is processed through complex data streaming pipelines. The data is cleansed, filtered, and transformed before reaching its destination for analysis.

- Cloud-Based Solutions: As data volumes grow, cloud-based platforms such as Apache Spark, Databricks, Azure Data Factory, and Google Dataflow can help manage them. They offer scalable solutions with features like security and disaster recovery.

Cost Implications of Cloud Solutions

However, moving to the cloud presents its own set of challenges, particularly in managing costs.

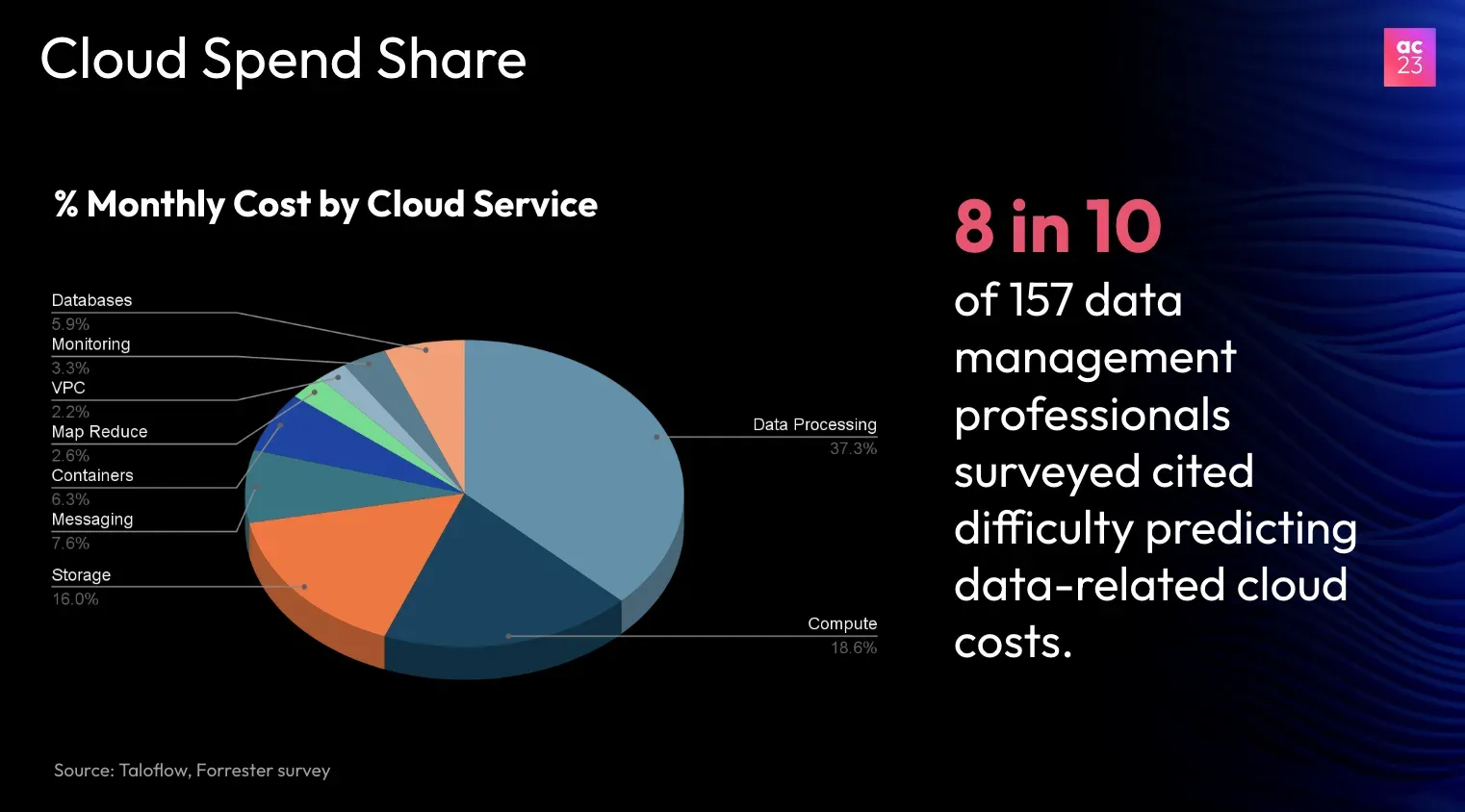

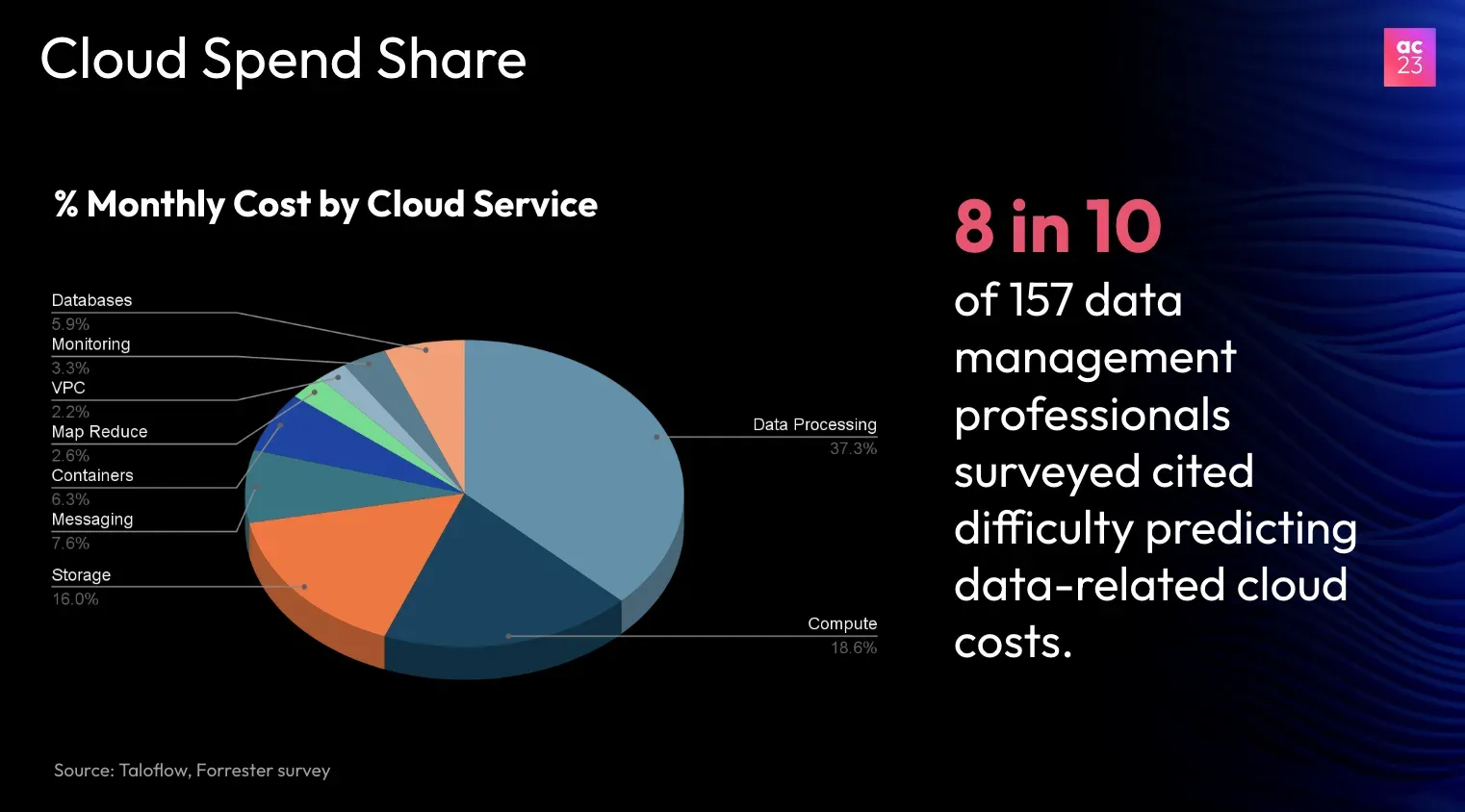

According to industry evaluations, data processing costs can make up as much as 40% of a company’s monthly cloud expenses.

In a survey by Forrester, 8 out of 10 data professionals reported difficulty in forecasting cloud-related costs.

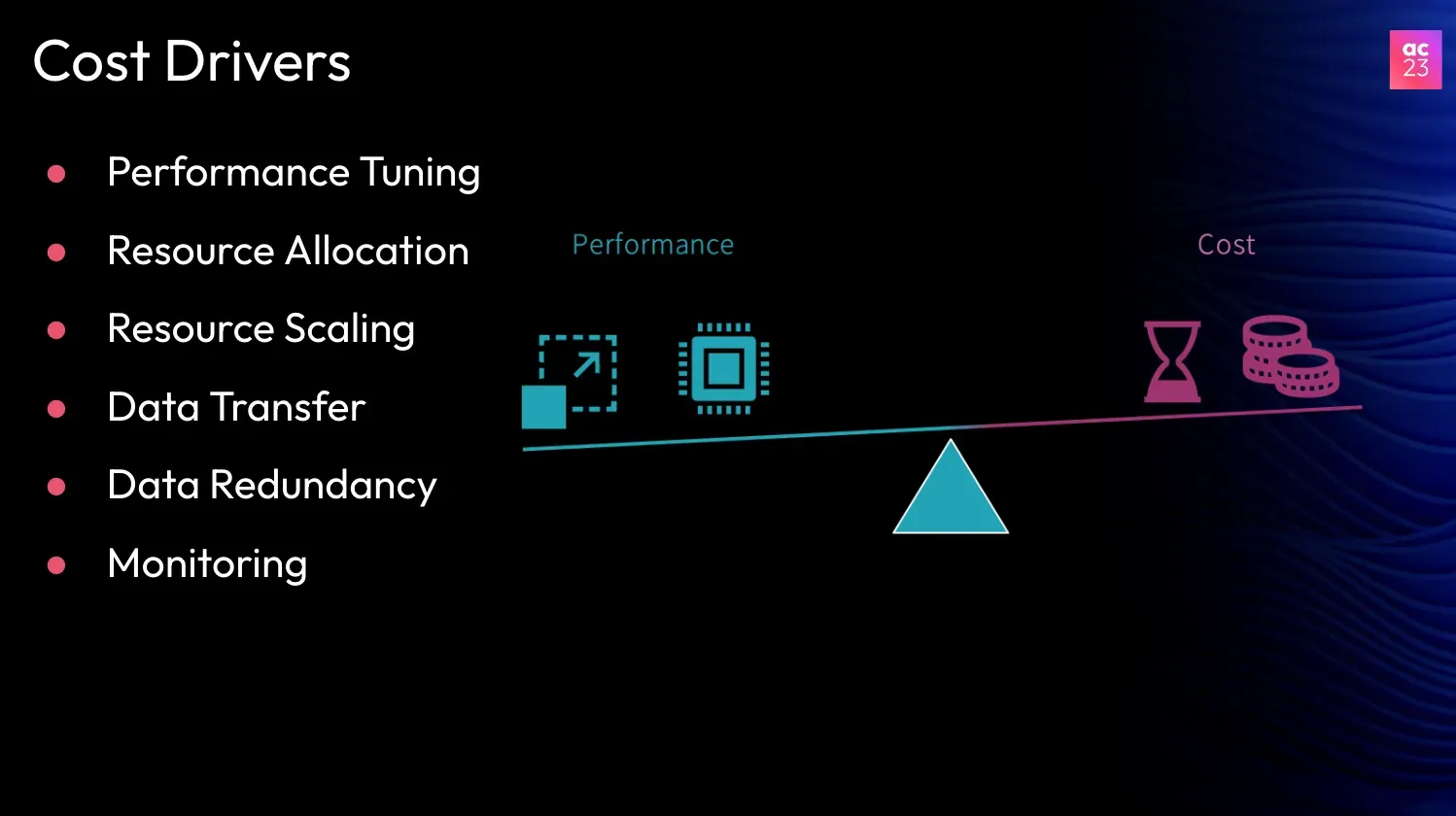

Forecasting is difficult because many factors contribute to the cost of a cloud data platform, including:

- Overallocated resources: If you provision more resources than you need, you still pay for the idle capacity.

- Resource scaling: If your workload fluctuates, you need to scale resources up and down to match it, and getting that wrong is costly.

- Data transfer costs: If you're transferring a lot of data in or out of the cloud, you'll be charged for it.

- Data redundancy costs: If you store multiple copies of your data, you pay to store every copy.

- Monitoring costs: The tools you use to track your data platform's performance add charges of their own.

In addition to these factors, there is also the challenge of balancing performance with cost. You want your data platform to be able to handle your workload efficiently, but you also don't want to pay more than you need to.

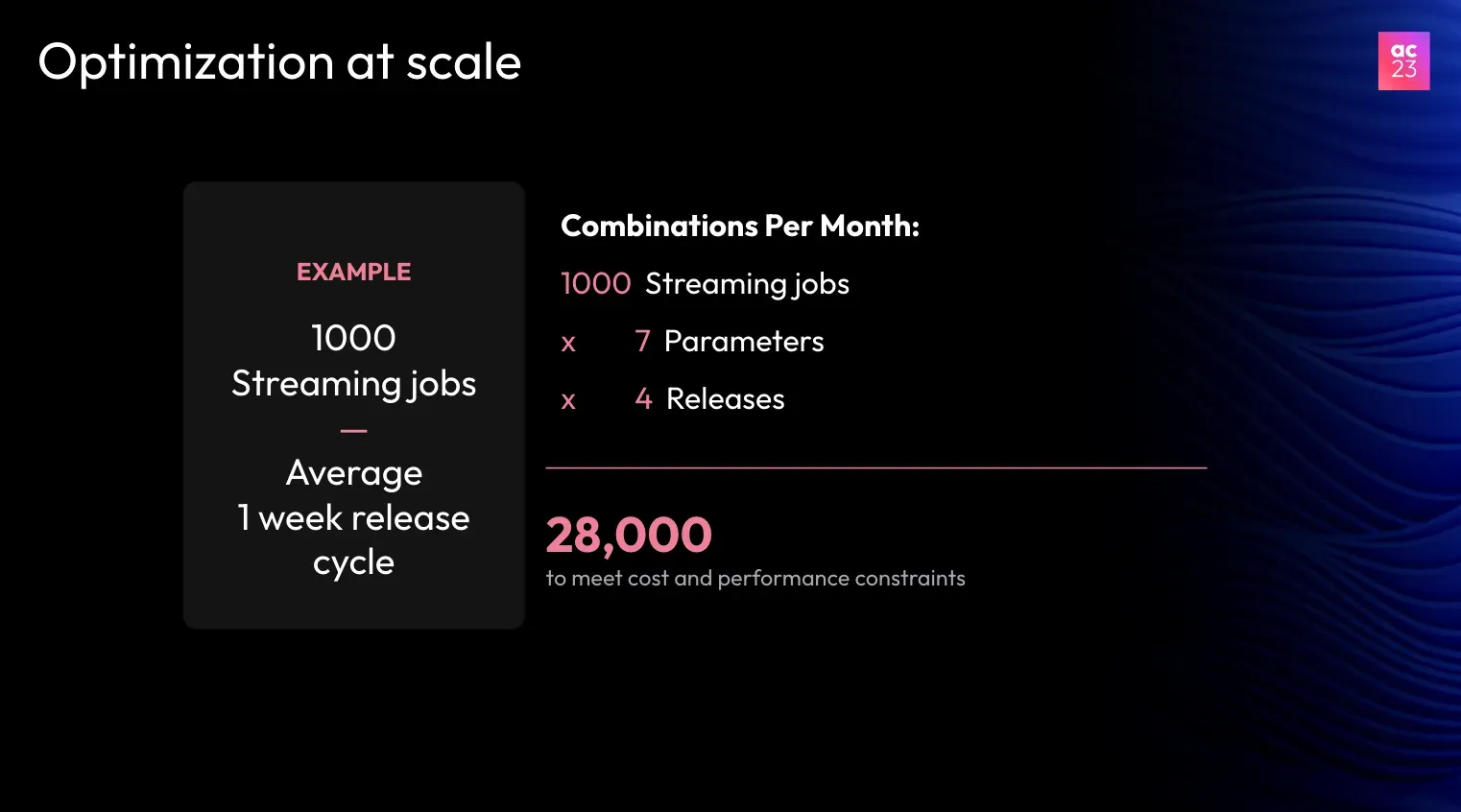

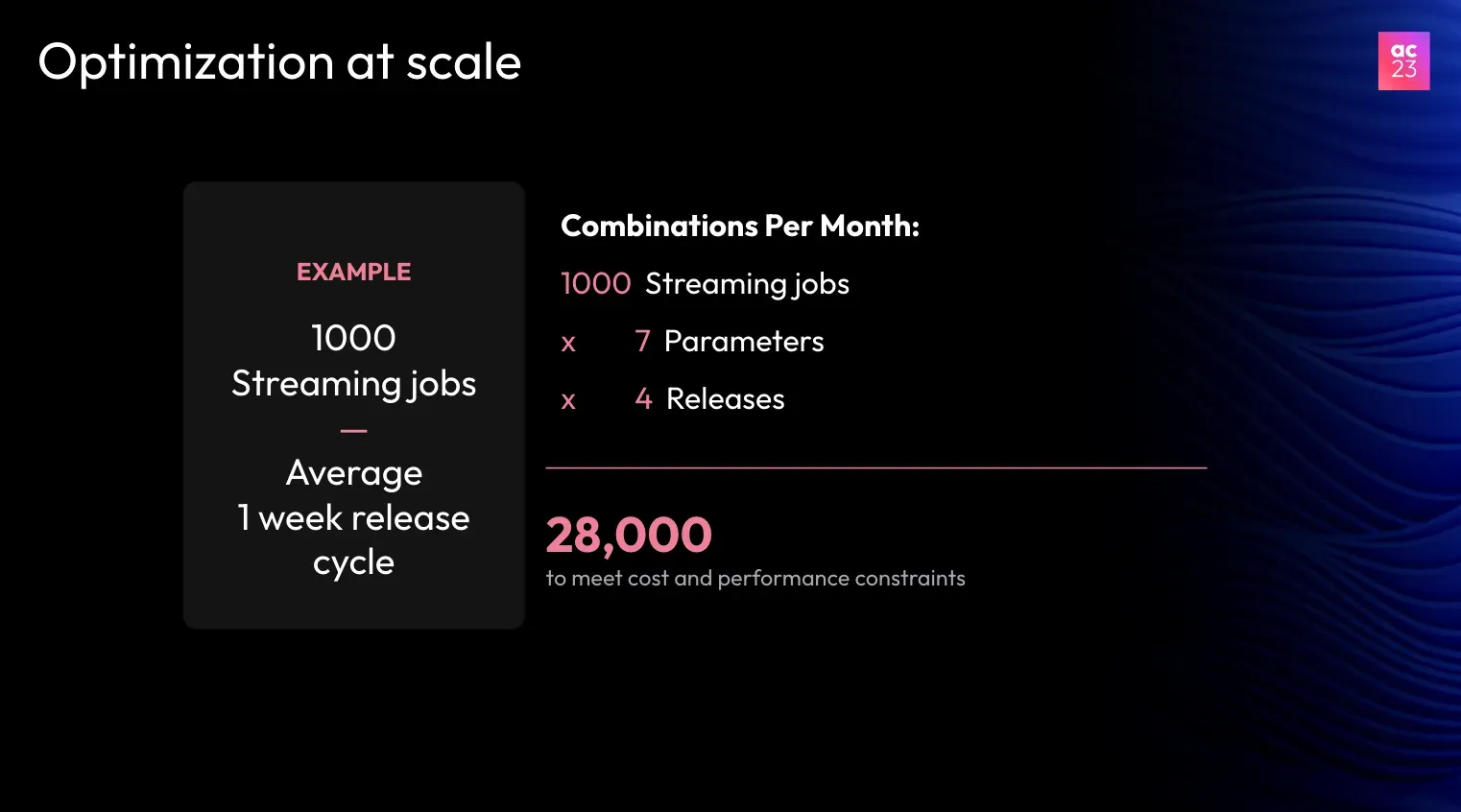

For instance, consider an enterprise with 1,000 streaming jobs and a weekly release schedule. If each job has about seven cost-related parameters, and each weekly release can change what the right settings are, that is 1,000 × 7 × 4 releases ≈ 28,000 parameter settings to revisit every month. That is far too many to handle manually, which makes optimization a complex and time-consuming task.
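The estimate is plain multiplication. The sketch below reproduces it, assuming about four weekly releases per month, and adds a second, hypothetical figure: if each of the seven parameters had just three candidate values to try (an assumption for illustration), exhaustively testing a single job would already mean 3^7 configurations.

```python
def monthly_tuning_decisions(jobs: int, params_per_job: int, releases_per_month: int) -> int:
    """Parameter settings to revisit per month if every release may change them."""
    return jobs * params_per_job * releases_per_month


def configurations_per_job(params_per_job: int, values_per_param: int) -> int:
    """Size of a full grid search over one job's parameters."""
    return values_per_param ** params_per_job


# Figures from the example: 1,000 jobs, ~7 parameters, weekly releases (~4 per month).
print(monthly_tuning_decisions(1000, 7, 4))  # 28000
# Hypothetical: 3 candidate values per parameter.
print(configurations_per_job(7, 3))  # 2187
```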

Optimizing Dataflow Architecture

Before we dive into the solutions, let’s explore the Dataflow architecture.

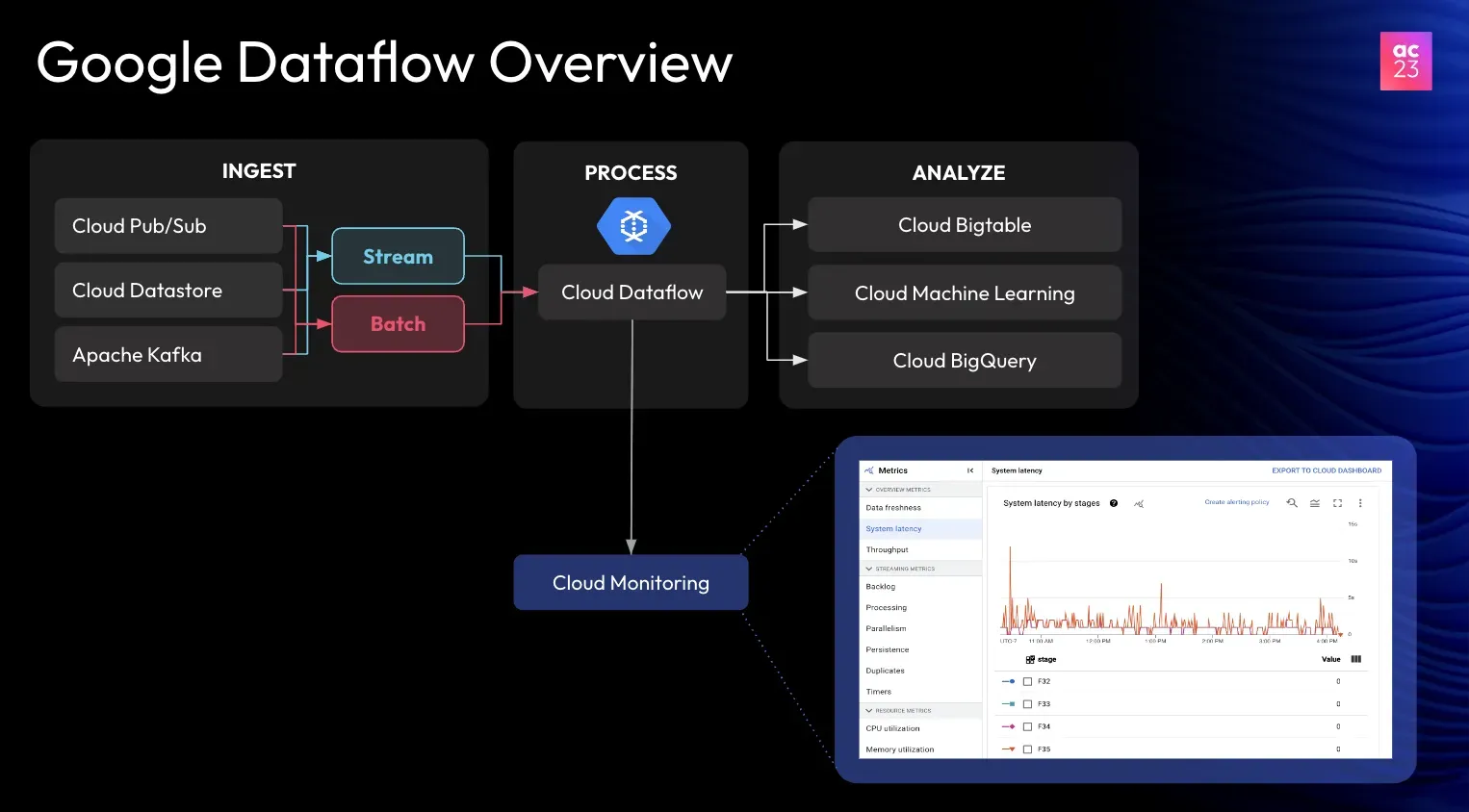

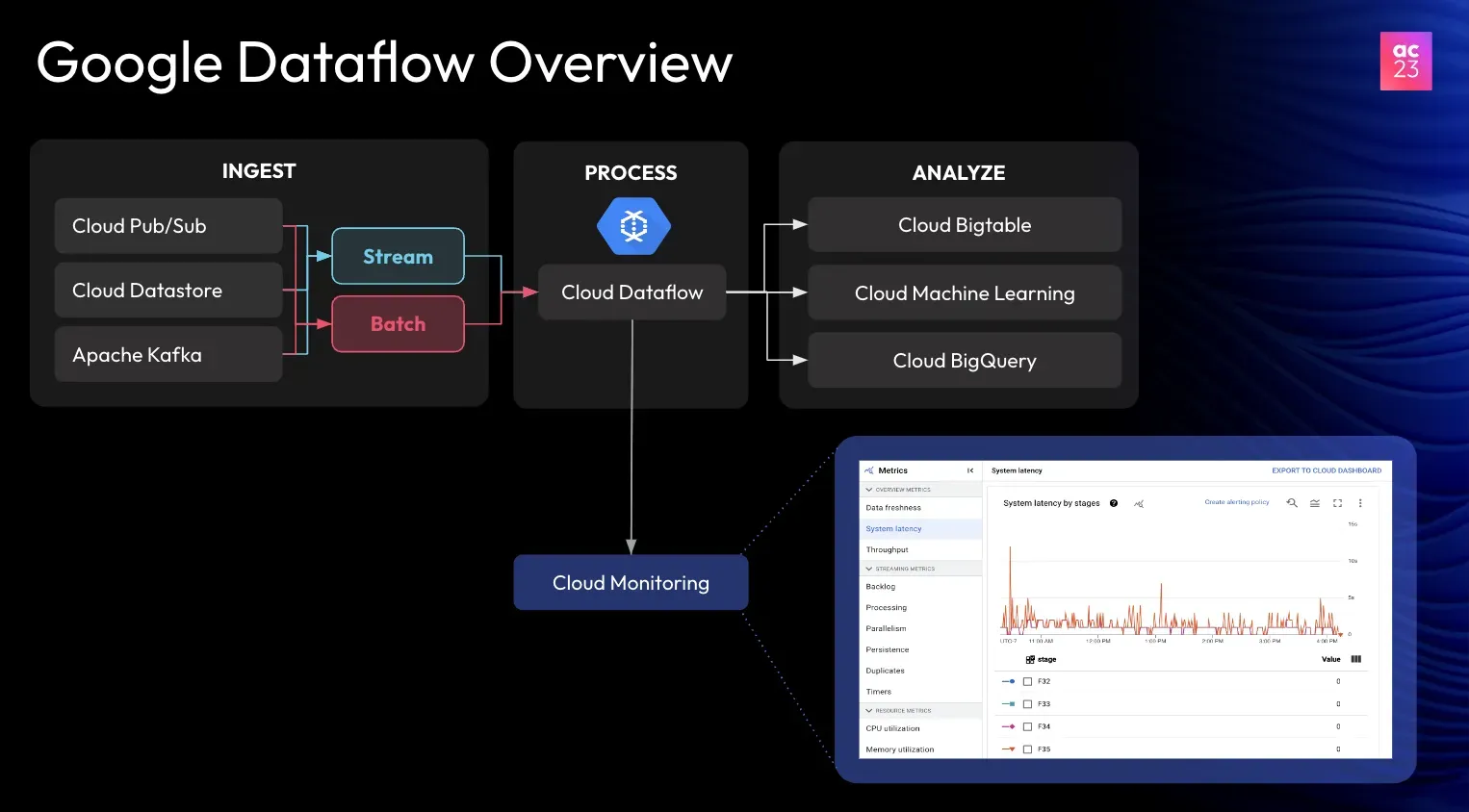

Google Dataflow is a fully managed cloud service for both batch and stream processing of large-scale data. Pipelines that run on it are written with the Apache Beam SDKs.

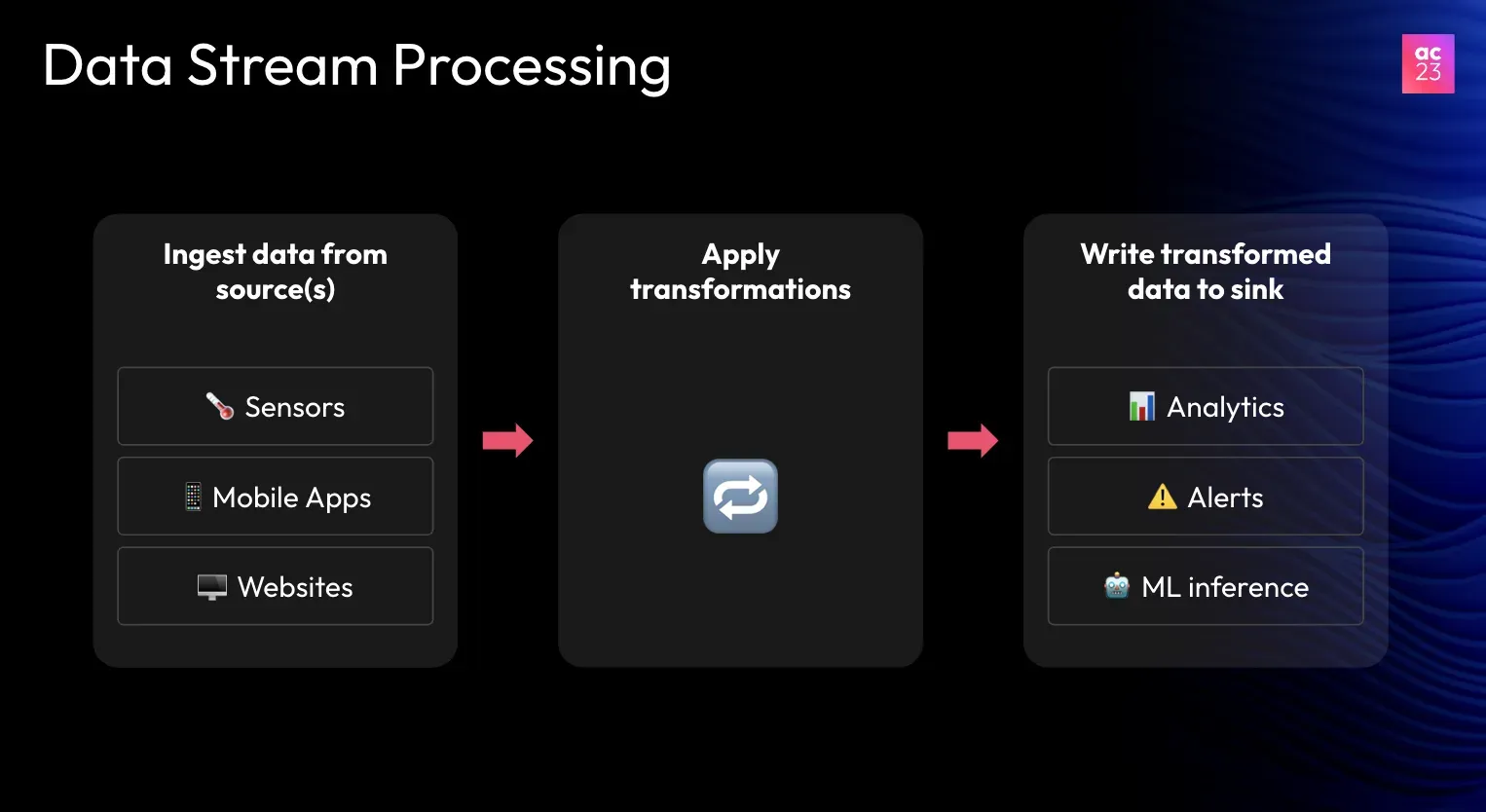

Here's a quick overview of how Dataflow works:

- Data ingestion: Dataflow can ingest data from various sources, including Pub/Sub, Kafka, and Cloud Storage.

- Data transformation: Dataflow transforms your data (for example, by cleansing and filtering it) and passes the results on for analytics and inference.

- Data delivery: Dataflow can deliver your data to multiple destinations, such as BigQuery, Cloud Storage, and Pub/Sub.
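To make the three stages concrete, here is a plain-Python stand-in that chains them as generators. It is not the Apache Beam API that real Dataflow pipelines use, and the message format and the `user` and `amount` fields are hypothetical. In an actual Dataflow job, the service distributes each stage across worker VMs instead of running everything in one process.

```python
import json
from typing import Iterable, Iterator


def ingest(raw_messages: Iterable[str]) -> Iterator[dict]:
    """Stand-in for a source such as Pub/Sub: parse each raw JSON message."""
    for raw in raw_messages:
        yield json.loads(raw)


def transform(records: Iterable[dict]) -> Iterator[dict]:
    """Cleanse and filter: drop records without an amount, normalize the user field."""
    for rec in records:
        if rec.get("amount") is None:
            continue
        yield {"user": rec["user"].strip().lower(), "amount": float(rec["amount"])}


def deliver(records: Iterable[dict], sink: list) -> None:
    """Stand-in for a sink such as BigQuery: append results to an in-memory list."""
    for rec in records:
        sink.append(rec)


messages = [
    '{"user": " Alice ", "amount": "12.5"}',
    '{"user": "bob", "amount": null}',
    '{"user": "CAROL", "amount": 3}',
]
table: list = []
deliver(transform(ingest(messages)), table)
print(table)
# [{'user': 'alice', 'amount': 12.5}, {'user': 'carol', 'amount': 3.0}]
```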

When a Dataflow job is launched, the service allocates a pool of worker virtual machines (VMs) to run the pipeline. Dataflow generates more than 40 metrics related to data processing, such as:

- Data freshness

- Throughput

- System lag

- Parallelism

Additionally, it provides around 60 metrics related to VM performance, including:

- CPU usage

- Memory utilization

- Disk space

Resource Management

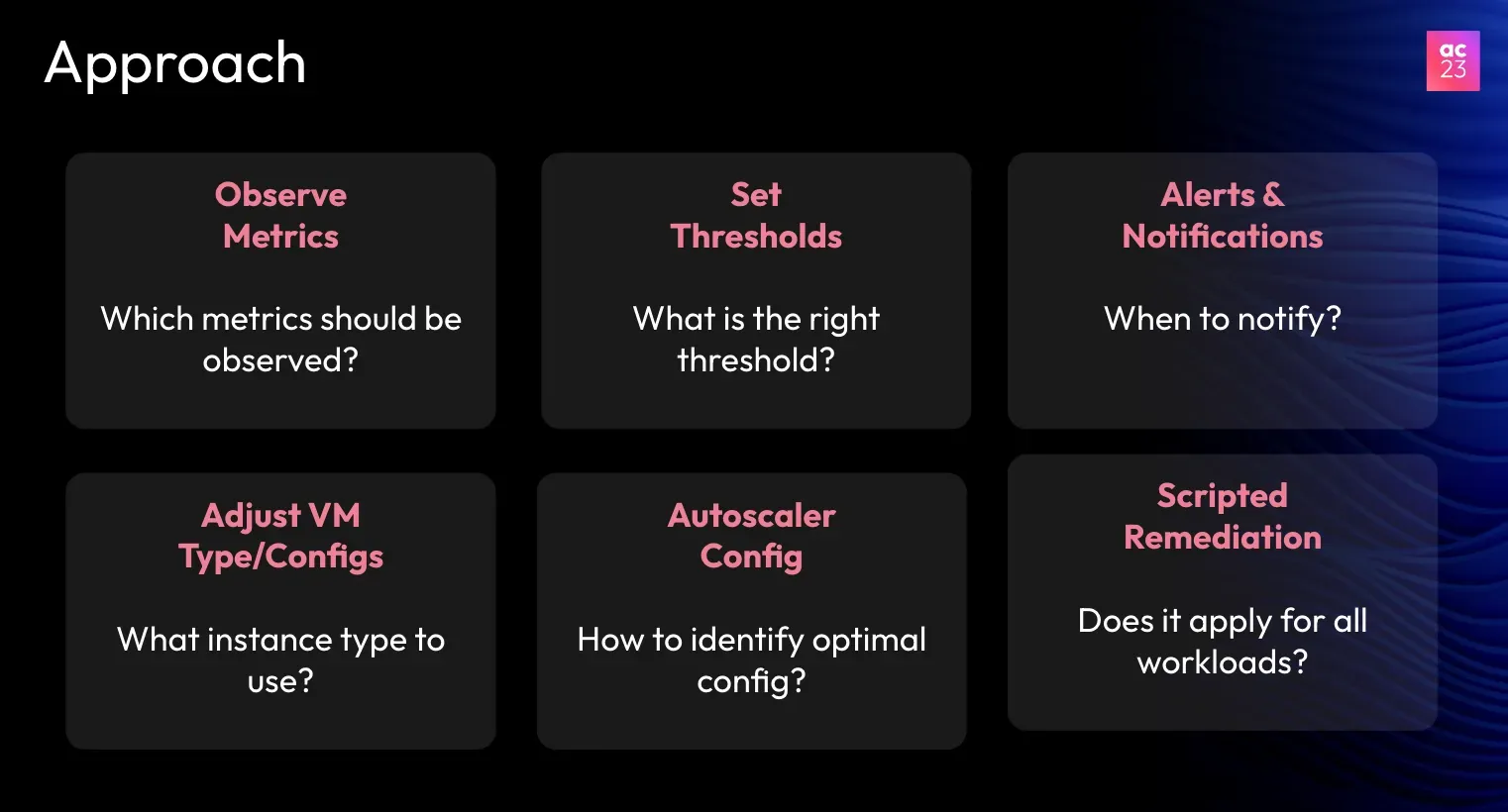

Managing resources effectively is crucial for optimal performance. Organizations need to adjust CPU and memory settings based on workload. For jobs with varying loads, autoscaling can be configured to adjust resources automatically based on demand.

Each job may have different requirements based on its type (streaming or batch) and the input data it processes. Therefore, a one-size-fits-all solution is not effective. Customizing resource allocation for each job type ensures better performance and cost efficiency.
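Customizing allocation per job can start with something as simple as matching a machine type to each job's observed peak CPU and memory. The sketch below is a hypothetical heuristic, not a Dataflow feature. The peak figures, the 20% headroom, and the small catalog (a subset of Compute Engine machine types with their standard vCPU and memory sizes) are assumptions, and it treats fewer vCPUs, then less memory, as a rough proxy for lower cost instead of using real prices.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class MachineType:
    name: str
    vcpus: int
    memory_gb: float


# Illustrative subset of Compute Engine machine types (vCPUs, memory in GB).
CATALOG = [
    MachineType("n1-standard-2", 2, 7.5),
    MachineType("n1-standard-4", 4, 15.0),
    MachineType("n1-highmem-2", 2, 13.0),
    MachineType("n1-highmem-4", 4, 26.0),
    MachineType("e2-standard-4", 4, 16.0),
    MachineType("e2-highmem-2", 2, 16.0),
]


def pick_machine_type(peak_vcpus: float, peak_memory_gb: float,
                      headroom: float = 1.2,
                      catalog: Sequence[MachineType] = CATALOG) -> MachineType:
    """Return the smallest catalog entry that covers peak usage plus headroom."""
    need_cpu = peak_vcpus * headroom
    need_mem = peak_memory_gb * headroom
    fits = [m for m in catalog if m.vcpus >= need_cpu and m.memory_gb >= need_mem]
    if not fits:
        raise ValueError("no machine type in the catalog fits this job")
    # Fewest vCPUs first, then least memory: a rough stand-in for "cheapest".
    return min(fits, key=lambda m: (m.vcpus, m.memory_gb))


# A memory-heavy job: ~1.5 vCPUs and ~12 GB at peak.
print(pick_machine_type(1.5, 12).name)  # e2-highmem-2
# A CPU-heavier job: ~3 vCPUs and ~8 GB at peak.
print(pick_machine_type(3, 8).name)  # n1-standard-4
```

For the memory-heavy job, the heuristic moves away from the 4-vCPU default to a 2-vCPU machine with more memory, which is the kind of mismatch a one-size-fits-all configuration hides.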

Strategies for Dataflow Optimization

Businesses can implement several strategies for optimizing their dataflow architecture to maximize the efficiency and cost-effectiveness of data streaming. Here are some key strategies to consider:

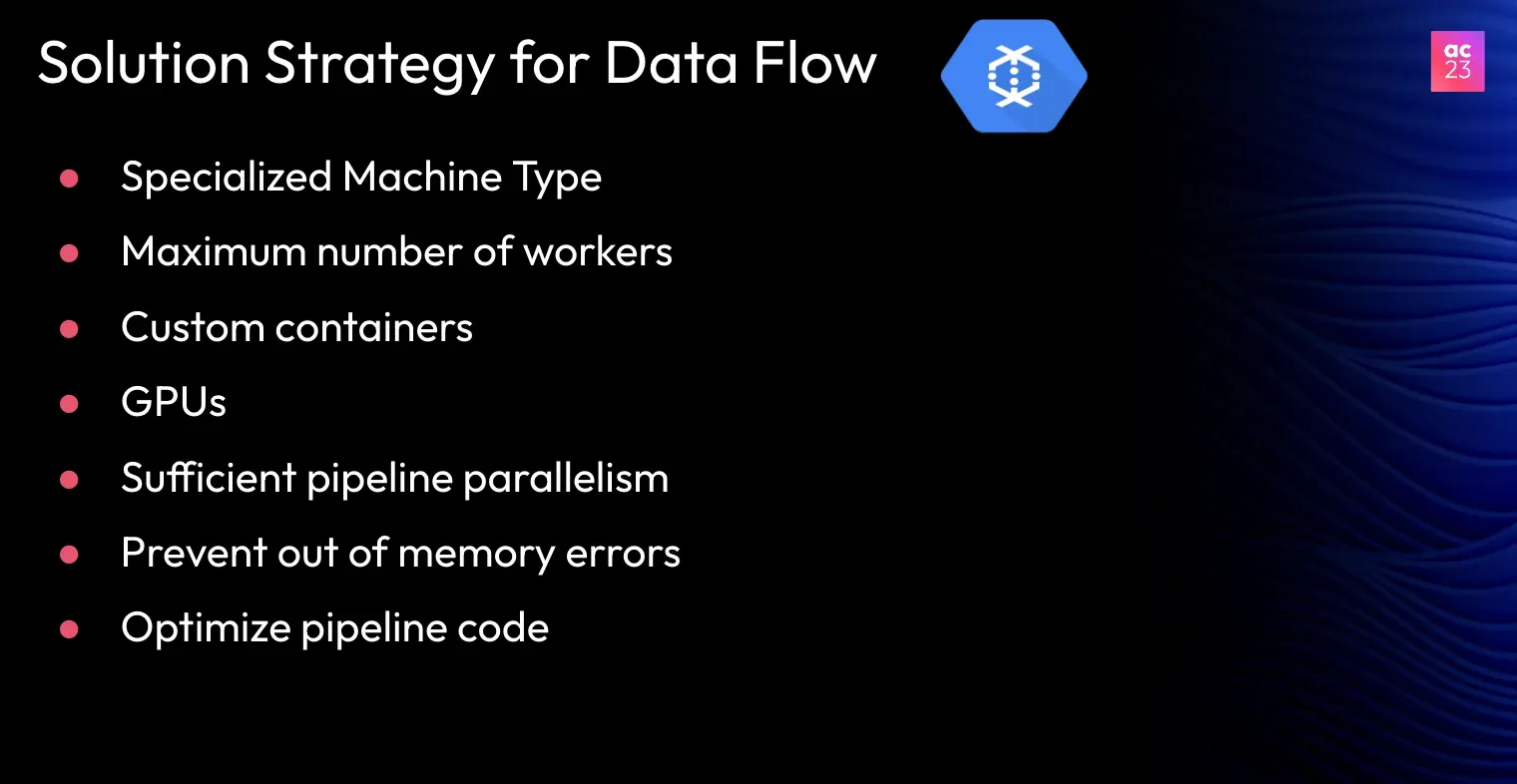

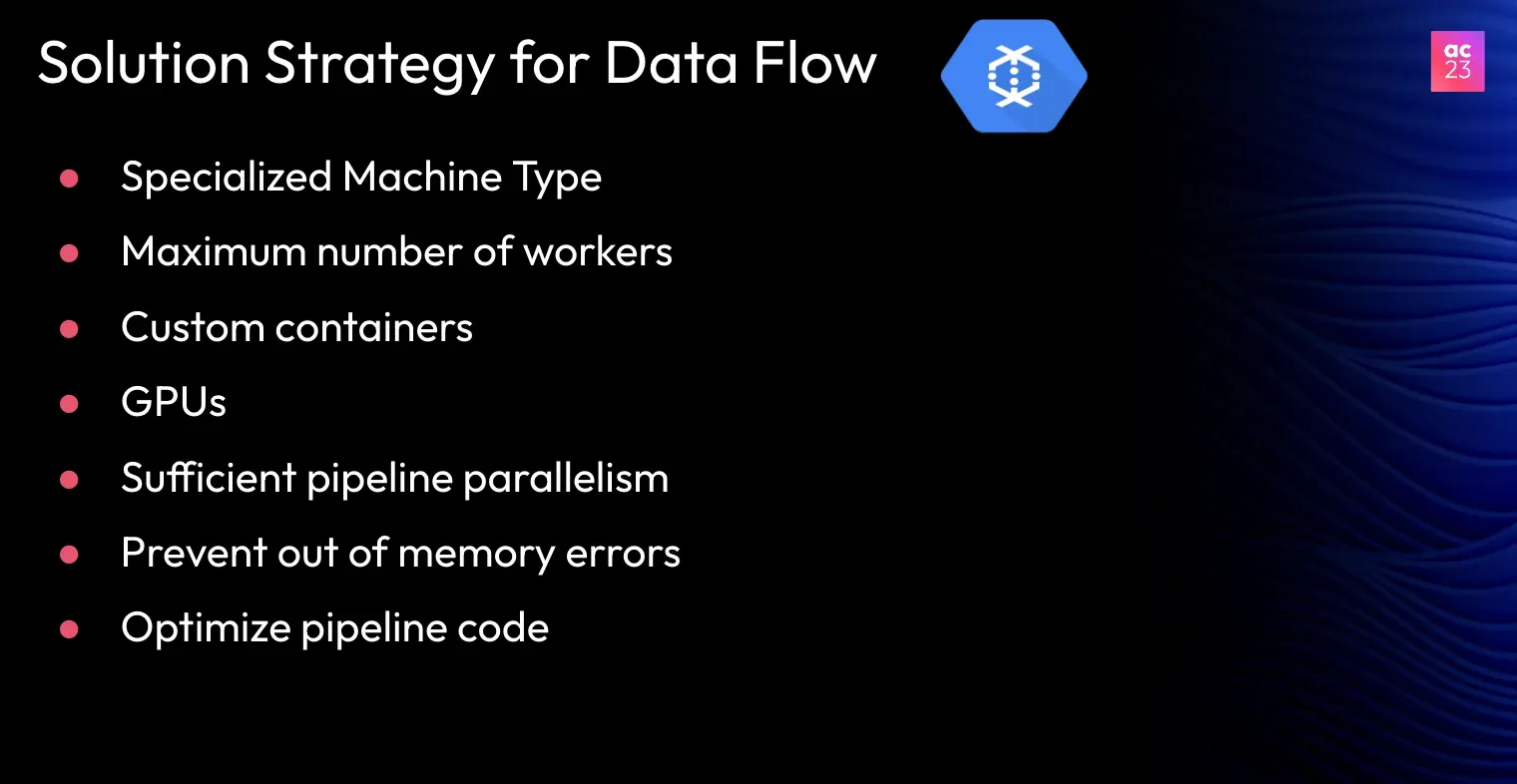

- Use Specialized Machine Types: Dataflow jobs often start with a default configuration, such as the n1-standard-4 machine type (4 vCPUs and 15 GB memory). However, this setup may not fit all jobs. For example, a memory-intensive job may need more memory but fewer CPUs, so a general-purpose machine leaves CPUs idle. Choosing a machine type based on your job's specific requirements can reduce unnecessary costs.

- Set Limits on Worker Scaling: To avoid excessive scaling, set an upper limit on the number of worker VMs. The default for streaming jobs is 100 workers, while batch jobs can scale up to 1,000. Without careful monitoring, the system can scale further than the workload justifies, resulting in higher costs. Setting appropriate limits helps control this.

- Use Custom Containers: Custom containers that bundle a pipeline's dependencies ahead of time can shorten Dataflow job startup, because workers no longer have to install those dependencies when they boot. This allows organizations to deploy their applications more quickly and efficiently.

- Incorporate GPUs: Graphics processing units (GPUs) can significantly speed up processing times for tasks involving machine learning or deep learning.

- Ensure Sufficient Parallelism: Make sure the pipeline exposes enough parallelism to keep every worker busy; with too little, workers you are paying for sit idle.

- Prevent Out-of-Memory Errors: Properly tune memory settings to prevent out-of-memory errors, which can cause job failures. Organizations should monitor memory usage and adjust settings as needed.

- Optimize Pipeline Code: Regularly reviewing and optimizing the code used in data pipelines can improve performance.
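Several of these strategies map directly onto Dataflow pipeline options. The sketch below assembles them as Apache Beam Python command-line flags. `dataflow_flags` is a hypothetical helper, and the project, region, bucket, and machine-type values are placeholders. The flag names themselves (`--machine_type`, `--max_num_workers`, `--sdk_container_image`, `--dataflow_service_options`) are standard Beam Python options for the Dataflow runner, but check them against the SDK version you use.

```python
from typing import List, Optional, Tuple


def dataflow_flags(
    project: str,
    region: str,
    temp_location: str,
    *,
    streaming: bool,
    machine_type: str = "n1-standard-4",
    max_num_workers: Optional[int] = None,
    container_image: Optional[str] = None,
    gpu: Optional[Tuple[str, int]] = None,
) -> List[str]:
    """Build Beam Python command-line options for a Dataflow job (hypothetical helper)."""
    flags = [
        "--runner=DataflowRunner",
        f"--project={project}",
        f"--region={region}",
        f"--temp_location={temp_location}",
        # Machine type chosen for this job instead of a one-size-fits-all default.
        f"--machine_type={machine_type}",
    ]
    if streaming:
        flags.append("--streaming")
    if max_num_workers is not None:
        # Upper bound for autoscaling, so a load spike cannot scale past this cap.
        flags.append(f"--max_num_workers={max_num_workers}")
    if container_image is not None:
        # Prebuilt custom container with the pipeline's dependencies baked in.
        flags.append(f"--sdk_container_image={container_image}")
    if gpu is not None:
        # GPUs need a machine family that supports them (for example N1, not E2).
        gpu_type, count = gpu
        flags.append(
            "--dataflow_service_options="
            f"worker_accelerator=type:{gpu_type};count:{count};install-nvidia-driver"
        )
    return flags


flags = dataflow_flags(
    "my-project", "us-central1", "gs://my-bucket/tmp",  # placeholder values
    streaming=True, machine_type="e2-highmem-2", max_num_workers=20,
)
print(flags)
```

The resulting list can be passed to `PipelineOptions(flags)` from `apache_beam.options.pipeline_options` when the pipeline is constructed. Keeping the settings in one function makes it easy to give each job its own machine type and worker cap.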

The strategies above involve many parameters, such as CPU, memory, machine type, disk size, worker count, and parallelism.

Tuning all of them by hand across many jobs is tedious and time-consuming. There is a better approach.

The Need for a Better Approach – The Autonomous Solution

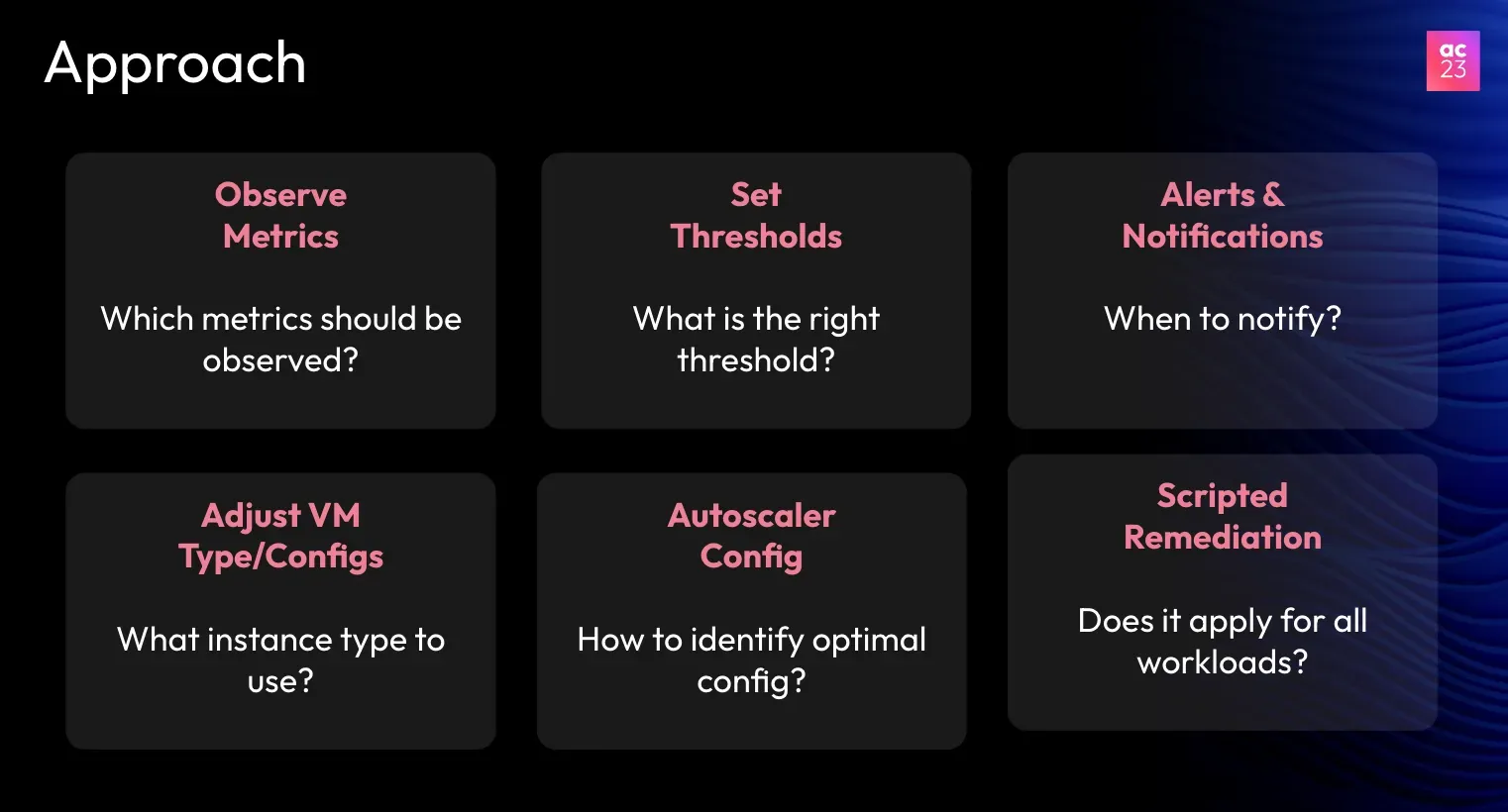

An autonomous approach can greatly simplify Dataflow optimization. It manages resources automatically and reduces the need for manual adjustments.

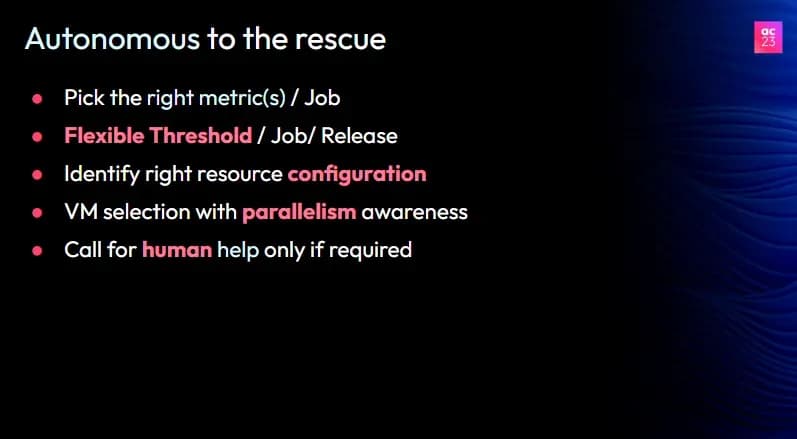

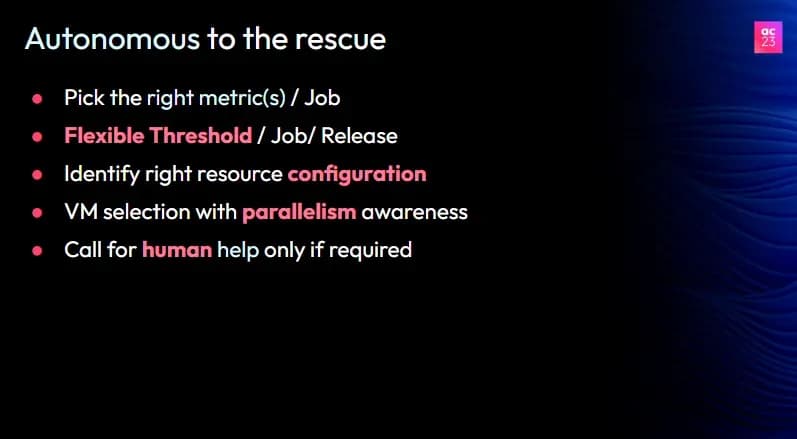

Here are the key features of this approach:

- Self-Monitoring: An autonomous system can automatically monitor all relevant metrics associated with Dataflow jobs.

- Automatic Threshold Detection: The system can determine the right thresholds for these metrics independently. This reduces the chances of alert fatigue, which occurs when too many alerts overwhelm the operations team.

- Intelligent Resource Allocation: The autonomous system selects the most suitable VM types based on each job's specific needs. It can identify whether a job requires more CPU, memory, or parallelism and adjust accordingly.

- Human Oversight: While the system operates autonomously, it involves human intervention only when necessary. If something goes wrong or there are significant changes in workload, human experts can make adjustments.
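One simple way to learn a threshold instead of hand-setting it is to derive it from each job's own history. The sketch below is an illustrative assumption, not Sedai's method: it places the threshold at the mean plus three standard deviations of past samples, and it alerts only when several consecutive samples breach it, one common way to reduce alert fatigue from short spikes. The metric values are made up.

```python
import statistics
from typing import Sequence


def learn_threshold(history: Sequence[float], k: float = 3.0) -> float:
    """Baseline threshold: mean of past samples plus k population standard deviations."""
    return statistics.fmean(history) + k * statistics.pstdev(history)


def should_alert(recent: Sequence[float], threshold: float, min_consecutive: int = 3) -> bool:
    """Alert only when the last `min_consecutive` samples all exceed the threshold."""
    if len(recent) < min_consecutive:
        return False
    return all(v > threshold for v in recent[-min_consecutive:])


# Hypothetical system-lag samples (seconds) from a healthy period.
lag_history = [10, 12, 11, 9, 13, 10, 11, 12]
threshold = learn_threshold(lag_history)
print(round(threshold, 2))  # 14.67
print(should_alert([20, 12, 22], threshold))  # False: the spike is not sustained
print(should_alert([20, 21, 22], threshold))  # True
```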

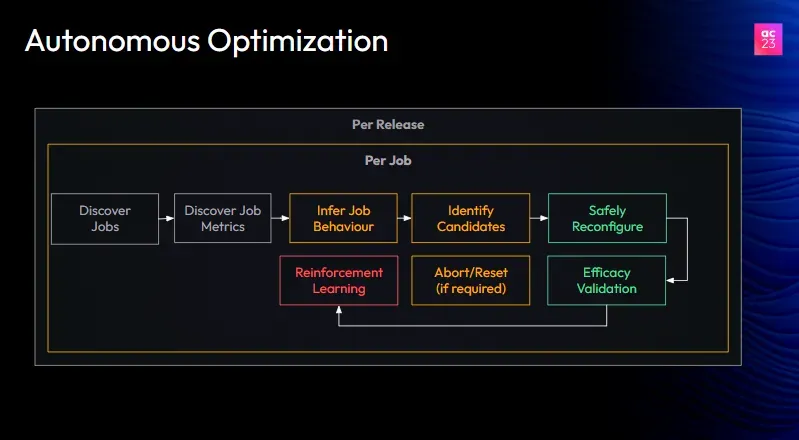

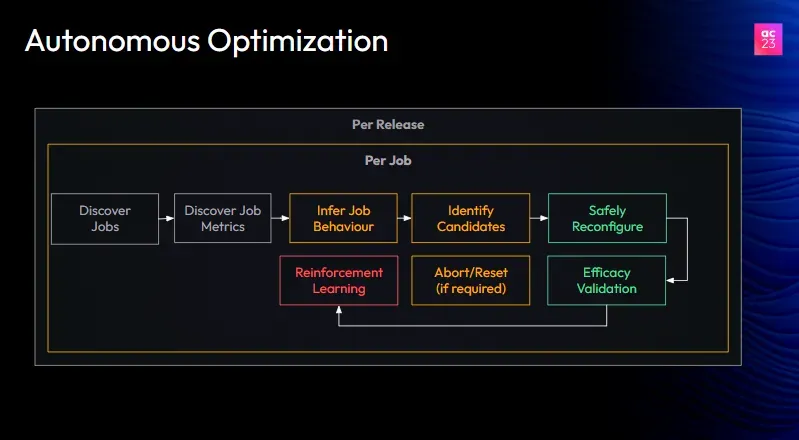

By correlating all this information, the autonomous system should be able to infer job behavior, estimate cost, and identify opportunities for optimization.

Once those opportunities are identified, the system should safely apply the new configurations and then evaluate their efficacy. This should run as a continuous loop, driven by reinforcement learning techniques, until no further optimization is possible.
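The loop above is framed in terms of reinforcement learning. The sketch below swaps in a much simpler technique, a greedy local search, only to show the shape of the apply, evaluate, repeat cycle; it is not Sedai's algorithm. `evaluate` stands in for safely rolling out a configuration and measuring it. Here it is a hypothetical toy model in which each worker costs one unit and fewer than six workers breaches the lag target.

```python
from typing import Callable, Dict, List, Optional, Tuple

Config = Dict[str, int]


def optimize(
    start: Config,
    neighbors: Callable[[Config], List[Config]],
    evaluate: Callable[[Config], Optional[float]],
    max_rounds: int = 20,
) -> Tuple[Config, float]:
    """Greedy loop: keep a candidate only if it meets the performance target
    (evaluate returns a cost rather than None) and is cheaper than the current best."""
    best = start
    best_cost = evaluate(start)
    if best_cost is None:
        raise ValueError("the starting configuration must meet the performance target")
    for _ in range(max_rounds):
        improved = False
        for candidate in neighbors(best):
            cost = evaluate(candidate)  # None means the candidate breached the target
            if cost is not None and cost < best_cost:
                best, best_cost, improved = candidate, cost, True
        if not improved:
            break  # no neighbor is better, so stop optimizing
    return best, best_cost


def worker_neighbors(cfg: Config) -> List[Config]:
    """Candidate changes: one worker fewer or one worker more."""
    w = cfg["max_workers"]
    return [{"max_workers": n} for n in (w - 1, w + 1) if n >= 1]


def toy_evaluate(cfg: Config) -> Optional[float]:
    """Hypothetical model: 1 cost unit per worker; under 6 workers, lag exceeds its target."""
    workers = cfg["max_workers"]
    return None if workers < 6 else float(workers)


print(optimize({"max_workers": 10}, worker_neighbors, toy_evaluate))
# ({'max_workers': 6}, 6.0)
```

Rejecting any candidate that breaches the performance target is what keeps the loop from trading reliability for savings; a real system would also roll back a configuration that looked good in evaluation but degraded in production.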

Introduction to Sedai's Autonomous Optimization

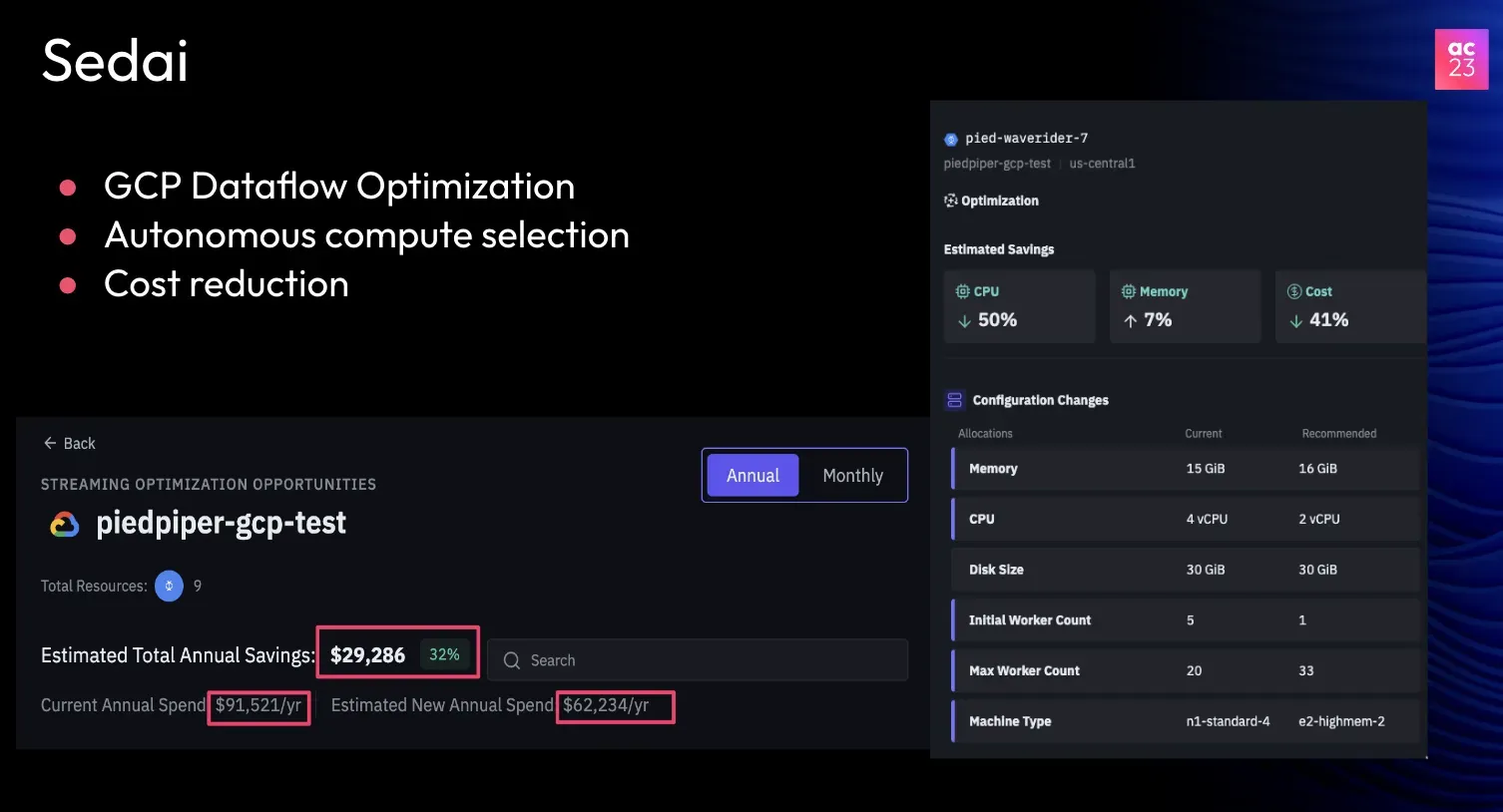

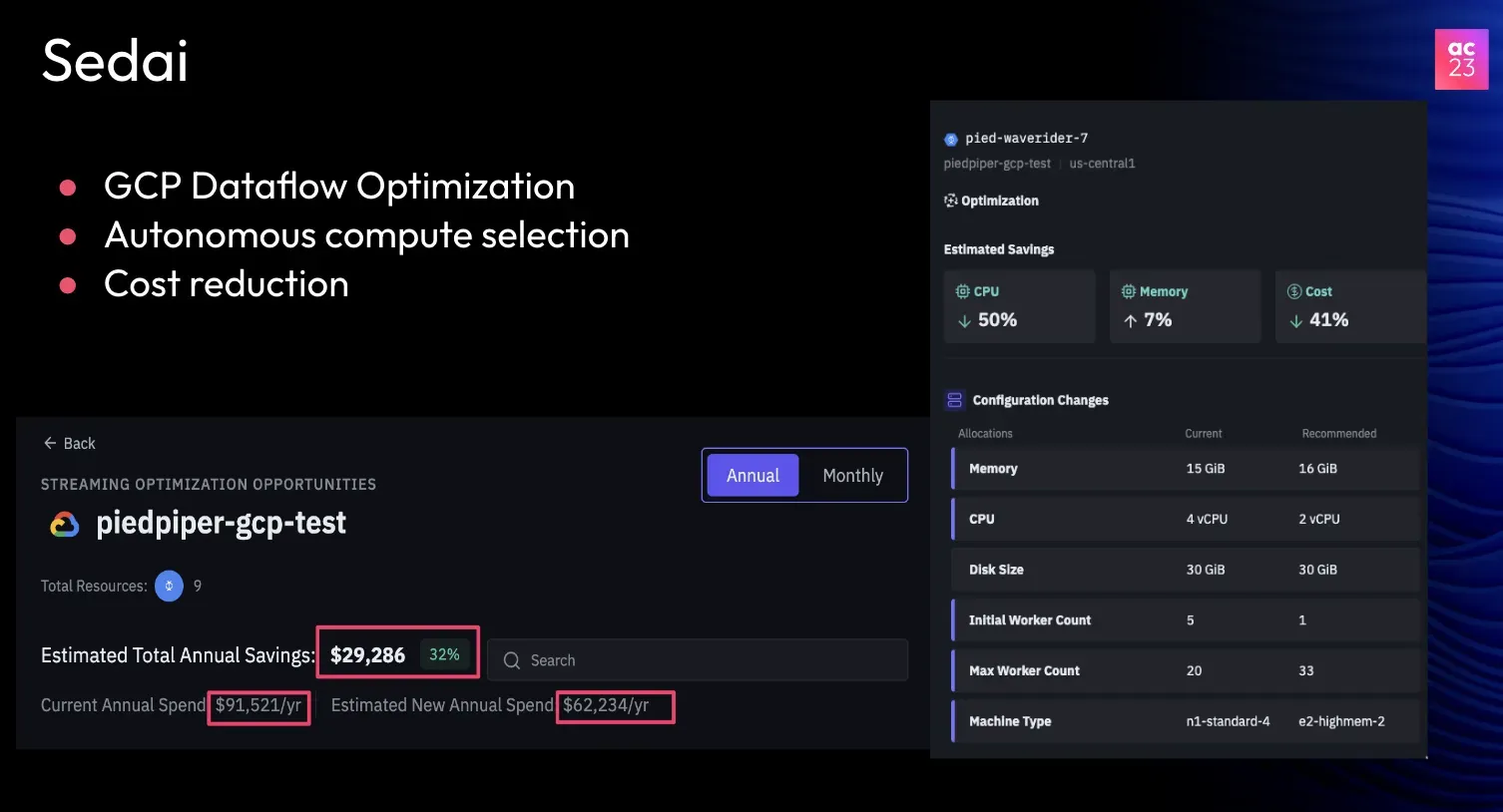

Sedai offers an innovative solution for optimizing Dataflow through its autonomous optimization technology. This tool is designed to enhance performance while reducing costs for data streaming jobs. Here are some key features and benefits:

- Cost Savings: Sedai's autonomous optimization can identify significant cost-saving opportunities. For instance, a recent analysis showed potential annual savings of around 32% for some Dataflow accounts.

- Intelligent Recommendations: The system provides tailored recommendations for optimizing Dataflow jobs. For example, it can suggest changing the machine type from an n1-standard-4 to a more cost-effective E2 machine. This adjustment can lead to a 40% reduction in costs for certain streaming jobs.

- Dynamic Resource Adjustment: Sedai's tool can automatically adjust the minimum and maximum number of workers needed for a job. This helps to make sure that resources are used efficiently based on the job's requirements.

While the focus is on Dataflow, Sedai is expanding its autonomous optimization capabilities to other data platforms, such as Databricks and Snowflake. This broadens the potential benefits for organizations using different data processing tools.

Start Your Journey to Cost-Efficient Data Management With Sedai!

We have discussed the challenges of managing cloud-based data platforms, specifically focusing on cost. We've also explored a solution that can help you optimize your data pipelines and save money.

Sedai's autonomous optimization solution is a great way to improve the performance of your Dataflow pipelines and reduce costs. This solution is easy to use and affordable.

Exploring Sedai's offerings could be beneficial for those looking to enhance their data streaming management. Consider signing up for a demo to see how Sedai can help optimize your data processes.