Note: this article is a transcript of a talk by Siddharth Ram, current CTO of Velocity Global, at autocon/22.

Introduction

This article examines Failed Customer Interactions (FCIs), a metric that measures system availability from the customer's point of view. By counting how many customer interactions succeed or fail, FCIs show how well a system actually serves its users. The article defines FCIs, explains how to measure them, and describes their role in achieving operational excellence. It also explores how Sedai, an autonomous management tool, helped reduce FCIs.

The Pillars of SaaS Success: Engineering and Operational Excellence

Running a SaaS company rests on two pillars: engineering excellence and operational excellence. Engineering excellence encompasses all the tasks involved in creating software and deploying it into production.

Engineering excellence involves a variety of activities. First and foremost, you need to write the code that powers your software, designing and developing the features that make your SaaS product unique and valuable. You also need to consider the user experience and make sure it matches your customers' needs and expectations. Achieving product-market fit is another critical aspect of engineering excellence, because your software has to address a specific market's demands effectively.

Once the software is developed, it goes through a continuous integration and continuous deployment (CI/CD) pipeline. The pipeline ensures that the software is tested, reviewed, and deployed into production, so that changes and updates are delivered efficiently and with minimal disruption.

Operational excellence, on the other hand, focuses on what happens to your software after it has been deployed to production. It involves the ongoing maintenance, management, and optimization needed to keep the software running smoothly and deliver an excellent customer experience: monitoring performance, addressing issues and bugs as they arise, and continuously improving the quality and efficiency of the product.

By prioritizing operational and engineering excellence, a SaaS company can ensure that its software is not only developed with precision and attention to detail but also maintained and optimized to provide a seamless user experience. These two aspects work hand in hand to create a strong foundation for a successful SaaS business, as they contribute to the overall reliability, performance, and customer satisfaction of the software product.

Tracking Metrics for Engineering and Operational Excellence

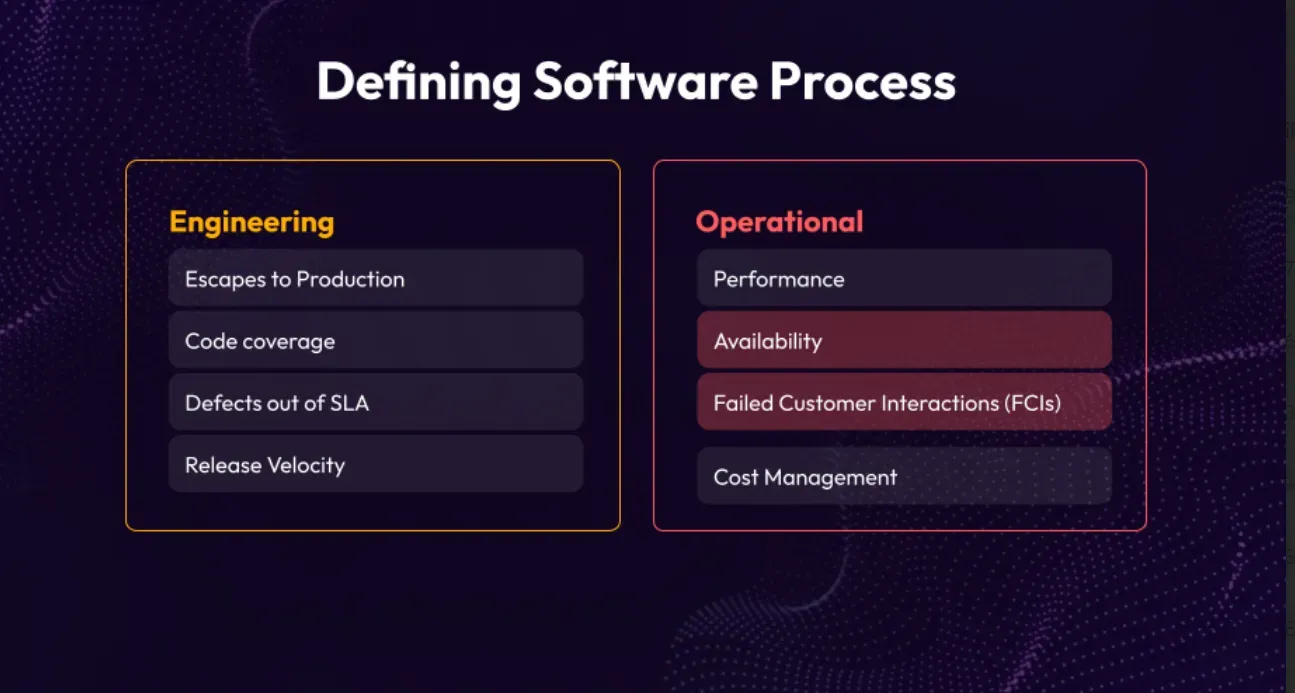

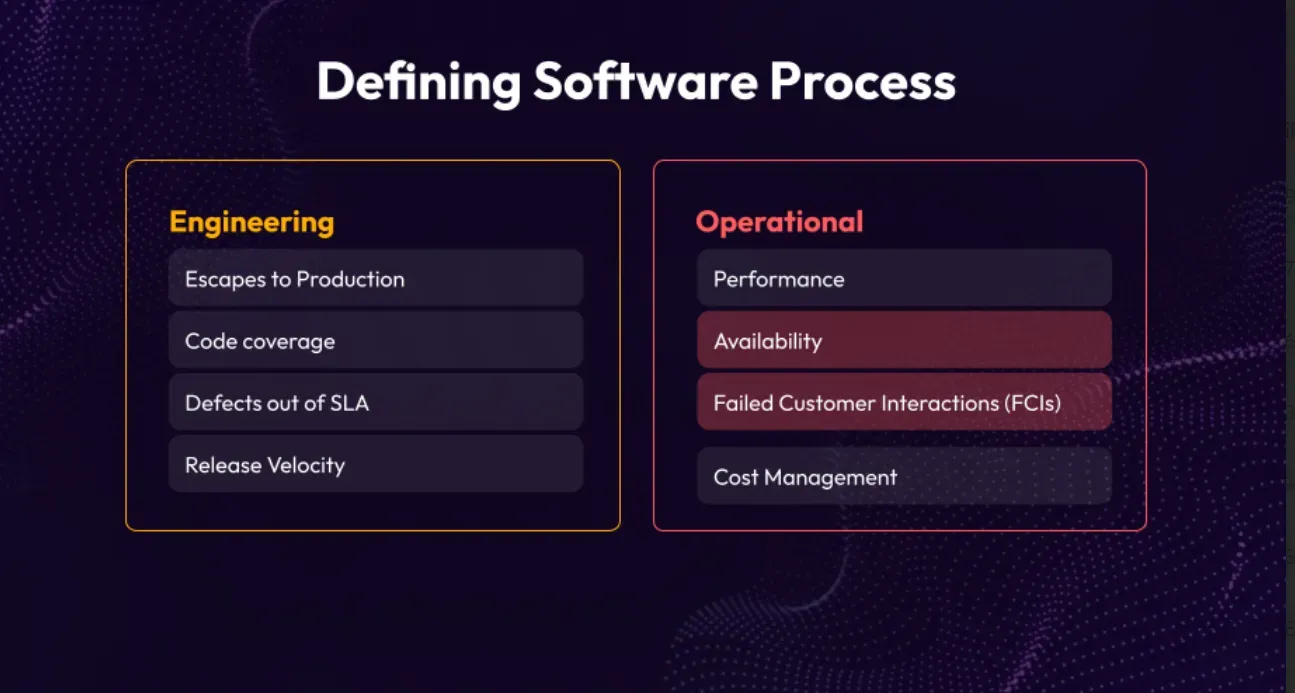

To track these aspects, certain metrics are commonly used. For engineering excellence, important metrics include escapes to production (defects that reach production), code coverage, defects out of SLA, and release velocity. Together they assess quality, test coverage, adherence to service level agreements, and the speed of software delivery.

Operational excellence metrics include performance, availability, FCI, and cost management. Performance measures the speed and efficiency of the software, availability measures whether it remains accessible to users, FCI measures how often customer interactions fail, and cost management tracks how efficiently resources are used.

Evaluating Availability and Failed Customer Interactions

Let's narrow our focus to two of these: availability and failed customer interactions. Availability has long been a well-established metric in the industry. It is defined as the total time minus the impacted time, divided by the total time. The metric originated when monolithic architectures dominated the technology landscape. It assumed a single machine connected by an Ethernet cable, so availability was determined by whether the network or the machine itself was working.
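A minimal Python sketch of the time-based formula above. The function name and the 43-minute outage are hypothetical, chosen only to show how a monthly availability figure is computed.

```python
def time_based_availability(total_minutes: float, impacted_minutes: float) -> float:
    """Classic availability: (total time - impacted time) / total time."""
    if total_minutes <= 0:
        raise ValueError("total_minutes must be positive")
    if not 0 <= impacted_minutes <= total_minutes:
        raise ValueError("impacted_minutes must be between 0 and total_minutes")
    return (total_minutes - impacted_minutes) / total_minutes


# Hypothetical example: a 30-day month with a single 43-minute outage.
minutes_in_month = 30 * 24 * 60  # 43,200 minutes
print(f"{time_based_availability(minutes_in_month, 43):.3%}")  # 99.900%
```

Note that the formula only knows whether the system was "impacted"; it has no notion of how many customers or requests were affected, which is the gap the rest of this section discusses.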

Transitioning from Monoliths to Microservices: A Paradigm Shift in Availability Measurement

The traditional availability metric, which measures how much of the time a system is up and running, was designed for an era when monolithic systems were dominant. In a monolithic architecture, everything is interconnected and depends on a single machine, so availability simply came down to whether that machine or its network connection was functioning.

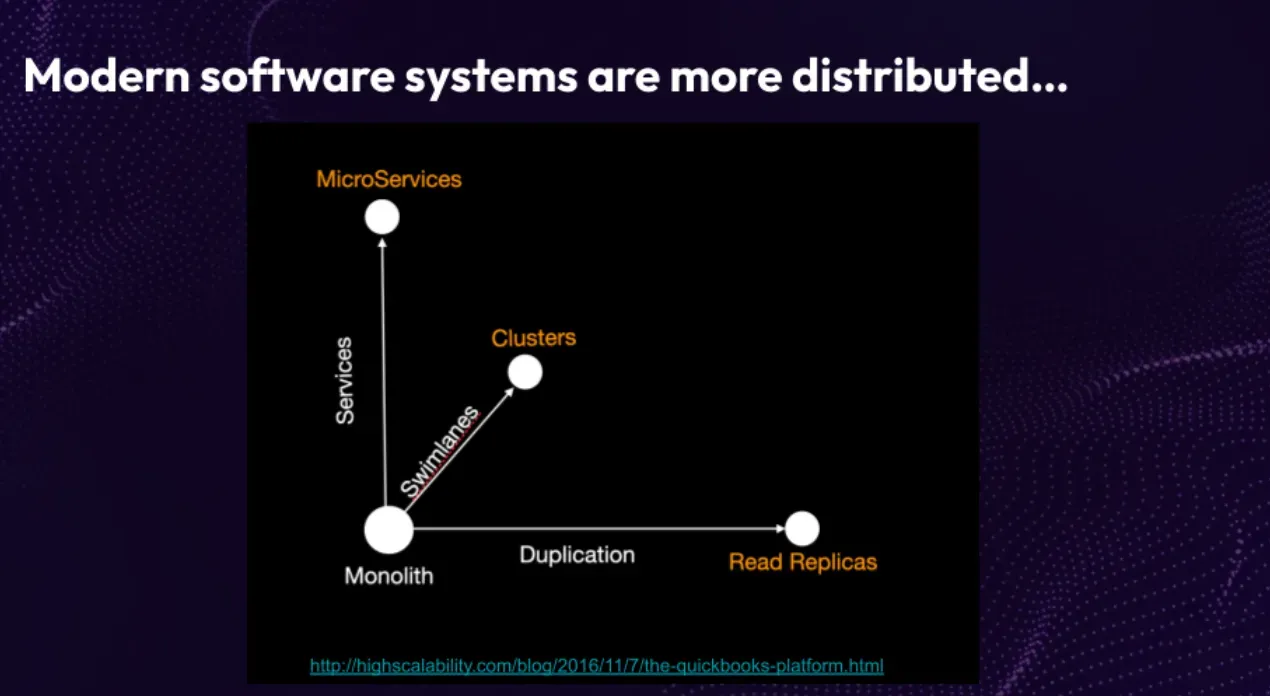

However, in today's world, where distributed architectures are more common, this traditional metric falls short. Distributed systems consist of many interconnected components, such as microservices and containers running in the cloud, so availability no longer depends on a single machine or network connection. The old availability metric was suited to monolithic systems and focused mainly on the backend. To evaluate availability in modern architectures, we need metrics that account for individual microservices, network communication, and other relevant factors.

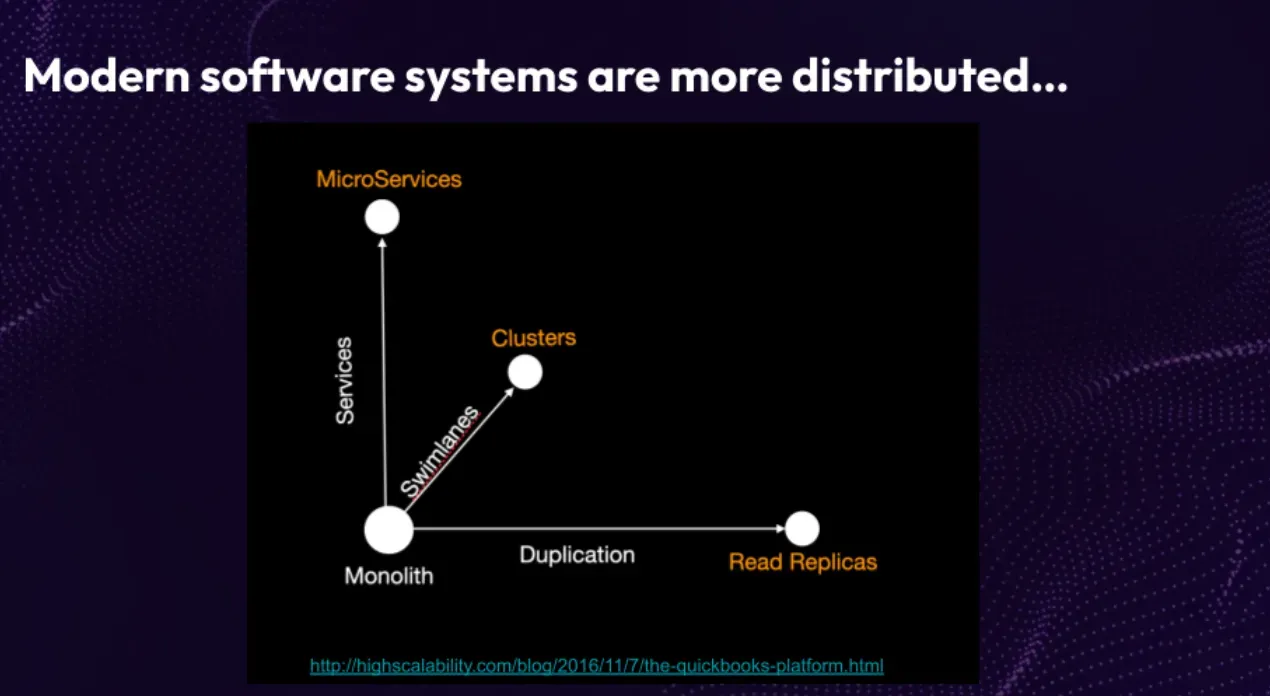

Let's look at how system architectures have evolved and why that makes availability harder to measure. Picture your system transforming from a single dot into a three-dimensional cube. On the X-axis, you have read replicas, which distribute load and enable concurrency. The Y-axis represents microservices, which split functionality into individual services for scalability and specialized teams. Finally, the Z-axis introduces swim lanes, which improve resiliency by segregating customers or functionality.

With this intricate system structure, calculating availability becomes complex. What if a microservice goes down, impacting a specific function? How do we measure availability in such cases? Consider the scenario where your US customers reside in one swim lane and Canadian customers in another. If the Canadian cluster experiences an outage, how should availability be measured? It's a challenging puzzle that demands careful thought.

Given these complexities, a more effective approach is to shift from time-based metrics to count-based metrics. Instead of focusing only on how long outages last, we count how many requests fail. This gives a more nuanced picture of availability: it helps us identify patterns, isolate faults, and take proactive measures. Rather than waiting until the entire system is affected, we can address specific service failures promptly, which makes count-based metrics a much better fit for modern architectures.
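To make the swim-lane scenario concrete, here is a hedged sketch of a count-based view. It assumes each swim lane reports totals of requests and failed requests over the same window; the lane names, numbers, and function name are hypothetical. A time-based metric has to decide whether the Canadian outage means the whole system was down; the count-based version weights the outage by the traffic it actually affected.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LaneStats:
    """Request counts for one swim lane over a reporting window (hypothetical data)."""
    name: str
    total_requests: int
    failed_requests: int


def count_based_availability(lanes: List[LaneStats]) -> float:
    """Fraction of all requests, across the given lanes, that succeeded."""
    total = sum(lane.total_requests for lane in lanes)
    failed = sum(lane.failed_requests for lane in lanes)
    if total == 0:
        return 1.0  # design choice: no traffic means nothing failed
    return (total - failed) / total


# Hypothetical scenario: the Canadian lane has an outage, the US lane is healthy.
lanes = [
    LaneStats("us", total_requests=900_000, failed_requests=100),
    LaneStats("ca", total_requests=100_000, failed_requests=5_000),
]
for lane in lanes:
    print(lane.name, f"{count_based_availability([lane]):.2%}")  # us 99.99%, ca 95.00%
print("overall", f"{count_based_availability(lanes):.2%}")        # overall 99.49%
```

Reporting both the per-lane and the overall figure keeps the Canadian outage visible instead of letting the healthy US traffic average it away.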

Why time-based metrics aren’t good enough

Availability doesn't always reflect the severity of an incident accurately, and it doesn't measure the customer experience. It also lacks clear ownership, because the changes needed to improve it may fall to several different teams. Finally, availability doesn't capture the task completion rate, which is crucial for assessing whether customers can easily accomplish their goals on a web page.

To truly improve customer experience, we need to go beyond availability. The impact on customers doesn't always match the severity of an incident, so relying solely on availability can skew our understanding of the user experience. Considering factors like usability, performance, and task completion rates gives a more complete view of the customer journey and helps us make informed decisions that improve customer satisfaction.

Customer-Centric Metric: Measuring and Enhancing the Customer Experience for Success

We need to redirect our attention towards a customer-centric metric that not only gauges but also improves the customer experience and fosters team ownership. Such a metric also has a direct impact on NPS, since it reflects the happiness and satisfaction of our customers. Surprisingly, this metric already exists in the industry, although it isn't as widely used as it should be.

What Is a Failed Customer Interaction?

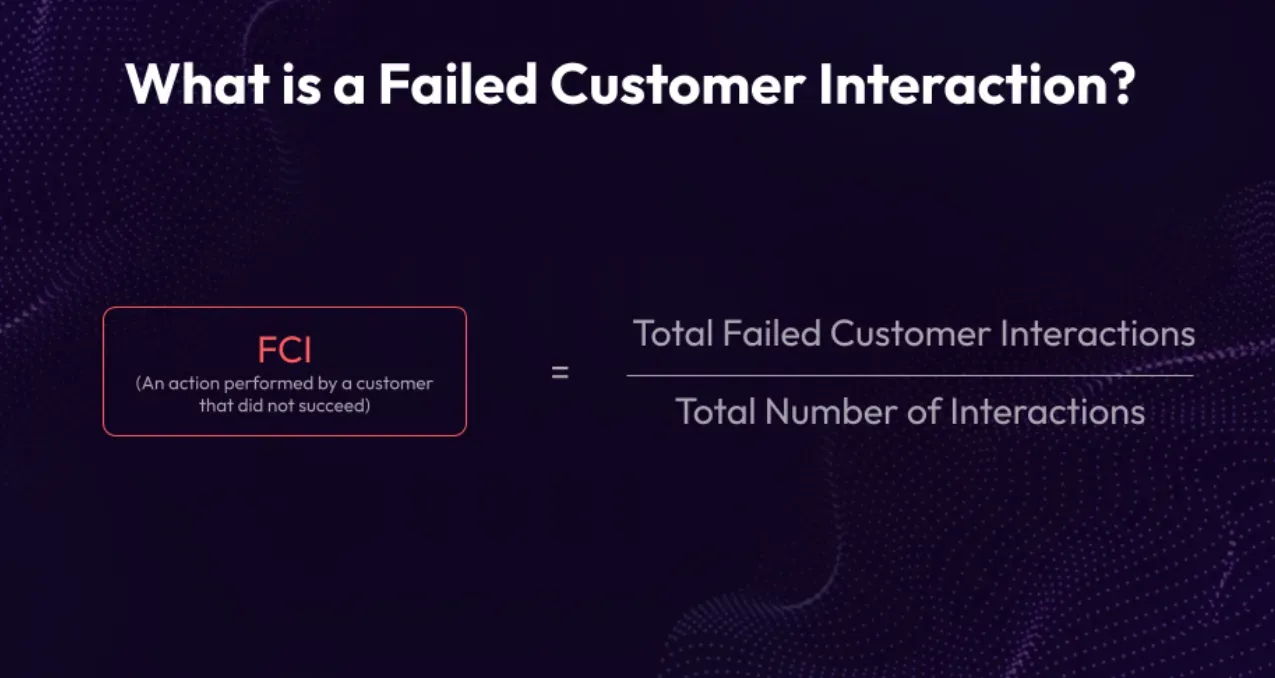

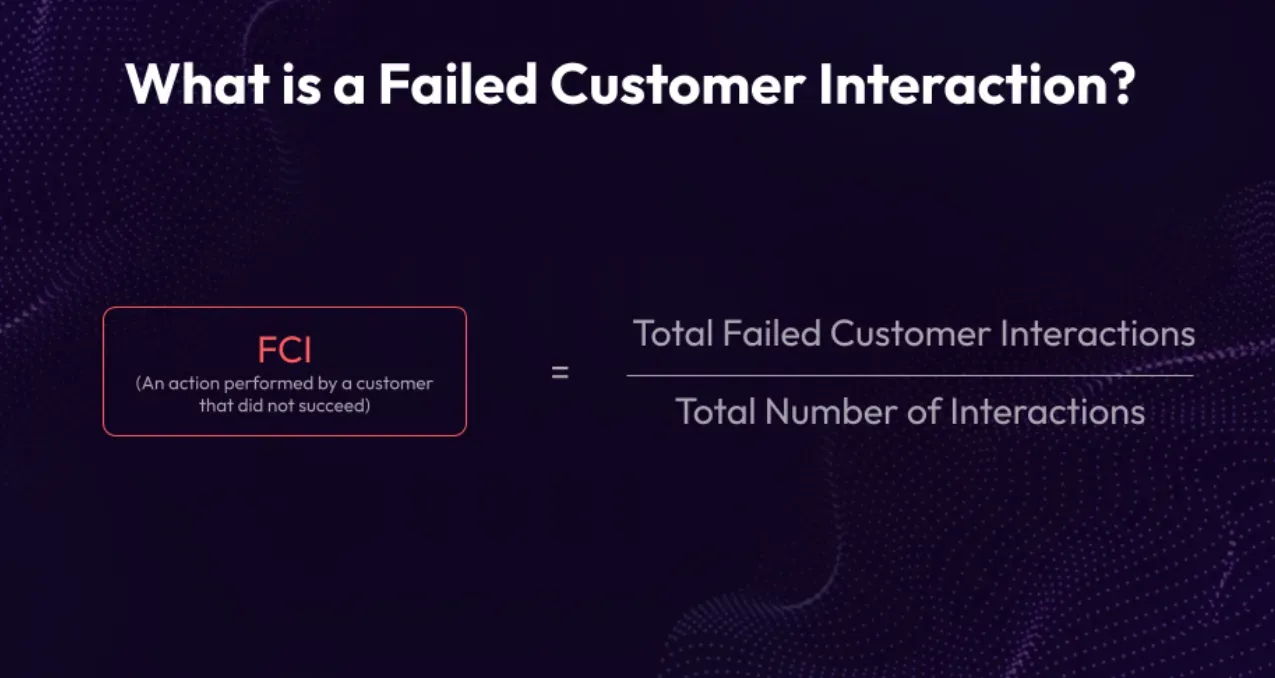

The concept of a failed customer interaction is straightforward. The FCI rate is the total number of failed interactions divided by the total number of interactions. This measure is count-based rather than time-based. However, you can add a time dimension to track how often these failures occur within a given period.
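The FCI definition above translates directly into code. This is a minimal sketch; the function names and traffic numbers are hypothetical, and the per-day helper shows one possible way to add the optional time dimension.

```python
def fci_rate(failed_interactions: int, total_interactions: int) -> float:
    """FCI rate = failed interactions / total interactions (count-based)."""
    if total_interactions <= 0:
        raise ValueError("total_interactions must be positive")
    if not 0 <= failed_interactions <= total_interactions:
        raise ValueError("failed_interactions must be between 0 and total_interactions")
    return failed_interactions / total_interactions


def fcis_per_day(failed_interactions: int, window_days: float) -> float:
    """Optional time dimension: average number of FCIs per day over a window."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return failed_interactions / window_days


# Hypothetical week of traffic: 10,000 interactions, 320 of them failed.
print(f"FCI rate: {fci_rate(320, 10_000):.1%}")      # FCI rate: 3.2%
print(f"FCIs per day: {fcis_per_day(320, 7):.1f}")   # FCIs per day: 45.7
```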

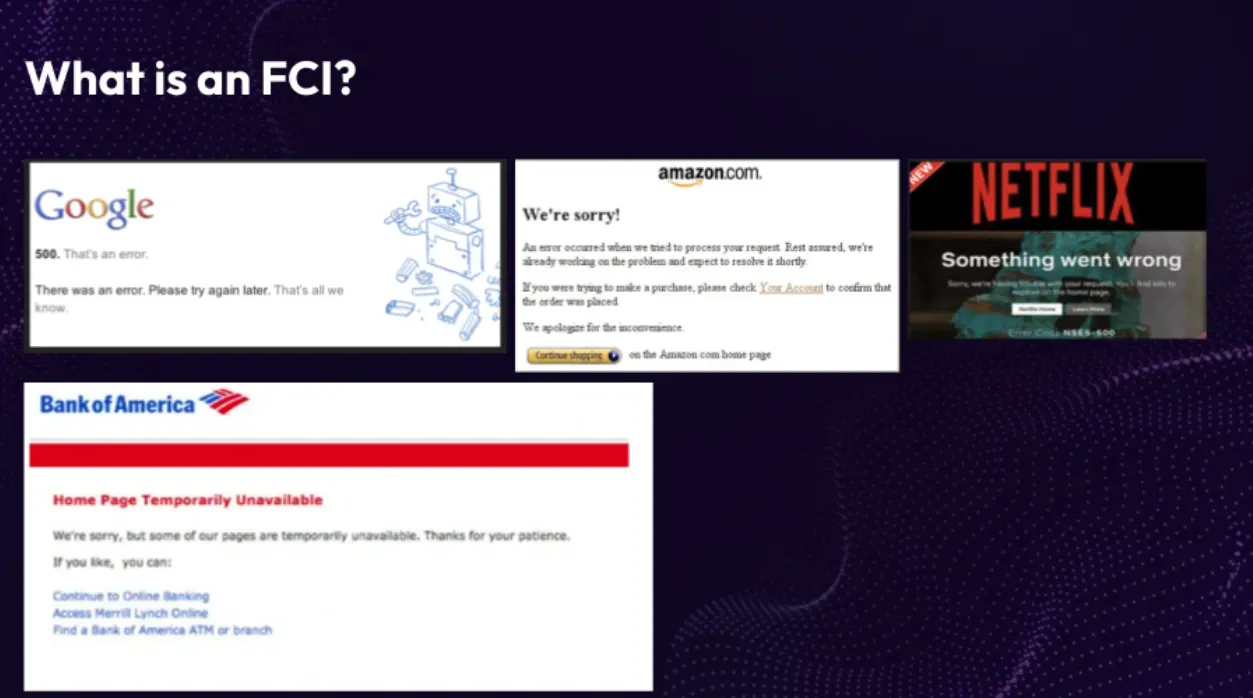

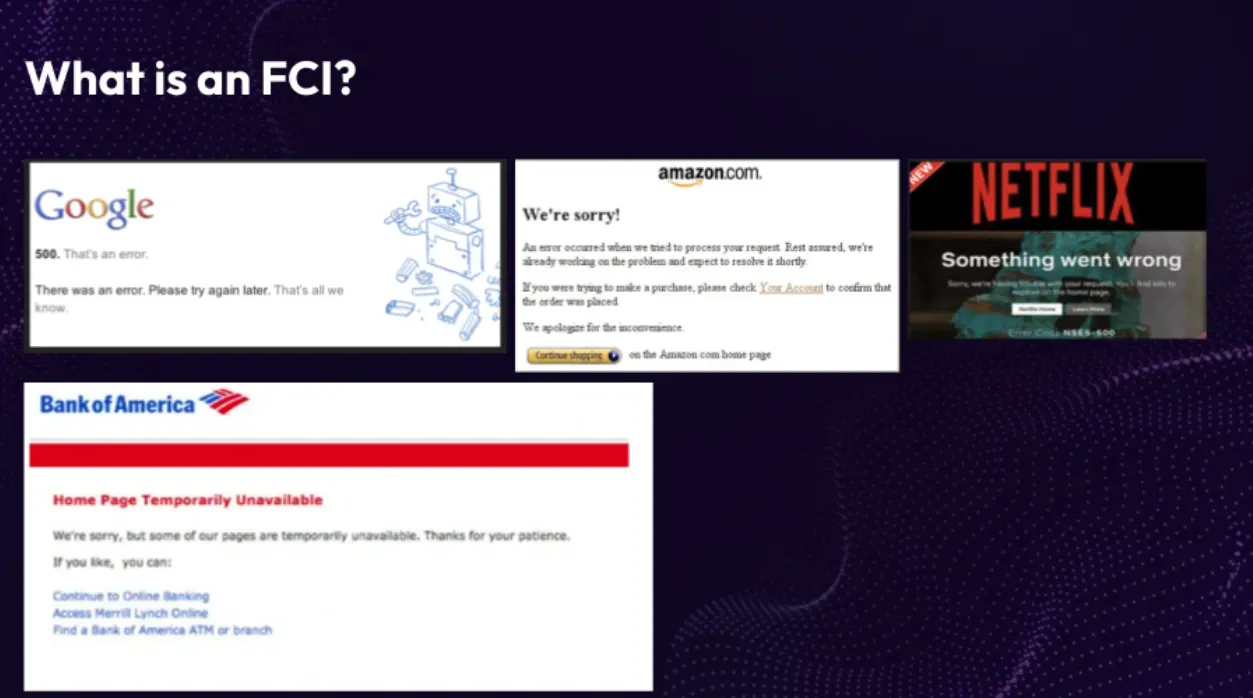

What exactly counts as a Failed Customer Interaction (FCI)? FCIs include responses such as 500 errors, as well as frequently recurring 404 errors. Many of us have run into these errors ourselves on all kinds of platforms, including popular ones like Google, Amazon, and even Bank of America (which seems to be a particular subject of disdain for someone!). FCIs are inherent to any system that serves a large user base: sooner or later, users will unintentionally trigger actions that fail and result in internal server errors.
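What counts as an FCI is ultimately a policy decision. The sketch below assumes one possible policy based on the description above: every 5xx response is an FCI, and 404s count only when a path returns them repeatedly. The threshold of 50, the `(path, status_code)` input format, and the function name are assumptions for illustration, not part of the talk.

```python
from collections import Counter
from typing import Iterable, Tuple

# Hypothetical policy knob: a path returning 404 at least this many times in
# the batch is treated as broken (e.g. a dead link), not as a one-off typo.
FREQUENT_404_THRESHOLD = 50


def count_fcis(requests: Iterable[Tuple[str, int]],
               frequent_404_threshold: int = FREQUENT_404_THRESHOLD) -> int:
    """Count failed customer interactions in a batch of (path, status_code) pairs.

    Every 5xx response counts as an FCI. 404 responses count only for paths
    that return 404 at least `frequent_404_threshold` times in the batch.
    """
    batch = list(requests)
    server_errors = sum(1 for _, status in batch if 500 <= status <= 599)
    not_found_per_path = Counter(path for path, status in batch if status == 404)
    frequent_not_found = sum(
        count for count in not_found_per_path.values()
        if count >= frequent_404_threshold
    )
    return server_errors + frequent_not_found


# Hypothetical batch: 3 server errors, a dead link hit 60 times, a typo hit twice.
sample = (
    [("/bills", 200)] * 1000
    + [("/bills", 500)] * 3
    + [("/old-statements", 404)] * 60
    + [("/bils", 404)] * 2
)
print(count_fcis(sample))  # 63
```

The two stray 404s on `/bils` are left out because they look like user typos rather than a broken page; a team could just as reasonably count every 404, which is why the threshold is a parameter.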

To illustrate, here's a personal experience. Just a couple of weeks ago, while checking my credit card bills on chase.com, I was taken aback to encounter an unhandled Apache error: an unexpected 500 error. It was surprising to see such a flaw at a well-established company like Chase, but it shows how complex software is and how likely occasional glitches are. Whenever a 500 error occurs in the system, behind it, in a browser somewhere, is a frustrated customer who was unable to accomplish their intended task.

Elevating Customer Experience: Unveiling FCIs Through Observability Platforms

Here is an important insight that deserves widespread attention, perhaps even a prominent billboard along Highway 101: the observability platform you already have can easily measure FCIs. It is already equipped to do so; we just haven't been paying enough attention. At GoodHire (Inflection), where we used Datadog, we set up a dashboard in a matter of minutes that showed our failed customer interaction rate. Unfortunately, there aren't many established benchmarks to compare against. However, through conversations with colleagues and friends at AWS, I learned that their benchmark for failed customer interactions is less than 0.025% of the total transactions in the system. To put this into perspective, with a million transactions, Amazon's benchmark means fewer than 250 failures. This gives helpful context for gauging your own performance.
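The benchmark arithmetic can be checked with a short sketch. It uses Python's `fractions.Fraction` so that 0.025% (exactly 1/4000) doesn't pick up floating-point rounding; the constant and function names are hypothetical.

```python
from fractions import Fraction

# Benchmark quoted in the talk: fewer than 0.025% of transactions should fail.
# Fraction keeps the arithmetic exact: 0.025% == 1/4000.
FCI_BENCHMARK = Fraction("0.025") / 100


def failure_budget(total_transactions: int, benchmark: Fraction = FCI_BENCHMARK) -> Fraction:
    """Failure count at which the benchmark is reached (you must stay strictly below it)."""
    return total_transactions * benchmark


def meets_benchmark(failed: int, total: int, benchmark: Fraction = FCI_BENCHMARK) -> bool:
    """True if the FCI rate is strictly below the benchmark."""
    if total <= 0:
        raise ValueError("total must be positive")
    return Fraction(failed, total) < benchmark


print(failure_budget(1_000_000))        # 250
print(meets_benchmark(249, 1_000_000))  # True
print(meets_benchmark(250, 1_000_000))  # False
```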

GoodHire's Experience Reducing FCIs

Here is a case study from my previous company. At the outset, our failed customer interaction (FCI) rate stood at 3.2%. Having looked at many systems, I would say that starting at 3.2% is actually quite good, and what matters is that you actively monitor and address the number. We then made FCI reduction a key part of our quarterly plan, with a target of 0.025%. After nearly three quarters of concerted effort, we surpassed that goal, reaching an FCI rate of 0.02%. The team didn't stop there; they kept working until FCIs were virtually undetectable in our system.

Now, let's look at the value of this effort. The real payoff shows up in Net Promoter Score (NPS), an essential metric for customer satisfaction. While I can't establish direct causation, there was a clear positive correlation between our FCI reduction efforts and our product's NPS, which rose over time from 63 to 68 and eventually to 70. This improvement supports the case for investing in FCI reduction as a way to improve customer satisfaction.

Naturally, other factors also contributed to these outcomes. Still, the results match what I would expect: when customers can use the system effectively, complete their tasks smoothly, and experience satisfactory performance, a higher NPS score is no surprise.

Implementing FCIs

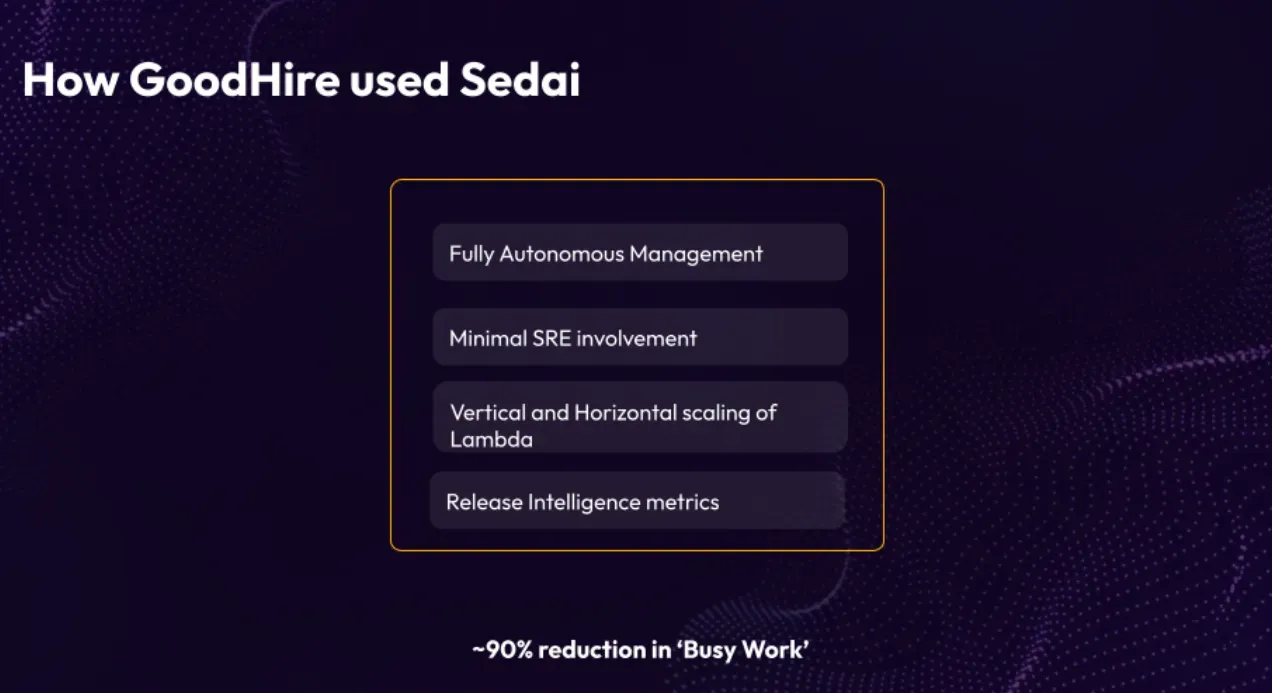

Let's explore how we put FCIs into practice. A significant part of our success was adopting AWS Lambda, which made it easy to deploy Sedai on top of it. Once the system was running, our SRE team could turn their attention elsewhere, because Lambda handled the operational aspects smoothly. Keep in mind that 500 errors can stem from various causes. Scaling issues, where insufficient resources lead to dropped requests or timeouts, are one common source, and autonomous management helped us make substantial progress on them. Notably, the C# and .NET stacks we ran on Lambda had the highest latency and the worst cold starts, often exceeding 15 seconds. No customer should be expected to wait that long.

Mindset also played a crucial role. We made it a priority that solving customer problems was our core objective, set a goal to minimize FCIs, and achieved it. For more on bridging the gap between customers and engineering, I've written a detailed piece on cio.com that you may find interesting. Changing our on-call program also helped. Instead of working through their backlog items during their on-call week, engineers collaborated with the customer success team: they worked to understand customer concerns, investigated error logs in tools like Datadog or Splunk, and resolved issues promptly. This change kept the team customer-centric and drove continuous improvement.

Driving Success: How Sedai's Autonomous System Transformed Operations and Delighted Customers

Lastly, let's look at how we used Sedai. We ran Sedai in fully autonomous mode. We didn't have access to the Lambda extensions that could have further mitigated cold starts, but we addressed the remaining cold start issues with provisioned concurrency. As a result, errors caused by scaling issues dropped significantly. Most of the errors we still encountered came from the code itself, such as overlooked code paths.

Consequently, we no longer needed a dedicated team of SREs for serverless operations. We kept only two SREs to handle the remaining components in the monolith, while the serverless architecture required minimal attention. Weekly reviews of Sedai's performance and dashboards confirmed that vertical and horizontal scaling were working efficiently. Sedai's release intelligence metrics were particularly interesting: they alerted us to any irregularities that warranted immediate attention, which helped us make well-informed decisions about release deployments.

On the serverless side of our workload, mundane operational tasks were reduced by approximately 90%. This coincided with our move away from Kubernetes to a fully serverless infrastructure, a strategic shift that significantly improved our operations and overall productivity.

To summarize, FCIs play a vital role in today's landscape, and autonomous management is a step forward regardless of your infrastructure choices. By prioritizing failed customer interactions and adopting autonomous management, businesses can significantly improve customer satisfaction and overall experience.

Q&A

Q: Could you provide an overview of how FCIs are defined and measured in terms of customer experience at a single step level?

A: Each microservice is owned by a scrum team, and they are responsible for tracking FCIs for their respective services. Each scrum team has a dedicated dashboard showing the number of FCIs they've had in a given week. Based on their priorities and plans for the next iteration, the team decides which FCIs to focus on and address. As a leader, I set quarterly goals for reducing FCIs, and everyone works towards achieving those targets. Datadog played a significant role in helping us effectively manage FCIs.
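A per-team weekly dashboard like the one described here boils down to grouping FCI events by owning service and week. The sketch below assumes each FCI event has already been exported, for example from an observability tool, as a `(timestamp, service_name)` pair; the function name and sample data are hypothetical, and it does not use any Datadog API.

```python
from collections import defaultdict
from datetime import datetime
from typing import Dict, Iterable, Tuple


def weekly_fci_counts(
    events: Iterable[Tuple[datetime, str]],
) -> Dict[Tuple[str, int, int], int]:
    """Count FCIs per (service, ISO year, ISO week).

    `events` holds one (timestamp, owning_service) pair per failed customer
    interaction, so each scrum team can read the rows for its own service.
    """
    counts: Dict[Tuple[str, int, int], int] = defaultdict(int)
    for timestamp, service in events:
        iso_year, iso_week, _ = timestamp.isocalendar()
        counts[(service, iso_year, iso_week)] += 1
    return dict(counts)


# Hypothetical FCI events for two services in October 2022.
events = [
    (datetime(2022, 10, 3, 9, 15), "billing"),
    (datetime(2022, 10, 4, 14, 2), "billing"),
    (datetime(2022, 10, 5, 11, 40), "search"),
    (datetime(2022, 10, 11, 8, 5), "billing"),
]
for (service, year, week), n in sorted(weekly_fci_counts(events).items()):
    print(f"{service} {year}-W{week:02d}: {n}")
```

ISO weeks are used so that a week never straddles two buckets at a year boundary; a team that plans in sprints could key on sprint start dates instead.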

Q: You mentioned a 90% reduction in busy work. How were the saved time and resources reinvested?

A: Some of our Kubernetes specialists, realizing that Kubernetes wasn't the right fit for our needs, chose to pursue other opportunities, and we supported them in finding new roles. Instead of filling those positions, we hired additional development engineers for our scrum teams. I believe in empowering scrum teams with end-to-end ownership, including operations, and we embedded operations responsibilities within the teams. This allowed us to utilize the saved resources in enhancing team capabilities and overall efficiency.

Q: So, a shift towards embedding operations within the scrum teams?

A: Yes, precisely. Embedding operations within scrum teams is the right model, especially in a cloud environment. Operations, including aspects like cost management, became the responsibility of the scrum teams.