Introduction to EKS Cost Optimization

Amazon Elastic Kubernetes Service (EKS) has become a favorite for teams managing Kubernetes clusters. But while its flexible infrastructure is fantastic, costs can add up fast if not managed correctly. Understanding where your money goes in EKS can make a big difference in avoiding unexpected expenses.

Why does cost optimization matter so much in EKS? In the cloud, you’re paying for every minute your clusters run. Misconfigured settings, underutilized instances, or heavy data transfers can drive up costs. Proactive cost management helps you make the most of your budget without compromising performance.

This is where autonomous cloud optimization tools like Sedai come into play. By continuously monitoring your EKS cluster, these tools use AI to analyze workloads, resource usage, and traffic patterns in real time, making autonomous adjustments like scaling resources, optimizing configurations, and balancing loads based on demand. Sedai’s platform minimizes manual interventions by taking on the majority of the optimization tasks, helping you keep your environment cost-efficient without constant oversight.

In this article, we’ll explore EKS cost optimization in detail, with a particular focus on how autonomous optimization tools like Sedai can enhance cost control without negatively impacting performance. You’ll learn how these tools provide real-time, autonomous adjustments to keep your clusters running efficiently and cost-effectively, saving you time, resources, and budget in the long run.

Breaking Down EKS Cost Components

To understand where EKS costs come from, let’s review key cost drivers. These include control plane charges, worker node expenses, data transfer fees, and storage costs. For a detailed look at each of these components, you can refer to Understanding AWS EKS Kubernetes Pricing and Costs.

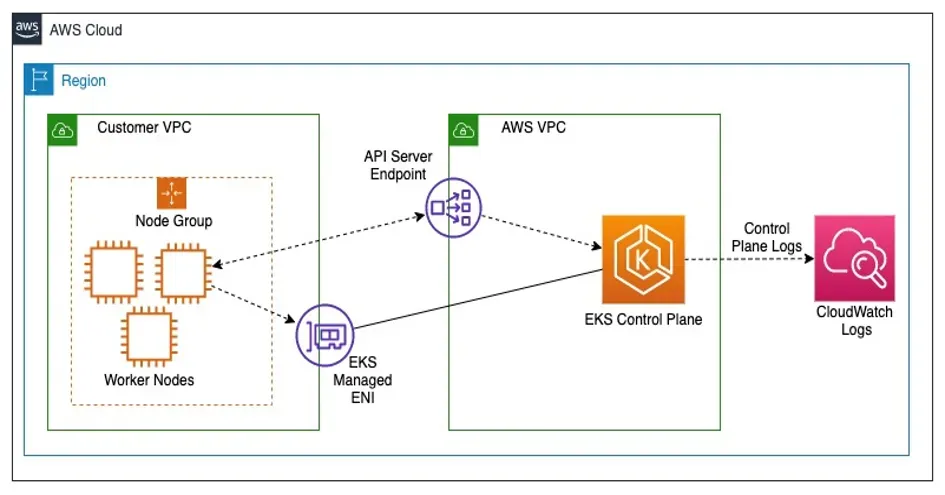

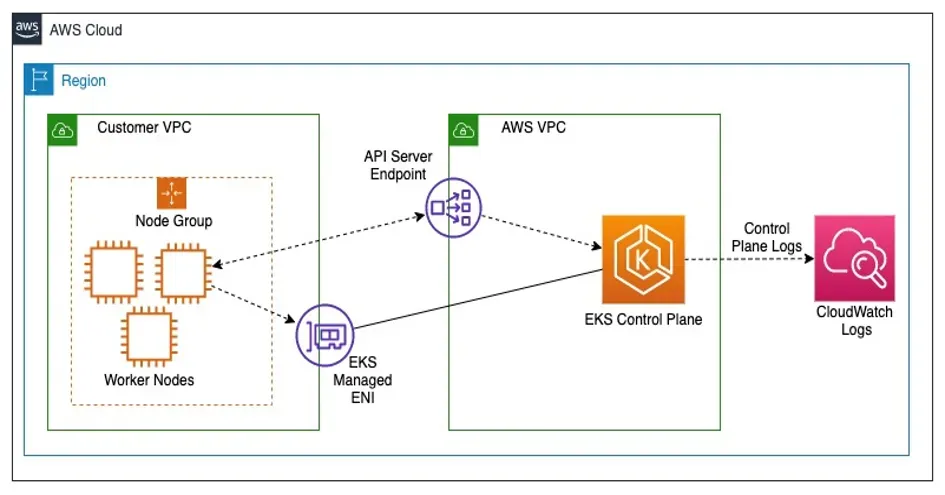

Source: AWS

Control Plane Logs: One often-overlooked cost factor is control plane logging. EKS logs for components like the API server, authenticator, controller manager, and scheduler can be enabled to monitor and troubleshoot applications. However, these logs are stored in Amazon CloudWatch, incurring both data ingestion and storage fees. Optimizing these costs includes enabling only the log types you actually need, setting retention periods, archiving older logs to cheaper storage, and using managed services like Amazon GuardDuty for threat detection, which can analyze EKS audit activity without you having to enable control plane logging.
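To get a feel for how logging volume and retention translate into a bill, here is a minimal sketch that estimates a monthly CloudWatch Logs charge. The per-GB rates are placeholder assumptions, not quoted AWS prices, and the model ignores compression and free-tier allowances; substitute the current rates for your Region.

```python
def monthly_log_cost(gb_ingested_per_day, retention_days,
                     ingest_price_per_gb=0.50,         # assumed rate, not an AWS quote
                     storage_price_per_gb_month=0.03,  # assumed rate, not an AWS quote
                     days_in_month=30):
    """Rough monthly cost: ingestion plus steady-state storage."""
    ingestion = gb_ingested_per_day * days_in_month * ingest_price_per_gb
    # At steady state you hold roughly `retention_days` worth of logs.
    stored_gb = gb_ingested_per_day * retention_days
    storage = stored_gb * storage_price_per_gb_month
    return round(ingestion + storage, 2)


# Same ingestion volume, two retention policies.
print(monthly_log_cost(10, retention_days=90))
print(monthly_log_cost(10, retention_days=7))
```

Under these assumed rates, ingestion dominates the total, which suggests that choosing which log types to enable may matter more than trimming retention alone.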

Manual Cost Tracking Challenges

Tracking these costs manually can be a daunting task. With the sheer number of variables, such as scaling instances or managing cross-zone traffic, EKS workloads require constant oversight to prevent unexpected cost spikes. AWS-native tools like Cost Explorer give insights into cost trends, but they still need a considerable time investment to use effectively.

Autonomous Optimization with Sedai: Sedai simplifies this process by offering real-time monitoring and autonomous adjustments. Instead of constantly tracking each cost component yourself, Sedai’s AI-driven platform analyzes workload patterns and optimizes resource usage dynamically. For example, it can autonomously adjust control plane log retention policies or manage data transfer needs, ensuring you’re not overpaying for unused or redundant resources.

Right-Sizing Resources for Cost Efficiency

One of the best ways to optimize EKS costs is by "right-sizing" resources, meaning you match resources to workload demands. Right-sizing avoids overpaying for resources you don’t need and ensures efficient resource allocation within the cluster.

1. Choosing Optimal EC2 Instances

Selecting the right EC2 instance type can have a huge impact on your AWS bill. AWS Cost Explorer includes rightsizing recommendations based on usage patterns, making it easier to choose instance sizes that match your needs. Lighter workloads may benefit from smaller instances, while high-performance workloads may require larger ones.

2. Leveraging Automated Rightsizing Tools

AWS provides built-in rightsizing tools to suggest optimal instance types based on your actual usage. However, Sedai’s autonomous cloud optimization platform takes this a step further by continuously adjusting instances to match real-time workload needs, reducing waste without manual input. Sedai monitors your cluster and automatically resizes instances as workloads change, ensuring that you’re never over-provisioned or under-resourced.

3. Optimizing Kubernetes Pod Resources

In Kubernetes, each container in a pod can declare resource requests and limits: requests are the CPU and memory the scheduler reserves for it, and limits cap what it is allowed to consume. Setting these values accurately ensures that each pod only reserves what it needs, helping to prevent resource wastage. However, requests set by hand are often too generous, which leads to “slack cost,” where resources are reserved but go unused.

To combat this, tools like kube-resource-report can visualize resource usage and slack cost, making it easier to adjust requests and limits based on actual utilization. Additionally, Sedai automates this process by dynamically adjusting pod resources based on real-time demand, ensuring pods have just the right amount of CPU and memory to operate efficiently without over-allocation.

By continuously right-sizing resources at the pod and instance level, you can save significantly on EKS costs, making your cluster cost-effective without compromising on performance.
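As a rough illustration of slack cost, the sketch below prices the gap between what a workload requests and what it actually uses. The per-core and per-GiB hourly prices are hypothetical inputs you would derive from your own node pricing, and the calculation is similar in spirit to, but not taken from, what kube-resource-report computes.

```python
def slack_cost(requested_cpu, used_cpu, requested_mem_gib, used_mem_gib,
               cpu_price_per_core_hour, mem_price_per_gib_hour, hours=730):
    """Cost of reserved-but-unused CPU and memory over `hours` (about one month)."""
    # Usage above the request is not slack, so clamp at zero.
    cpu_slack = max(requested_cpu - used_cpu, 0)
    mem_slack = max(requested_mem_gib - used_mem_gib, 0)
    hourly = cpu_slack * cpu_price_per_core_hour + mem_slack * mem_price_per_gib_hour
    return round(hourly * hours, 2)


# A pod requesting 2 cores / 4 GiB but averaging 0.5 cores / 1 GiB.
# Prices are assumed values for illustration only.
print(slack_cost(2.0, 0.5, 4, 1,
                 cpu_price_per_core_hour=0.03,
                 mem_price_per_gib_hour=0.004))
```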

Implementing Autoscaling to Match Demand

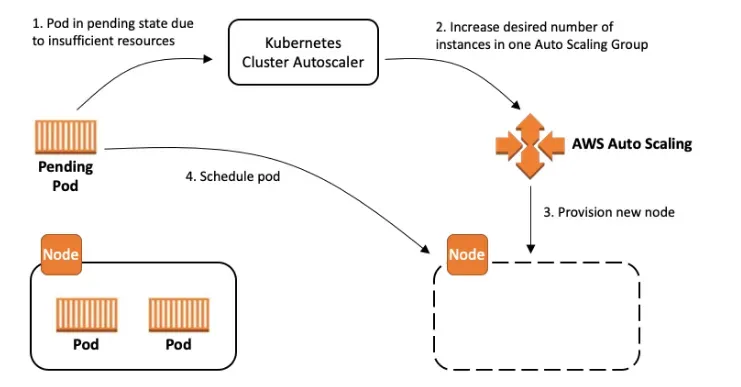

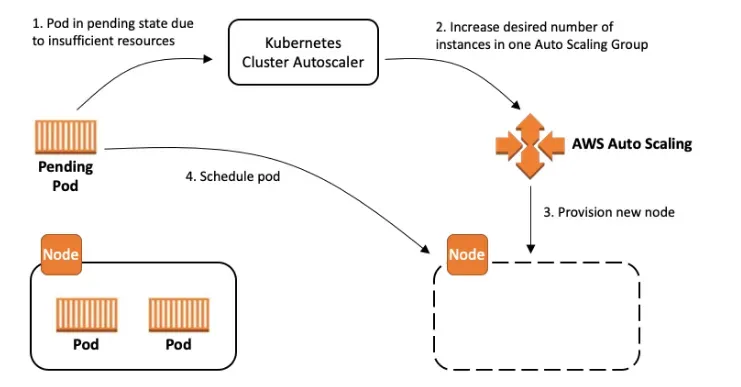

Source: AWS-Github

Autoscaling helps EKS dynamically adjust resources based on demand, which can drastically reduce costs by preventing idle resources from sitting unused. With autoscaling, you can match capacity to real-time workload needs, ensuring that your cluster has just enough resources during peak times and scales down during off-peak periods.

1. Cluster Autoscaler

The Cluster Autoscaler is a Kubernetes-native tool managed by the Kubernetes SIG Autoscaling team. It monitors unschedulable pods and nodes with low utilization, making intelligent scaling decisions based on current workloads. For EKS, the Cluster Autoscaler integrates with EC2 Auto Scaling groups to dynamically adjust the number of worker nodes, ensuring your cluster meets workload demands without excess capacity.

The Cluster Autoscaler operates by simulating node changes, deciding if nodes should be added or removed before making the adjustment. To enhance performance, consider using Node Groups with similar configurations and maintaining consistency across Auto Scaling groups. This approach helps ensure nodes are optimally used, minimizing resource waste. To keep security tight, limit the Cluster Autoscaler’s permissions to only required actions, such as updating DesiredCapacity in the Auto Scaling group.
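A least-privilege policy along these lines can be sketched as follows. The function name is hypothetical, and the sketch assumes your Auto Scaling groups carry the Cluster Autoscaler's auto-discovery tag for the cluster; check the Cluster Autoscaler documentation for your version, since newer releases may need additional read-only permissions.

```python
import json


def cluster_autoscaler_policy(cluster_name):
    """Build an IAM policy document (as a dict) for the Cluster Autoscaler."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Read-only discovery calls.
                "Effect": "Allow",
                "Action": [
                    "autoscaling:DescribeAutoScalingGroups",
                    "autoscaling:DescribeAutoScalingInstances",
                    "autoscaling:DescribeLaunchConfigurations",
                    "autoscaling:DescribeTags",
                    "ec2:DescribeLaunchTemplateVersions",
                ],
                "Resource": "*",
            },
            {
                # Mutating calls, allowed only on groups tagged as owned
                # by this cluster (assumes that tag is present).
                "Effect": "Allow",
                "Action": [
                    "autoscaling:SetDesiredCapacity",
                    "autoscaling:TerminateInstanceInAutoScalingGroup",
                ],
                "Resource": "*",
                "Condition": {
                    "StringEquals": {
                        f"aws:ResourceTag/k8s.io/cluster-autoscaler/{cluster_name}": "owned"
                    }
                },
            },
        ],
    }


# "demo-cluster" is a placeholder name.
print(json.dumps(cluster_autoscaler_policy("demo-cluster"), indent=2))
```

Splitting the read-only and mutating statements keeps the scope of the write permissions easy to audit.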

2. Horizontal Pod Autoscaler (HPA)

The Horizontal Pod Autoscaler (HPA) scales the number of pod replicas based on metrics like CPU or memory usage, helping avoid overprovisioned resources and reducing costs during low-demand periods. It’s a core component in Kubernetes and works with the Kubernetes Metrics Server to monitor pod utilization. When workload demand spikes, HPA increases pod replicas to handle the load, and it scales down automatically when demand drops.

This setup ensures that each application has just the right amount of resources it needs at any given time. HPA is particularly effective for managing workloads with varying demand, like web applications, where traffic may fluctuate throughout the day. By using HPA, you avoid paying for excess resources during low-traffic times.
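The core of HPA’s decision is a simple ratio, documented by Kubernetes as desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric). The sketch below applies that formula with a tolerance band and min/max bounds. It is a simplification: the real controller also handles stabilization windows, missing metrics, and pods that are not yet ready, and the default bounds here are arbitrary.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Simplified HPA calculation for a single metric."""
    ratio = current_metric / target_metric
    # Within the tolerance band, HPA leaves the replica count alone.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured bounds.
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, current_metric=90, target_metric=60))  # scale up
print(desired_replicas(4, current_metric=20, target_metric=60))  # scale down
print(desired_replicas(4, current_metric=63, target_metric=60))  # within tolerance
```

Because scaling is proportional to the ratio, the target utilization you choose directly determines how many replicas you pay for at a given load.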

3. Scheduled Autoscaling for Off-Hours

Scheduled autoscaling allows you to configure scaling based on predefined schedules, particularly useful for non-production or test environments. For instance, a development environment may only need resources during standard working hours. With scheduled autoscaling, you can configure your cluster to scale down outside these hours, saving on costs for resources that are not actively used.

To set up scheduled autoscaling, you can define scheduled actions on the EC2 Auto Scaling groups behind your node groups (the Cluster Autoscaler itself does not support schedules), or use Kubernetes tools like kube-downscaler, which reduces pod counts outside working hours. Either approach is well suited to development and testing environments that don’t require 24/7 operation.
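The decision a downscaler makes at each check is straightforward. Here is a minimal sketch, assuming weekday working hours of 08:00 to 18:00 (hypothetical values) and a scale-to-zero policy outside them. It illustrates the idea only and does not reproduce kube-downscaler’s implementation or configuration format.

```python
from datetime import datetime


def target_replicas(now, normal_replicas, start_hour=8, end_hour=18):
    """Return the replica count a workload should have at time `now`."""
    is_weekday = now.weekday() < 5            # Monday=0 ... Friday=4
    in_hours = start_hour <= now.hour < end_hour
    return normal_replicas if (is_weekday and in_hours) else 0


def uptime_fraction(start_hour=8, end_hour=18, days_per_week=5):
    """Share of the week the workload runs under this schedule."""
    return (end_hour - start_hour) * days_per_week / (24 * 7)


print(target_replicas(datetime(2024, 1, 8, 10, 0), normal_replicas=3))  # a Monday morning
print(target_replicas(datetime(2024, 1, 6, 10, 0), normal_replicas=3))  # a Saturday
print(round(uptime_fraction(), 3))
```

Under this schedule the workload runs for about 30% of the week, so compute that scales down with it would be idle, and potentially not billed, for the remaining 70%.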

Optimizing Autoscaling with Sedai: While autoscaling is effective, configuring it correctly for complex workloads can be challenging. Sedai’s autonomous cloud optimization platform takes autoscaling further by continuously adjusting scaling parameters to optimize both cost and performance. Sedai monitors your workloads, dynamically tuning Cluster Autoscaler and HPA settings based on real-time demand and usage patterns, ensuring cost-efficiency without compromising performance. Through autonomous scaling adjustments, Sedai reduces the need for manual configuration and helps avoid unexpected cost spikes.

With the combination of Cluster Autoscaler, HPA, scheduled scaling, and Sedai’s autonomous tuning, you achieve a balanced, cost-effective, and responsive EKS cluster that efficiently meets workload demands.

Leveraging Cost-Effective Instance Types

Selecting the right EC2 instance type is vital when managing costs in Amazon EKS (Elastic Kubernetes Service). AWS provides various options tailored for different workload requirements, allowing businesses to strike a balance between cost and performance. Here's a breakdown of cost-effective EC2 instance types and when to use each.

1. Spot Instances

Description: Spot Instances let you tap into unused AWS capacity at heavily discounted rates (up to 90% less than On-Demand pricing). They’re excellent for applications that can handle interruptions, as AWS can reclaim this capacity on short notice when it is needed elsewhere.

Best For: Spot Instances are ideal for workloads like batch jobs, testing, or other non-critical tasks that don’t require constant uptime. By deploying Spot Instances in EKS, you can optimize costs without compromising flexibility in non-urgent scenarios.

2. Reserved Instances

Description: Reserved Instances (RIs) offer substantial discounts in exchange for a commitment to a one- or three-year term. They’re suited for predictable workloads that have steady, long-term needs. Reserved Instances reduce costs by locking in lower rates and are available in both standard and convertible options, offering some flexibility in case requirements change.

Best For: If your EKS workloads involve consistent applications or services that run around the clock, Reserved Instances can provide significant savings. This option is ideal for backend services, databases, or applications with stable demand.

3. Fargate

Description: AWS Fargate provides a serverless compute model for containers, allowing you to run EKS without managing the underlying EC2 infrastructure. With Fargate, you’re billed only for the resources you use, and it scales automatically according to demand. This means you don’t have to worry about provisioning or managing EC2 instances.

Best For: Fargate is ideal for short-lived or intermittent tasks and is particularly useful when workload patterns are unpredictable or sporadic. It’s great for development and testing environments where you need flexibility without provisioning EC2 capacity or committing to a reservation.

Combining Instance Types for Cost Efficiency

By mixing Spot Instances, Reserved Instances, and Fargate, you can create a cost-optimized setup for EKS:

- Spot Instances provide flexibility for batch jobs and non-critical workloads.

- Reserved Instances cater to long-term, consistent applications, keeping base costs low.

- Fargate offers serverless flexibility for workloads with fluctuating demand, ensuring you only pay for what you need.
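To see how a mix of purchase options changes the bill, the following sketch computes a blended hourly cost for a set of identical EC2 nodes. The On-Demand price and the discount fractions are illustrative assumptions, not AWS quotes, and Fargate is left out because it is billed per vCPU and GB of memory rather than per instance.

```python
def blended_hourly_cost(on_demand_price, mix, discounts):
    """
    mix:       {purchase option: number of instances}
    discounts: {purchase option: fraction off the On-Demand price}
    """
    return sum(count * on_demand_price * (1 - discounts[option])
               for option, count in mix.items())


# Assumed discounts for illustration only.
discounts = {"on_demand": 0.0, "reserved": 0.40, "spot": 0.70}

all_on_demand = blended_hourly_cost(0.10, {"on_demand": 10}, discounts)
mixed = blended_hourly_cost(0.10, {"reserved": 4, "spot": 4, "on_demand": 2}, discounts)

print(round(all_on_demand, 2), round(mixed, 2))
```

The reserved portion covers the steady baseline, Spot absorbs interruptible work, and a small On-Demand buffer handles what neither can.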

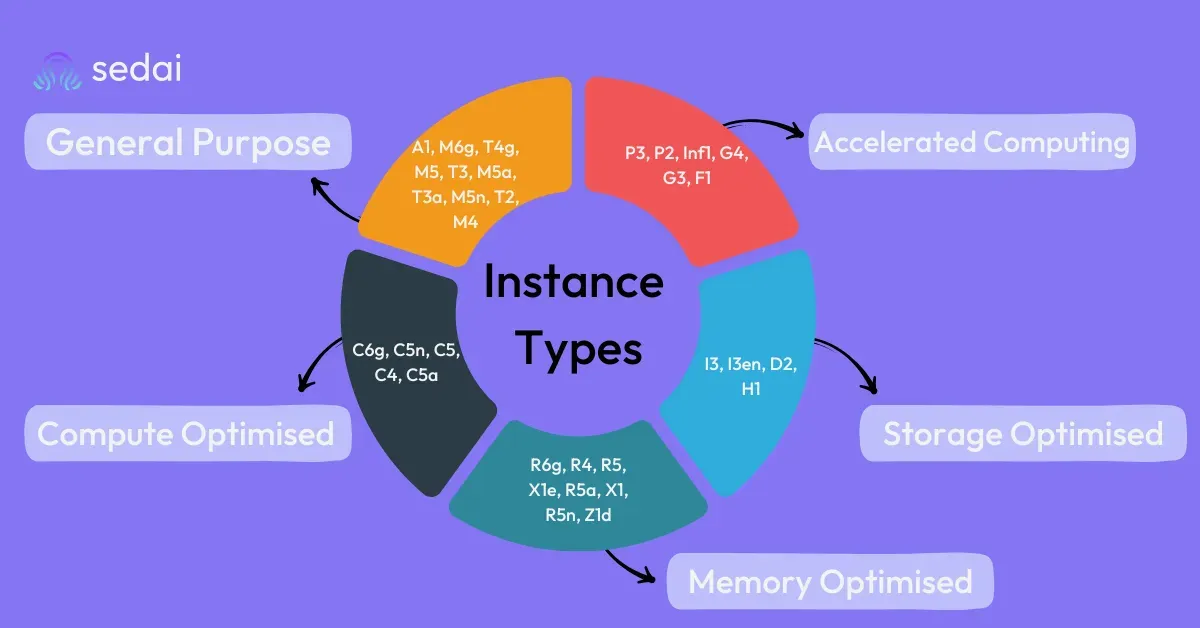

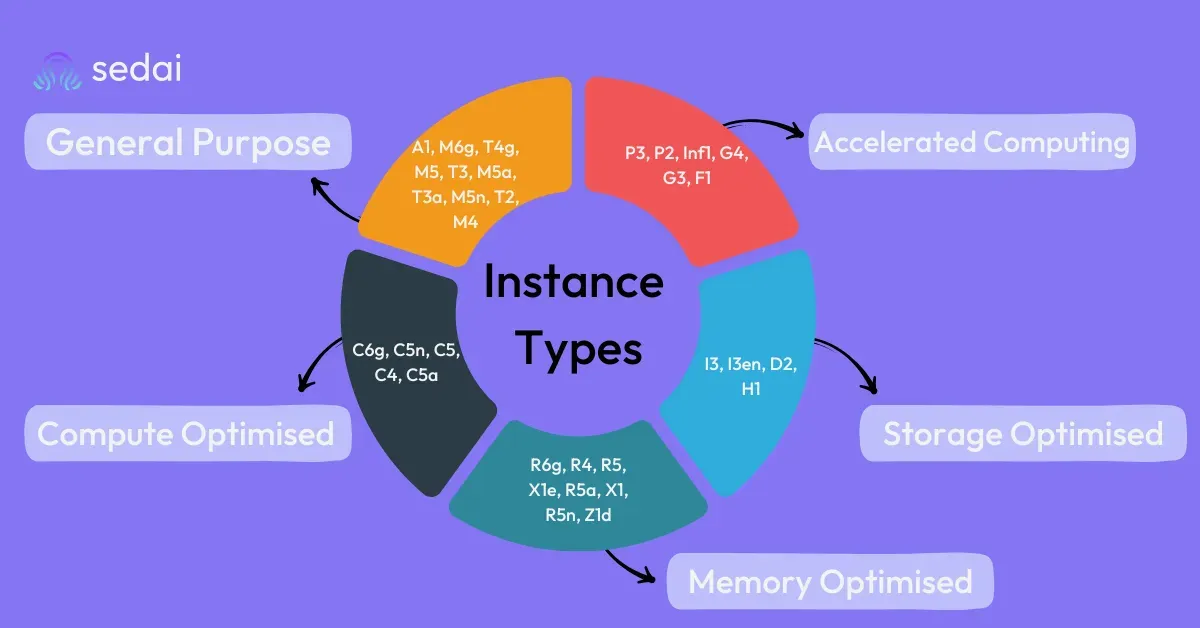

Choosing the Right EC2 Instance Type for EKS Cost Optimization

General-Purpose Instances: For balanced performance across CPU, memory, and networking, general-purpose instances (like T3 or M6g) are often a safe, cost-effective choice for a variety of EKS workloads.

Compute-Optimized Instances: If your EKS workloads are CPU-intensive, such as high-performance web servers or real-time analytics, compute-optimized instances (like C6g) will give you more bang for your buck without overpaying for unnecessary memory.

Memory-Optimized Instances: For workloads with significant memory needs—like data-intensive applications, databases, or real-time big data processing—memory-optimized instances (such as R5 or X2) deliver the required resources efficiently.

Optimizing Load Balancers and Minimizing Data Transfer Costs

Network-related costs, like those for load balancers and cross-zone data transfer, can sneakily inflate your EKS bill if left unchecked. Here’s how you can manage these expenses effectively.

1. Optimize Load Balancers

Load balancers play a crucial role in routing incoming traffic to your EKS pods, but not all load balancers are created equal in terms of cost and efficiency. Here are a few ways to optimize your load balancer usage:

- Choose the Right Type: Application Load Balancers (ALBs) operate at layer 7 and are the natural fit for HTTP/HTTPS traffic, while Network Load Balancers (NLBs) operate at layer 4 and are better suited for low-latency TCP traffic. Both are billed per hour plus usage-based capacity units, so the cheaper option depends on your traffic profile. For web applications and similar workloads, an ALB is usually the practical choice, especially because a single ALB can route to many services.

- Limit the Number of Load Balancers: Avoid deploying a load balancer for every service. Instead, consider using the AWS Load Balancer Controller (formerly the ALB Ingress Controller) to manage traffic for multiple services within a single ALB, reducing the need for multiple load balancers.

Quick Tip: Think of load balancers like toll booths on a highway. Fewer tolls mean smoother traffic flow and lower costs, especially for applications with high traffic volumes.

2. Reduce Cross-Zone Traffic

Cross-zone data transfer fees are often an overlooked line item, but they can add up quickly in multi-zone EKS setups. Here’s how to keep them in check:

- Ensure In-Zone Communication: Wherever possible, ensure that pods within your EKS cluster communicate within the same Availability Zone. By designing workloads to process data locally within a zone, you reduce the need for costly cross-zone data transfers.

- Balance Pods Across Zones: If cross-zone traffic can’t be fully avoided, try evenly distributing pods across zones to minimize cross-zone requests. Kubernetes can be configured to keep traffic as localized as possible, reducing unnecessary transfer fees.
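A quick estimate shows why locality matters. The sketch below assumes pods are spread evenly across zones and, by default, that requests are routed at random, so only 1/N of traffic stays local. The per-GB rate, charged in each direction, is an assumed figure; check current pricing for your Region.

```python
def cross_zone_cost(gb_per_month, zones, local_fraction=None,
                    price_per_gb_each_way=0.01):  # assumed rate, not an AWS quote
    """Estimated monthly charge for traffic that crosses Availability Zones."""
    if local_fraction is None:
        # Random routing across evenly spread pods: 1/N stays in-zone.
        local_fraction = 1 / zones
    cross_gb = gb_per_month * (1 - local_fraction)
    # Billed on both the sending and the receiving side.
    return round(cross_gb * price_per_gb_each_way * 2, 2)


print(cross_zone_cost(10_000, zones=3))                      # random routing
print(cross_zone_cost(10_000, zones=3, local_fraction=0.9))  # mostly zone-local routing
```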

3. Use VPC Private Endpoints and Caching

Data transfer between EKS and other AWS services (like S3 or DynamoDB) can add up if not managed thoughtfully. Setting up VPC Private Endpoints and caching frequently accessed data can help mitigate these costs:

- VPC Private Endpoints: With VPC endpoints, traffic to AWS services stays on the AWS network instead of passing through a NAT gateway or the public internet, which is often cheaper. Gateway endpoints for S3 and DynamoDB carry no additional charge, so they are a good first step when your EKS workloads access those services heavily.

- Implement Caching: Caching frequently accessed data (for example, using Amazon ElastiCache or Redis within the same VPC) minimizes redundant data transfers. This not only speeds up access times for users but also reduces the volume of data flowing across your network, saving on transfer fees.

Monitoring and Tracking Spending in EKS

Keeping tabs on your spending in Amazon EKS is essential for maintaining a cost-effective and scalable environment. AWS offers several built-in tools for cost tracking, and additional third-party solutions can provide even more granular insights. Here's an overview of key tools and how they can help you control and optimize costs in EKS:

Why Monitoring Tools Matter for EKS Cost Control

By using these tools, you can:

- Track Spending: Set up automated cost monitoring to prevent surprises. Cost Explorer, for example, helps you view historical cost data and forecast future expenses.

- Understand Usage Patterns: Detailed metrics from CloudWatch and Prometheus allow you to track resource utilization trends, making it easier to identify areas for potential savings, like unused storage or compute resources.

- Get AI-Driven Recommendations: Tools like Sedai proactively recommend cost-saving measures based on real-time data. For instance, Sedai might identify underutilized nodes and suggest resizing options to reduce costs.

Optimizing Resource Requests and Limits for Cost Control

Setting resource requests and limits correctly is vital for controlling costs without compromising performance. Oversized requests mean paying for resources that sit idle, while undersized ones can cause performance issues.

1. Setting Precise Resource Requests and Limits

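When configuring EKS, set your resource requests and limits in line with your actual needs. The kubectl top command shows current CPU and memory usage for pods and nodes; to track usage over time, pair it with a metrics store such as Prometheus or CloudWatch. With that history in hand, it becomes much easier to fine-tune settings and avoid excess costs.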
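One common way to turn usage history into a request value is to take a high percentile of observed usage and add some headroom. The sketch below does that with a nearest-rank percentile. The percentile, the headroom, and the sample values are all assumptions to tune for your workload, not a recommendation from any specific tool.

```python
def recommend_request(samples, percentile=95, headroom=0.15):
    """Suggest a resource request from observed usage samples (same unit in and out)."""
    if not samples:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples)
    # Nearest-rank percentile, using integer ceiling division.
    rank = max(1, (percentile * len(ordered) + 99) // 100)
    return round(ordered[rank - 1] * (1 + headroom), 3)


# Hypothetical CPU usage samples in millicores, including one spike.
cpu_millicores = [100, 120, 110, 300, 130, 125, 115, 140, 135, 128]
print(recommend_request(cpu_millicores, percentile=90))
```

Using a percentile rather than the maximum keeps a single spike from inflating the request, while the limit can still be set higher to absorb bursts.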

2. Avoiding Overprovisioning and Wastage

Overprovisioning resources is one of the main culprits for inflated cloud bills. Monitoring tools like Prometheus or Sedai can identify where you’re overspending so you can adjust accordingly.

Autonomous Optimization with Sedai: AI-Driven Cost Management

Tracking EKS costs manually can be overwhelming. This is where Sedai comes in, automating optimization processes and continuously monitoring workload patterns to right-size resources. For a practical demonstration of Sedai’s capabilities, refer to this step-by-step tutorial on optimizing AWS EKS cost and performance.

1. Self-Optimizing Clusters with Sedai

Sedai’s platform monitors EKS workloads, auto-adjusts resources based on demand, and implements scaling policies to match performance needs with cost efficiency. This removes the need for frequent manual oversight.

2. Real-World Savings with Sedai

In real-world applications, Sedai has helped companies reduce EKS costs by as much as 30%. Through continuous rightsizing, autoscaling, and balancing resource allocations, Sedai can ensure EKS clusters remain cost-efficient while maintaining performance standards.

Maximize EKS Efficiency with Proactive Cost Optimization

Achieving cost efficiency in Amazon EKS requires a clear understanding of your cost drivers and a strategic approach to resource management. From selecting the right instance types and enabling autoscaling to closely monitoring usage and fine-tuning resource allocation, each step plays a role in trimming unnecessary expenses.

To keep your EKS cluster optimized over the long haul, consider leveraging autonomous optimization tools like Sedai. With AI-driven insights, Sedai dynamically adjusts resources to meet demand, reducing the need for manual adjustments and ensuring continuous efficiency. By staying proactive with regular monitoring and automation, you can enjoy a scalable, cost-effective EKS environment that adapts effortlessly to your business needs.

Book a Demonstration Now to discover how Sedai can help your business optimize cloud spending and enhance your EKS performance with minimal oversight.

FAQs

1. How do Spot Instances help reduce costs in Amazon EKS, and when are they a good choice?

Answer: Spot Instances offer significantly discounted rates (up to 90% off On-Demand pricing) by using spare AWS capacity. They are a good choice for fault-tolerant, non-critical workloads like batch processing or testing environments. However, since AWS can reclaim this capacity with little notice, Spot Instances are best suited for applications that can handle interruptions or have flexible timing requirements.

2. What is the role of Cluster Autoscaler and Horizontal Pod Autoscaler (HPA) in EKS cost optimization?

Answer: The Cluster Autoscaler automatically adjusts the number of worker nodes based on workload needs, ensuring that your cluster scales up or down to match demand. The Horizontal Pod Autoscaler (HPA) scales the number of pod replicas based on CPU or memory utilization. Together, these tools help eliminate idle resources and prevent over-provisioning, reducing unnecessary costs by aligning resources with actual demand.

3. How can I minimize cross-zone data transfer costs in Amazon EKS?

Answer: Cross-zone data transfer can add unexpected costs. To minimize this, ensure your workloads communicate within the same Availability Zone as much as possible. Distribute your pods across zones evenly to reduce the likelihood of cross-zone communication and configure your applications to keep data transfer localized when feasible. Additionally, using VPC Private Endpoints for AWS services minimizes data transfer charges by keeping traffic within AWS’s private network.

4. What are the benefits of using Fargate for cost optimization in EKS, and when should I choose it over EC2 instances?

Answer: AWS Fargate is a serverless compute engine that charges based on the resources you actually use, without the need to manage EC2 instances. Fargate is ideal for short-lived or intermittent tasks, where workload patterns are unpredictable, such as development and testing. It’s a cost-effective choice for workloads that don’t need dedicated EC2 instances, as you avoid paying for idle capacity.

5. How does Sedai’s autonomous optimization compare to AWS’s built-in tools like Cost Explorer for managing EKS expenses?

Answer: While AWS Cost Explorer provides insights into spending trends and identifies cost drivers, it requires manual analysis and adjustments. Sedai, on the other hand, automates optimization by continuously monitoring and adjusting resource allocations in real time based on workload patterns. Sedai’s AI-driven platform takes actions like resizing instances, adjusting autoscaling, and optimizing pod resources, helping reduce costs dynamically without manual intervention.

6. What are the key differences in cost between Application Load Balancers (ALBs) and Network Load Balancers (NLBs) in EKS?

Answer: ALBs are typically more cost-effective for routing HTTP/HTTPS traffic, while NLBs are designed for lower-latency TCP/UDP traffic and may be more expensive. Choosing the appropriate load balancer based on your application’s needs can help reduce costs. ALBs are better suited for most web applications, whereas NLBs are ideal for use cases needing low-latency connections, such as real-time data processing.

7. Can using AWS Graviton instances reduce EKS costs, and what workloads are they best suited for?

Answer: Yes, AWS Graviton instances, powered by Arm-based processors, provide up to 40% better price performance over comparable x86-based instances. They are suitable for general-purpose, compute-optimized, and memory-intensive workloads, such as web servers, batch processing, and in-memory databases. Switching to Graviton instances can be an effective way to reduce costs without sacrificing performance.

8. How does right-sizing impact EKS costs, and what tools can help with this?

Answer: Right-sizing involves adjusting the size of EC2 instances and Kubernetes pod resource requests to align with actual usage, avoiding over-provisioning. AWS Compute Optimizer and tools like kube-resource-report can help identify unused or over-provisioned resources. Additionally, Sedai’s autonomous platform dynamically right-sizes resources in real-time, ensuring your cluster is only using what it needs, thus reducing slack costs.

9. Why is managing control plane logging important for EKS cost optimization, and how can I optimize it?

Answer: Control plane logging can incur significant data ingestion and storage fees in CloudWatch. To optimize these costs, selectively enable logging only for components that require monitoring and configure log retention policies to minimize storage. Using Amazon GuardDuty for threat detection and limiting log volume can further reduce expenses while maintaining security.

10. How can I use AWS Cost Allocation Tags effectively to monitor and reduce EKS costs?

Answer: AWS Cost Allocation Tags allow you to categorize resources by project, environment, or team, making it easier to identify cost drivers and allocate costs appropriately. By tagging EKS resources, you can track expenses associated with each application or team, helping to identify high-cost areas for optimization. This level of visibility encourages accountability and more effective resource management across your organization.
