Introduction

This article summarizes a panel discussion on monitoring and cost optimization strategies for cloud-based systems, held at our annual autocon conference. We focus on cost management, performance optimization, and the emergence of autonomous solutions. The panel featured:

- Sumesh Vadassery, Senior Director Engineering, PayPal

- Kumar Rethi, CTO, TopCoder (ex-eBay)

- John Fiedler, CISO and Head of Platform Engineering, Ironclad

- Benjamin Thomas, Co-Founder & President, Sedai

Read on or you can watch the video version here.

Optimizing Cloud Scaling for Cost and Performance: Insights from PayPal's Senior Director of Platform Engineering

A new generation of monitoring and optimization tactics has emerged for running cloud systems at scale. Sumesh Vadassery, Senior Director leading platform engineering at PayPal, shed light on how PayPal manages cloud scaling to deliver the best customer experience while balancing cost and performance.

Sumesh discussed how PayPal handles its website traffic. The team observed a traffic pattern that follows the typical internet trend, with Monday and Sunday being the busiest days. On those days, traffic is heavy from 3:00 AM to around 3:00 PM and peaks at 11:00 AM. Because sales tend to decline after 3:00 PM, they can optimize the site by reducing the size of the infrastructure deployed.

In the past, PayPal manually adjusted the site's size around seasonal events like Black Friday and Cyber Monday. Now they use machine learning (ML) to predict the required site size every three hours and readjust it every half an hour. This approach allows them to operate with around 1.5 million containers in the evenings and scale up to 5 million containers in the mornings, saving approximately 60% of the site's resources.
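The predict-then-readjust cycle described above can be sketched roughly as follows. This is a minimal illustration, not PayPal's implementation: a simple same-weekday, same-hour average stands in for their ML models, and every name and constant here (`REQUESTS_PER_CONTAINER`, `HEADROOM_PERCENT`, `MIN_CONTAINERS`, `forecast_load`, `containers_needed`, `readjust`) is a hypothetical chosen for the example.

```python
import math
from statistics import mean
from typing import Dict, List, Tuple

# Hypothetical constants, for illustration only.
REQUESTS_PER_CONTAINER = 50   # assumed sustainable load per container
HEADROOM_PERCENT = 20         # assumed safety margin on top of the forecast
MIN_CONTAINERS = 10           # assumed floor so the site never scales to zero

# Maps (weekday, hour) to loads observed at that time in past weeks.
History = Dict[Tuple[int, int], List[float]]


def forecast_load(history: History, weekday: int, hour: int) -> float:
    """Forecast load as the mean of past observations for the same weekday and hour."""
    samples = history.get((weekday, hour), [])
    return mean(samples) if samples else 0.0


def containers_needed(load: float) -> int:
    """Convert a load figure into a container count, with headroom and a floor."""
    raw = math.ceil(load * (100 + HEADROOM_PERCENT) / (100 * REQUESTS_PER_CONTAINER))
    return max(MIN_CONTAINERS, raw)


def readjust(planned: int, observed_load: float) -> int:
    """Short-interval correction: never run below what the observed load requires."""
    return max(planned, containers_needed(observed_load))


if __name__ == "__main__":
    history = {(0, 11): [9000.0, 11000.0], (0, 20): [2000.0, 3000.0]}
    morning_plan = containers_needed(forecast_load(history, 0, 11))
    evening_plan = containers_needed(forecast_load(history, 0, 20))
    print(morning_plan, evening_plan, readjust(evening_plan, 4000.0))
```

In this sketch the longer-horizon forecast sets the plan and the short-interval `readjust` step only corrects upward. A production system would also need to scale down safely and account for container start-up time.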

But that's not all. As Sumesh put it: “Our ML-based automation enables us to adapt to daily changes in user sessions by analyzing factors such as latency, CPU usage, and memory. This automation has freed up our team to focus on improving the applications themselves, rather than managing the infrastructure. By achieving a 60% efficiency gain, we've saved millions of dollars for PayPal and significantly reduced the complexity of managing our infrastructure.” This, in turn, has helped PayPal's business operate at a faster pace.

Unlocking Operational Efficiency: PayPal's Automation Journey and Insights

Sumesh also emphasized that PayPal operates a highly complex website that interacts with multiple agencies and business units, each generating billions of dollars in revenue annually. Additionally, the core PayPal site itself generates around $20 billion in revenue per year. To give an idea of the scale, the core site consists of over 4,000 stateless services and 200 stateful services.

Sumesh acknowledged the challenges of manually operating thousands of microservices and managing stateful services. With over 200 million changes to the site per year, the resulting production issues and manual triaging would have been overwhelming. To overcome these challenges, PayPal implemented automation to roll out changes using predictive analysis. This automation not only made issue triaging faster but also gave their SREs better visibility. As a result, the need for a large number of SREs was reduced, allowing PayPal to allocate resources to more valuable areas such as application fine-tuning and performance optimization.

Transitioning to Autonomous Systems: Addressing Challenges and Leveraging Signals for Intelligent Decision-Making

Let's dive into some key points shared by Kumar, the CTO of Topcoder, emphasizing the importance of autonomous systems and the value they bring to businesses. Drawing from his experience at eBay, where he pioneered the use of the PaaS platform on OpenStack, Kumar shed light on why autonomous systems are worth considering, even when organizations already have various automation components in place.

Automation itself has been a practice for a considerable time, including various point automations at eBay. These automations involved tasks like monitoring servers to determine whether they exceeded a certain memory capacity or had an excessive number of connections. However, over time, it became evident that these automations were causing more issues than they were resolving.

Kumar identified three main problems with the existing automation approach.

- Most automation was designed to handle extreme situations based on past performance data rather than anticipating future changes. This became problematic as the site underwent frequent updates, including core roll-outs, without considering seasonal variations or adapting to new changes. Consequently, these automations began to work against the intended goals.

- The second issue was that automation primarily focused on aiding the Site Reliability Engineering (SRE) team in managing infrastructure, rather than addressing the behavior of applications. Kumar emphasized that the performance and health of applications were crucial for business success. While optimal infrastructure was important, an application that constantly experienced failures undermined the benefits of a well-managed infrastructure. Therefore, it was necessary to involve the application team in the automation process to ensure its effectiveness.

- As applications became increasingly complex, the number of roll-outs multiplied, and developers had the freedom to deploy changes at any time. Despite adding more automations, the SRE team's workload did not decrease. This was because the SREs lacked visibility into the application and were disconnected from its behavior. To address these challenges, the solution lay in transitioning from mere automation to autonomous systems.

At eBay, they recognized the need for an autonomous system that could analyze signals from both the applications and the infrastructure. By considering these signals collectively, the autonomous system could make intelligent decisions. This shift from automation to autonomous systems was driven by the rapid evolution of technology and the need to keep pace with changes. Rather than relying on increasing the volume of automation, eBay chose to prioritize the development of autonomous systems.
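To make the idea of considering application and infrastructure signals together more concrete, here is a minimal sketch. It is not eBay's system: the `Signals` fields, the thresholds, and the action names are all hypothetical, and a real autonomous system would learn such thresholds rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """One observation window. All field names are hypothetical."""
    cpu_utilization: float      # infrastructure signal, 0.0 to 1.0
    memory_utilization: float   # infrastructure signal, 0.0 to 1.0
    error_rate: float           # application signal, fraction of failed requests
    p95_latency_ms: float       # application signal
    recent_rollout: bool        # application signal: was code deployed in this window?


def decide(s: Signals, latency_slo_ms: float = 300.0, max_error_rate: float = 0.01) -> str:
    """Pick an action from application and infrastructure signals taken together."""
    app_unhealthy = s.error_rate > max_error_rate or s.p95_latency_ms > latency_slo_ms
    infra_saturated = s.cpu_utilization > 0.8 or s.memory_utilization > 0.8

    if app_unhealthy and s.recent_rollout and not infra_saturated:
        # Failures right after a release on idle hardware point at the code, not capacity.
        return "rollback"
    if app_unhealthy and infra_saturated:
        return "scale_out"
    if app_unhealthy:
        # Unhealthy application, healthy infrastructure, no rollout: hand off to a human.
        return "escalate"
    if s.cpu_utilization < 0.3 and s.memory_utilization < 0.3:
        return "scale_in"
    return "no_action"


if __name__ == "__main__":
    print(decide(Signals(0.2, 0.3, 0.05, 900.0, True)))
```

A point automation that watched only memory or connection counts would see nothing wrong in the first case, because the infrastructure looks healthy. The application signals are what reveal the problem.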

Enhancing Application Performance: The Role of Autonomous Management Systems alongside Kubernetes and Mesos

Let me share an interesting point that emerged from my conversation with Kumar Rethi. We touched upon the topic of Kubernetes and Mesos, and the question arose: why add another system when we already have these well-established solutions? Well, it turns out that Kubernetes and Mesos are primarily focused on managing clusters and infrastructure, ensuring they reach the desired state. However, when it comes to what's happening inside those instances and how applications are performing, it becomes a bit of a black box. We've encountered situations where we tried various approaches to fix issues, like restarting instances, but the application still didn't perform as expected. It became evident that we needed a solution that goes beyond managing infrastructure and considers the signals and behavior of the applications themselves.

While Kubernetes and Mesos play a vital role, especially with the rise of microservices and clusters, they can't solely address the complex logic and behavior of applications. This is where the concept of an autonomous management system comes into play. By complementing existing solutions, an autonomous system focuses on understanding application signals and expected behavior. Even without the scale that Sumesh mentioned in the PayPal example, we've witnessed that with proper autonomous management, the need for a dedicated DevOps person can diminish significantly. The emphasis shifts to understanding how applications behave while leaving the infrastructure management to the autonomous system.

As more organizations adopt microservices and intricate business logic and leverage Kubernetes, the relevance of an autonomous management system grows. It's important to note that these approaches are not in conflict or competition with each other; they work together to address different aspects of the evolving landscape.

The Role of Autonomous Systems in Application Optimization and Fine-Tuning

During our conversation with John Fiedler, CISO and head of platform engineering at Ironclad, we delved into the significant role that autonomous and automated systems play, particularly at the application layer. Over the past decade, we have witnessed remarkable success in automating infrastructure, primarily driven by the advent of virtualization, which revolutionized performance and unlocked the potential of underlying hardware. This paved the way for what we now refer to as the programmatic data centre or, more commonly, the cloud, where everything operates through APIs.

Subsequently, the introduction of AI and ML further enhanced the landscape. John pointed to Salesforce, which made strategic acquisitions of numerous startups that played a pivotal role in shaping the development of Einstein. Notably, as they embarked on that journey, they encountered exceptional teams with cost-effective solutions that revolved around auto-scaling. Leveraging technologies like EMR or Spark, these teams configured auto-scaling mechanisms that adjusted resources based on workload fluctuations. They were able to predict the nature of incoming data, and what started with 10,000 predictions eventually escalated to billions per day.

At that stage, their cost-effectiveness was limited to the AWS infrastructure, leaving the layers above untouched. Fine-tuning complex models with numerous parameters proved impossible for humans. Although they achieved a 20% margin for SaaS applications, reducing costs further requires autonomous systems. Insights from the Einstein journey guide us in making applications more cost-effective amid layered complexity and numerous adjustable options within models and infrastructure.

Enhancing Efficiency and Security: Leveraging Autonomous Systems in Business Management

John Fiedler noted: “I want to stress that we shouldn't fear autonomous systems. Instead, we should see them as valuable tools that can complement our work. Investing in innovation and utilizing autonomous systems can significantly enhance the efficiency of both individuals and companies. From a security standpoint, we face a significant challenge due to a shortage of resources. The security industry is still lacking in personnel, including former SRE teams, operations teams, and platform teams. Furthermore, many organizations today have an extensive range of SaaS applications, with the number only increasing. This abundance of systems and applications puts a strain on security and necessitates the adoption of autonomous systems.

“To effectively monitor the entire surface area of a business, especially in terms of security, we require the assistance of autonomous or automated systems. Traditional approaches like hiring a network operations center (NOC) or security operations center (SOC) are no longer sufficient. The emergence of monitoring systems with built-in logging and metrics has been valuable, but with the influx of data and integrations, it's becoming overwhelming. Autonomous systems can help us cope with this complexity.”

It's interesting to see how the concept of cost versus profit centers extends beyond our current discussion. We can find examples where optimizing investments in cloud costs can actually have a positive impact on revenue generation. It highlights the interconnectedness of various aspects within a business and the potential for cost optimization to drive profitability. These insights from John provide valuable perspectives on the intricacies of business management. Thank you, John, for sharing your fascinating thoughts.

Addressing Limitations in Kubernetes: Leveraging Autonomous Systems for Application Awareness and Intelligent Configuration

Benji discussed the role of Kubernetes and its limitations in terms of autonomous operation. He shared some data points that he came across on LinkedIn, mentioning that there are 450,000 professionals worldwide working with Kubernetes, solving around 85,000 job-related issues. This indicates that Kubernetes is not fully autonomous, as it requires human management. One of the things Kubernetes does well is orchestrating clusters by efficiently arranging containers in the right configuration. However, it lacks awareness of application behavior and specific requirements. Benji highlighted the example of seasonal changes at PayPal, where application behavior varies daily, weekly, and annually.

To address such scenarios, tools like the Horizontal Pod Autoscaler (HPA) and Vertical Pod Autoscaler (VPA) are provided for engineers to configure specific applications. Engineers need to write configurations for each application, considering factors like CPU usage, memory requirements, and open files. However, maintaining these configurations becomes challenging due to dynamic changes in the organization, such as acquisitions or modifications in microservices. Managing configurations for hundreds or thousands of applications in Kubernetes becomes complex and requires dedicated teams of performance engineers and SREs.
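For context on what these per-application configurations control, the sketch below is a simplified version of the replica calculation documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), skipped when the ratio is within a tolerance of 1.0 and clamped to the configured minimum and maximum. The function name, its parameters, and the example values are assumptions for illustration. The real controller also handles missing metrics, pod readiness, and stabilization windows.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA-style calculation; not the full controller logic."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: leave the replica count alone.
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the bounds the engineer configured for this application.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 4 replicas at 90% average CPU against a 60% target.
    print(desired_replicas(4, 90.0, 60.0))
```

The target metric and the minimum and maximum bounds are exactly the values engineers must choose and keep current for every application, which is where the maintenance burden described above comes from.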

He also highlighted that public cloud providers like Amazon offer serverless, cloud-native features to autonomously handle complex challenges, such as configuration changes, behavior prediction, and seasonality management. Automation must account for safety checks such as traffic management and resource depletion, while closed-loop systems that learn and optimize over time ensure the safety and effectiveness of automated processes. Although Kubernetes excels in cluster management and resource scheduling, it lacks application awareness and intelligent configuration capabilities. Autonomous systems are needed to intelligently utilize the configuration options provided by platforms like Kubernetes, enabling them to understand application dependencies, adapt to changing behaviors, and configure platforms effectively to meet specific needs.
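One way to picture a closed loop with safety checks is the sketch below. It is an illustration under stated assumptions, not Sedai's or any cloud provider's implementation: `closed_loop_step`, its callbacks (`propose`, `apply_size`, `is_healthy`), and the step and floor limits are all hypothetical.

```python
from typing import Callable


def closed_loop_step(current: int,
                     propose: Callable[[int], int],
                     apply_size: Callable[[int], None],
                     is_healthy: Callable[[], bool],
                     max_step_fraction: float = 0.25,
                     floor: int = 2) -> int:
    """Run one propose, apply, verify cycle and return the size left in place."""
    proposed = propose(current)

    # Safety check 1: limit how far a single step may move the system.
    max_step = max(1, int(current * max_step_fraction))
    bounded = max(current - max_step, min(current + max_step, proposed))

    # Safety check 2: never go below a minimum capacity.
    bounded = max(floor, bounded)
    if bounded == current:
        return current

    apply_size(bounded)
    if is_healthy():
        # The change held up under real traffic, so keep it.
        return bounded

    # Close the loop: the observation contradicts the proposal, so revert.
    apply_size(current)
    return current


if __name__ == "__main__":
    applied = []
    size = closed_loop_step(20, lambda n: 5, applied.append, lambda: True)
    print(size, applied)
```

Repeating this step lets the system approach an aggressive target gradually, checking application health after every change instead of trusting the proposal outright.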

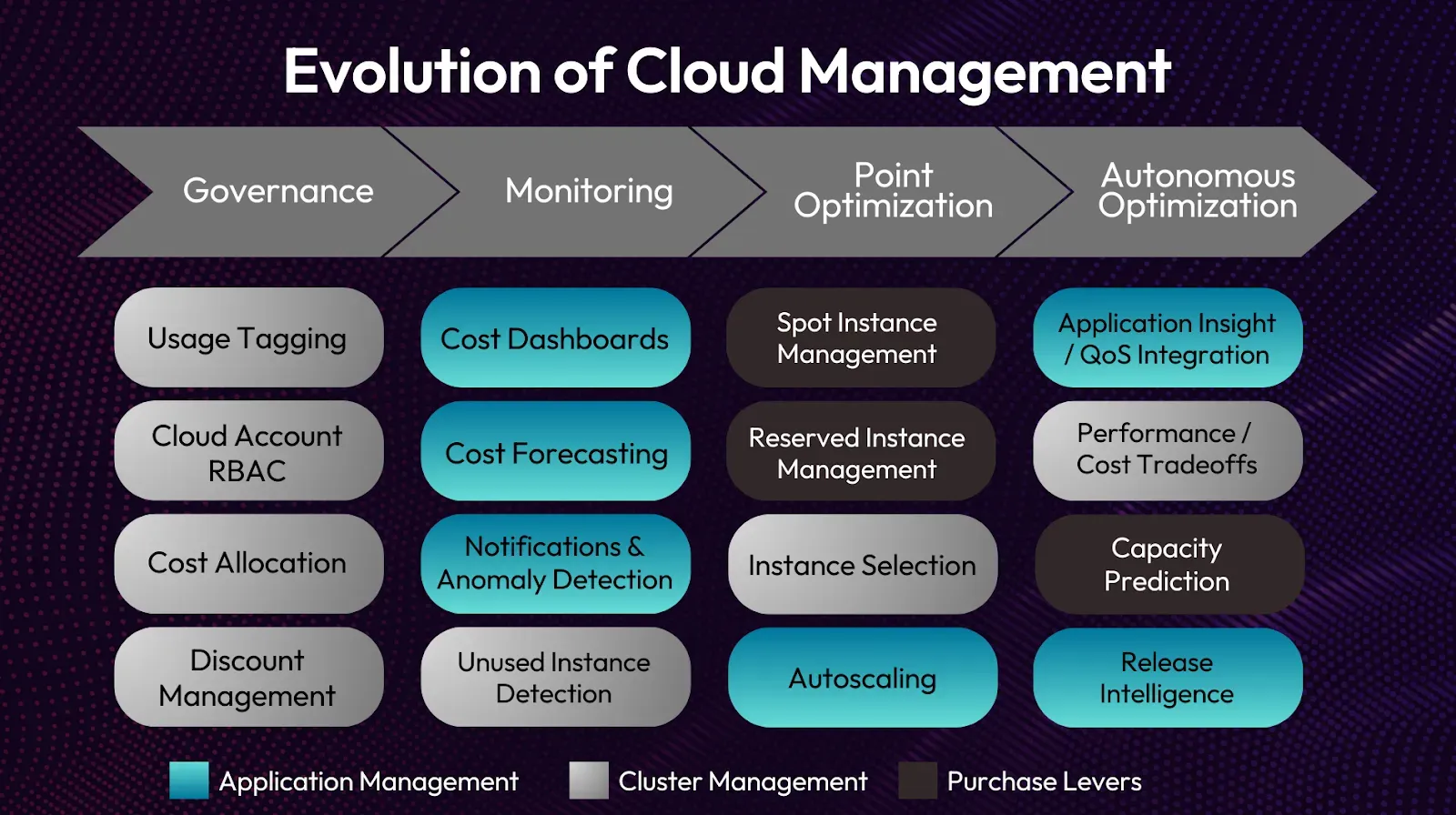

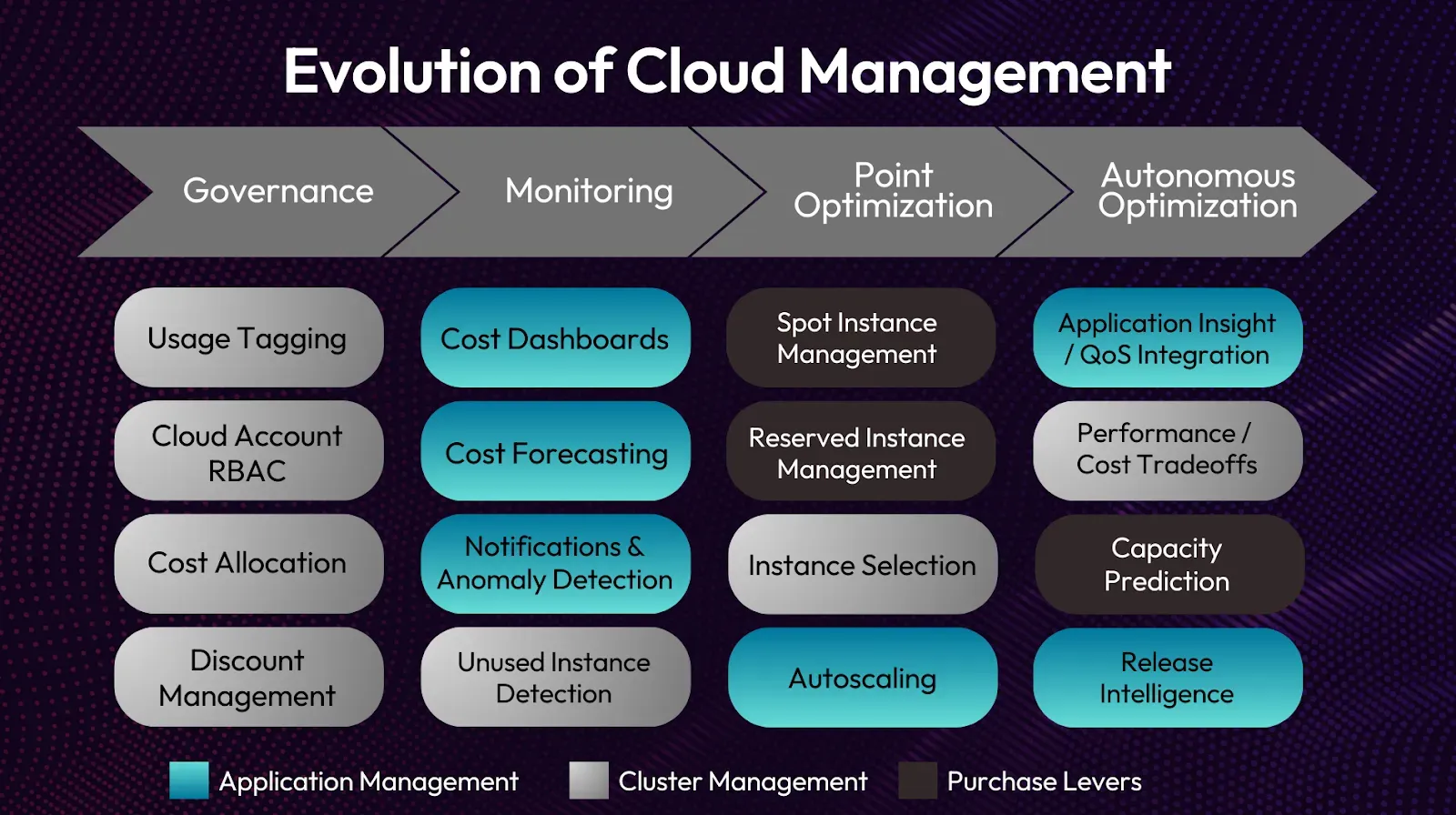

The Role of Intelligent Knobs and Semi-Automation in Cloud Management

Benji noted that in semi-automated systems, companies can save costs by implementing intelligent knobs and semi-automation points. However, maintaining this semi-automation requires expertise and investment in cloud assets, which may divert focus from the company's core business. He emphasized the importance of considering where companies want to invest: in their own business or in the cloud as a business. Optimizing costs in terms of governance, application, cluster, and purchasing options is crucial, but the options are constantly changing. Building expertise and writing semi-automation for these aspects can be time-consuming and may not align with the company's primary goals. Benji highlighted the need to differentiate between who writes the automation and where the company's focus lies.

Furthermore, Benji mentions that in Sedai's deployments, they have observed an additional 50% cost optimization in app and node purchasing. Even with semi-automation, companies tend to prioritize safety by over-provisioning resources. However, there is still a cost benefit to be gained on top of semi-automation by optimizing app performance and taking advantage of purchasing options.