This article is based on insights shared by Kumar Ramanathan of Velocity Global at Sedai’s autocon conference. What follows is an edited version of his talk, told from Kumar’s perspective. Learn more here about the talk, including a comparison of serverless and Kubernetes approaches.

Introduction

Companies are constantly seeking innovative ways to optimize their development environments for efficiency, scalability, and cost-effectiveness. Velocity Global, a leading employer of record (EOR) platform, has embraced a serverless-first architecture to address these challenges.

This article explores our approach to creating a streamlined, scalable development environment using serverless technologies. We'll delve into how this approach simplifies infrastructure management, enhances developer productivity, and provides the flexibility needed to handle fluctuating workloads.

By examining Velocity Global's strategies, including our "Paved Roads" concept, I hope to uncover valuable lessons for organizations looking to leverage serverless architecture in their own development processes.

Velocity Global’s Serverless Approach: Simplicity and Scalability

Velocity Global, a leading employer of record (EOR) platform that simplifies global hiring and workforce management, has adopted a serverless-first architecture to optimize its infrastructure for simplicity and cost-effectiveness.

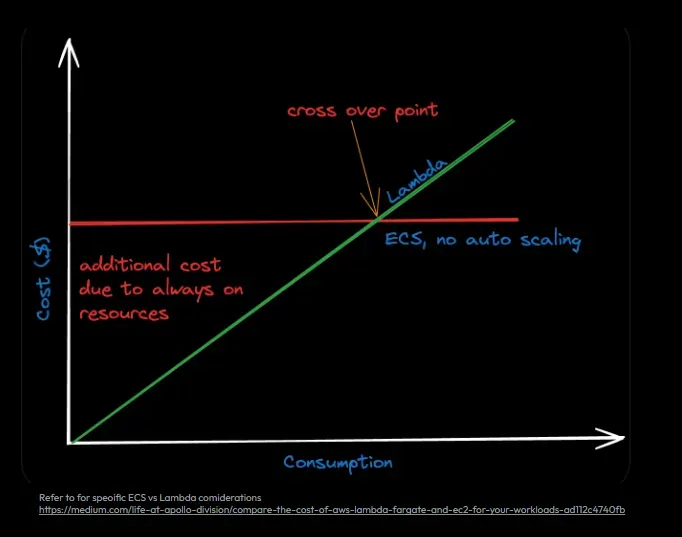

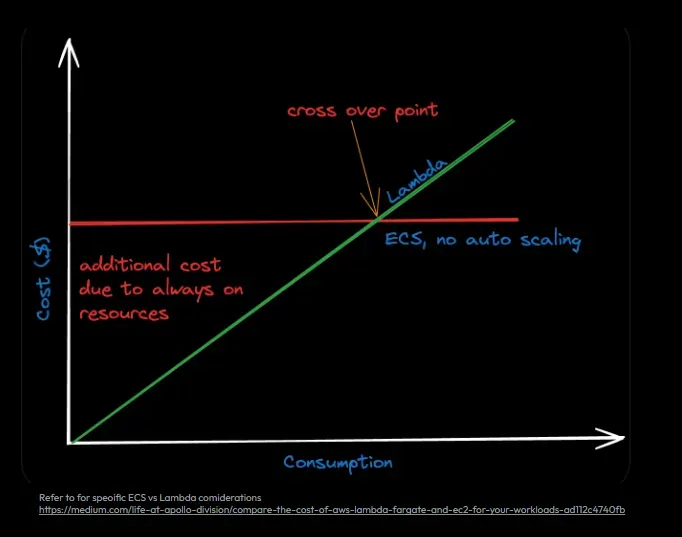

Unlike Kubernetes users (such as BILL, whose development environment we cover here), Velocity Global has chosen serverless computing to align with its fluctuating workload demands. This approach, where costs are based on actual usage rather than on how long servers are kept running, is ideal for a company like Velocity Global, where demand varies throughout the day or month. With platforms like AWS Lambda, the company benefits from automatic scaling, availability, and real-time resource management. This means their developers can focus on building business logic and new features, while the cloud provider manages the infrastructure. For Velocity Global, serverless computing significantly reduces operational overhead, accelerates development, and ties cost directly to usage: they pay only for the resources they consume, eliminating the need to maintain servers 24/7.

Advantages of Velocity Global’s Serverless Approach

Velocity Global’s serverless approach delivers key benefits that streamline infrastructure management and improve cost efficiency. Below are some of the most impactful advantages of adopting this model:

- Usage-Based Billing: One of the most significant benefits of serverless is that businesses are billed based on the actual resources consumed rather than how long the infrastructure has been running. This means that when no activity is occurring, the costs are minimal.

- Better Time-to-Value: Serverless architecture abstracts infrastructure concerns, enabling developers to focus purely on application logic. This leads to faster deployment cycles and quicker delivery of value.

- Simplified Infrastructure Management: Since the platform (like AWS) handles scaling and availability, developers don’t need to worry about manually managing containers or orchestrating server clusters.

- Scalability Without Complexity: Serverless systems scale automatically based on the workload, allowing companies like Velocity Global to handle traffic spikes seamlessly without the need for manual intervention.
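
To make the usage-based billing point concrete, here is a minimal sketch that compares a pay-per-use bill with an always-on server. Every rate and workload figure in it is a hypothetical placeholder, not an actual AWS price or a Velocity Global number, so substitute current pricing before drawing any conclusions.

```python
from dataclasses import dataclass

# All rates below are hypothetical placeholders for illustration only.
PRICE_PER_GB_SECOND = 0.0000167      # assumed compute price
PRICE_PER_MILLION_REQUESTS = 0.20    # assumed request price
ALWAYS_ON_SERVER_PER_HOUR = 0.10     # assumed fixed server price


@dataclass
class Workload:
    requests_per_month: int
    avg_duration_seconds: float
    memory_gb: float


def usage_based_cost(w: Workload) -> float:
    """Cost that scales with work done: zero requests means zero cost."""
    gb_seconds = w.requests_per_month * w.avg_duration_seconds * w.memory_gb
    compute = gb_seconds * PRICE_PER_GB_SECOND
    requests = w.requests_per_month / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return compute + requests


def always_on_cost(hours_per_month: float = 730.0) -> float:
    """Cost of a server that bills for every hour, busy or idle."""
    return hours_per_month * ALWAYS_ON_SERVER_PER_HOUR
```

With zero requests the usage-based bill is zero, while the always-on figure stays the same regardless of traffic. At sustained high volume the comparison can flip, which is why the model suits fluctuating workloads in particular.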

However, adopting a serverless-first approach is not without its challenges. Developers working in serverless environments often encounter cold starts, which can lead to initial latency when a serverless function is invoked after being idle. Additionally, there are execution limits with AWS Lambda (such as a 15-minute execution limit) that developers must navigate. Deciding on the right granularity for serverless functions is another design-time challenge; for example, figuring out whether to create multiple smaller functions or fewer, larger ones.

Overcoming Challenges with Serverless Development

While serverless-first architecture offers simplicity and cost-efficiency, it also introduces challenges that require careful consideration for smooth development:

- Cold starts: Idle serverless functions experience latency when invoked for the first time after being dormant. This phenomenon, known as cold starts, can lead to delays, affecting user experience, especially in latency-sensitive applications.

- Execution limit: AWS Lambda enforces a 15-minute execution limit, compelling developers to reconsider the granularity of their functions. They must decide between creating many small functions or building larger, more complex functions to handle longer processes, balancing simplicity and performance.

- East-West traffic and event-driven orchestration: Serverless architectures, being event-driven, introduce challenges in managing East-West traffic (traffic between services) and handling event-driven orchestration. Developers must account for distributed systems and eventual consistency models, which differ from traditional systems, to prevent bottlenecks and ensure consistent behavior.

These challenges highlight the complexities that come with adopting a serverless architecture. Developers must carefully weigh the trade-offs between simplicity, performance, and system consistency to ensure an efficient and reliable solution.
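
As an illustration of working within the execution limit, the sketch below processes a batch until the remaining time runs low, then returns a cursor so a later invocation can resume. It relies on `get_remaining_time_in_millis()`, which the AWS Lambda Python context object provides; the safety margin, the event shape, and `process_item` are assumptions made for this example, and re-invoking with the cursor is left to whatever caller or orchestrator is in use.

```python
SAFETY_MARGIN_MS = 10_000  # assumed buffer for checkpointing before timeout


def process_item(item):
    """Hypothetical unit of work; replace with real business logic."""
    return item * 2


def handler(event, context):
    items = event["items"]
    cursor = event.get("cursor", 0)  # where a previous invocation stopped
    results = []
    while cursor < len(items):
        # Stop early rather than being cut off mid-item by the platform.
        if context.get_remaining_time_in_millis() < SAFETY_MARGIN_MS:
            break
        results.append(process_item(items[cursor]))
        cursor += 1
    return {"results": results, "cursor": cursor, "done": cursor >= len(items)}


class FakeContext:
    """Test double that mimics the context's remaining-time method."""

    def __init__(self, budgets_ms):
        self._budgets = iter(budgets_ms)

    def get_remaining_time_in_millis(self):
        return next(self._budgets)
```

Returning a cursor keeps each function small and resumable, which is one way to approach the granularity trade-off described above.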

Overcoming Development Challenges: Testing and Deployment

Testing in serverless environments presents unique difficulties. Developers often struggle to bring all their application’s infrastructure dependencies (like queues, gateways, and databases) into local development environments. While mocking these services can help, it is not always accurate and can introduce inconsistencies between local tests and production environments.
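
A minimal sketch of the mocking approach, assuming the business logic receives its queue as a parameter so a local test can pass an in-memory fake. The names `handle_signup` and `InMemoryQueue` and the event shape are hypothetical. As noted above, a fake like this checks the logic only; it does not reproduce the delivery or failure behavior of a real cloud queue.

```python
from typing import Protocol


class Queue(Protocol):
    def send(self, message: dict) -> None: ...


class InMemoryQueue:
    """Fake queue used in local tests instead of a real cloud queue."""

    def __init__(self) -> None:
        self.messages: list[dict] = []

    def send(self, message: dict) -> None:
        self.messages.append(message)


def handle_signup(event: dict, queue: Queue) -> dict:
    """Hypothetical business logic: validate input, then emit an event."""
    email = event.get("email", "")
    if "@" not in email:
        return {"status": 400, "error": "invalid email"}
    queue.send({"type": "user_signed_up", "email": email})
    return {"status": 202}
```

Because the dependency is injected, the same function can run against the fake locally and against a real queue client once deployed.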

In response, some developers opt to test in the cloud, deploying their code to a live environment to see how it behaves. However, this approach can lead to long deployment cycles, slowing down development when multiple iterations are needed. Developers often want to "make a change, test it, make another change, and test again," but lengthy deployment times can make this iterative process inefficient.

In terms of deployment, tools such as CloudFormation, CDK, SAM, and the Serverless Framework are available to manage infrastructure, but each comes with its own learning curve. For small, autonomous development teams, these tools can be overwhelming and distract from the core task of solving business problems and delivering features quickly.

Monitoring and Optimizing in Production

Monitoring serverless functions in production adds complexity due to their ephemeral nature. These functions spin up and down as needed, making it difficult to track long-running issues. Tools like DataDog provide visibility, but latency, cold starts, and performance must still be carefully monitored to ensure smooth operation.

A major concern in serverless environments is managing costs. Although billing is based on usage, over-optimizing for performance can increase costs. Velocity Global tackles this by using cost tagging, utilization dashboards, and performance metrics to balance cost efficiency and performance.

Key methods include DataDog for performance visibility, cost tagging to track resource usage, and real-time dashboards for monitoring behavior and performance.
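
To show what cost tagging enables, here is a small sketch that rolls up cost records by a tag such as the owning team. The record layout and the `team` tag key are assumptions for illustration; a real billing export will have its own schema.

```python
from collections import defaultdict


def cost_by_tag(records, tag_key):
    """Sum cost per tag value; resources without the tag go to 'untagged'."""
    totals = defaultdict(float)
    for record in records:
        owner = record["tags"].get(tag_key, "untagged")
        totals[owner] += record["cost_usd"]
    return dict(totals)


# Hypothetical cost records, as they might appear in a billing export.
records = [
    {"resource": "fn-payroll", "cost_usd": 1.5, "tags": {"team": "payroll"}},
    {"resource": "fn-invoice", "cost_usd": 2.5, "tags": {"team": "payroll"}},
    {"resource": "fn-legacy", "cost_usd": 0.25, "tags": {}},
]
print(cost_by_tag(records, "team"))
```

Surfacing an explicit "untagged" bucket makes gaps in tagging visible instead of silently dropping that spend.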

Paved Roads: Streamlining Development with Pre-Built Infrastructure

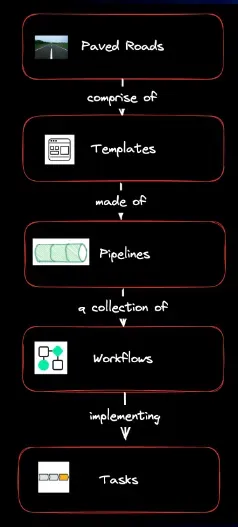

Velocity Global's implementation of the Paved Roads concept provides a streamlined development environment that removes much of the complexity of setting up and managing infrastructure. Inspired by Netflix, this framework allows developers to focus solely on solving business problems through code, as much of the scaffolding, monitoring, and testing environments are pre-built and maintained by the DevOps team.

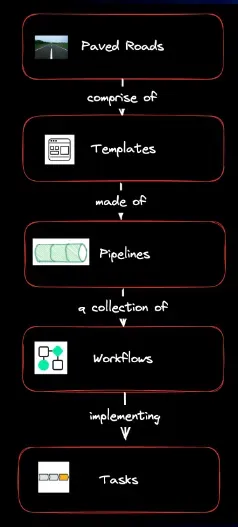

A closer look at Velocity Global’s Paved Road framework reveals how it simplifies development by providing pre-built infrastructure and a structured approach to building, testing, and deploying software.

Paved Roads as a Product: The Paved Road is presented as a "product" that takes care of all scaffolding, enabling engineers to concentrate on delivering business value. DevOps operates as the product owner, and the internal engineering teams act as the customers of this product.

One Approved Way: There is a single approved approach to building, testing, deploying, and monitoring software at Velocity Global, which supports specific programming languages (Node.js, TypeScript, Java, Python). If teams use any other technology, they are "off-road," meaning they must build and maintain their own scaffolding.

Shared Libraries and Components: Libraries for essential features like authentication and authorization (AuthN/AuthZ) are included in the Paved Roads framework, making it easier for developers to implement these crucial aspects of an application.

Pre-Built Templates and Pipelines: Developers are provided with templates and pipelines that allow for automated workflows and task implementation, streamlining the entire development process from infrastructure setup to deployment.

Key Metrics to Measure Effectiveness:

- Developer NPS (Net Promoter Score): Reflects how likely developers are to recommend the Paved Road approach.

- DORA Metrics: Measure the performance and success of software delivery processes.

- No DevOps/QA Involvement in Production Releases: An indicator of the level of automation and reliability, with minimal manual intervention needed during releases.
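
For readers who want to see how such metrics are typically computed, the sketch below uses the standard NPS definition (percentage of promoters scoring 9 or 10 minus percentage of detractors scoring 0 to 6) and two of the DORA metrics, deployment frequency and change failure rate. The input shapes are assumptions; the talk does not describe how Velocity Global collects or calculates these figures.

```python
def net_promoter_score(scores):
    """NPS on a 0-10 survey: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("no survey responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def change_failure_rate(deployments):
    """Share of deployments that led to a failure in production."""
    if not deployments:
        return 0.0
    failed = sum(1 for d in deployments if d["failed"])
    return failed / len(deployments)


def deployment_frequency(deployments, days):
    """Average number of deployments per day over the observed period."""
    if days <= 0:
        raise ValueError("days must be positive")
    return len(deployments) / days
```

Passives (scores of 7 or 8) count toward the total number of responses but toward neither group, so a flat score can hide a shift between passives and promoters.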

Here are the benefits of the Paved Road:

- Faster Setup: Developers can start writing code immediately, with much of the infrastructure already in place.

- Pre-Built Components: Common services like authentication and routing are pre-configured.

- Incentive to Stay On-Track: By limiting supported languages, the Paved Road encourages developers to use approved technologies, streamlining the development process.

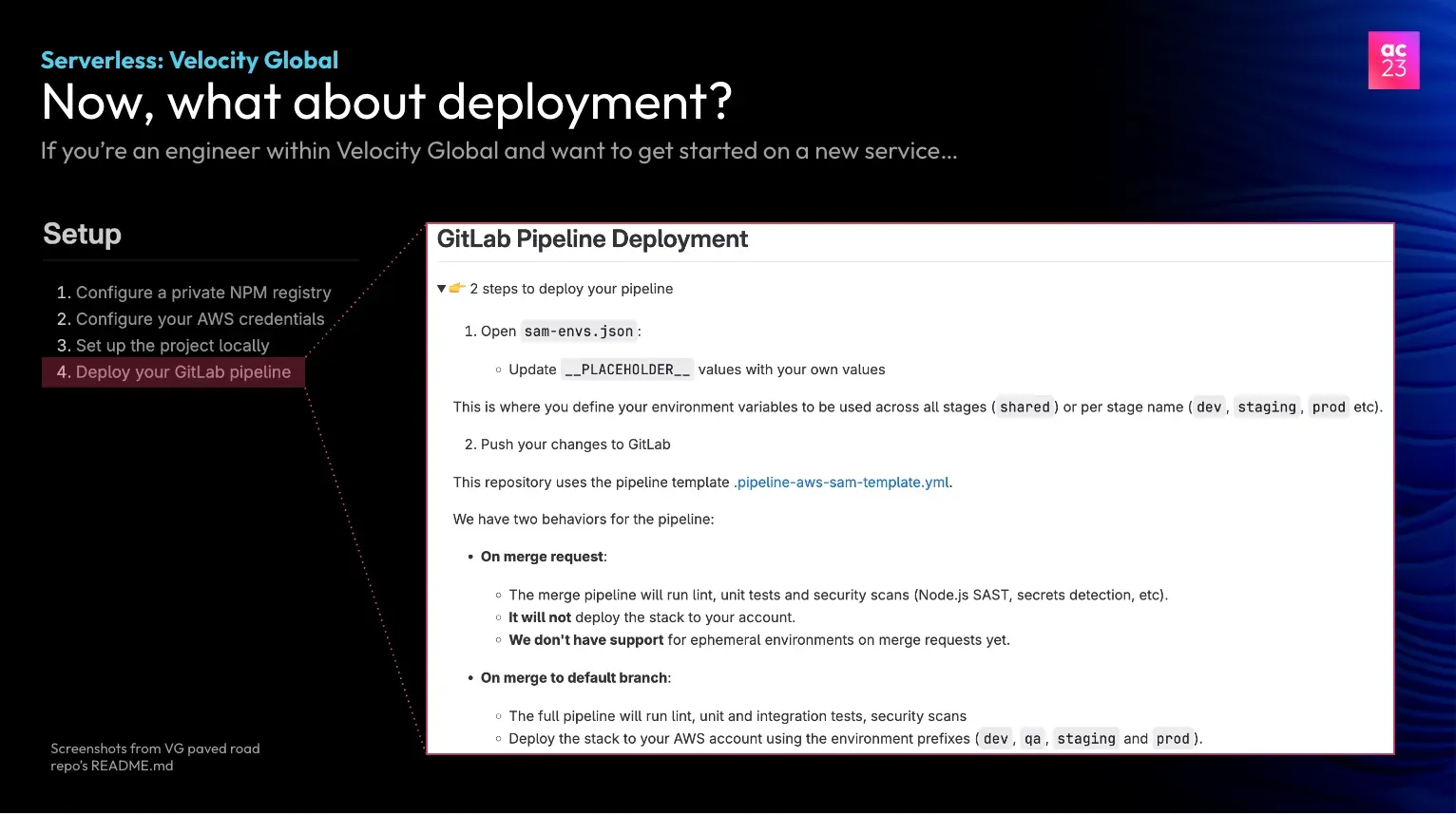

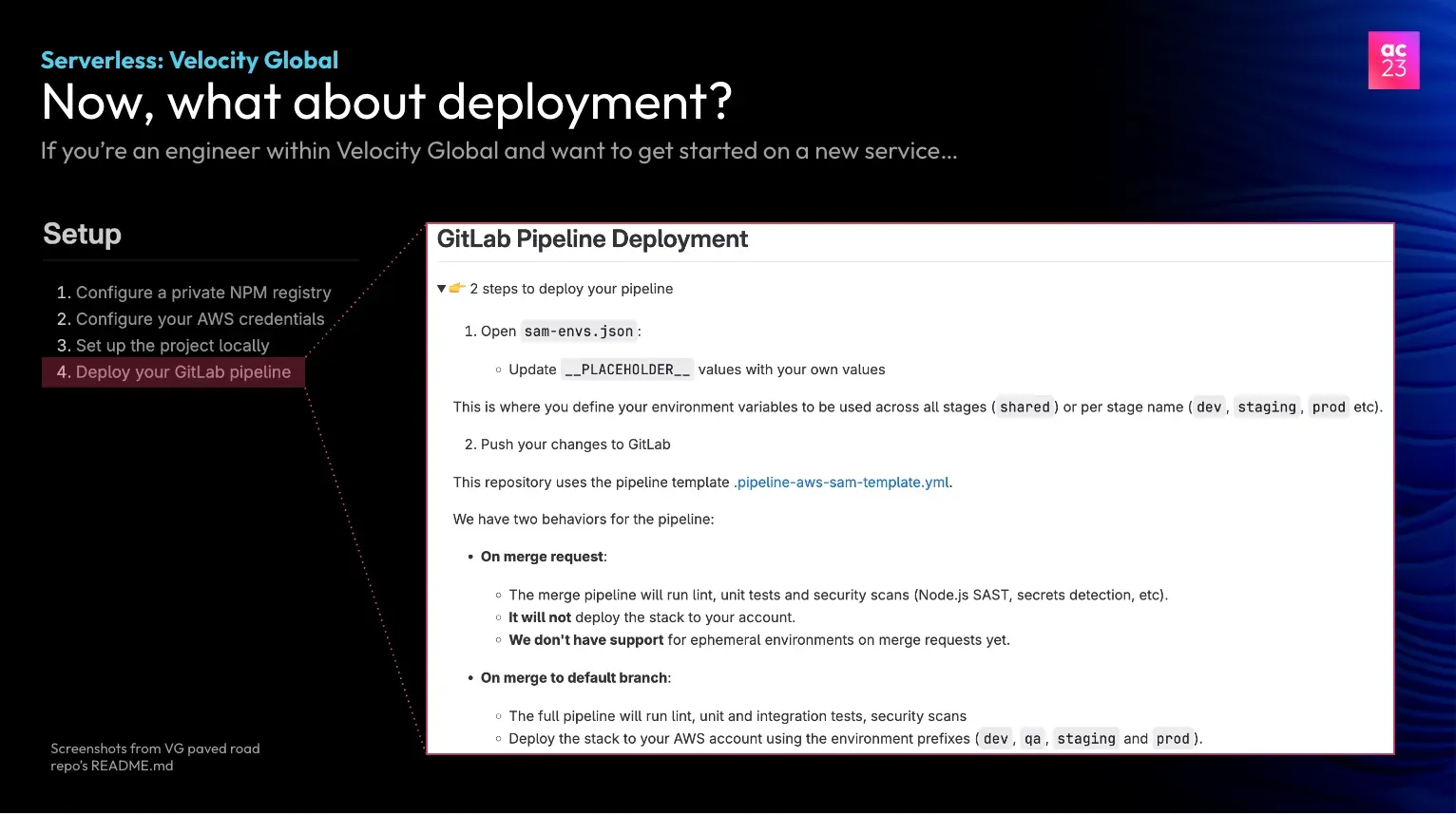

Deploying with CI/CD Pipelines

The deployment process at Velocity Global is fully integrated into their CI/CD pipeline, which automates the deployment workflow. The pipeline includes several key tasks, such as linting, unit testing, scans (both static and dynamic), and ultimately deployment. This ensures that only clean, thoroughly tested code is pushed to production.
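
The gating behavior described above can be sketched as an ordered list of stages that stops at the first failure, so deployment never runs on code that failed an earlier check. This is an illustrative model only: the stage names mirror the tasks listed above, and the pass/fail callables are hypothetical stand-ins for real tools.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> list[str]:
    """Run stages in order and stop at the first one that fails."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'passed' if ok else 'failed'}")
        if not ok:
            break  # later stages, including deploy, are skipped
    return log


deployed = []  # records whether the hypothetical deploy step ever ran


def deploy() -> bool:
    deployed.append("service")
    return True


stages = [
    ("lint", lambda: True),
    ("unit tests", lambda: False),  # simulated failure
    ("scans", lambda: True),
    ("deploy", deploy),
]
print(run_pipeline(stages))
```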

Once deployed, teams rely on DataDog to monitor the performance of their services. Each team sets up their own alarms and on-call rotations to ensure they can respond to any issues in real time. Cost tagging and performance dashboards further help teams optimize both cost and performance.

The ultimate goal for Velocity Global is to move towards hands-free optimization, where autonomous tools automatically manage provisioned concurrency and other performance concerns, reducing the cognitive load on developers.
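
As a rough idea of what such a tool has to estimate, concurrency can be approximated as average requests per second multiplied by average request duration in seconds. The sketch below applies that relationship and adds a headroom factor; the headroom value and function names are assumptions, and a real optimizer would also weigh traffic patterns and cost.

```python
import math


def required_concurrency(requests_per_second: float, avg_duration_seconds: float) -> float:
    """Estimate concurrent executions as arrival rate times duration."""
    return requests_per_second * avg_duration_seconds


def suggested_provisioned_concurrency(
    requests_per_second: float,
    avg_duration_seconds: float,
    headroom: float = 0.2,  # assumed buffer above the estimate
) -> int:
    """Round the estimate, plus headroom, up to a whole number of instances."""
    base = required_concurrency(requests_per_second, avg_duration_seconds)
    return math.ceil(base * (1 + headroom))
```

Provisioning above the estimate reduces cold starts but is billed whether or not it is used, which is the cost and performance balance discussed earlier.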

Summary

Velocity Global's adoption of a serverless-first architecture demonstrates the potential of this approach to dramatically simplify infrastructure management while enhancing scalability and cost-efficiency. By leveraging services like AWS Lambda, the company has created a development environment that allows their teams to focus on building business logic and delivering features, rather than managing underlying infrastructure.

Key takeaways from Velocity Global's approach include:

- The benefits of usage-based billing in optimizing costs for fluctuating workloads.

- The importance of abstracting infrastructure concerns to improve developer productivity and time-to-value.

- The challenges of serverless development, including cold starts and execution limits, and strategies to address them.

- The value of implementing a "Paved Roads" framework to standardize development processes and tools.

- The crucial role of robust monitoring and cost optimization in managing serverless environments effectively.

Velocity Global's implementation of the Paved Roads concept, coupled with their focus on CI/CD integration and performance monitoring, showcases a comprehensive approach to serverless development. This strategy not only streamlines the development process but also ensures consistency, reliability, and efficiency across their platform.

While serverless architecture presents its own set of challenges, particularly in areas like testing and deployment, Velocity Global's experience illustrates how these can be effectively managed through careful planning and the right tools. Their journey towards hands-free optimization points to an exciting future where autonomous tools further reduce the cognitive load on developers.

For organizations considering a shift to serverless architecture or looking to optimize their existing serverless environments, Velocity Global's approach offers valuable insights into balancing simplicity, scalability, and cost-effectiveness in modern cloud development.