Controlling cloud cost depends on how your workloads behave in production. Static sizing, conservative autoscaling, and leftover safety buffers quietly lock in excess capacity across AWS, Azure, Google Cloud, and Kubernetes. As traffic patterns shift daily, manual reviews fall behind, and cost drift compounds. AI tools address this by learning real workload behavior, validating changes against latency and error signals, and correcting inefficiencies continuously. When applied with clear guardrails and performance-first validation, these tools help you reduce cloud spend safely while keeping reliability and scaling behavior intact.

Cloud bills rarely increase due to pricing changes. They grow when capacity added to protect uptime stays in place long after the risk has passed. Static instance sizes, conservative autoscaling limits, and forgotten safety buffers across AWS, Azure, Google Cloud, and Kubernetes quietly turn into persistent waste.

Industry data shows that idle or underutilized resources account for roughly 28–35% of cloud spend, largely due to over-provisioning. Workloads change daily, but cost controls lag behind, so overspend becomes visible only after it is already embedded in production.

This is where AI tools for cloud cost optimization matter. By continuously observing real workload behavior and validating changes against performance signals, they correct cost drift safely.

In this blog, you will explore 18 AI tools for cloud cost optimization and 7 practical strategies to reduce spend without sacrificing reliability.

What Drives Cloud Cost and Why It’s Hard to Control?

Cloud cost is influenced less by pricing alone and more by how engineering teams design, scale, and safeguard systems under uncertainty. In practice, most cloud spend results from decisions made to prevent outages rather than to maximize efficiency.

The challenge lies in the fact that cloud environments change continuously, while cost controls often remain static.

What Actually Drives Cloud Cost in Production:

- Static sizing in dynamic environments: EC2 instance types, Kubernetes requests and limits, and managed services are usually configured once and rarely revisited, even as workload patterns fluctuate weekly.

- Permanent safety buffers: Capacity provisioned for peak traffic, product launches, or incident response is seldom removed afterward. Engineers naturally prioritize survival over post-event rollback.

- Conservative autoscaling configurations: Scale-up reacts promptly, but scale-down is often delayed, capped, or disabled to avoid latency regressions. This cautious approach quietly locks in excess capacity.

- Idle resources without clear ownership: Unused EC2 instances, overprovisioned node groups, abandoned development clusters, and oversized databases linger because no team wants to assume the risk.

- Fragmented optimization across clouds: AWS, Azure, and GCP environments are typically managed independently. While local optimizations occur, global cost behavior often remains invisible.

- Weak correlation between cost and workload behavior: Billing data reports spend. Utilization metrics indicate activity, but not whether scaling down or resizing is safe.

Why Cloud Cost Is Hard to Control:

Controlling cloud cost is difficult because feedback loops are delayed, indirect, and disconnected from the signals engineers rely on. Unlike latency or error rates, cost rarely generates urgency until it has already compounded.

- Delayed cost signals: Billing information lags behind actual usage, making it difficult to link spend changes to traffic shifts, feature releases, or configuration updates.

- Metrics don’t tell the full story: High utilization doesn’t automatically indicate a workload is right-sized. Similarly, low utilization doesn’t guarantee it is safe to reduce resources.

- No clear rollback path: Engineers hesitate to implement aggressive cost optimizations because the potential impact is unclear and reversibility is uncertain.

- Manual optimization can’t keep pace: Reviews typically occur monthly or quarterly, while workloads drift daily due to traffic fluctuations, feature launches, and data growth.

- Reliability incentives dominate decision-making: Engineering teams are accountable for uptime and latency. When forced to choose, operational safety always takes precedence.

At scale, cloud cost becomes a control problem. Without continuous, behavior-aware feedback that is closely tied to performance outcomes, spending will drift upward, even in disciplined engineering organizations.

Once you break down the factors driving cloud spend, the limitations of managing those costs manually start to become obvious.

Suggested Read: Top 14 Cloud Cost Optimization Tools in 2026

Challenges of Manual Cloud Cost Optimization & Its Solutions

Manual cloud cost optimization struggles as systems scale and workloads grow more dynamic. The challenge is that humans and static processes simply can’t keep up with continuous change.

Below are the challenges of manual cloud cost optimization and its solutions.

These challenges show why manual approaches struggle to keep up as cloud environments grow in scale and complexity.

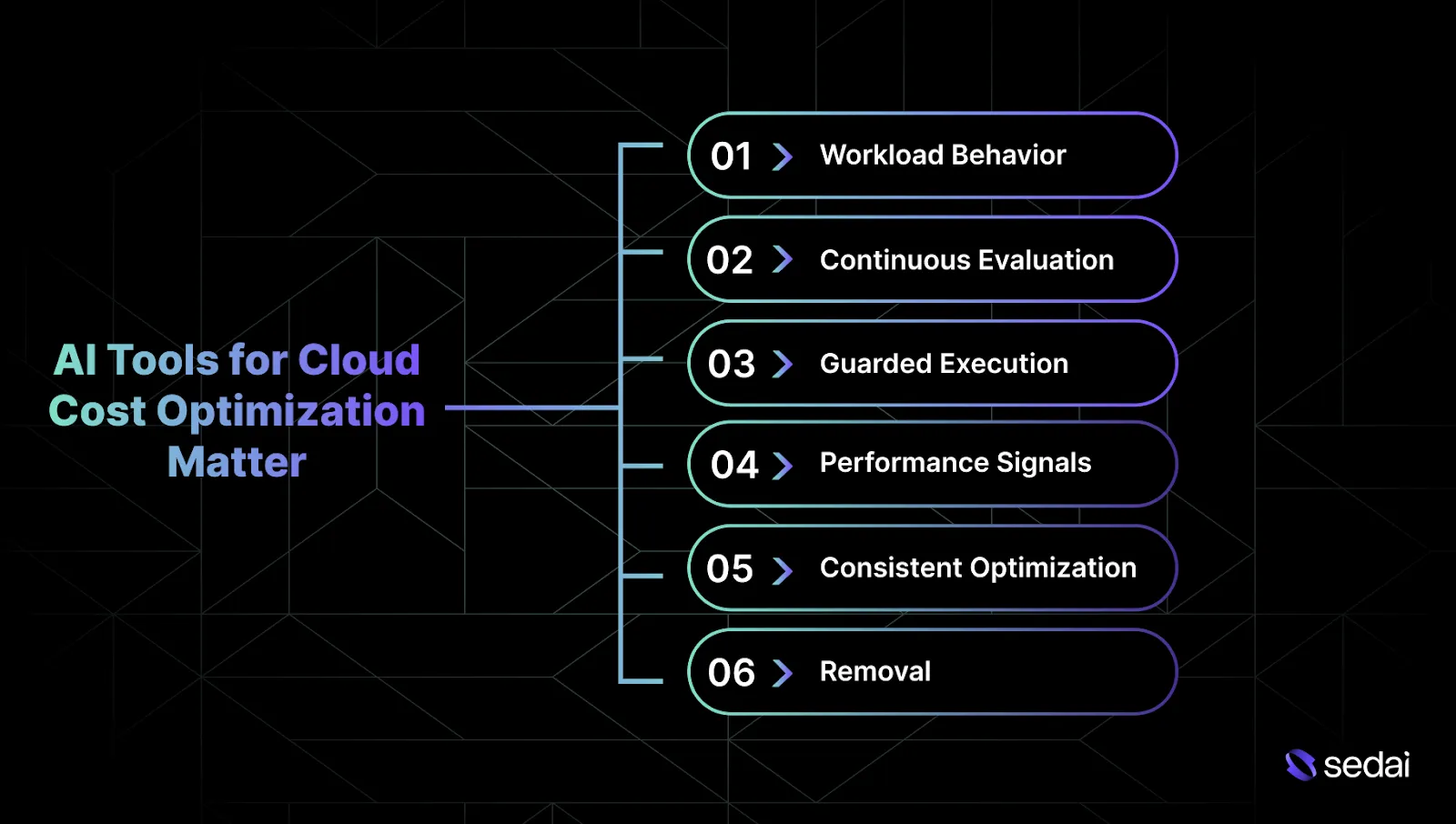

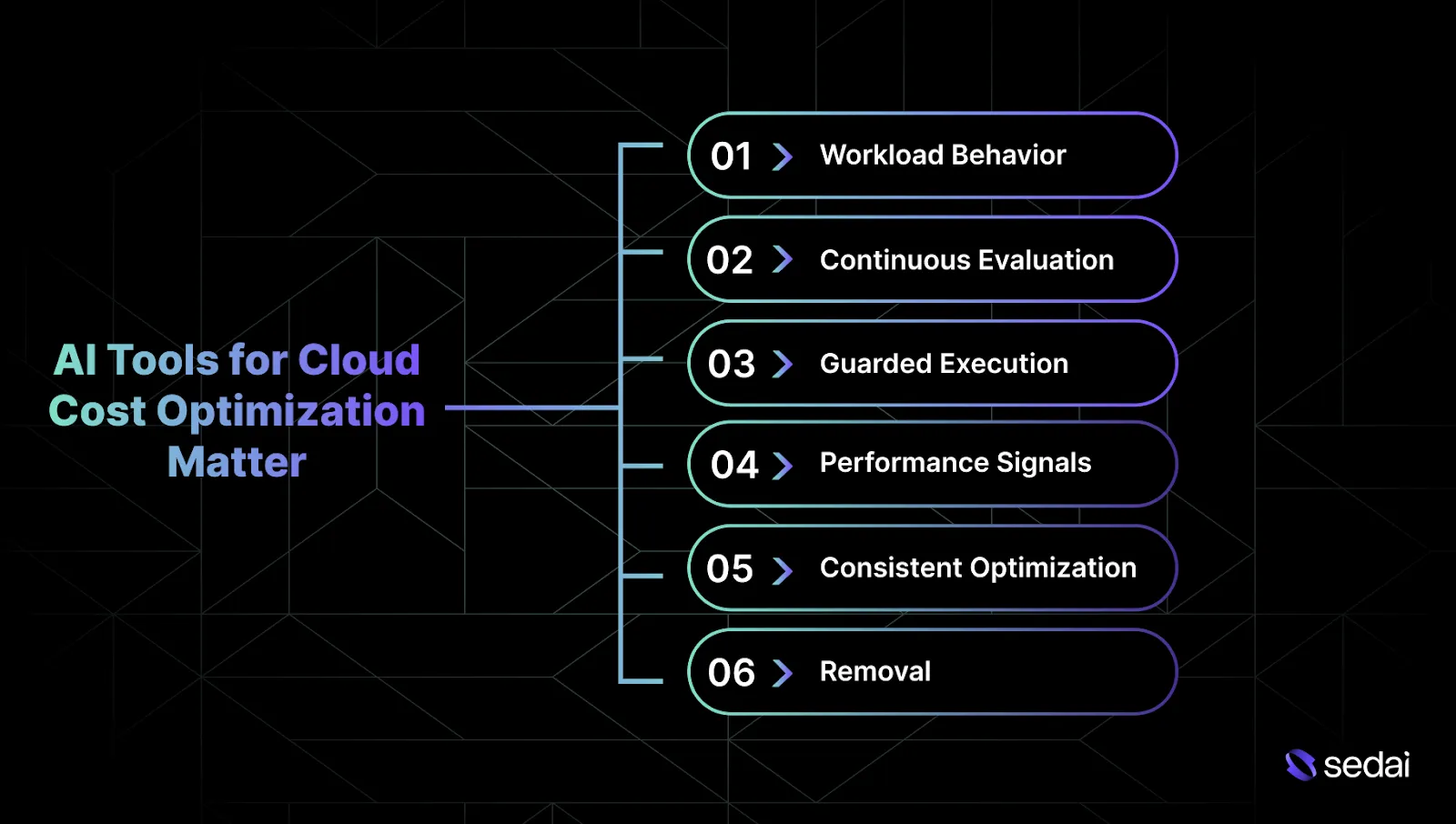

Why Do AI Tools for Cloud Cost Optimization Matter?

Cloud spend is shaped by constantly changing workload behavior. As traffic patterns, data volumes, and usage shift day to day, manual reviews and fixed rules can’t respond quickly or safely enough to prevent cost drift.

Here’s why AI tools for cloud cost optimization matter:

1. Workload Behavior as the Basis for Cost Decisions

Most cost tools rely on utilization snapshots taken at fixed points in time. AI systems instead observe how a workload behaves across real traffic cycles, including sustained peaks, quiet periods, and failure conditions. This shifts capacity reduction from guesswork to a measured, data-backed decision.

2. Continuous Evaluation of Cost Drift

Manual cost reviews run on a cadence that rarely matches how workloads actually change. AI-driven systems reassess resource usage continuously, detecting inefficiencies as traffic patterns, data volume, and usage evolve in real time.

3. Guarded Execution for Cost Changes

Engineers are understandably cautious about cost adjustments when rollback paths are unclear. AI tools apply changes incrementally, validate impact using live performance signals, and automatically revert when latency or error rates move outside expected thresholds.

4. Performance Signals as the Safety Check

Cost tooling often operates separately from observability systems. AI-based optimization uses latency, error rates, and saturation metrics as safety signals, relying on the same indicators engineers already trust in production.

5. Consistent Optimization Across Environments

Multi-account AWS setups, Kubernetes clusters, and hybrid Azure or GCP environments introduce significant coordination overhead. AI tools apply consistent optimization logic across services and teams without requiring manual alignment.

6. Removal of Repetitive Optimization Tasks

Rightsizing and tuning are continuous maintenance tasks in active environments. AI-driven platforms like Sedai take on these repetitive adjustments automatically, freeing senior engineers to focus on architecture, reliability, and long-term system design.

Once the value of AI-driven cost optimization is clear, the focus naturally turns to the capabilities that make it effective.

Key Features of AI Tools for Cloud Cost Optimization

AI tools for cloud cost optimization only matter when their behavior reflects production reality. You should care about how decisions are made, how risk is contained, and how changes are validated under real traffic.

Below are the key features of AI tools for cloud cost optimization.

1. Workload Behavior Modeling Over Time

Effective cost optimization tools base decisions on how workloads behave across real traffic cycles. This includes sustained peak demand, extended low-traffic periods, and failure conditions observed in production. Rightsizing decisions are grounded in proven behavior under load.

2. Decision Logic Informed by System Response

Safe cost reduction depends on understanding how changes in capacity affect system behavior. Mature tools evaluate utilization alongside latency, saturation, and error signals to determine whether existing headroom is protecting performance or simply sitting unused.

3. Guardrails Enforced at Execution Time

Guardrails only provide real safety when they actively prevent unsafe actions. Reliable tools refuse to start a change when performance signals are already outside their limits, and automatically halt or reverse an in-flight change when those limits are crossed.

4. Incremental Changes With Live Validation

Large configuration changes increase blast radius and risk. Mature systems apply adjustments incrementally and validate each step against live traffic before proceeding further.

5. Kubernetes-Aware Optimization at the Scheduler Layer

In Kubernetes environments, cost behavior is shaped by scheduling mechanics. Effective tools account for how requests, limits, node capacity, autoscaling, and bin-packing interact under real load.

6. Consistent Optimization Logic Across Cloud Providers

Cost inefficiencies often shift across environments rather than disappearing. Reliable tools apply the same decision logic across EC2, managed Kubernetes, serverless services, and managed databases in AWS, Azure, and Google Cloud.

7. Explicit Control Over Autonomy and Execution Scope

Engineers need clear control over how and when decisions are applied. Mature tools support observation, recommendation, and execution paths, with full transparency into each action taken.

8. Low Operational and Cognitive Overhead

Cost optimization tools should operate quietly in the background. Systems that require constant tuning or frequent review introduce a new operational burden instead of reducing it.

Once you understand the key features that drive effective cloud cost optimization, it becomes easier to compare the AI tools that put those capabilities into practice.

Also Read: Strategies to Improve Cloud Efficiency and Optimize Resource Allocation

18 Best AI Tools for Cloud Cost Optimization in 2026

AI tools for cloud cost optimization become necessary when cost behavior cannot be explained through dashboards alone. These tools help you analyze workload behavior, enforce safety boundaries, and correct cost drift continuously without destabilizing production systems.

1. Sedai

Sedai is an AI-driven cloud optimization platform built to reduce cloud cost while preserving application performance and reliability across AWS, Azure, Google Cloud, and Kubernetes environments.

Sedai functions as a behavior-aware optimization layer. It uses machine learning to understand how applications behave in real production conditions, evaluates cost and performance tradeoffs, and applies autonomous actions only within explicitly configured safety guardrails.

Key Features

- Behavior-based resource rightsizing: Learns actual workload usage patterns and recommends or applies compute and memory adjustments, avoiding static sizing assumptions.

- ML-informed scaling optimization: Uses historical and live signals to improve scaling behavior, reducing over-provisioning while protecting service objectives.

- Guardrail-driven autonomous actions: Executes optimization changes only when confidence thresholds and safety policies are satisfied.

- Cost-aware optimization decisions: Accounts for pricing models and workload characteristics without hard-coding tradeoffs into the architecture.

- Continuous performance validation: Monitors latency, error rates, and utilization to ensure cost optimizations do not degrade reliability.

- Kubernetes and cloud-native support: Optimizes containerized workloads and cloud resources based on supported services and configurations.

- Adaptive optimization models: Updates learning models as workloads, traffic patterns, and deployment characteristics evolve over time.

How Sedai Delivers Value:

Best For: Engineers and platform teams operating cloud-native or Kubernetes-based environments who want AI-driven cost optimization that respects performance constraints and preserves architectural control.

2. AWS Cost Explorer

AWS Cost Explorer gives engineering teams deep insight into historical AWS spend and helps forecast future usage. It’s a foundation for senior engineers to evaluate how architectural and scaling choices affect costs. AWS Cost Explorer informs planning and commitment decisions.

Key Features:

- Spend Breakdown: View costs by service, account, and usage patterns.

- Forecasting: Project future AWS spend based on historical trends.

- Commitment Analysis: Evaluate Reserved Instance and Savings Plan utilization.

- Architecture Reviews: Assess long-term cost impact of design choices.

Best For: Engineers running AWS workloads who need actionable cost visibility and forecasting to support architecture and capacity decisions.

3. Azure Cost Management

https://azure.microsoft.com/products/cost-management/

Azure Cost Management provides tracking, budgeting, and forecasting across Azure environments. It surfaces insights on SKUs, service usage, and scaling patterns, helping engineers align cost with architectural decisions. It doesn’t automatically change infrastructure.

Key Features:

- Cost Tracking: Monitor spend across subscriptions, resource groups, and services.

- Forecasting: Estimate future usage and costs from historical patterns.

- Budgets & Alerts: Detect deviations and prevent overspend.

- Optimization Suggestions: Integrates Azure Advisor recommendations.

Best For: Senior engineers managing Azure workloads who want clear governance and cost visibility tied to design and scaling choices.

4. Google Cloud Cost Management

Google Cloud Cost Management helps teams analyze usage-driven costs, forecast spending, and control budgets in GCP environments. It focuses on cost visibility and recommendations rather than automatic optimization.

Key Features:

- Usage Breakdown: Map spend directly to GCP service consumption.

- Cost Forecasting: Estimate future costs using historical data.

- Alerts & Budgets: Flag unexpected cost growth early.

- Idle Resource Detection: Highlight underutilized or idle resources.

Best For: Engineers on GCP who want to understand the cost impact of service choices and autoscaling behavior.

5. CloudZero

CloudZero connects cloud spend to engineering constructs like services, features, and products. Senior engineers can evaluate architecture efficiency, track unit economics, and detect anomalies without altering infrastructure.

Key Features:

- Cost Mapping: Connect spend to services, features, and products.

- Anomaly Detection: Identify unexpected changes automatically.

- Unit Economics: Calculate cost per customer, request, or feature.

- Real-Time Insights: Improve feedback loops after architecture changes.

Best For: Engineers who want to assess architecture efficiency and cost-effectiveness using actionable metrics rather than aggregate spend.

6. Finout

Finout provides precise cost allocation in shared and complex cloud environments. It helps senior engineers see how shared resources distribute costs across teams and workloads. Finout focuses on clarity; it doesn’t automate optimization.

Key Features:

- Accurate Allocation: Distribute shared infrastructure costs correctly.

- Complex Environments: Handle multi-account and multi-service setups.

- Dependency Visibility: Surface hidden cost dependencies.

- Normalized Data: Prepare clean inputs for internal analysis and reporting.

Best For: Platform and infrastructure teams who need accurate cost attribution to evaluate architectural dependencies and ownership.

7. Cast AI

Cast AI is a Kubernetes-focused cost-optimization platform that dynamically adjusts nodes, workloads, and resource allocation based on observed usage. Senior engineers benefit from automated cluster and workload efficiency without manual tuning.

Key Features:

- Node Optimization: Adjust node pools and instance types automatically.

- Workload Rightsizing: Dynamically tune CPU and memory requests.

- Cost-Performance Balance: Apply changes within safety constraints.

- Spot Usage: Use spot capacity intelligently to reduce costs.

Best For: Engineers operating large Kubernetes clusters who want autonomous, runtime cost optimization.

8. Spot

Spot helps engineering teams reduce cloud compute costs by orchestrating spot, reserved, and on-demand capacity safely. It keeps workloads available while minimizing compute spend.

Key Features:

- Spot Automation: Orchestrate spot instance usage across workloads.

- Compute Optimization: Adjust provisioning based on demand patterns.

- High Availability: Maintain uptime during spot interruptions.

- Multi-Cloud Support: Optimize compute across AWS, Azure, and GCP.

Best For: Teams with elastic compute workloads that can leverage spot-based savings without sacrificing reliability.

9. Turbonomic

Turbonomic models application demand and infrastructure supply, then executes optimization actions within policy guardrails. It helps senior engineers optimize cost and performance together.

Key Features:

- Demand Modeling: Understand resource needs across applications.

- Automated Actions: Adjust scaling and placement when enabled.

- Cost-Performance Optimization: Avoid savings that degrade reliability.

- Hybrid & Multi-Cloud: Support for on-prem and cloud setups.

Best For: Engineers managing complex applications who need controlled, automated resource optimization.

10. Anodot

Anodot applies machine learning to detect abnormal cloud cost or usage behavior. It alerts teams to unexpected patterns early, preventing small issues from becoming major cost overruns.

Key Features:

- Anomaly Detection: Identify unusual spend automatically.

- Behavior Correlation: Link cost spikes to operational signals.

- Noise Reduction: Focus on statistically significant deviations.

- Early Alerts: Investigate before costs escalate.

Best For: Teams seeking early warning signals for abnormal cloud spend without active optimization.

11. Ternary

Ternary centralizes multi-cloud cost data and provides visibility, forecasting, and accountability. Senior engineers can map spend to teams and services, evaluate architecture impacts, and plan budgets. It delivers insight-driven optimization.

Key Features:

- Multi-Cloud Visibility: Centralize AWS, Azure, and GCP spend.

- Cost Ownership: Map costs to teams, services, and accounts.

- Forecasting & Budgets: Plan long-term usage and expenses.

- Architecture Cost Review: Evaluate the impact after design changes.

Best For: Engineers managing multi-cloud environments who need clarity to guide architecture and cost decisions.

12. CloudScore.ai

CloudScore.ai uses AI-assisted analytics to identify inefficiencies and optimization opportunities across cloud environments. It focuses on surfacing insights rather than executing changes.

Key Features:

- Inefficiency Detection: Spot underutilized or misconfigured resources.

- Trend Analysis: Track usage and cost evolution over time.

- Optimization Planning: Prioritize savings opportunities by impact.

- Governance Alignment: Designed for review-driven optimization.

Best For: Senior engineers who want AI-guided insights to inform manual optimization and architecture reviews.

13. CloudKeeper

CloudKeeper combines visibility, AI recommendations, and optional automation to reduce cloud waste. Its Tuner identifies optimization opportunities, while automation executes pre-approved actions when enabled.

Key Features:

- AI Recommendations: Identify rightsizing and cleanup opportunities.

- Controlled Automation: Execute selected actions within policy limits.

- Utilization Efficiency: Target idle and over-provisioned resources.

- AWS Integration: Designed to work seamlessly with native workflows.

Best For: Teams wanting a blend of recommendations and selective automation with human oversight.

14. CloudPilot AI

CloudPilot AI focuses on Kubernetes cost optimization for Amazon EKS clusters. It automates node selection, workload placement, and spot instance usage to improve efficiency.

Key Features:

- Node Provisioning: Automatically choose cost-efficient instance types.

- Workload Placement: Balance cost and availability across clusters.

- Spot Capacity: Reduce compute cost while managing interruptions.

- EKS Focus: Deep integration with AWS Kubernetes workloads.

Best For: Engineers operating EKS clusters seeking autonomous Kubernetes cost optimization.

15. Usage.ai

Usage.ai optimizes cloud purchasing and commitment strategies rather than runtime resources. It reduces financial risk while improving cloud savings.

Key Features:

- Commitment Optimization: Recommend Savings Plans and reservations.

- Risk Reduction: Use insured or risk-managed purchasing models.

- No Infra Changes: Operates entirely at the billing layer.

- Predictable Savings: Target baseline usage efficiency.

Best For: Engineers and FinOps teams managing cloud commitments who want low-risk savings strategies.

16. Xenonify.ai

Xenonify.ai provides AI-driven FinOps automation for multi-cloud environments. It surfaces inefficiencies, detects anomalies, and can optionally execute remediation actions.

Key Features:

- Anomaly Detection: Identify abnormal spend automatically.

- Cost Visibility: Reveal inefficiencies across services and accounts.

- Tagging & Allocation: Enforce governance standards.

- Optional Automation: Execute actions when configured.

Best For: Teams seeking AI-assisted FinOps with optional automation and execution controls.

17. Amnic

Amnic focuses on cloud cost observability and AI-assisted analytics. It highlights inefficiencies and improves understanding of cost drivers across cloud resources.

Key Features:

- Cost Observability: Break down spend by services and resources.

- Reporting Automation: Reduce manual FinOps overhead.

- Inefficiency Detection: Highlight waste and overspend patterns.

- Decision Support: Enable data-driven cost reviews.

Best For: Engineering and platform teams wanting AI-assisted cost observability and analytics.

18. nOps

nOps is AWS-centric, combining automation, analytics, and commitment optimization. It helps teams automatically reduce waste across EC2, EKS, autoscaling groups, and AWS commitments.

Key Features:

- Commitment Optimization: Manage Savings Plans and Reserved Instances.

- Compute Efficiency: Automate EKS and autoscaling optimizations.

- Waste Reduction: Identify unused and inefficient assets.

- FinOps Alignment: Bridge engineering and cost governance workflows.

Best For: Senior engineers running AWS environments who want automated savings with operational visibility.

Here’s a quick comparison table:

After reviewing the leading AI tools, it's helpful to examine the practical strategies teams use to maximize value from cloud cost optimization.

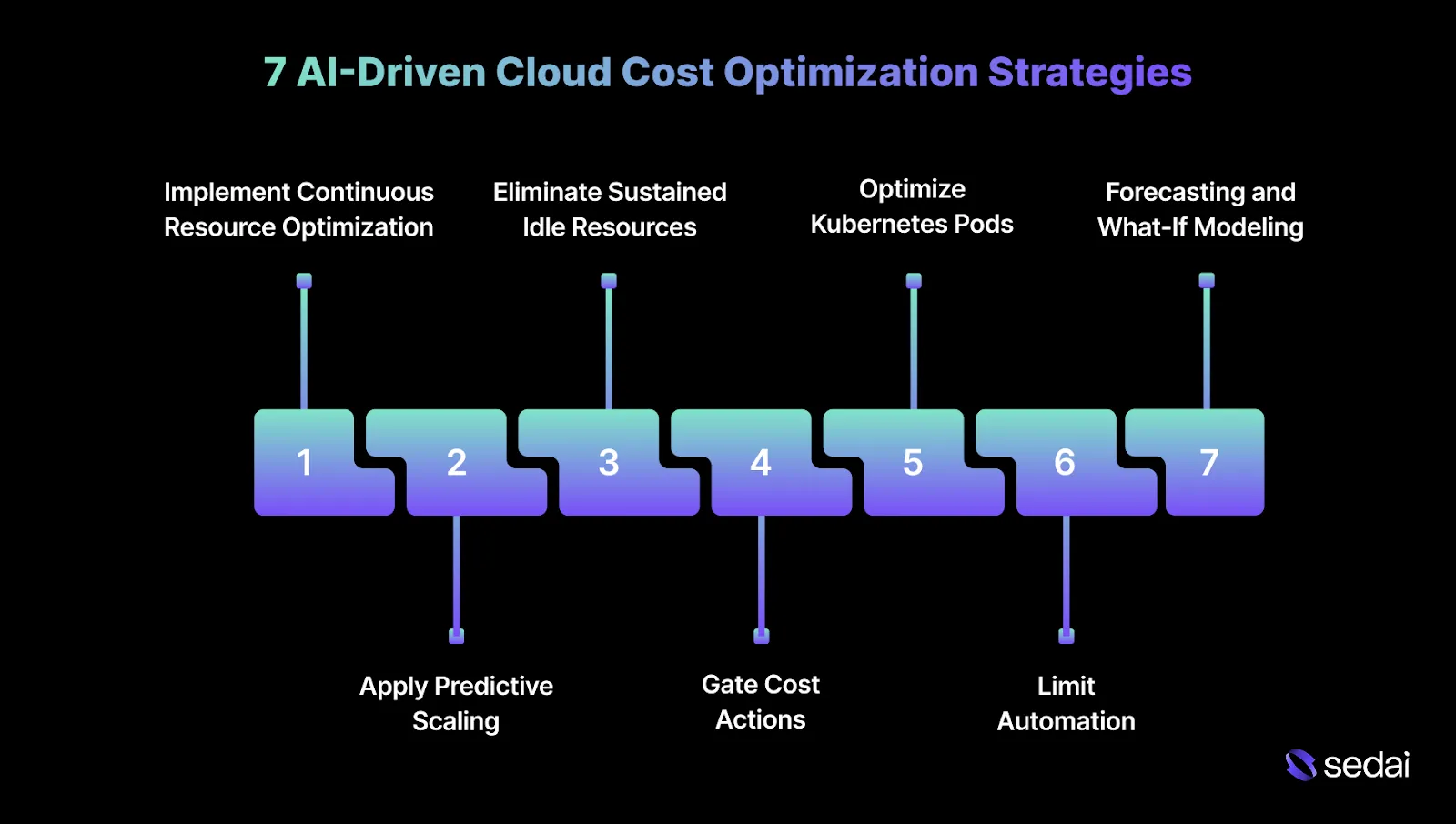

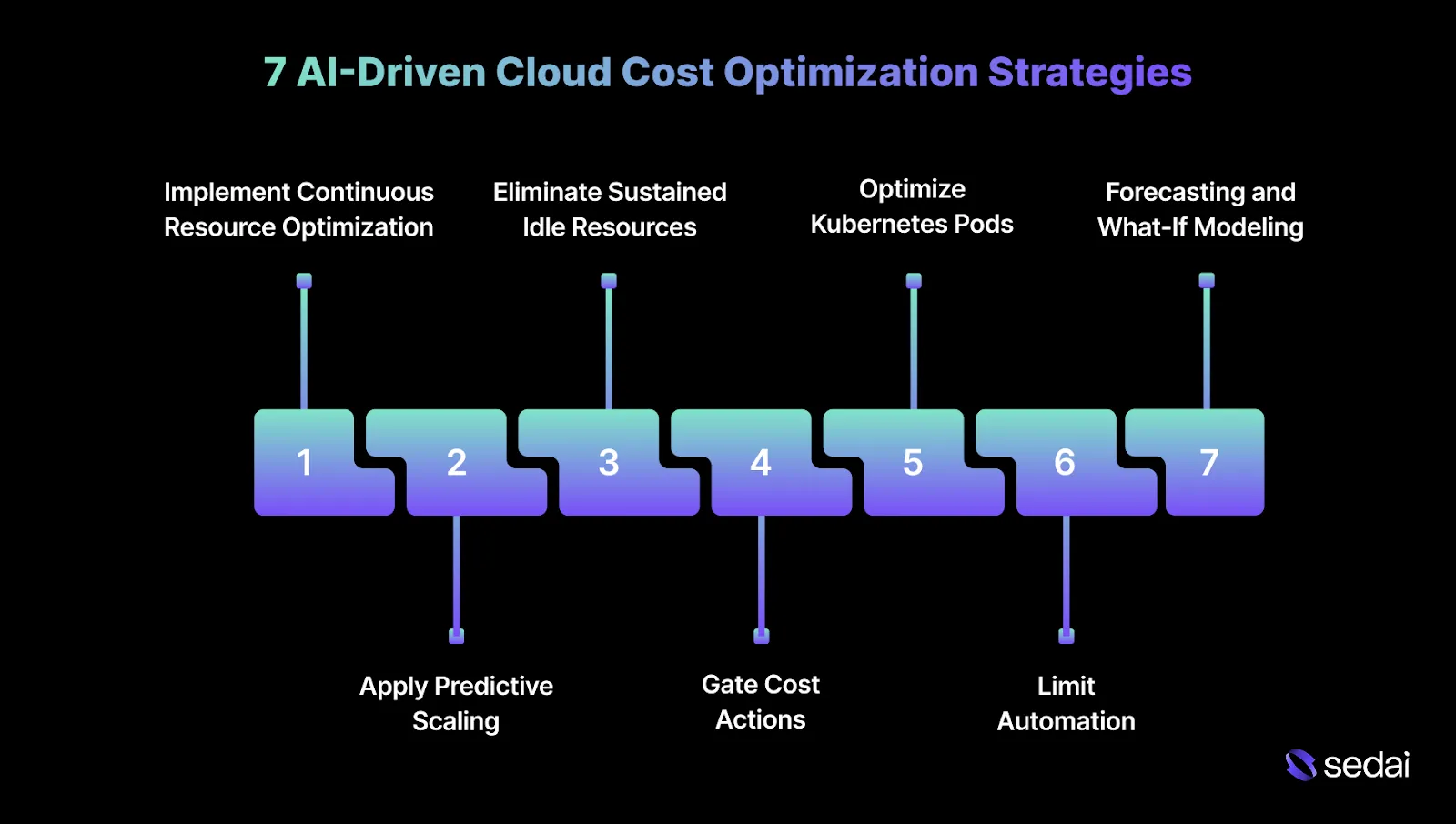

7 AI-Driven Cloud Cost Optimization Strategies

AI-driven strategies are effective when they operate as disciplined control loops alongside production systems. The focus is on continuously correcting cost drift while keeping reliability signals within known bounds.

Below are some effective AI-driven cloud cost optimization strategies.

1. Implement Continuous Resource Optimization

Continuous resource optimization prevents long-term cost drift caused by changing traffic patterns and evolving workloads. The objective is to correct inefficiencies as they emerge, not to rely on periodic cleanup efforts after waste has already accumulated.

This approach depends on automated mechanisms that adjust capacity based on observed demand rather than assumptions made during initial sizing.

How to implement:

- Identify sustained underutilization: Track CPU, memory, and I/O usage across multiple weeks to avoid reacting to short-lived dips or transient behavior.

- Automate gradual downsizing: Reduce capacity in controlled steps while monitoring latency and error rates after each adjustment.

- Validate against live traffic: Confirm that performance remains stable under real workload conditions before applying further reductions.

Tip: Treat every downsizing action as reversible. If rollback paths are not tested, the optimization is not production-ready.
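To make the steps above concrete, here is a minimal Python sketch of the two core checks: confirming that underutilization is sustained across several weeks, and planning a reduction in small steps. The function names, the 40% threshold, the three-week minimum, and the 20% step size are illustrative assumptions, not values taken from any specific tool.

```python
from statistics import quantiles


def p95(samples):
    """95th percentile of utilization samples (each between 0.0 and 1.0)."""
    return quantiles(samples, n=100)[94]


def is_sustained_underutilized(weekly_samples, threshold=0.40, min_weeks=3):
    """True only when every observed week stays below the threshold at p95.

    weekly_samples is a list of weeks, each a list of utilization samples.
    Requiring several full weeks avoids reacting to short-lived dips.
    """
    if len(weekly_samples) < min_weeks:
        return False
    return all(p95(week) < threshold for week in weekly_samples)


def downsizing_steps(current, target, max_step_fraction=0.20):
    """Plan reductions of at most max_step_fraction of capacity per step.

    Each step is a checkpoint: validate latency and error rates under live
    traffic before applying the next one, and keep the previous value so the
    change can be rolled back.
    """
    if target <= 0:
        raise ValueError("target capacity must be positive")
    steps = []
    while current > target:
        current = max(target, current * (1 - max_step_fraction))
        steps.append(round(current, 2))
    return steps
```

For example, `downsizing_steps(4.0, 2.0)` plans four reductions that end at 2.0 vCPU instead of halving capacity in a single change.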

2. Apply Predictive Scaling Instead of Reactive Scaling

Reactive autoscaling responds only after pressure builds, often resulting in delayed scale-ups and unnecessary buffer capacity. Predictive scaling prepares systems ahead of known demand patterns.

This approach relies on historical traffic behavior and seasonality rather than threshold breaches alone.

How to implement:

- Build traffic baselines: Analyze historical load to identify recurring peaks, troughs, and seasonal trends.

- Pre-scale for known demand: Add capacity ahead of predictable traffic increases instead of waiting for saturation signals.

- Restrict scale-down during volatility: Avoid aggressive scale-down actions when traffic variance is high or patterns are unstable.

Tip: Review predictive models at least quarterly, and sooner after product launches or pricing changes. Traffic patterns change faster than most teams expect.
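A simple way to picture this is an hour-of-week baseline. The Python sketch below is a deliberately simplified illustration, not how any particular autoscaler works: it groups historical request rates by hour of the week, sizes replicas ahead of the expected load, and blocks scale-down for hours whose history is volatile. The headroom factor, the variability cutoff, and all function names are assumptions made for the example.

```python
import math
from collections import defaultdict
from statistics import mean, pstdev


def build_baseline(history):
    """Group (hour_of_week, requests_per_second) pairs into per-hour stats.

    Returns {hour_of_week: (mean_rps, stdev_rps)}.
    """
    buckets = defaultdict(list)
    for hour, rps in history:
        buckets[hour].append(rps)
    return {hour: (mean(values), pstdev(values)) for hour, values in buckets.items()}


def planned_replicas(baseline, hour, rps_per_replica, headroom=1.2, min_replicas=2):
    """Replicas to have ready before the given hour begins (pre-scaling)."""
    avg_rps, _ = baseline[hour]
    return max(min_replicas, math.ceil(avg_rps * headroom / rps_per_replica))


def scale_down_allowed(baseline, hour, max_cv=0.3):
    """Allow scale-down only when the hour's history is stable.

    Uses the coefficient of variation (stdev / mean) as a rough volatility
    measure; a high value means the pattern is not trustworthy yet.
    """
    avg_rps, stdev_rps = baseline[hour]
    return avg_rps > 0 and (stdev_rps / avg_rps) <= max_cv
```

In practice the baseline would be rebuilt on a schedule so that it follows traffic changes rather than freezing an old pattern.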

3. Eliminate Sustained Idle Resources Automatically

Idle resources persist because they rarely trigger operational alerts. Automated detection helps surface and remove capacity that no longer serves an active workload.

How to implement:

- Confirm prolonged inactivity: Flag resources only after weeks of consistently near-zero usage.

- Exclude burst-driven workloads: Avoid cleanup for services that remain idle most of the time but experience sudden demand spikes.

- Enforce ownership checks: Verify resource ownership before removal to reduce the risk of unintended impact.

Tip: Idle cleanup should always be tag-aware. Resources without ownership tags are often the most expensive to investigate after deletion.
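The three checks above can be sketched as a single classification function. This Python example is a hypothetical illustration: the `Resource` shape, the `owner` tag key, the 2% idle threshold, and the 21-day window are all assumptions. Using the daily peak rather than the daily average is what keeps burst-driven services out of the cleanup list.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    daily_peak_cpu: list  # one peak utilization value per day, 0.0 to 1.0
    tags: dict = field(default_factory=dict)


def classify(resource, idle_threshold=0.02, min_days=21):
    """Return a cleanup decision for one resource.

    A resource is a removal candidate only if it has enough history, its
    daily peak stayed near zero for the whole window, and it has an owner
    who can confirm the removal.
    """
    if len(resource.daily_peak_cpu) < min_days:
        return "keep: not enough history"
    window = resource.daily_peak_cpu[-min_days:]
    if max(window) > idle_threshold:
        # Any spike in the window means the resource is active or burst-driven.
        return "keep: active or burst-driven"
    if not resource.tags.get("owner"):
        return "review: idle but no owner tag"
    return "candidate: notify owner before removal"
```

Untagged idle resources are routed to review rather than deleted, which reflects the tag-aware cleanup described in the tip.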

4. Gate Cost Actions Using Reliability Signals

Cost reduction should remain invisible to end users. Performance signals define whether an optimization action is safe to execute. Latency, error rates, and saturation metrics act as execution boundaries.

How to implement:

- Define acceptable performance ranges: Set clear thresholds for latency and error behavior under normal operating conditions.

- Block execution outside safe bounds: Pause optimization actions when signals drift beyond defined limits.

- Rollback automatically on regression: Reverse changes without manual intervention when degradation persists.

Tip: If reliability metrics are noisy or poorly defined, cost optimization should pause. Bad signals lead to bad automation decisions.
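As a rough illustration of these execution boundaries, the Python sketch below gates a change on current signals and triggers a rollback only when degradation persists across several consecutive samples. The threshold values and the names `Signals`, `Bounds`, `may_execute`, and `should_rollback` are assumptions for the example; real thresholds should come from your own service objectives.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signals:
    p99_latency_ms: float
    error_rate: float      # fraction of failed requests, 0.0 to 1.0
    cpu_saturation: float  # 0.0 to 1.0


@dataclass(frozen=True)
class Bounds:
    max_p99_latency_ms: float = 250.0
    max_error_rate: float = 0.01
    max_cpu_saturation: float = 0.80


def within_bounds(signals, bounds):
    """True when every signal is inside its acceptable range."""
    return (
        signals.p99_latency_ms <= bounds.max_p99_latency_ms
        and signals.error_rate <= bounds.max_error_rate
        and signals.cpu_saturation <= bounds.max_cpu_saturation
    )


def may_execute(current, bounds):
    """Block a new optimization action if the service is already unhealthy."""
    return within_bounds(current, bounds)


def should_rollback(post_change_samples, bounds, persist=3):
    """Roll back when the last `persist` samples are all out of bounds.

    Requiring persistence avoids reverting on a single noisy data point.
    """
    recent = post_change_samples[-persist:]
    return len(recent) == persist and not any(within_bounds(s, bounds) for s in recent)
```

A single slow sample after a change does not trigger a rollback here; three in a row does.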

5. Optimize Kubernetes Pods and Nodes Together

Kubernetes cost efficiency is shaped by scheduler behavior. Pod- and node-level optimization must be coordinated to avoid fragmented capacity. Isolated tuning at a single layer often shifts waste elsewhere in the system.

How to implement:

- Align pod requests with sustained usage: Base resource requests on observed demand rather than peak estimates.

- Improve bin-packing before scaling nodes down: Reduce fragmentation to safely free entire nodes.

- Coordinate with autoscaler behavior: Ensure pod-level changes do not conflict with cluster scaling decisions.

Tip: Always optimize pod requests before touching node counts. Node-level savings rarely hold when pod sizing remains inaccurate.
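The interaction between pod sizing and node count can be shown with a small model. The Python sketch below is a simplification under stated assumptions: it looks at CPU only, derives a request from the 95th percentile of observed usage plus a margin, and estimates node count with first-fit decreasing, a standard bin-packing heuristic that is not the actual Kubernetes scheduler algorithm. The function names and the 15% margin are hypothetical.

```python
import math
from statistics import quantiles


def recommended_request(usage_millicores, percentile=95, margin=1.15):
    """CPU request based on sustained usage (a percentile) plus a margin."""
    cut = quantiles(usage_millicores, n=100)[percentile - 1]
    return math.ceil(cut * margin)


def nodes_needed(pod_requests, node_capacity):
    """Estimate node count with first-fit decreasing bin-packing.

    Places the largest pods first and opens a new node only when a pod
    fits on none of the existing ones.
    """
    free = []  # remaining capacity per node
    for request in sorted(pod_requests, reverse=True):
        if request > node_capacity:
            raise ValueError("pod request exceeds node capacity")
        for i, remaining in enumerate(free):
            if request <= remaining:
                free[i] -= request
                break
        else:
            free.append(node_capacity - request)
    return len(free)
```

With four pods requesting 1500m each on 4000m nodes, the model needs two nodes; after rightsizing each pod to 900m, all four fit on one. The node-level saving only appears once the pod requests are corrected.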

6. Limit Automation to Repetitive Execution

Automation is most effective when applied to repeatable, low-risk tasks. Architectural decisions and boundary-setting should remain under manual control. This preserves engineering ownership while removing unnecessary operational overhead.

How to implement:

- Automate routine adjustments: Apply automation to frequent rightsizing and scaling actions.

- Define execution boundaries clearly: Specify what automation is allowed to change and what remains manual.

- Review outcomes periodically: Validate results rather than constantly monitor them.

Tip: When automation starts making architectural decisions, teams lose visibility and accountability. Keep strategy human-owned and execution machine-driven.
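One way to keep these boundaries explicit is to write them down as a policy that automation must consult before acting. The Python sketch below is a hypothetical illustration; the action names, the protected namespace, and the 25% change limit are assumptions, not settings from any product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutomationPolicy:
    automated_actions: frozenset     # action types automation may perform
    protected_namespaces: frozenset  # scopes that always stay manual
    max_change_fraction: float       # largest relative change per action


def decide(policy, action, namespace, change_fraction):
    """Return 'automate' or 'manual' for a proposed change."""
    if namespace in policy.protected_namespaces:
        return "manual"
    if action not in policy.automated_actions:
        return "manual"
    if abs(change_fraction) > policy.max_change_fraction:
        return "manual"
    return "automate"


# Example policy: routine rightsizing and replica scaling are automated,
# anything touching the payments namespace or changing capacity by more
# than 25% goes to a human.
policy = AutomationPolicy(
    automated_actions=frozenset({"rightsize", "scale_replicas"}),
    protected_namespaces=frozenset({"payments"}),
    max_change_fraction=0.25,
)
```

Anything the policy does not explicitly allow falls back to manual review, so the default is conservative.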

7. Forecasting and What-If Modeling

Forecasting enables your teams to identify how shifts in traffic, workload behavior, or architectural decisions will impact cloud spend over time.

What-if modeling builds on this by moving cost discussions from reactive explanations to proactive, data-backed planning.

How to implement it effectively:

- Use historical data to establish realistic baselines: Forecasts should be grounded in actual usage patterns, traffic growth, and workload behavior.

- Model scenarios based on concrete engineering changes: Inputs should reflect real events such as user growth, increased data retention, regional expansion, or service migrations.

- Use what-if outputs to guide commitment decisions: Evaluate Reserved Instances, Savings Plans, or capacity commitments using forecasted demand before making long-term commitments.

Tip: Forecasts should be treated as planning tools. Locking decisions too early based on projections often creates long-term cost rigidity.
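A small what-if model shows how forecasted demand can inform a commitment decision. The Python sketch below uses a simplified pricing model as an assumption: committed units are paid for every hour whether used or not, and demand above the commitment is billed at the on-demand rate. The rates, the demand profile, and the function names are made up for illustration and do not reflect any provider's actual pricing.

```python
import math


def total_cost(hourly_demand, committed_units, on_demand_rate, committed_rate):
    """Cost of serving the demand profile with a given commitment level."""
    cost = 0.0
    for demand in hourly_demand:
        overflow = max(0, demand - committed_units)
        cost += committed_units * committed_rate + overflow * on_demand_rate
    return cost


def best_commitment(hourly_demand, on_demand_rate, committed_rate):
    """Commitment level (in whole units) that minimizes total cost."""
    candidates = range(0, max(hourly_demand) + 1)
    return min(
        candidates,
        key=lambda units: total_cost(hourly_demand, units, on_demand_rate, committed_rate),
    )


def scale_demand(hourly_demand, growth_factor):
    """What-if input: the same profile under a traffic growth scenario."""
    return [math.ceil(d * growth_factor) for d in hourly_demand]


# Baseline day: 12 busy hours at 10 units, 12 quiet hours at 4 units.
baseline = [10] * 12 + [4] * 12
today = best_commitment(baseline, on_demand_rate=1.0, committed_rate=0.6)
if_doubled = best_commitment(scale_demand(baseline, 2.0), on_demand_rate=1.0, committed_rate=0.6)
```

Under these example rates the model commits only to the always-on baseline and leaves the peak on demand, which is the kind of outcome worth checking against several growth scenarios before locking in a long-term commitment.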

These strategies become far more practical when supported by tools that enable consistent application at scale.

Must Read: Cloud Cost Optimization 2026: Visibility to Automation

Final Thoughts

Cloud cost optimization works best when it is treated as an ongoing engineering discipline rather than an occasional cleanup task. As workloads evolve and environments span AWS, Azure, Google Cloud, and Kubernetes, static sizing and periodic manual reviews struggle to keep cost and performance aligned.

This is where autonomous optimization becomes necessary. By learning real workload behavior, validating every action against latency and error signals, and operating within strict guardrails, platforms like Sedai help engineering teams reduce cloud spend without introducing instability or operational risk.

The outcome is a cloud environment where costs remain predictable, performance stays protected, and engineers spend less time correcting inefficiencies.

Take control of your cloud costs now and start cutting waste without compromising how your systems run in production.

FAQs

Q1. How long does it take for AI cost-optimization tools to produce reliable results?

A1. Most AI cost optimization tools require an initial learning phase to observe real workload behavior in production. In practice, reliable recommendations typically emerge after several weeks of sustained traffic. This observation window allows the system to understand demand patterns, peak and idle periods, retry behavior, and failure modes before making safe decisions.

Q2. Can AI-driven cost optimization interfere with incident response or on-call workflows?

A2. It should not, when implemented correctly. Mature tools integrate with existing alerting and incident management systems, clearly indicating whether a change originated from automation or manual action. Engineers should always be able to pause automation during incidents and trace every action through detailed audit logs.

Q3. How do these tools handle low-traffic or batch workloads compared to high-traffic services?

A3. Low-traffic and batch workloads exhibit fundamentally different behavior than customer-facing services. AI tools typically use longer observation windows for these workloads to avoid reacting to noisy or infrequent signals. Engineers often need to explicitly exclude sporadic batch jobs or apply stricter guardrails to prevent premature or unsafe downsizing.

Q4. Do AI cost optimization tools work with stateful workloads such as databases?

A4. Some tools do, but under tighter constraints. Stateful systems require slower, more conservative optimization cycles due to durability, replication, and recovery considerations. Engineers should confirm that a tool understands storage I/O patterns, replication lag, and failover behavior before enabling execution on database workloads.

Q5. How do AI tools handle cost optimization during deployments and configuration changes?

A5. Well-designed systems detect deployment activity and treat these periods differently from steady-state operation. Optimization actions are typically paused or slowed during releases to avoid misinterpreting deployment-related performance changes as workload inefficiencies.