Introduction

Managing cloud infrastructure efficiently requires more than provisioning resources; it demands intelligent automation. For teams running Azure Kubernetes Service (AKS) clusters, keeping them active 24/7 can quickly lead to excessive costs, especially in non-production environments. While cloud scalability is a key advantage, idle clusters running outside of working hours add unnecessary expenses.

This is where automated scheduling for AKS shutdowns and startups becomes crucial. By strategically stopping and restarting clusters based on usage patterns, teams can optimize cloud spending while maintaining operational efficiency.

Importance of Scheduling Shutdowns and Startups for AKS Clusters

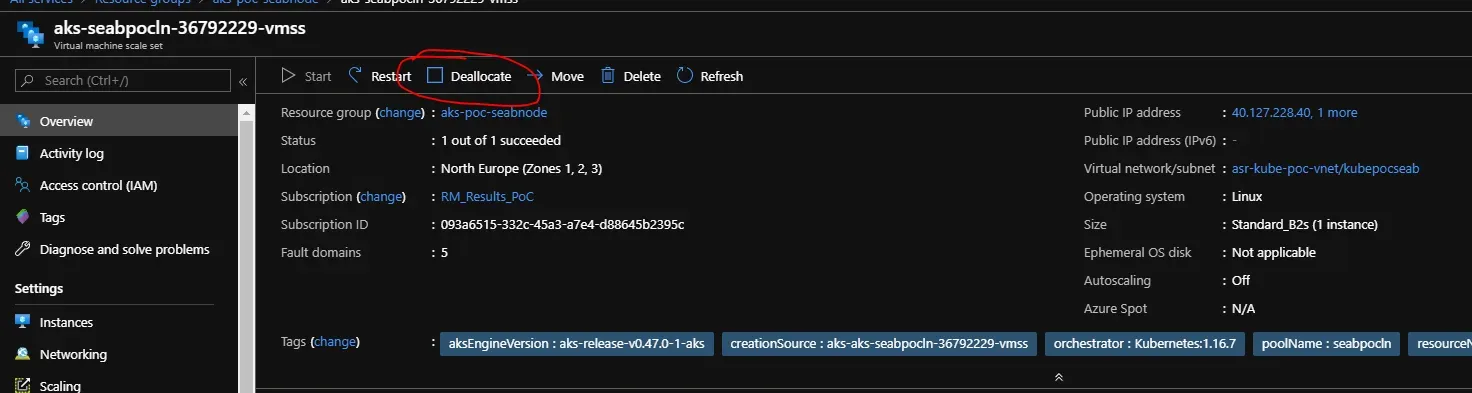

Source: https://github.com/Azure/AKS/issues/1577

Here are some reasons why scheduling shutdowns and startups matters for Azure Kubernetes Service clusters:

1. Cost-Saving for Development Environments During Non-Working Hours

Development clusters are often used only during business hours, but without proper scheduling, they continue to consume compute resources overnight and on weekends. By automating shutdowns, teams can reduce unnecessary expenses while ensuring resources are available when needed.

For a detailed breakdown of AKS pricing and cost factors, check out this guide on Understanding Azure Kubernetes Service (AKS) Pricing & Costs. By understanding pricing components, teams can make informed decisions when optimizing cluster usage.

2. Encourages Reinvestment of Savings Into Other Services

Optimizing AKS costs doesn't just reduce waste; it frees up budget for more critical cloud initiatives. Organizations can reallocate saved resources to enhance performance, deploy new workloads, or invest in AI-driven optimizations like Sedai, which deliver continuous improvements to cloud efficiency.

3. Need for Scheduled AKS Clusters

Manually starting and stopping clusters is inefficient and prone to human error. Automation ensures reliability, preventing costly oversights and aligning infrastructure with actual usage demands. A well-implemented schedule eliminates the need for engineers to intervene, allowing them to focus on higher-value tasks.

Traditional AKS cost-saving methods rely on static schedules or manual intervention. Sedai takes optimization further with autonomous scaling and intelligent workload management. Instead of pre-defining schedules, Sedai dynamically adjusts resource usage based on real-time demand, ensuring optimal cost efficiency without sacrificing availability.

Current Limitations and Solutions

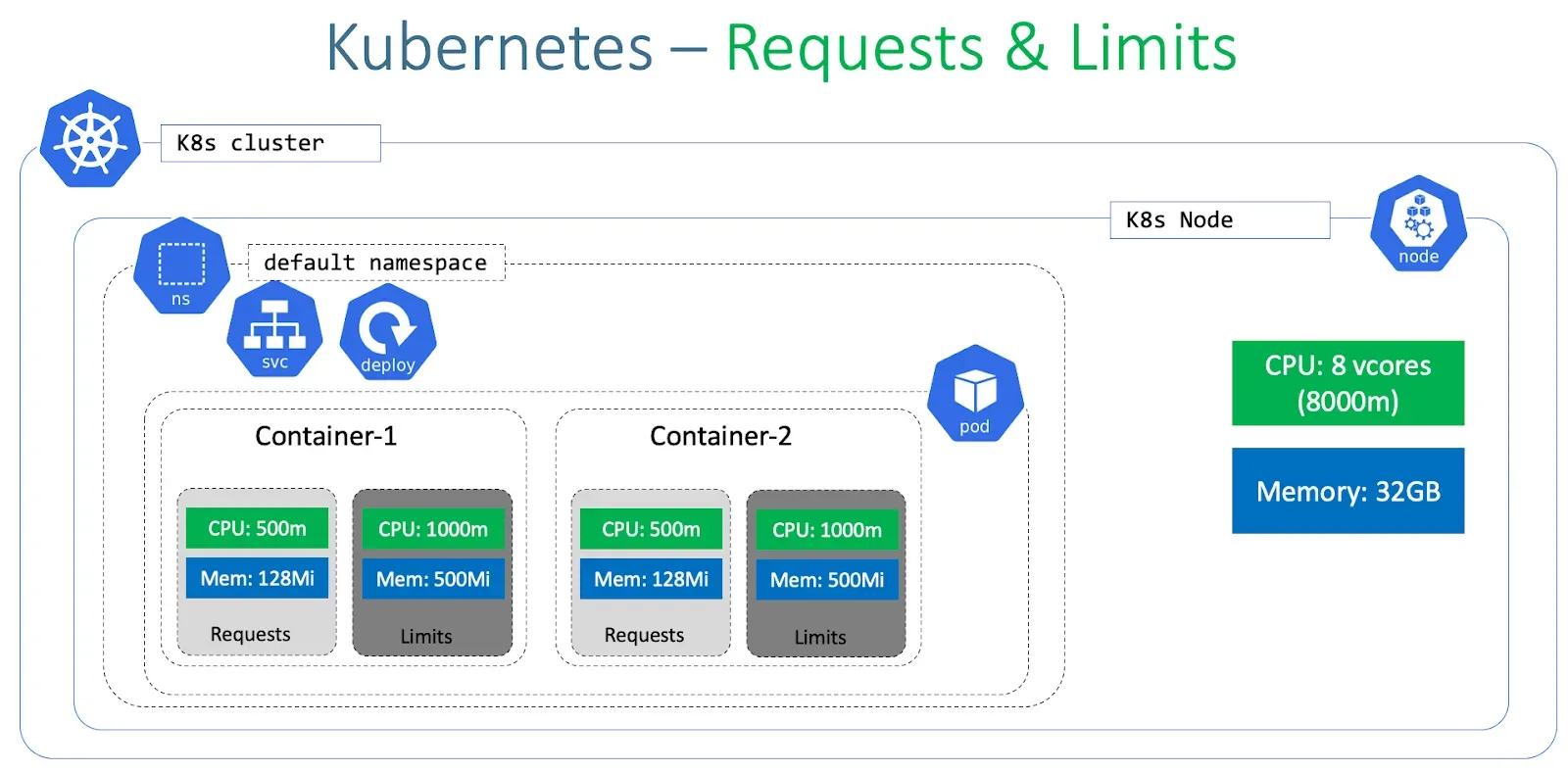

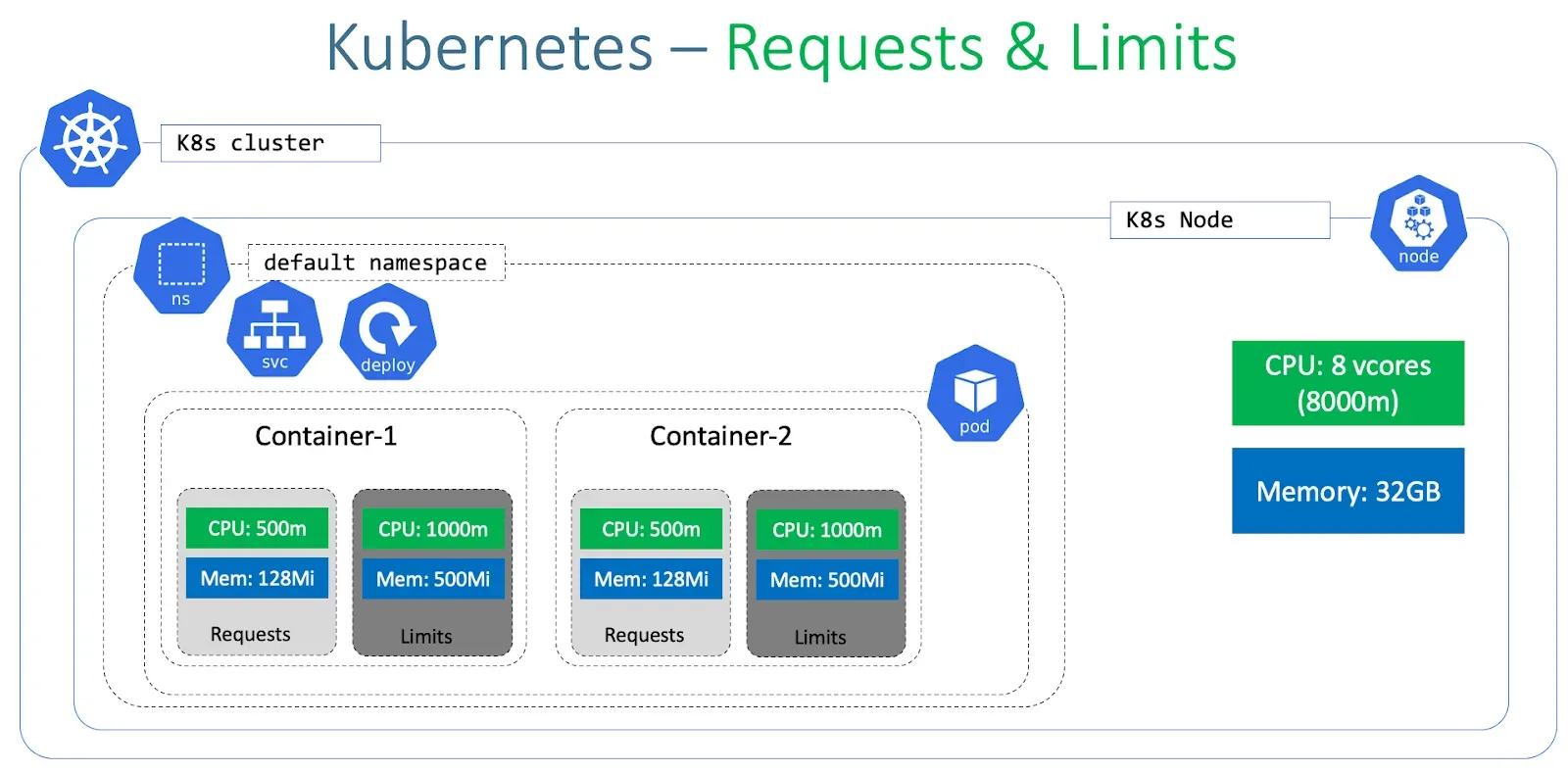

Source: Kubernetes - Requests and Limits

While Azure Kubernetes Service (AKS) provides scalability and flexibility, it lacks a built-in scheduling feature for stopping and starting clusters automatically. Without proper automation, cloud teams must manually intervene to optimize costs, which is inefficient and prone to oversight.

No Native Scheduling Feature in Azure Portal for AKS

Azure’s Kubernetes Service does not currently offer a built-in way to schedule automatic shutdowns and startups within the Azure portal. This means cloud engineers must either manually stop clusters when not in use or risk incurring unnecessary compute costs.

Without a native scheduling option, teams need external automation solutions to control AKS lifecycle management effectively.

Use of Azure CLI, REST APIs, or Logic Apps

To work around this limitation, teams commonly use the following automation approaches:

- Azure CLI & PowerShell Scripts – Engineers can execute scripts using az aks stop and az aks start commands to manage cluster states. These scripts can be scheduled using automation tools like Azure DevOps or cron jobs.

- REST APIs – The Azure Resource Manager (ARM) API allows programmatic control over AKS clusters, enabling teams to trigger stop/start actions via custom scripts or external applications.

- Azure Logic Apps & Automation Accounts – These services provide low-code automation workflows, allowing teams to schedule cluster operations based on predefined triggers, such as business hours.

- Azure Pipelines – Some teams use CI/CD pipelines to automate the process, ensuring development clusters run only when needed for deployments or testing.

Each of these methods helps bridge the gap left by the lack of native scheduling in the Azure portal. However, they still require manual configuration and maintenance.
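For the REST API route, the stop and start actions are POST requests against the cluster's ARM resource. The following is a minimal sketch of building that URL, not a definitive implementation: the function name, the subscription ID, the resource names, and the api-version value are all placeholders introduced here for illustration, so check which Microsoft.ContainerService API version applies to your environment.

```bash
#!/usr/bin/env bash
# Build the ARM URL for an AKS stop or start action.
# aks_action_url is a hypothetical helper; all argument values are placeholders.
aks_action_url() {
  # $1 subscription id, $2 resource group, $3 cluster name,
  # $4 action (start|stop), $5 api-version (assumption: supplied by the caller)
  case "$4" in
    start|stop) ;;
    *) echo "action must be start or stop" >&2; return 1 ;;
  esac
  printf 'https://management.azure.com/subscriptions/%s/resourceGroups/%s/providers/Microsoft.ContainerService/managedClusters/%s/%s?api-version=%s\n' \
    "$1" "$2" "$3" "$4" "$5"
}

# Example call, commented out so the file can be sourced safely:
# az rest --method post --url "$(aks_action_url "$SUB_ID" my-rg my-aks stop "$API_VERSION")"
```

Passing the URL to az rest lets the CLI attach the access token; a custom application would need to acquire a token for the management endpoint itself.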

Curious about how AKS compares to other managed Kubernetes services? This cost comparison of EKS, AKS, and GKE provides insights into pricing structures and optimization strategies across different cloud providers.

Advantages of Automating the Process

Implementing an automated AKS lifecycle management strategy provides several key benefits:

- Cost Optimization – Shutting down idle clusters prevents unnecessary compute expenses, ensuring cloud budgets are used efficiently.

- Operational Efficiency – Engineers no longer need to manually stop and start clusters, reducing administrative overhead.

- Error Prevention – Automated workflows eliminate the risk of forgetting to shut down clusters, preventing surprise cloud bills.

- Seamless Scaling – With automation in place, organizations can ensure clusters are only active when necessary, improving overall resource utilization.

Azure Automation Setup

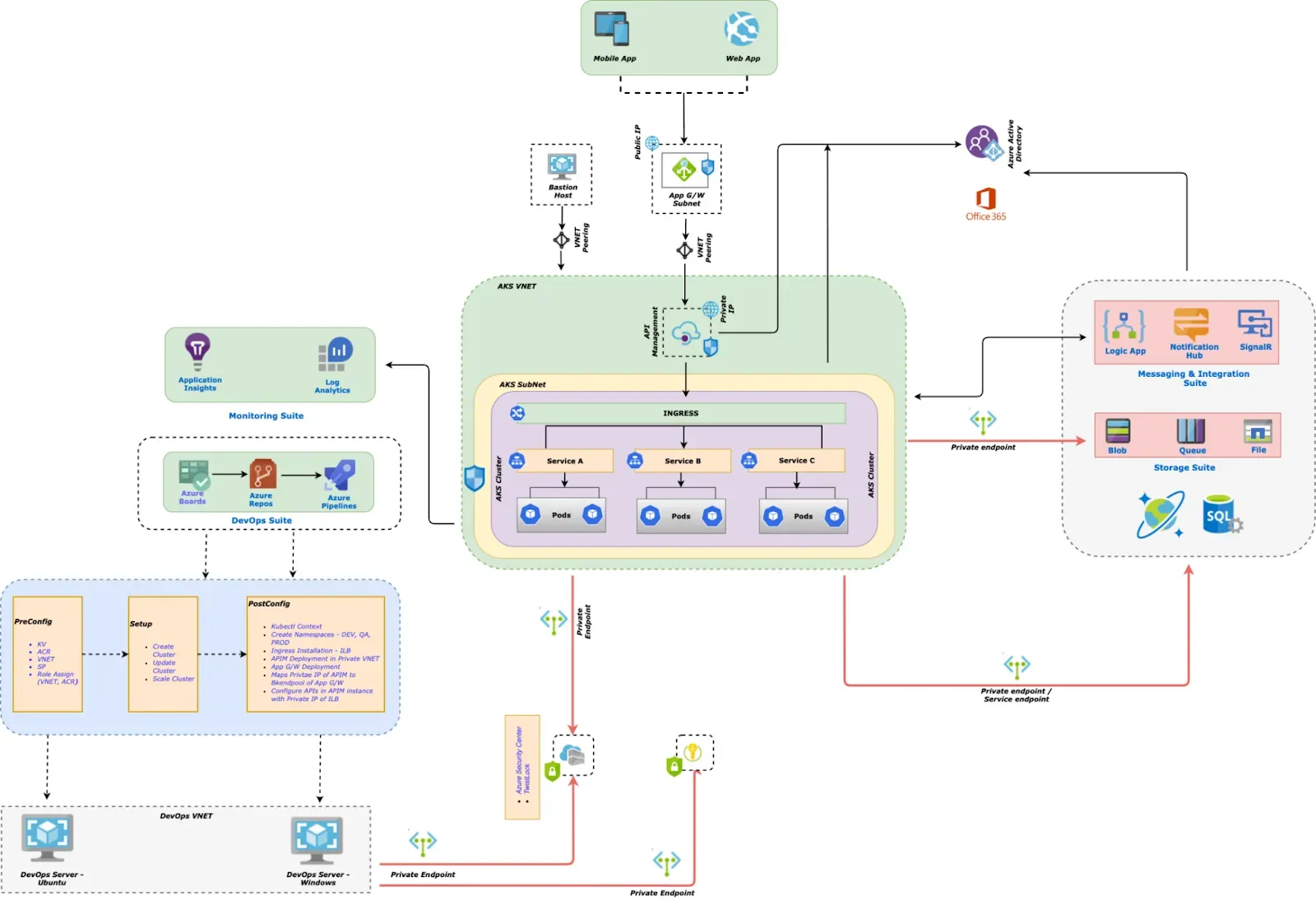

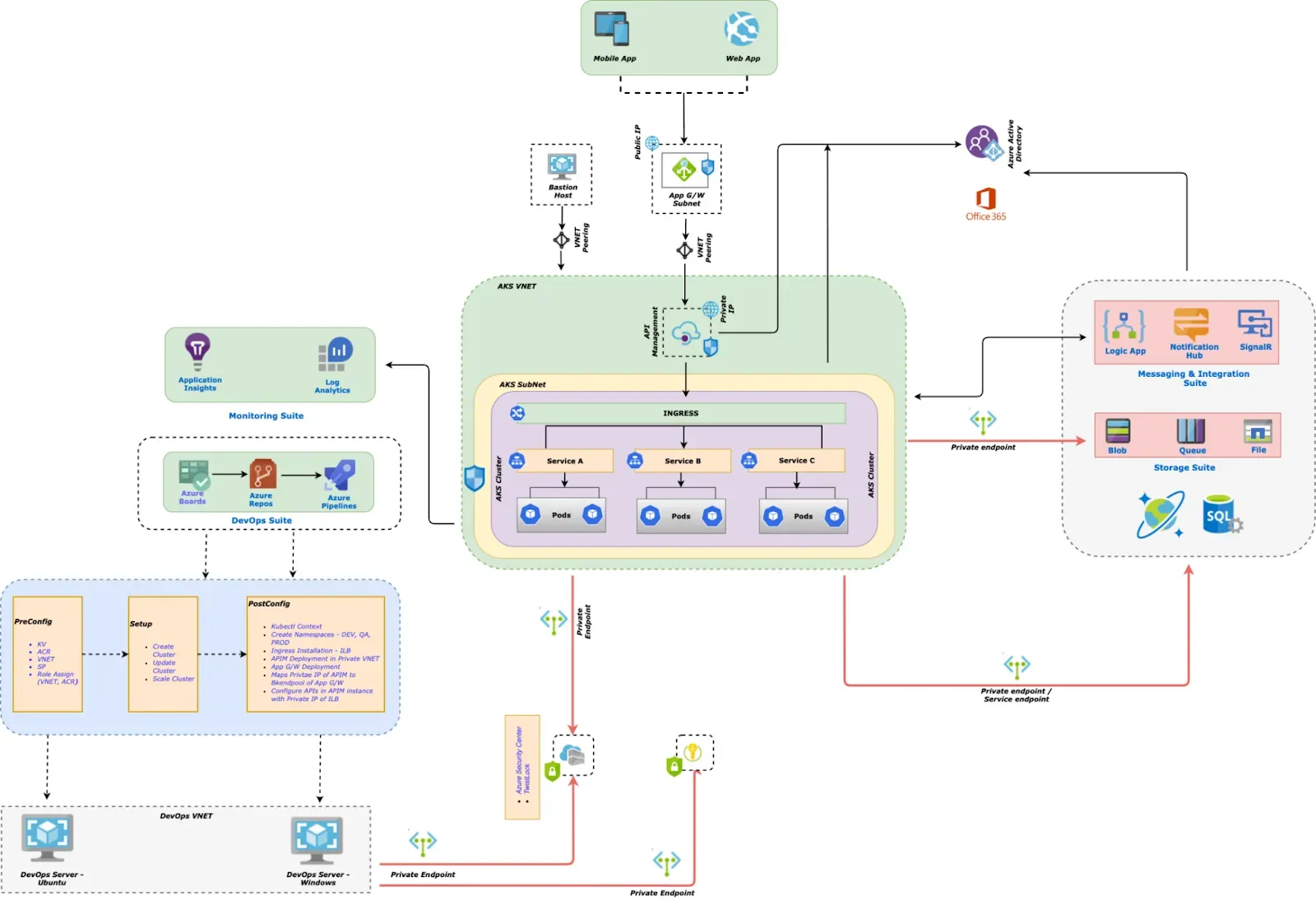

Source: Automating Kubernetes on Azure - AKS and DevOps - Part 1

Manually managing AKS clusters is inefficient and prone to human error. Automating shutdowns and startups ensures cost efficiency and operational reliability. Azure Automation provides a robust solution for scheduling and executing these tasks without manual intervention.

Importance of Having an Azure Automation Account and Assigning Permissions

An Azure Automation Account acts as the foundation for managing AKS lifecycle automation. It enables teams to schedule and execute PowerShell or Python-based runbooks that handle AKS start/stop operations.

To ensure smooth execution, the Automation Account requires proper permissions:

- Contributor Role – Grants permission to start and stop AKS clusters.

- Managed Identity Access – Enables the Automation Account to authenticate securely with Azure services.

- Access Control (IAM) Setup – Ensures the automation scripts can interact with AKS resources.

Setting up these permissions is crucial for executing automation workflows without manual authentication.

Creating and Managing Automation Accounts with Azure CLI

The Azure CLI provides a straightforward way to create and manage an Automation Account. To set one up, use the following command:

bash
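As a minimal sketch, account creation with the Azure CLI might look like the following. It assumes the automation CLI extension is installed (az extension add --name automation); the function name and the resource group, account name, and region in the example call are hypothetical.

```bash
#!/usr/bin/env bash
# Create an Automation Account with the Azure CLI.
# Assumes the "automation" CLI extension is installed; names are placeholders.
create_automation_account() {
  # $1 resource group, $2 account name, $3 Azure region
  az automation account create \
    --resource-group "$1" \
    --name "$2" \
    --location "$3"
}

# Example call (hypothetical names), commented out so the file can be sourced:
# create_automation_account rg-dev aks-scheduler eastus
```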

Once the Automation Account is created, you can import automation runbooks to schedule the start and stop operations for AKS clusters.

After creating the account, assign the necessary permissions using:

bash
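One way to sketch the role assignment is shown here. It assumes the Automation Account already has a managed identity and that you know its principal (object) ID; the helper names and all IDs are hypothetical. Scoping the role to a single cluster rather than the whole resource group is a design choice made in this sketch to keep the grant narrow.

```bash
#!/usr/bin/env bash
# Build the resource ID used as the role-assignment scope for one cluster.
# Both helpers are illustrative; IDs and names are placeholders.
aks_scope() {
  # $1 subscription id, $2 resource group, $3 cluster name
  printf '/subscriptions/%s/resourceGroups/%s/providers/Microsoft.ContainerService/managedClusters/%s\n' \
    "$1" "$2" "$3"
}

# Grant the Contributor role to the identity at the given scope.
grant_contributor() {
  # $1 principal (object) id of the Automation Account identity, $2 scope
  az role assignment create \
    --assignee-object-id "$1" \
    --assignee-principal-type ServicePrincipal \
    --role Contributor \
    --scope "$2"
}

# Example call (hypothetical values), commented out:
# grant_contributor "$PRINCIPAL_ID" "$(aks_scope "$SUB_ID" rg-dev my-aks)"
```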

This ensures the Automation Account has sufficient privileges to control AKS clusters.

Using System Assigned Managed Identity

A System Assigned Managed Identity allows Azure Automation to interact with AKS securely without storing credentials. This eliminates security risks associated with hardcoding credentials in automation scripts.

To enable a managed identity on your Automation Account:

bash

This assigns an identity to the account, which can then be granted IAM roles for AKS management. Once enabled, automation runbooks can authenticate to AKS using this identity, reducing security risks and simplifying maintenance.

Script and Logic Implementation

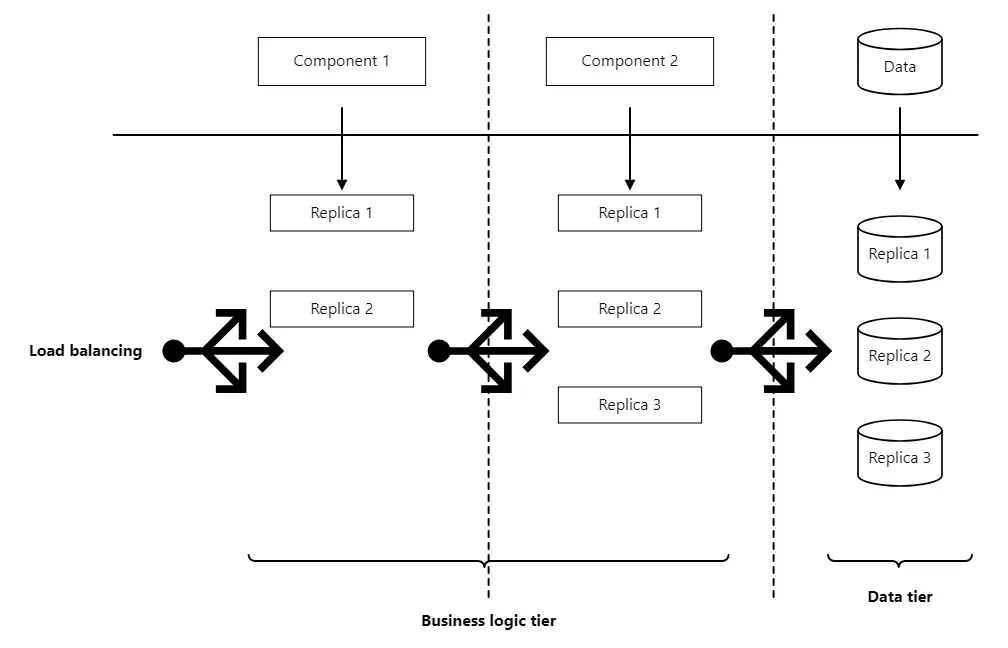

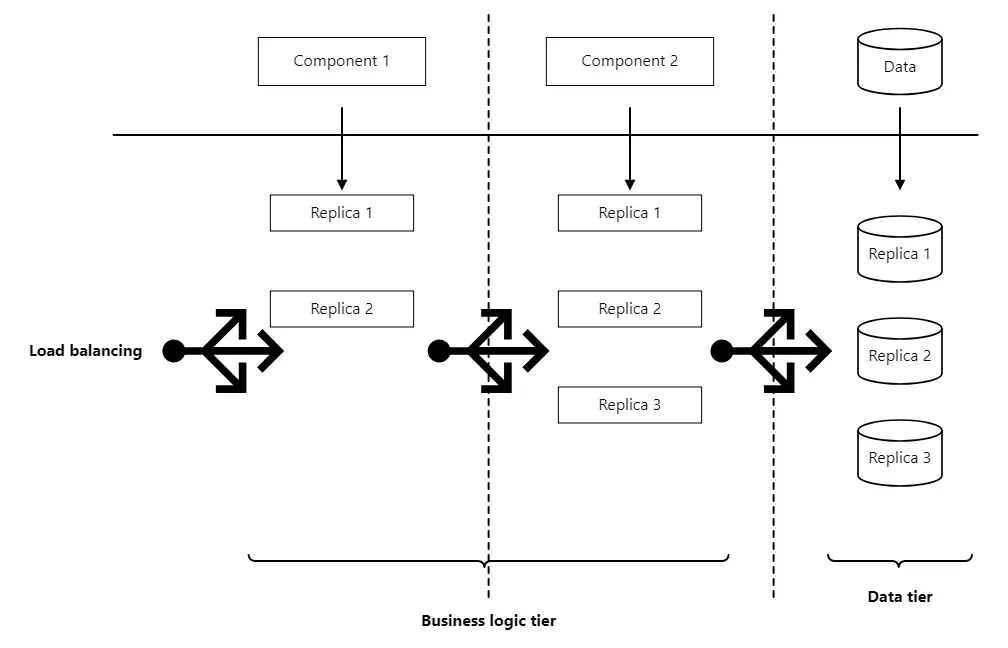

Source: High availability for multitier AKS applications

Automating AKS cluster shutdowns and startups requires well-structured scripts or logic-based workflows. Whether using PowerShell scripts or Azure Logic Apps, the goal is to ensure seamless execution while maintaining flexibility.

Using PowerShell Scripts or Logic Apps for Automation

Two primary approaches are commonly used for automating AKS lifecycle management:

- PowerShell Scripts – Ideal for teams comfortable with scripting and using Azure Automation Accounts. PowerShell provides direct control over AKS operations through the Start-AzAksCluster and Stop-AzAksCluster cmdlets in the Az.Aks module.

- Azure Logic Apps – A no-code/low-code alternative that allows teams to create workflows without writing extensive scripts. Logic Apps can be triggered based on schedules or external events, providing a scalable and maintainable automation solution.

Example PowerShell script for stopping an AKS cluster:

powershell

This script can be scheduled using Azure Automation or executed within a DevOps pipeline.

Alternatively, Azure Logic Apps allow users to define workflows with:

- Scheduled triggers for automatic execution

- HTTP API calls to start/stop AKS clusters

- Role-based access control (RBAC) for security

Example Logic App workflow:

- Trigger the workflow at a predefined time (e.g., 7 AM and 7 PM).

- Retrieve all AKS clusters tagged for automated management.

- Check the cluster’s current status.

- Start or stop the cluster accordingly.

Parameters Required for Cluster Operations

For scripts and Logic Apps to function correctly, certain parameters must be provided:

- AKS Cluster Name – Specifies which cluster should be managed.

- Resource Group – Defines the scope of the operation.

- Operation Type – Indicates whether to start or stop the cluster.

- Authentication Method – Determines how the script authenticates with Azure (Managed Identity, Service Principal, or stored credentials).

Example script execution:

powershell

Ensuring these parameters are dynamically assigned improves script reusability.

Avoiding Hardcoding Through Tagging

Manually maintaining a list of clusters to start/stop is inefficient. Instead, tagging provides a scalable solution.

- Use Azure Tags to define schedules – For example, clusters tagged with Hours=Business can be managed by an automated workflow.

- Dynamically filter AKS clusters – Instead of hardcoding cluster names, scripts retrieve clusters with specific tags, ensuring new clusters are automatically included.

Example command to retrieve tagged clusters:

bash
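A minimal sketch of tag-based retrieval with the Azure CLI follows. It uses the Hours=Business tag from the example above; the helper names are hypothetical, and the filter assumes a simple alphanumeric tag key (keys with hyphens or spaces would need JMESPath quoting).

```bash
#!/usr/bin/env bash
# Build a JMESPath filter selecting clusters whose tag $1 equals $2.
# Assumes a simple alphanumeric tag key; helper names are illustrative.
tag_query() {
  printf "[?tags.%s=='%s'].[name, resourceGroup]\n" "$1" "$2"
}

# List matching clusters in the current subscription.
# Emits one "name<TAB>resourceGroup" line per cluster.
list_tagged_clusters() {
  az aks list --query "$(tag_query "$1" "$2")" --output tsv
}

# Example call, commented out so the file can be sourced safely:
# list_tagged_clusters Hours Business
```

The tab-separated output can be fed into a while read loop so that each cluster is passed to the start or stop logic without naming it in the script.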

By leveraging tags, teams can:

- Easily manage multiple clusters without modifying scripts

- Ensure new AKS clusters follow the same automation rules

- Reduce manual intervention and prevent misconfigurations

Executing and Scheduling Cluster Shutdowns and Startups

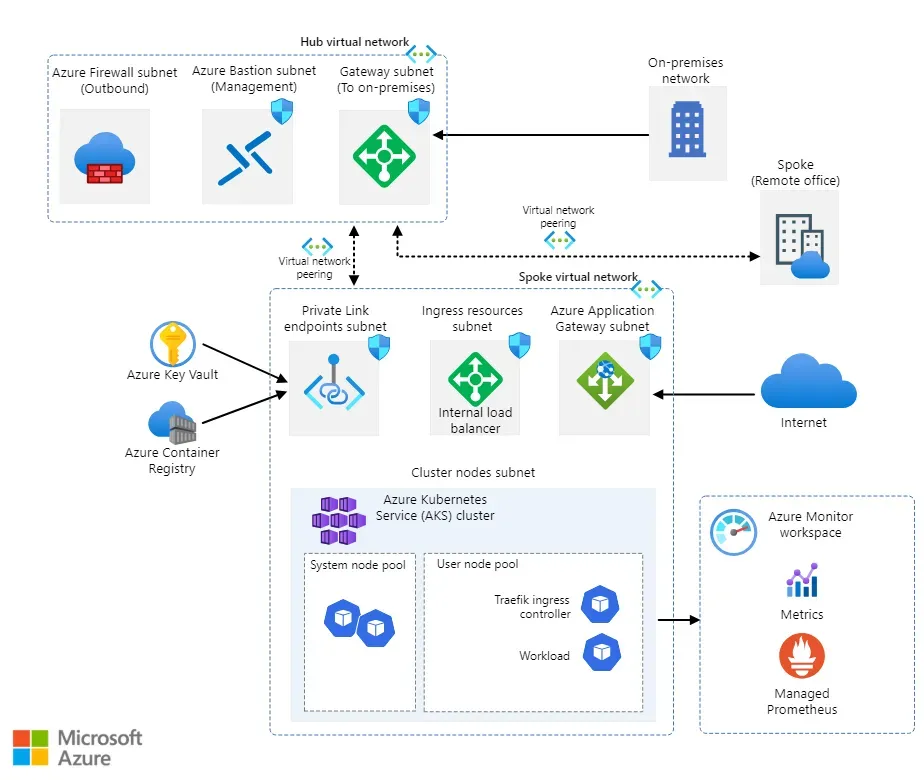

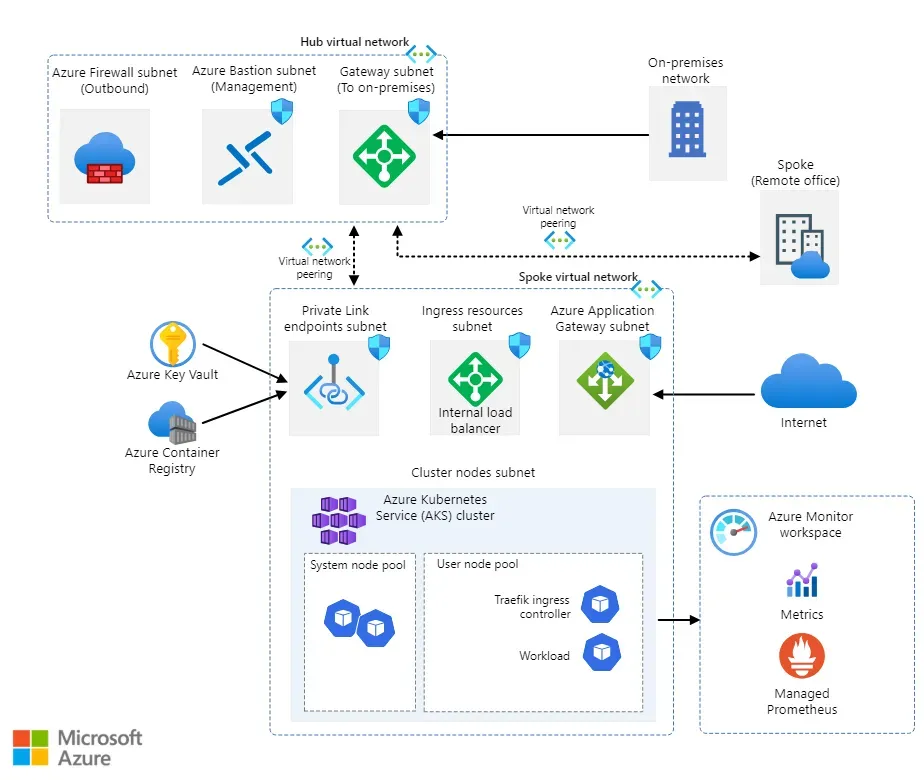

Source: Baseline architecture for an Azure Kubernetes Service (AKS) cluster

Automating AKS cluster lifecycle management ensures resources are available when needed while avoiding unnecessary cloud costs. Scheduling shutdowns and startups eliminates manual intervention, allowing teams to focus on development rather than infrastructure management.

Creating Schedules for Automatic Start and Stop Operations

Azure provides multiple ways to schedule AKS operations:

- Azure Automation Runbooks – Use scheduled PowerShell scripts to start and stop AKS clusters at predefined times.

- Azure Logic Apps – Automate AKS lifecycle based on tags, external triggers, or business hours.

- Azure DevOps Pipelines – Execute scheduled jobs that control cluster states.

- Azure Functions – Trigger cloud-based scripts to handle cluster management dynamically.

To create an Automation Runbook schedule:

- Navigate to the Azure Automation Account.

- Select Schedules → Click Add a schedule.

- Define the start time, recurrence (daily, weekdays, weekends, etc.), and time zone.

- Link the schedule to a PowerShell runbook that executes Start-AzAksCluster or Stop-AzAksCluster.

Example PowerShell script linked to a schedule:

powershell

This ensures clusters start and stop automatically without manual execution.

Input Parameters for Execution

For automation scripts and scheduled jobs to function properly, certain parameters are required:

- AKS Cluster Name – Identifies which cluster to start or stop.

- Resource Group – Ensures the correct cluster is targeted.

- Execution Mode (Start/Stop) – Specifies the desired operation.

- Time Schedule – Defines when the script should execute.

Using dynamic input parameters, scripts remain flexible and reusable across different environments.

For Logic Apps, input parameters can be fetched dynamically using:

json

This setup ensures consistent execution without modifying scripts manually.

Options for On-Demand Versus Scheduled Execution

In Azure Kubernetes Service (AKS), there are two primary ways to control cluster execution:

- On-Demand Execution

This approach requires engineers to manually trigger scripts when necessary. It provides flexibility for scenarios where AKS clusters do not need to be running continuously.

- Example Use Case:

- A development or testing team performs occasional tests that require a cluster.

- Instead of keeping the cluster running all the time, engineers manually execute an Azure Logic App or Azure DevOps Pipeline to start the cluster before testing.

- This approach helps reduce costs by running clusters only when required.

- Scheduled Execution

This method involves fully automated workflows that control cluster availability based on predefined schedules. It ensures that clusters are operational only during specific hours, reducing unnecessary resource usage.

- Example Use Case:

- A development environment is active only during business hours.

- An automation workflow, such as an Azure Automation Runbook or a Scheduled Logic App, starts the cluster in the morning and shuts it down overnight.

- This setup optimizes cloud spending by preventing unnecessary runtime outside of working hours.

Both methods help organizations manage AKS efficiently, balancing cost optimization and operational flexibility.

To execute an on-demand PowerShell script in an Automation Runbook:

powershell

To execute a scheduled job in Azure Pipelines, define the cron schedule:

yaml

This flexibility ensures teams balance cost savings with operational availability.
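For teams that drive the schedule from a cron job instead, the business-hours rule can live in the script itself. The sketch below is an assumption-laden illustration: the function name is hypothetical, and the weekday window of 07:00 to 19:00 is borrowed from the 7 AM and 7 PM trigger example earlier, not from any Azure default.

```bash
#!/usr/bin/env bash
# Decide the desired power state from the day of week and hour.
# Assumption: clusters should run Monday to Friday, 07:00 up to 19:00.
desired_state() {
  # $1 day of week (1=Mon .. 7=Sun, as printed by `date +%u`), $2 hour 0-23
  if [ "$1" -le 5 ] && [ "$2" -ge 7 ] && [ "$2" -lt 19 ]; then
    echo "Running"
  else
    echo "Stopped"
  fi
}

# Example use from a cron-driven script, commented out:
# hour=$(date +%H); hour=${hour#0}   # strip a leading zero, e.g. "07" -> "7"
# desired_state "$(date +%u)" "$hour"
```

Keeping the rule in one function means the same script can be run hourly and simply compare the desired state with the actual one.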

Operational Logic and Error Handling

Source: https://link.springer.com/chapter/10.1007/978-1-4842-5519-3_7

Ensuring that automated AKS start/stop processes run smoothly requires proper state verification, logging, and error handling. Without these safeguards, teams may face unexpected failures, misconfigured clusters, or unintentional downtimes.

Cluster State Verification and Handling

Before executing any start/stop operation, the automation workflow must verify the cluster’s current state to prevent redundant or conflicting actions.

Why state verification is important:

- Prevents attempts to start an already running cluster or stop an already stopped one.

- Ensures clusters transition smoothly between states without unintended disruptions.

- Avoids API errors or unnecessary compute resource allocation.

Example: Checking Cluster State with Azure CLI

bash
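A minimal sketch of such a check, assuming the cluster's power state is read from the powerState.code field returned by az aks show (values Running or Stopped). The function name and the skip message are hypothetical.

```bash
#!/usr/bin/env bash
# Start or stop a cluster only if its current power state requires it.
# ensure_state is an illustrative helper, not part of the Azure CLI.
ensure_state() {
  # $1 resource group, $2 cluster name, $3 requested operation (start|stop)
  current=$(az aks show --resource-group "$1" --name "$2" \
    --query "powerState.code" --output tsv) || return 1
  case "$3:$current" in
    start:Stopped) az aks start --resource-group "$1" --name "$2" ;;
    stop:Running)  az aks stop --resource-group "$1" --name "$2" ;;
    start:Running|stop:Stopped) echo "skip: $2 is already $current" ;;
    *) echo "unexpected state '$current' or operation '$3'" >&2; return 1 ;;
  esac
}

# Example call (hypothetical names), commented out:
# ensure_state rg-dev my-aks stop
```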

This approach prevents redundant API calls, reducing execution time and avoiding unnecessary requests.

In PowerShell scripts, a similar check can be implemented:

powershell

This ensures the script only runs actions when needed.

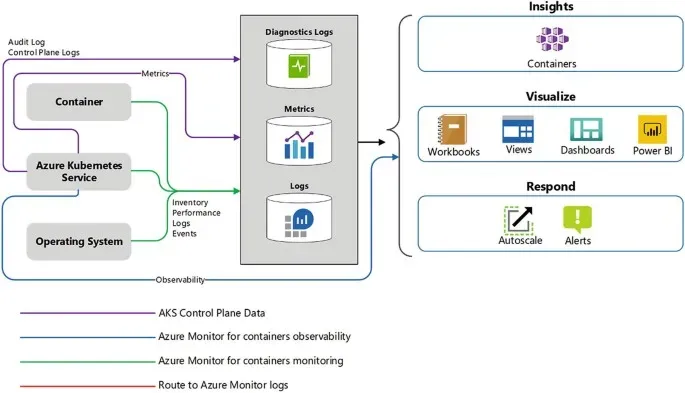

Logging and Error Output Messages

Logging provides visibility into automation workflows and helps troubleshoot failures. Properly formatted logs should capture the following:

- Timestamps – When the operation was executed.

- Cluster state before and after execution – Helps identify incorrect transitions.

- Error messages – Highlights API failures or authentication issues.

In PowerShell, logs can be captured using:

powershell

This ensures all actions are recorded for debugging and compliance purposes.

For Azure Logic Apps, logs can be captured using:

- Application Insights for centralized logging.

- Blob Storage to store execution details.

- Log Analytics for querying historical job runs.
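For CLI-based automation, the fields listed earlier (timestamp, state before and after, message) can be captured with a small helper. This is a sketch only; the function name and the key=value line format are assumptions, not a required convention.

```bash
#!/usr/bin/env bash
# Emit one structured log line with a UTC timestamp.
# log_event and its field layout are illustrative choices.
log_event() {
  # $1 level, $2 cluster, $3 state before, $4 state after, $5 message
  printf '%s level=%s cluster=%s before=%s after=%s msg="%s"\n' \
    "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$1" "$2" "$3" "$4" "$5"
}

# Example call (hypothetical values), appending to a log file:
# log_event INFO my-aks Running Stopped "stop completed" >> aks-schedule.log
```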

Recommendations for Testing Before Production Use

Before deploying automation workflows in a production environment, rigorous testing is required to ensure stability and reliability.

- Run in a test environment first – Verify that scheduled executions do not cause unexpected downtimes.

- Monitor logs for failures – Track API responses to detect authentication issues or script failures.

- Use dry-run mode – Instead of executing commands, simulate them first:

powershell

- Validate permissions – Ensure the Automation Account or Managed Identity has the right access control policies.

By following these best practices, teams reduce the risk of unintended outages and ensure a smooth automation rollout.
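The dry-run idea from the list above can be sketched for CLI-based scripts with a thin wrapper. The run helper and the DRY_RUN variable are hypothetical conventions introduced here, not Azure CLI features: when DRY_RUN=1 the command is printed instead of executed.

```bash
#!/usr/bin/env bash
# Print the command when DRY_RUN=1, otherwise execute it.
# run and DRY_RUN are conventions of this sketch, not Azure CLI options.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "[dry-run] $*"
  else
    "$@"
  fi
}

stop_cluster() {
  # $1 resource group, $2 cluster name
  run az aks stop --resource-group "$1" --name "$2"
}

# Example call (hypothetical names), commented out:
# DRY_RUN=1 stop_cluster rg-dev my-aks
```

Running the schedule once with DRY_RUN=1 in a test subscription shows which clusters would be affected before any real stop is issued.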

Traditional automation methods rely on predefined schedules and manual intervention, leaving room for inefficiencies. Sedai eliminates these challenges by providing autonomous cloud optimization, dynamically adjusting AKS cluster states based on real-time demand.

Instead of logging failures and rerunning scripts, Sedai continuously learns from past executions and optimizes infrastructure automatically, reducing the need for extensive manual monitoring.

To enable autonomous cloud optimization with Sedai, you can connect your AKS cluster using these step-by-step instructions. This allows for intelligent, real-time automation without manual intervention.

Methods for Starting and Stopping AKS Clusters

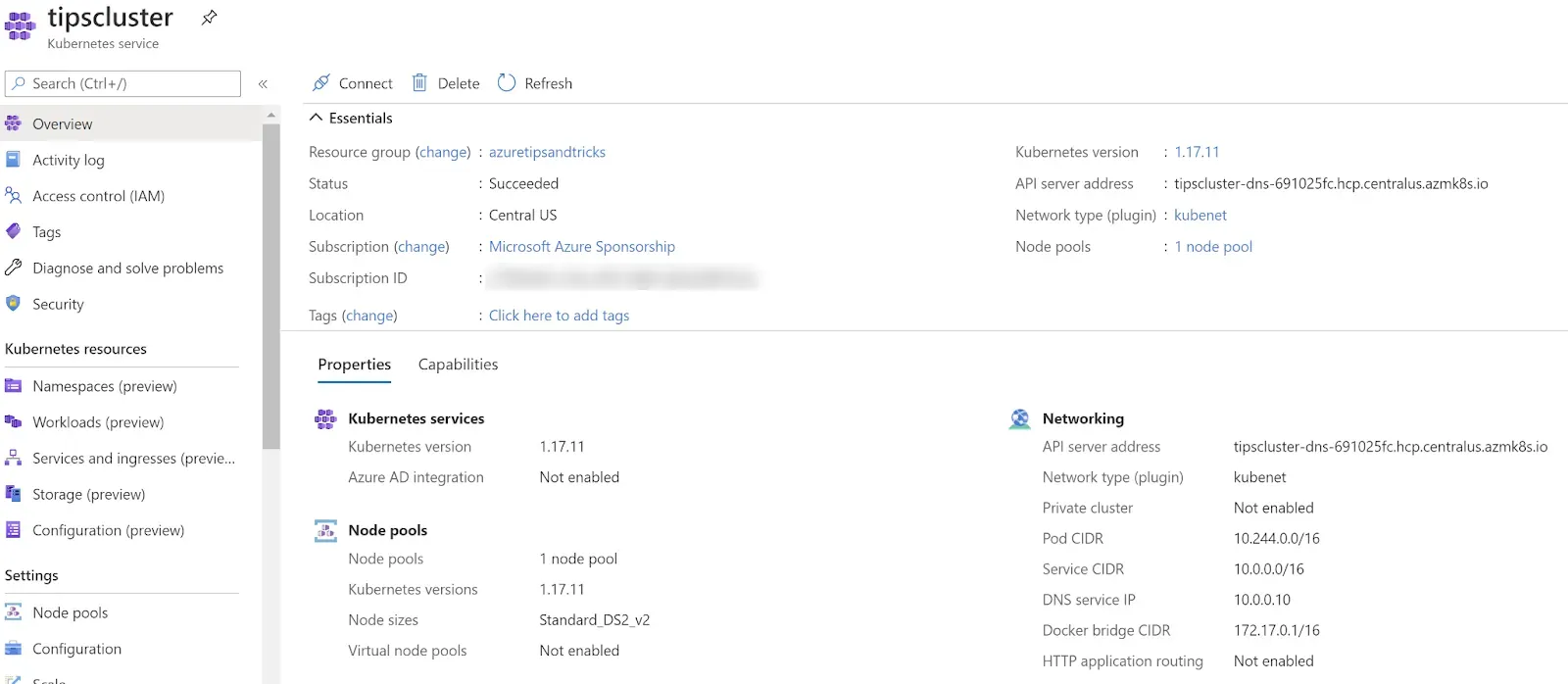

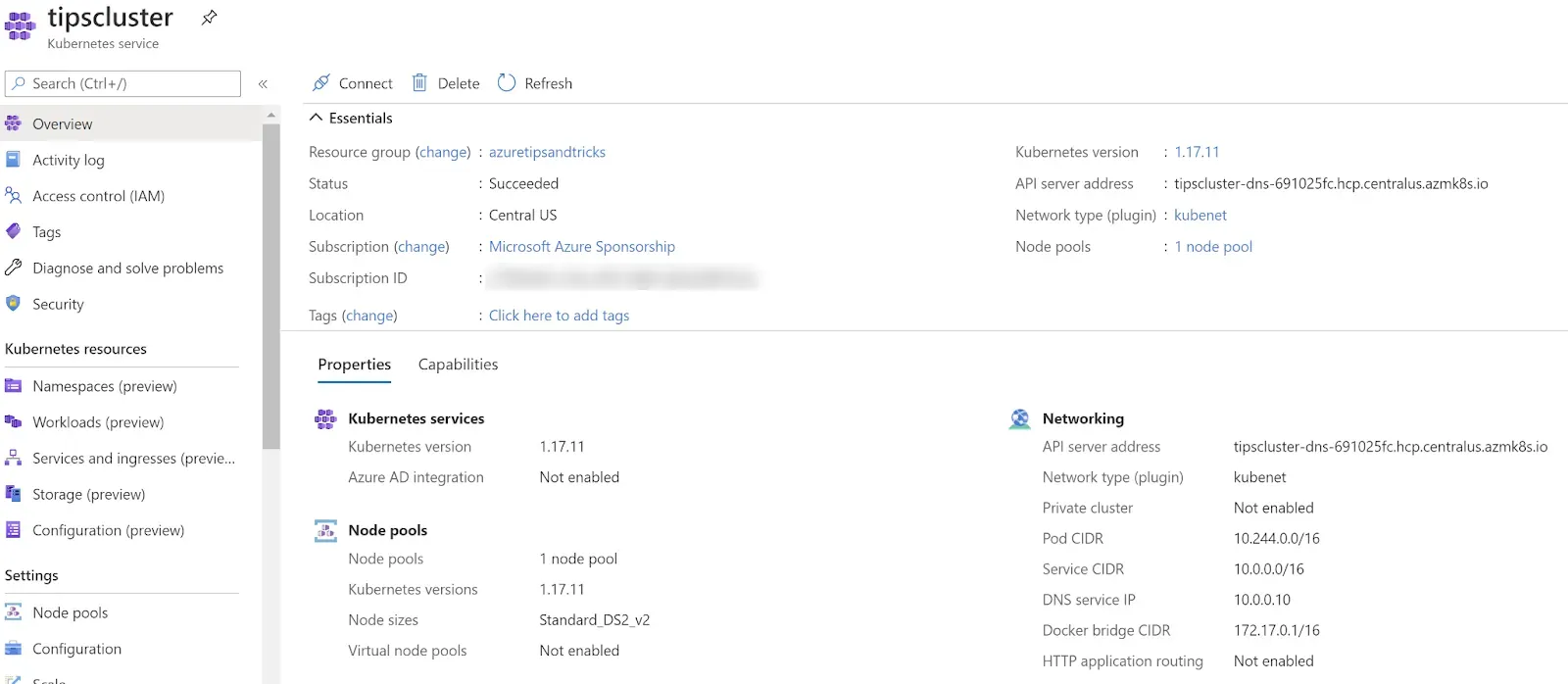

Source: https://microsoft.github.io/AzureTipsAndTricks/blog/tip308.html

Managing AKS clusters efficiently requires a combination of command-line automation, feature awareness, and an understanding of operational impacts. While stopping clusters helps optimize costs, it’s essential to ensure that restart conditions, networking changes, and workload resilience are accounted for.

Commands and Methods Using Azure CLI and PowerShell

Azure provides multiple ways to start and stop AKS clusters, primarily using Azure CLI and PowerShell scripts. These methods can be integrated into Automation Accounts, Logic Apps, or DevOps pipelines to ensure seamless execution.

Using Azure CLI

Stopping an AKS cluster:

bash

Starting an AKS cluster:

bash

Checking the cluster's status:

bash

This approach allows for easy manual or automated control over cluster operations.
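The three operations above correspond to the following Azure CLI calls, sketched here as thin wrapper functions. The function names and the resource group and cluster names in the example calls are hypothetical.

```bash
#!/usr/bin/env bash
# Thin wrappers around the Azure CLI stop, start, and status calls.
# $1 resource group, $2 cluster name in each function.
aks_stop()   { az aks stop --resource-group "$1" --name "$2"; }
aks_start()  { az aks start --resource-group "$1" --name "$2"; }

# Prints the power state, "Running" or "Stopped".
aks_status() {
  az aks show --resource-group "$1" --name "$2" \
    --query powerState.code --output tsv
}

# Example calls (hypothetical names), commented out:
# aks_stop rg-dev my-aks
# aks_status rg-dev my-aks
```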

Using PowerShell

PowerShell provides more structured automation for managing AKS clusters:

Stopping a cluster:

powershell

Starting a cluster:

powershell

Fetching the cluster’s status before performing an action:

powershell

By integrating these commands into Azure Automation Runbooks or scheduled tasks, teams can ensure AKS clusters operate efficiently without manual intervention.

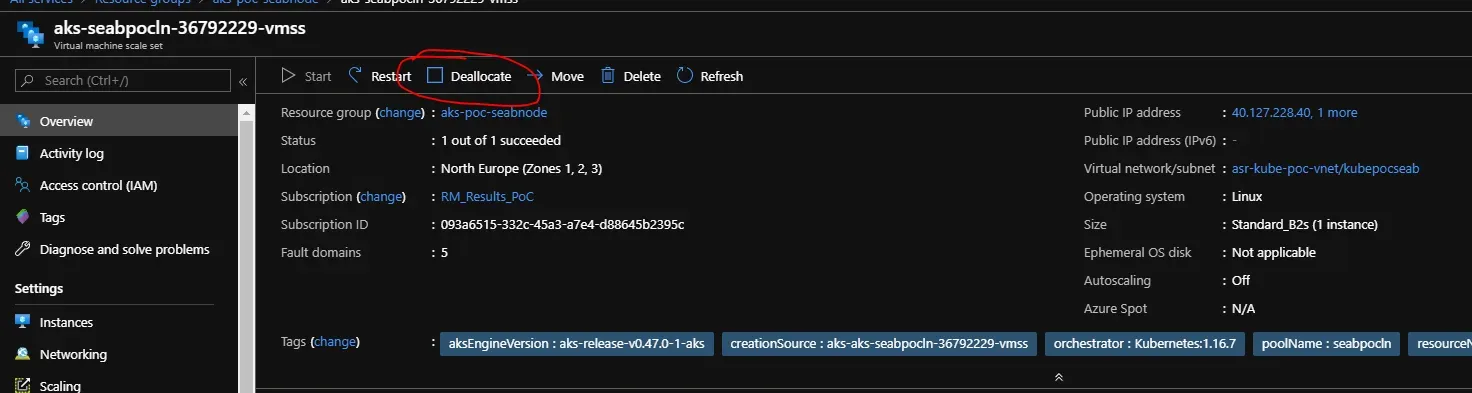

Important Notes on Cluster Feature Support and Conditions

Before implementing an automated AKS lifecycle management solution, teams should consider cluster-specific conditions and limitations:

- Feature Support:

- The stop/start feature is only available for Virtual Machine Scale Set (VMSS) backed clusters.

- Node Auto-Provisioning (NAP) clusters cannot be stopped.

- Stopped clusters retain all configurations except standalone pods (i.e., pods not managed by a controller such as a Deployment, StatefulSet, or DaemonSet).

- State Retention:

- The cluster state is preserved for up to 12 months when stopped.

- If the cluster is stopped beyond this period, recovery is not possible.

- Operation Restrictions:

- Stopped clusters can only be started or deleted; other operations (e.g., scaling, upgrades) require the cluster to be running.

- Private Endpoints linked to private AKS clusters must be recreated after restart.

- Time Delay Considerations:

- After stopping an AKS cluster, wait at least 15–30 minutes before restarting.

- If a restart is attempted too soon, it may disrupt the shutdown process, leading to potential failures.

By understanding these conditions, teams can prevent unexpected downtime and configuration loss when automating AKS lifecycle management.
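The waiting period noted above can be enforced in a start script with a simple elapsed-time guard. This sketch assumes the stop script records when it finished (for example, as epoch seconds in a file); that bookkeeping, the function name, and the variable names are hypothetical.

```bash
#!/usr/bin/env bash
# Refuse to restart until the minimum wait after a stop has elapsed.
MIN_WAIT_SECONDS=900   # 15 minutes, the lower bound of the 15-30 minute guidance

can_restart() {
  # $1 epoch seconds when the stop finished, $2 current epoch seconds
  # Succeeds (exit status 0) only when enough time has passed.
  [ $(( $2 - $1 )) -ge "$MIN_WAIT_SECONDS" ]
}

# Example use, commented out; stopped_at is assumed to be recorded elsewhere:
# if can_restart "$stopped_at" "$(date +%s)"; then
#   az aks start --resource-group rg-dev --name my-aks
# fi
```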

Impact of Cluster Operations on IP Addresses and Settings

Stopping and restarting an AKS cluster can introduce changes to networking and settings that impact dependent applications.

IP Address Changes

- The API server’s public IP address may change when a cluster is restarted.

- If applications or firewalls depend on a static IP, additional configuration (such as Azure Private Link) is required.

Autoscaler Behavior

- When a cluster restarts, autoscaler settings do not immediately restore previous node counts.

- Instead, the cluster starts with only the nodes required for workload execution and gradually scales based on demand.

Pod Disruptions

- Standalone pods (not managed by Deployments, StatefulSets, or DaemonSets) are deleted when a cluster stops.

- To ensure high availability, workloads should be managed using Kubernetes controllers like Deployments or StatefulSets.

Persistent Storage Considerations

- Azure Managed Disks and Persistent Volumes (PVs) remain intact, but attached nodes must be re-associated manually if disrupted.

- Ensuring StorageClass configurations align with dynamic reattachment policies prevents unexpected downtime.

Networking Dependencies

- If using Azure Private Endpoints, these must be deleted and recreated when restarting a stopped cluster.

- Ingress Controllers, DNS settings, and network policies should be reviewed to avoid connectivity issues.

Manual or scheduled AKS stop/start operations require ongoing monitoring and adjustments. Sedai removes these challenges with autonomous cloud optimization, dynamically managing AKS cluster availability based on real-time demand. Instead of relying on static schedules, Sedai automatically scales clusters, adjusts networking settings, and ensures optimal resource utilization without requiring human intervention.

Conclusion

Automating the start and stop operations of AKS clusters is a cost-effective and efficient way to manage cloud resources, preventing unnecessary expenses while ensuring clusters are available when needed. By leveraging Azure Automation, CLI commands, Logic Apps, or PowerShell scripts, teams can eliminate manual interventions, streamline operations, and improve overall cloud efficiency.

Implementing automated scheduling reduces human error, enhances workload management, and frees up valuable engineering time. Rather than relying on static schedules, Sedai’s autonomous cloud optimization takes this further, dynamically adjusting cluster availability based on real-time demand, maximizing savings without compromising performance.

Now is the time to move beyond manual processes and embrace automation with Sedai to simplify AKS cluster management and optimize cloud costs effortlessly. Schedule your demo today!

FAQs: Automating AKS Cluster Startups and Shutdowns

1. Why Should I Automate AKS Cluster Shutdowns and Startups?

Manually managing AKS clusters leads to unnecessary cloud costs and operational inefficiencies. Automation ensures that clusters only run when needed, reducing expenses while maintaining availability.

2. What Methods Can I Use to Automate AKS Cluster Lifecycle Management?

You can automate AKS cluster management using Azure Automation Accounts, PowerShell scripts, Azure Logic Apps, Azure CLI, REST APIs, or Azure DevOps Pipelines. Each method provides flexibility and control over when and how clusters operate.

3. Does Azure Provide a Built-In Scheduling Feature for AKS?

No, Azure does not provide a native scheduling feature for AKS cluster start/stop operations. However, automation solutions like Azure Automation and Logic Apps can fill this gap effectively.

4. What Happens to My AKS Cluster When It’s Stopped?

Stopping an AKS cluster pauses all workloads and releases compute resources, but it retains cluster configurations. However, standalone pods (not managed by a controller such as a Deployment, StatefulSet, or DaemonSet) are deleted upon shutdown.

5. Will My AKS Cluster’s IP Address Change After Restarting?

Yes, the API server’s public IP address may change when restarting a stopped cluster. If applications rely on a fixed IP, use Azure Private Link or DNS updates to prevent connectivity issues.

6. Can I Stop and Start AKS Clusters with Autoscaler Enabled?

Yes, but when a cluster restarts, autoscaler settings do not immediately restore previous node counts. The cluster only starts with the necessary nodes to run workloads, and scaling resumes based on demand.

7. How Can I Schedule My AKS Cluster to Start and Stop Automatically?

You can use Azure Automation with PowerShell runbooks, Azure DevOps Pipelines, or Logic Apps to schedule automatic start/stop operations. These methods ensure clusters are only running when required.

8. What Permissions Are Required to Automate AKS Cluster Operations?

The Automation Account or Managed Identity needs Contributor or Owner role permissions on the AKS resource group. This ensures it can execute start and stop commands without authentication errors.

9. How Long Can an AKS Cluster Stay Stopped?

Azure retains a stopped AKS cluster’s state for up to 12 months. After this period, the cluster cannot be recovered, and a new deployment will be required.

10. How Does Sedai Improve AKS Cost Optimization Compared to Traditional Scheduling?

Unlike static scheduling, which follows predefined rules, Sedai’s autonomous optimization continuously learns from real-time AKS usage patterns. It dynamically scales, stops, or restarts clusters only when necessary, ensuring maximum efficiency and cost savings without manual intervention.