Key takeaways

- Use Spot Instances in Kubernetes to reduce infrastructure costs for fault-tolerant workloads.

- Balance Spot and On-Demand capacity to improve cost efficiency and application reliability.

- Optimize workload scheduling continuously to minimize Spot interruption impact on performance.

- Automate Kubernetes scaling and Spot allocation to reduce cloud waste at scale.

Running Kubernetes on AWS Spot Instances: A Complete Guide

Imagine managing a Kubernetes cluster with fluctuating demands and constantly rising costs. Every scaling decision brings added expenses, and traditional instance pricing often doesn’t allow you to scale affordably. Enter spot instances. Running Kubernetes clusters on spot instances isn’t just a cost-saving strategy—it’s an approach that makes scalability and flexibility possible at a fraction of the price.

By using AWS spot instances in Kubernetes, you’re tapping into Amazon’s surplus capacity, gaining significant savings and making it easier to meet demand surges without blowing through budgets. Spot instances in K8s are becoming increasingly popular, but they come with their own set of considerations, especially around reliability and potential interruptions. That’s where this guide comes in.

This article covers everything from setting up spot instances for Kubernetes clusters to leveraging autoscaling and best practices for managing spot instance interruptions. Additionally, we’ll explore how Sedai’s autonomous optimization platform (Learn more at Sedai) simplifies the process with real-time scaling and interruption management.

Overview of Kubernetes Spot Instances

Source: Sedai

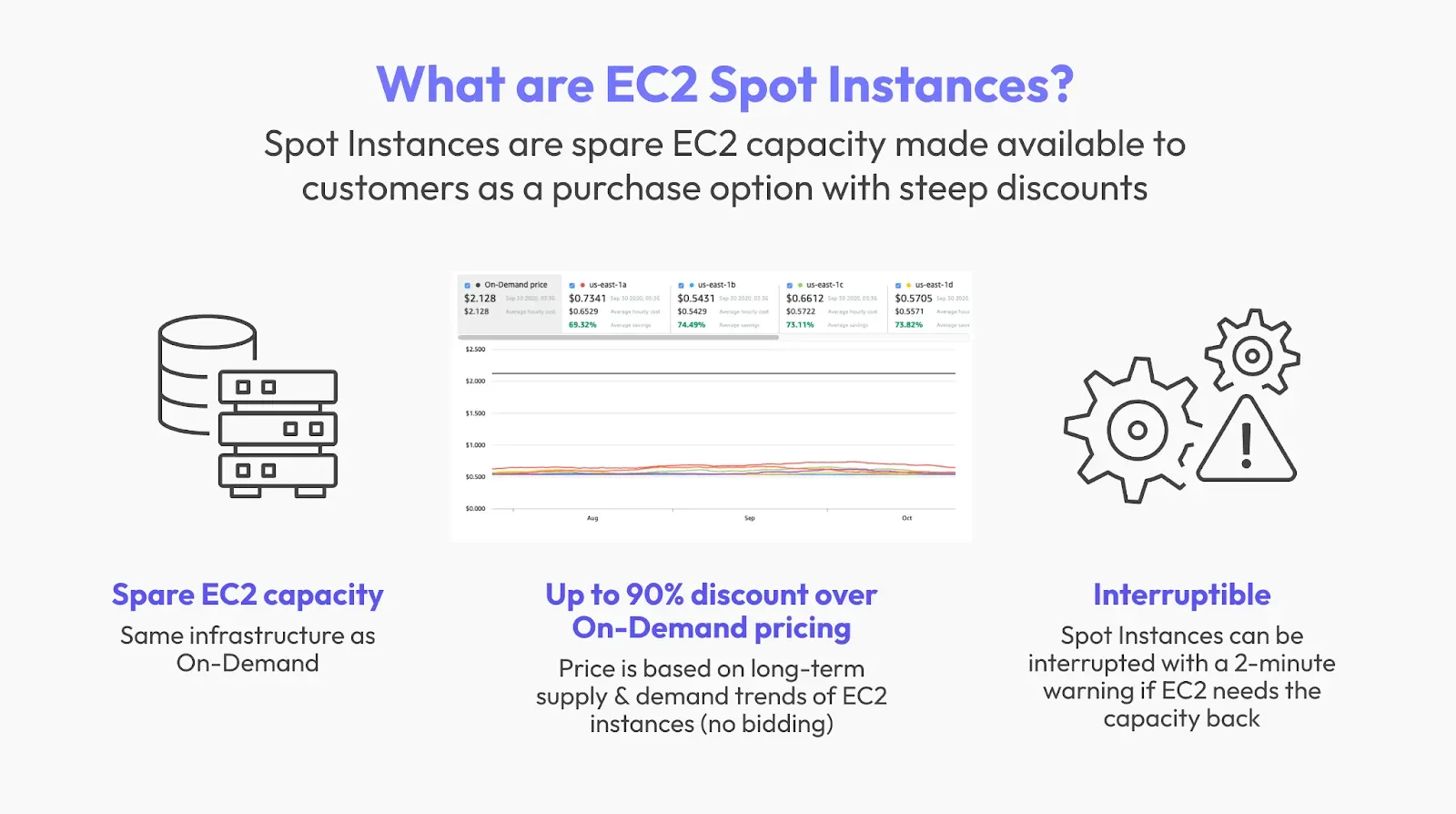

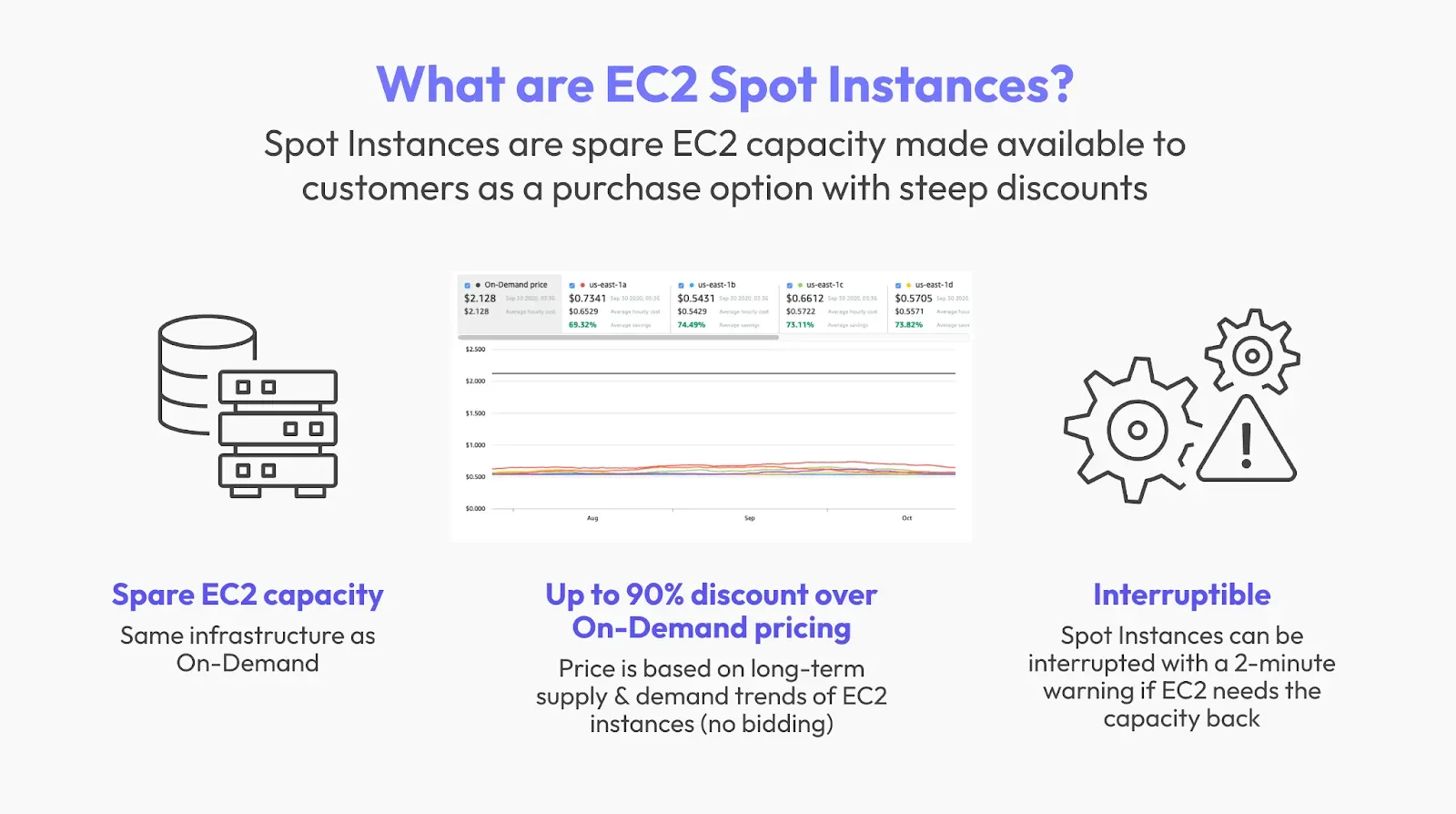

Spot instances offer an affordable way to scale Kubernetes clusters by utilizing AWS’s unused EC2 capacity at discounted rates. The key benefit here is cost-effectiveness—by deploying your Kubernetes workloads on spot instances, you can save as much as 70-90% compared to standard on-demand instances. But with those savings come potential challenges: spot instances can be reclaimed by AWS with as little as two minutes' notice.

With Sedai’s AI-powered tools (Discover Sedai’s solutions), this risk is minimized by continuously monitoring instances and adjusting configurations to ensure consistency. Let’s examine why spot instances have become a popular choice for Kubernetes deployments.

Key Benefits of Using Spot Instances for Kubernetes

- Cost Savings: The financial benefits are substantial, enabling you to do more with a smaller budget. Deploying on spot instances lets you scale up without worrying about exorbitant expenses.

- Enhanced Scalability and Flexibility: Spot instances provide unmatched scalability, with the ability to add and remove instances based on demand.

Spot Instance Bidding Strategies

AWS retired the original Spot bidding auction in late 2017. You now pay the current Spot price for an instance type in a given Availability Zone, and you can optionally specify the maximum price you are willing to pay (by default, the On-Demand price). Capacity is provisioned only while the Spot price stays at or below that maximum. Here are some key strategies for managing it:

- Fixed Price Strategy: Set a fixed maximum price for a given instance type. If the Spot price rises above it, the instance won’t be provisioned.

- Dynamic Bidding: Use automated tools or scripts to adjust the maximum price based on supply and demand. This approach keeps your spot instances within budget, even during price fluctuations.

- Cost Optimization with Bid Adjustments: By using a cost optimization platform, you can automate maximum-price adjustments based on your predefined budget and scaling requirements.
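As a hedged illustration of the fixed-price strategy, the sketch below caps the Spot price on an eksctl node group. It assumes an eksctl ClusterConfig file; the cluster name, region, instance types, and price are placeholders rather than recommendations.

```yaml
# Sketch only: every name and number here is a hypothetical placeholder.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster      # assumed cluster name
  region: us-west-2          # assumed region
nodeGroups:
  - name: spot-capped
    minSize: 1
    maxSize: 5
    instancesDistribution:
      # Maximum hourly price (USD) you are willing to pay per instance.
      maxPrice: 0.05
      instanceTypes: ["m5.large", "m5a.large"]
      # Zero on-demand capacity means the group runs entirely on Spot.
      onDemandBaseCapacity: 0
      onDemandPercentageAboveBaseCapacity: 0
```

If the cap is omitted, the maximum price defaults to the On-Demand price, which is usually the simpler choice unless you have a hard budget ceiling.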

Setting Up Kubernetes with Spot Instances

Prerequisites for Setting Up Kubernetes on Spot Instances

To begin using spot instances in K8s, you’ll need AWS IAM roles and permissions, as well as essential Kubernetes tools like kubectl and eksctl. These tools will allow you to access and configure your EC2 spot instances.

AWS Requirements Table

Creating a Kubernetes Cluster with Spot Instances

Let’s walk through creating a Kubernetes cluster configured specifically for spot instances:

- Start with Cluster Setup: Use AWS’s eksctl to initiate your Kubernetes cluster with an attached EC2 spot instance node group.

- Define Node Groups in YAML: Configuring node groups with YAML ensures that instances are categorized for scaling and performance.

- Apply Taints and Tolerations: By using taints and tolerations, you control how pods are scheduled, making sure spot instances handle lower-priority workloads.

Example YAML Configuration for Node Groups:

Yaml
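A minimal sketch of such a configuration, assuming eksctl's ClusterConfig format with managed node groups. The cluster name, region, node group names, instance types, sizes, and the `lifecycle` label are illustrative assumptions.

```yaml
# Sketch only: names, sizes, and instance types are hypothetical.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster
  region: us-west-2
managedNodeGroups:
  # Small on-demand baseline for workloads that must not be interrupted.
  - name: on-demand-ng
    instanceType: m5.large
    minSize: 1
    maxSize: 3
    desiredCapacity: 1
    labels:
      lifecycle: on-demand
  # Spot-backed group for fault-tolerant workloads.
  - name: spot-ng
    spot: true
    instanceTypes: ["m5.large", "m5a.large", "m4.large"]
    minSize: 0
    maxSize: 10
    desiredCapacity: 2
    labels:
      lifecycle: spot
```

Saved as `cluster.yaml` (a hypothetical file name), a config like this would typically be applied with `eksctl create cluster -f cluster.yaml`.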

By following these steps, you can set up your Kubernetes cluster to maximize cost savings and scalability. Additionally, Sedai’s autonomous node group configuration allows for real-time adjustments, ensuring minimal disruption when spot instances are reclaimed (Explore Sedai’s node group configuration).

Configuring Spot Fleets for Kubernetes

Spot Fleet lets you provision a mix of on-demand and spot instances to meet your needs for both cost and reliability. When configuring Spot Fleet for Kubernetes, consider:

- Diverse Instance Types: Choose multiple instance types and availability zones to ensure availability.

- Target Capacity: Define the desired target capacity based on your scaling needs, which AWS will use to automatically adjust capacity.

- Instance Pools: Configure your fleet with multiple instance pools for higher reliability during demand fluctuations.

Example:

yaml
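A hedged sketch of the same ideas in eksctl. Note the substitution: eksctl node groups are backed by Auto Scaling groups rather than the EC2 Spot Fleet API, so this example uses eksctl's `instancesDistribution` block to express the on-demand/spot mix, target capacity, and instance diversity. All names and numbers are assumptions.

```yaml
# Sketch only: an Auto Scaling group with a mixed on-demand/spot policy,
# used here as a stand-in for a Spot Fleet. Values are hypothetical.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster
  region: us-west-2
nodeGroups:
  - name: mixed-ng
    # Capacity bounds that the group scales within.
    minSize: 2
    maxSize: 10
    desiredCapacity: 4
    availabilityZones: ["us-west-2a", "us-west-2b", "us-west-2c"]
    instancesDistribution:
      instanceTypes: ["m5.large", "m5a.large", "m4.large"]
      # Always keep one on-demand instance as a reliability floor.
      onDemandBaseCapacity: 1
      # Above the floor, 25% on-demand and 75% spot.
      onDemandPercentageAboveBaseCapacity: 25
      # Prefer the pools with the most spare capacity.
      spotAllocationStrategy: capacity-optimized
```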

Autoscaling and Spot Instances in Kubernetes

Source: Sedai

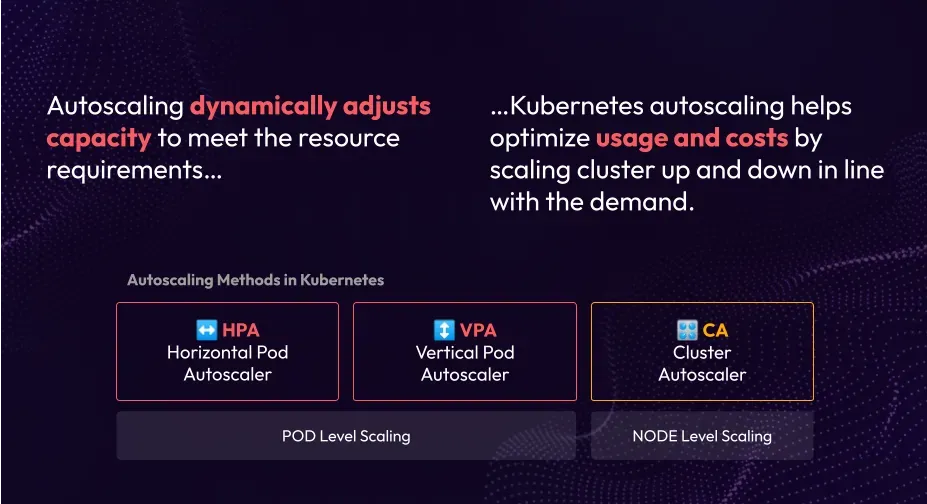

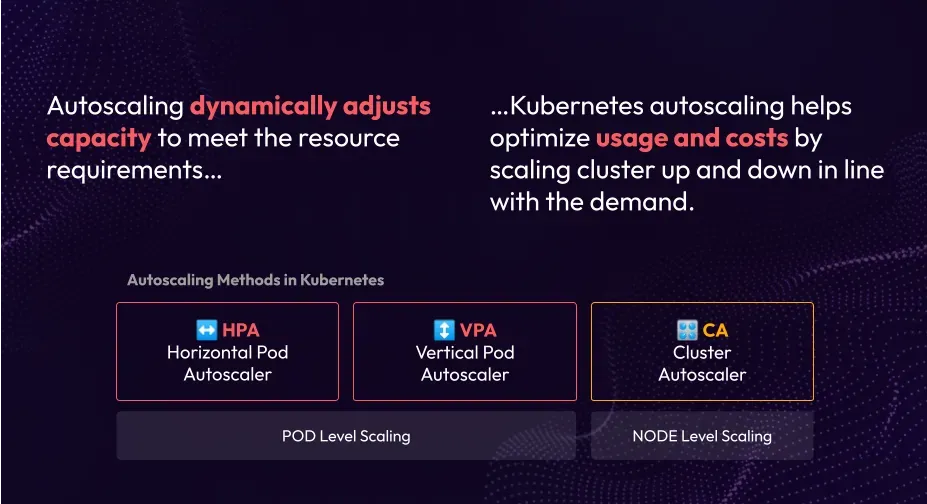

Autoscaling is essential for managing workloads efficiently on spot instances, allowing your Kubernetes cluster to automatically scale up or down based on demand. When using spot instances, the Cluster Autoscaler becomes a vital tool in this setup, especially when configured to prioritize spot nodes. Let’s break down how you can leverage autoscaling and optimize it for cost-efficiency.

Role of the Cluster Autoscaler in Managing Spot Instances

The Cluster Autoscaler is designed to add or remove nodes based on workload needs, making it a perfect partner for spot instances. When demand increases, the autoscaler can add more spot instances, and it scales down when demand subsides to avoid unnecessary costs. For effective use:

- Configure Node Group Labels: Label spot instance nodes so the autoscaler can prioritize scaling for these cost-effective options.

- Set Taints and Tolerations: Taints help segregate workloads on spot instances, ensuring only specific pods are scheduled there.
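One way to make the autoscaler prefer spot node groups is its priority expander. The sketch below assumes the Cluster Autoscaler is started with `--expander=priority` and that your node group (Auto Scaling group) names contain the strings `spot` and `on-demand`; both naming patterns are assumptions to adapt to your cluster.

```yaml
# Sketch only: the regular expressions are hypothetical and must match
# your own node group names.
apiVersion: v1
kind: ConfigMap
metadata:
  # The priority expander looks for this exact name and namespace.
  name: cluster-autoscaler-priority-expander
  namespace: kube-system
data:
  priorities: |-
    # Higher number wins: try spot groups first.
    50:
      - .*spot.*
    # Fall back to on-demand groups when no spot group can scale.
    10:
      - .*on-demand.*
```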

Best Practices for Autoscaling Spot Instances

Autoscaling plays a pivotal role in ensuring that your Kubernetes workloads are cost-efficient while still maintaining performance and availability.

When using spot instances, it's crucial to adjust scaling based on real-time resource usage and demand fluctuations. Here are some detailed best practices to optimize autoscaling for spot instances:

1. Ensure Cost Efficiency Through Auto-Scaling Policies

To maximize the cost-saving benefits of spot instances, configure your autoscaling policies to dynamically adjust the number of instances based on resource consumption, such as CPU and memory usage. By doing so, your Kubernetes cluster can scale up during peak demand and scale down during idle times, all while leveraging the lower cost of spot instances.

- Set CPU and Memory Usage-Based Policies: Set resource requests (and limits) on your containers, because the Horizontal Pod Autoscaler (HPA) computes CPU and memory utilization as a percentage of the requested amount. Then configure an HPA to monitor these metrics and scale the application accordingly.

- Configure the Cluster Autoscaler to Focus on Spot Instances: In addition to using HPA, configure the Cluster Autoscaler to prioritize scaling with spot instances. This ensures that spot instances are the first to scale in or out, helping you optimize costs.

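Example: A policy that adjusts the number of pods based on CPU usage, which in turn lets the Cluster Autoscaler add or remove spot nodes as pods become pending or nodes sit idle, could be written as follows: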

yaml
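A minimal sketch of such a policy, assuming a Deployment named `example-deployment` whose containers declare CPU requests. The replica bounds and the 70% target are illustrative values.

```yaml
# Sketch only: replica bounds and target utilization are hypothetical.
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: example-deployment-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: example-deployment
  minReplicas: 2
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          # Utilization is measured against the pods' CPU requests.
          type: Utilization
          averageUtilization: 70
```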

This policy automatically adjusts the number of pods in the example-deployment based on CPU utilization, ensuring that the resources are dynamically scaled based on demand.

2. Diversify Instance Pools

One of the primary risks of using spot instances is the possibility of simultaneous termination of all instances within a single instance pool due to demand spikes or availability issues.

To mitigate this risk, diversify instance pools by using multiple instance types and availability zones. This practice minimizes the chances of all your instances being interrupted at the same time, ensuring that your Kubernetes workload can remain operational.

- Multiple Instance Types: Deploy workloads across different instance families, such as "m5.large" and "m5a.large", or use a mix of CPU-optimized and memory-optimized instances to balance cost and performance.

- Multiple Availability Zones: Configure your instance pools to span multiple availability zones within the region. By doing so, you reduce the impact of a failure in a single zone and increase overall reliability.

- Instance Pool Configuration: Define the number of spot instance pools to be used when configuring your Kubernetes clusters. For higher resilience, aim for at least three pools.

Example: A Kubernetes configuration defining node pools with different instance types and multiple instance pools:

yaml
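A hedged sketch of such a node group in eksctl's ClusterConfig format. The instance types, sizes, and names are assumptions; `spotInstancePools` applies to the default lowest-price allocation strategy.

```yaml
# Sketch only: names, sizes, and instance types are hypothetical.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster
  region: us-west-2
nodeGroups:
  - name: spot-diversified
    minSize: 3
    maxSize: 12
    instancesDistribution:
      # Three instance types of similar size.
      instanceTypes: ["m5.large", "m5a.large", "m4.large"]
      # Spot only: no on-demand base or share.
      onDemandBaseCapacity: 0
      onDemandPercentageAboveBaseCapacity: 0
      # Spread capacity over the three cheapest pools.
      spotInstancePools: 3
```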

This example sets up a Kubernetes node group with three different instance types across three different instance pools, ensuring both cost efficiency and fault tolerance in case of spot instance terminations.

3. Use Taints and Tolerations for Optimized Workload Distribution

By using taints and tolerations, you can fine-tune your Kubernetes workloads to ensure that only appropriate workloads are scheduled on spot instances. This allows you to prioritize critical workloads on more stable instances, while running non-critical tasks on spot instances, further optimizing resource allocation.

- Taints: Use taints to mark nodes (e.g., spot instance nodes) so that only specific pods can be scheduled on them. This helps in isolating spot instances for lower-priority workloads and ensures that your critical workloads stay on on-demand instances.

- Tolerations: Use tolerations to allow specific workloads to be scheduled on tainted nodes. This combination of taints and tolerations enables Kubernetes to prioritize which workloads run on spot instances.

Example: Configuring taints and tolerations for a spot instance node group:

yaml
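A minimal sketch, assuming eksctl managed node groups. The taint key `spot`, the `lifecycle` label, and the on-demand group are hypothetical; pods meant for the spot group would need a matching toleration in their pod spec.

```yaml
# Sketch only: taint key, labels, and sizes are hypothetical.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster
  region: us-west-2
managedNodeGroups:
  - name: spot-ng
    spot: true
    instanceTypes: ["m5.large", "m5a.large", "m4.large"]
    minSize: 0
    maxSize: 10
    labels:
      lifecycle: spot
    taints:
      # Pods without a toleration for spot=true:NoSchedule stay off these nodes.
      - key: spot
        value: "true"
        effect: NoSchedule
  # Untainted on-demand group where critical workloads land by default.
  - name: on-demand-ng
    instanceType: m5.large
    minSize: 1
    maxSize: 3
    labels:
      lifecycle: on-demand
```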

This configuration ensures that only non-critical workloads with the corresponding tolerations are scheduled on the "spot-ng" node group, while critical applications are scheduled on other on-demand node groups.

4. Implement Instance Diversification and Availability Zones

As mentioned earlier, the use of multiple instance types and availability zones can dramatically increase the reliability of your Kubernetes cluster. When spot instances are reclaimed, the more diverse your instance types and availability zones, the higher the chances that your workload will remain operational.

- Multiple Availability Zones: Deploy nodes across multiple availability zones within your region to ensure that your workloads are not dependent on a single zone. This strategy increases resilience to zone failures and interruptions.

- Instance Diversification: By diversifying the instance types used in your Kubernetes cluster, you can optimize performance based on workload requirements, while minimizing the likelihood of all instances being interrupted at once.

Example: A diversified instance pool across multiple availability zones:

yaml
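A hedged sketch of a spot node group spread over three zones, again in eksctl's ClusterConfig format. The region, zone names, instance types, and sizes are assumptions.

```yaml
# Sketch only: zones, instance types, and sizes are hypothetical.
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: example-cluster
  region: us-west-2
availabilityZones: ["us-west-2a", "us-west-2b", "us-west-2c"]
managedNodeGroups:
  - name: spot-multi-az
    spot: true
    # Each instance type in each zone is a separate Spot capacity pool.
    instanceTypes: ["m5.large", "m5a.large", "m4.large"]
    availabilityZones: ["us-west-2a", "us-west-2b", "us-west-2c"]
    minSize: 3
    maxSize: 9
    desiredCapacity: 3
```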

This setup spreads the spot instance node group across three availability zones and pools, reducing the risk of losing all instances at once.

Troubleshooting Common Issues with Spot Instances

- Interruption Handling: Ensure that the termination handler is correctly configured and that pods are rescheduled effectively.

- Scaling Problems: If autoscaling isn’t triggering, check node labels, taints, and resource limits.

- Cost Overruns: Monitor your spot instance costs with AWS cost management tools and adjust bidding strategies if you’re overspending.

Spot Instance Backup Strategies

To mitigate the risk of sudden spot instance terminations, consider the following strategies:

- Use On-Demand Instances for Critical Workloads: Always maintain a baseline level of on-demand instances for essential services to ensure high availability.

- Automated Pod Rescheduling: Run workloads under controllers such as Deployments so that Kubernetes automatically reschedules pods from terminated spot instances onto available on-demand instances or other spot nodes.

- Persistent Storage for State: Use Amazon EBS or EFS to ensure that data is persisted and can survive instance interruptions.

Managing Spot Instance Interruptions in Kubernetes

Source: Sedai

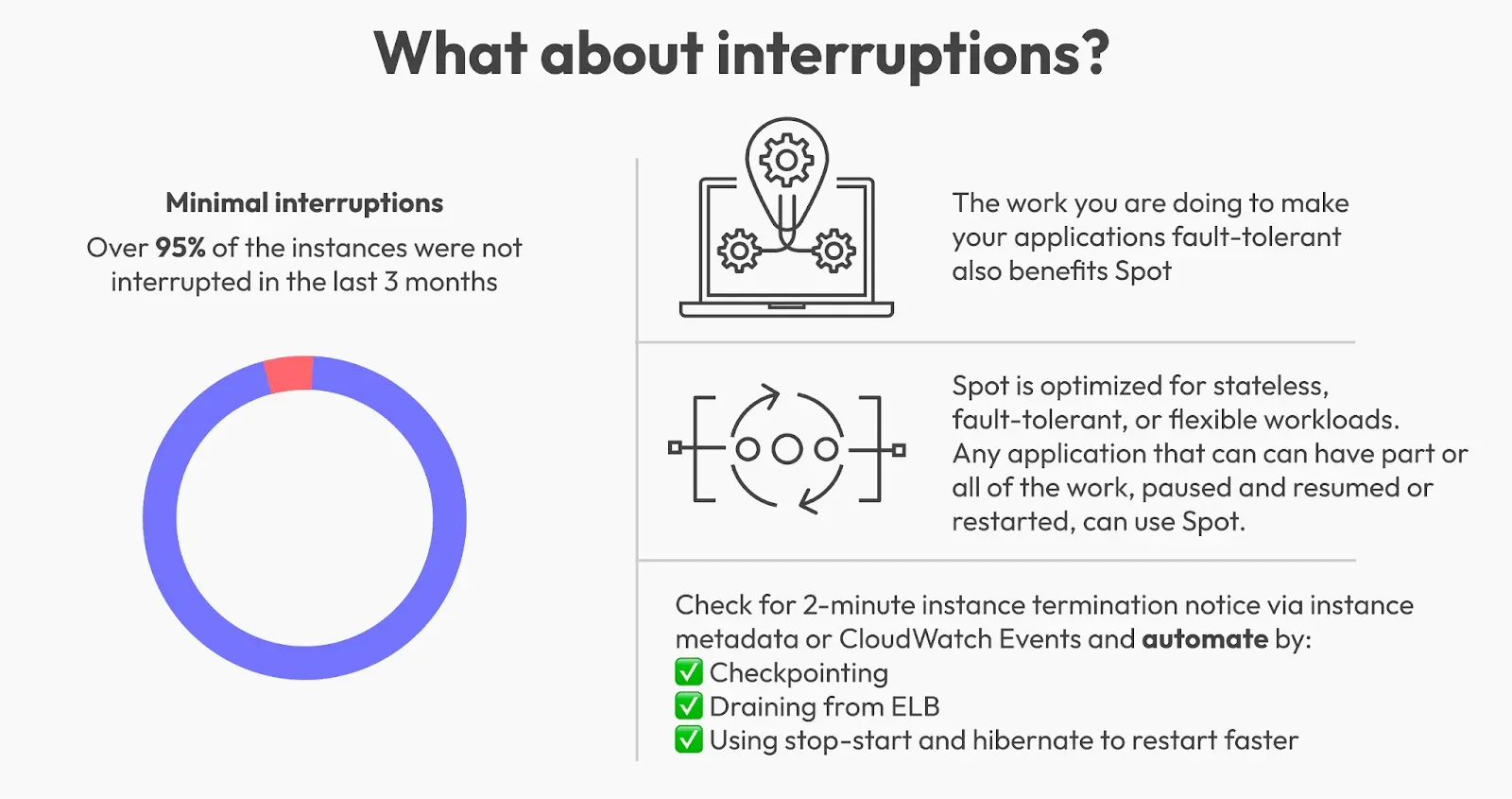

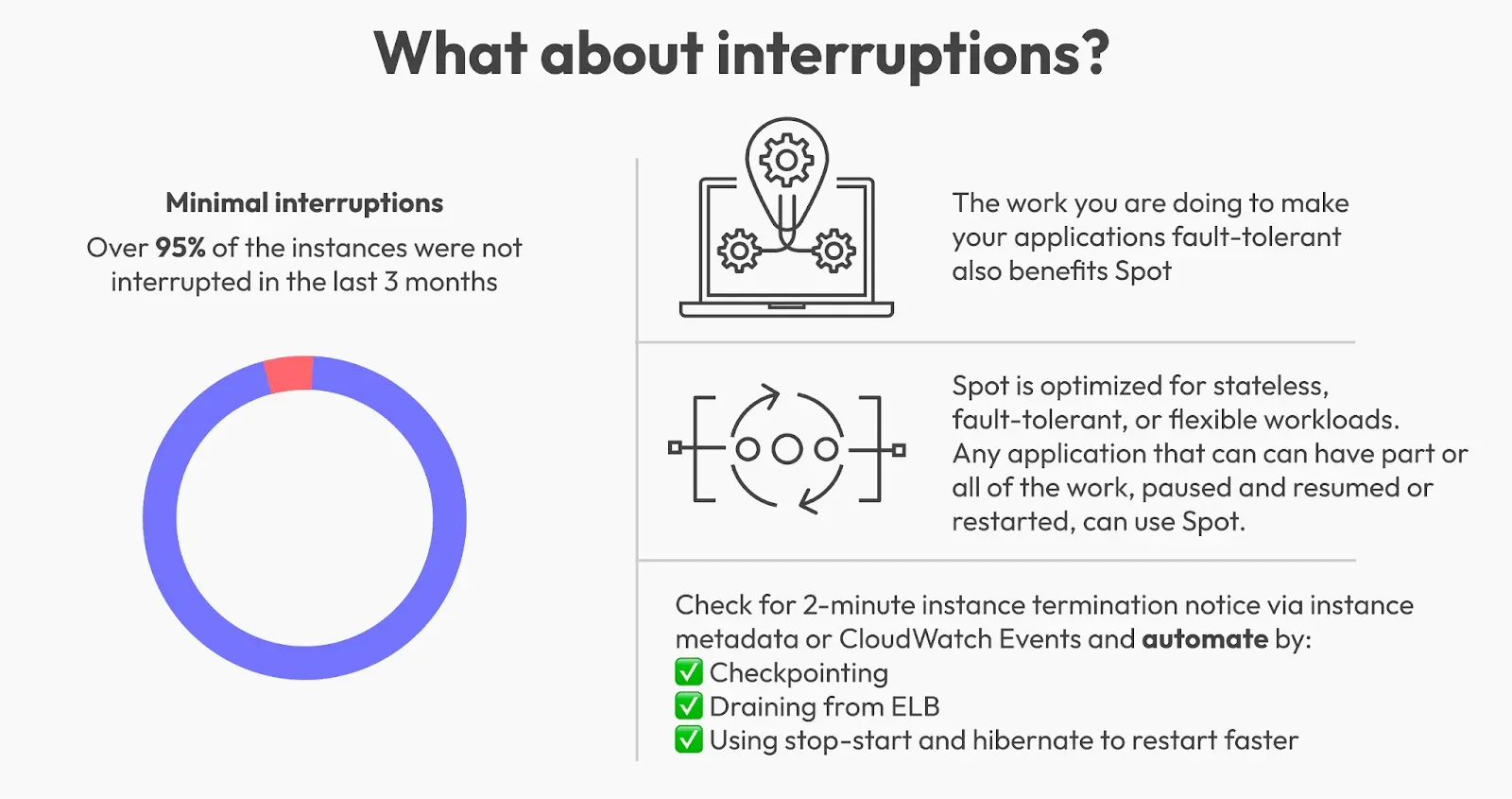

Spot instance interruptions are a reality; AWS can reclaim these instances with minimal notice. Preparing for this ensures your applications remain resilient. Let’s look at how to handle interruptions effectively.

Understanding Spot Instance Interruptions

AWS reclaims spot instances when it needs the capacity back, for example when demand for on-demand capacity spikes. When this happens, AWS issues an interruption notice two minutes before the instance is reclaimed. Handling these interruptions gracefully is crucial to avoiding service disruptions.

Deploying AWS Node Termination Handler

The AWS Node Termination Handler is a Kubernetes add-on that watches for interruption notices and cordons and drains the affected node, so that the scheduler can place its pods elsewhere before the instance is reclaimed. Here’s a simple setup guide:

- Install the Node Termination Handler: This handler listens for interruption notices and automates node draining, which triggers pod rescheduling.

- Configure Pod Affinity: By setting pod affinity and anti-affinity rules, you help balance workloads, ensuring redundancy even if nodes are terminated.
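The anti-affinity rule mentioned above can be sketched as follows. This assumes a Deployment labeled `app: example`; the name, image, replica count, and resource request are placeholders. A preferred (soft) rule is used so that pods can still schedule when few nodes are available.

```yaml
# Sketch only: names, image, and replica count are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: example-deployment
spec:
  replicas: 3
  selector:
    matchLabels:
      app: example
  template:
    metadata:
      labels:
        app: example
    spec:
      affinity:
        podAntiAffinity:
          # Prefer placing replicas on different nodes, so one
          # reclaimed spot node does not take down every replica.
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              podAffinityTerm:
                labelSelector:
                  matchLabels:
                    app: example
                topologyKey: kubernetes.io/hostname
      containers:
        - name: app
          image: nginx:1.27
          resources:
            requests:
              cpu: 100m
```

Changing `topologyKey` to `topology.kubernetes.io/zone` would spread replicas across availability zones instead of individual nodes.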

With Sedai’s platform, interruption handling becomes even more proactive. Using predictive analytics, Sedai anticipates interruptions before they occur and optimizes resource distribution, reducing dependency on manual intervention (Explore Sedai’s interruption handling).

Spot Instance Monitoring and Metrics

To ensure smooth operation with spot instances, continuous monitoring is essential. Key AWS metrics for spot instances include:

- EC2 Spot Instance Metrics: Track instance lifecycle, spot price, and interruption notices.

- CloudWatch Alarms: Set up alarms to notify you when spot instance availability changes or when an interruption is imminent.

- Cluster Metrics: Leverage Kubernetes tools like Prometheus to monitor spot node utilization, pod evictions, and resource allocation.

Managing Spot Instance Quotas

AWS enforces per-region quotas on spot instance requests, measured in vCPUs. To manage these quotas effectively, you can:

- Monitor Spot Instance Usage: Regularly monitor your usage through AWS’s console and CloudWatch metrics to stay within limits.

- Request Limit Increases: If you anticipate high demand for spot instances, request quota increases to scale your cluster as needed.

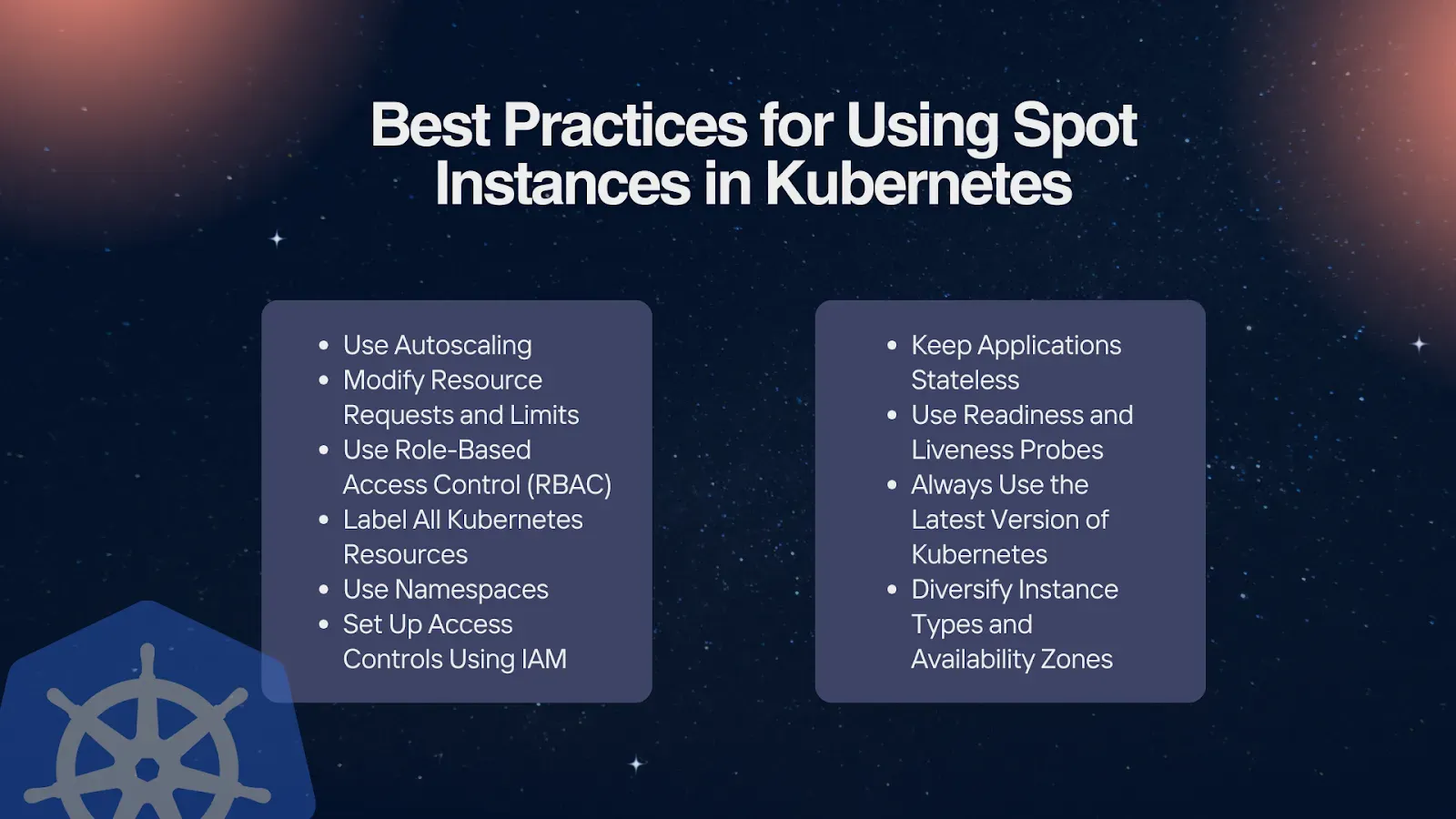

Best Practices for Using Spot Instances in Kubernetes

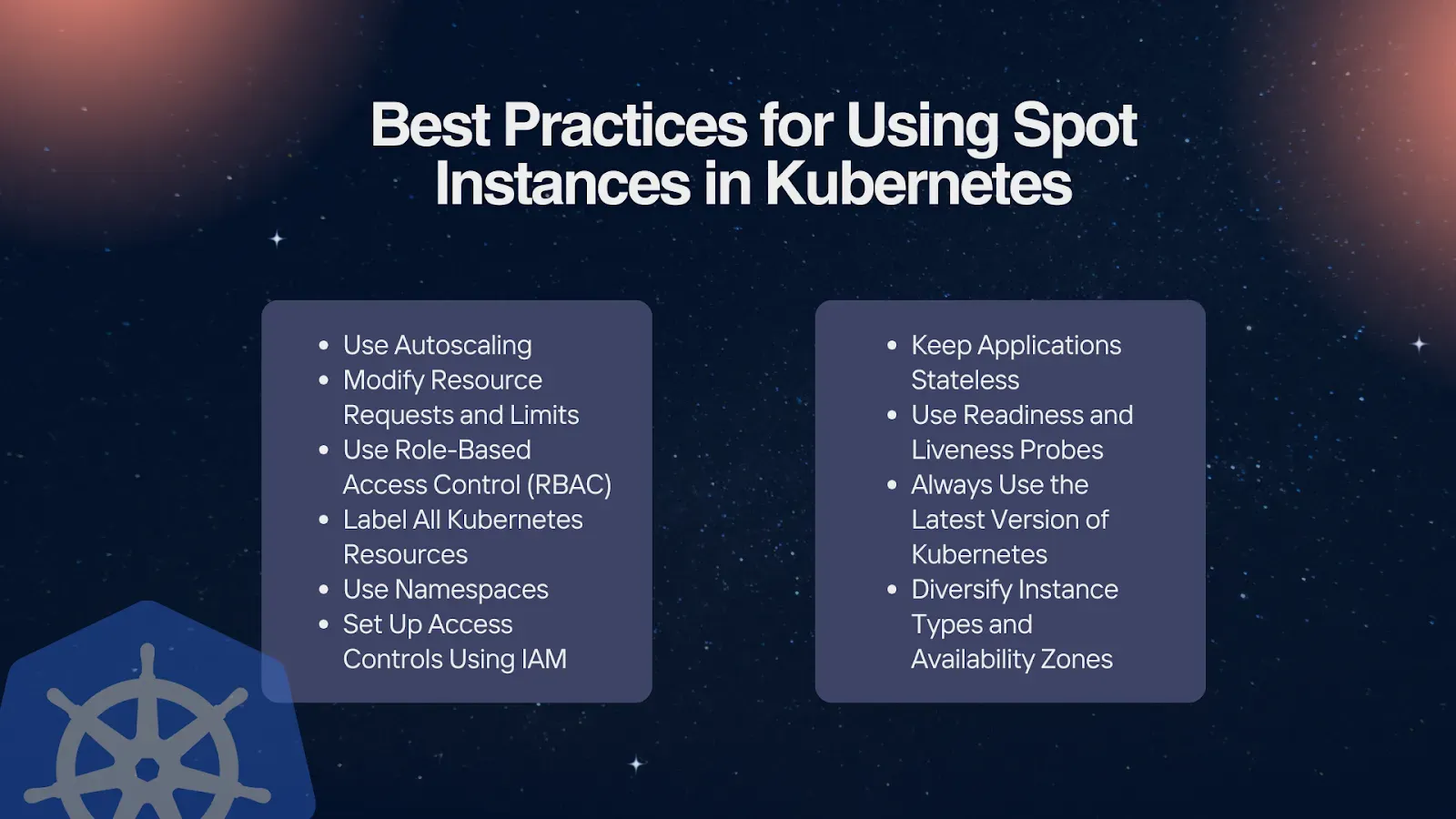

Adopting best practices can significantly reduce the risk of disruptions when running spot instances in Kubernetes. Here are some tried-and-tested strategies.

Diversifying Instance Types and Availability Zones

Using multiple instance types across different availability zones improves resilience and decreases the risk of spot interruptions. When you diversify instance types, your cluster can adjust more flexibly during interruptions by drawing from a broader capacity pool.

Optimizing Node Groups for Spot Instances

Node groups play a critical role in managing workloads on spot instances. Configure node groups with proper labels, taints, and tolerations to ensure that workloads are efficiently handled and to control which applications are assigned to spot nodes.

- Node Group Labels: Use labels to identify node types and prioritize spot nodes.

- Taints and Tolerations: Set taints to ensure only specific workloads are assigned to spot nodes.

Balancing Cost Savings with Reliability

While spot instances offer impressive cost savings, it’s essential to maintain reliability for critical workloads. Use a blend of spot and on-demand instances to ensure high availability. Sedai’s real-time monitoring helps maintain this balance by optimizing node groups and making informed instance selections, allowing you to maintain stability without excessive costs.

Deploying and Scaling Applications on Spot Instances

Running applications on spot instances requires specific configurations to handle scalability and potential interruptions effectively.

Creating and Deploying Applications on Spot Nodes

When deploying applications on spot nodes, it’s essential to configure for resilience. Use labels and taints to direct non-critical applications to spot nodes, ensuring that mission-critical applications are reserved for stable, on-demand instances.
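A minimal sketch of a non-critical workload directed to spot nodes. It assumes the spot nodes carry a `lifecycle: spot` label and a `spot=true:NoSchedule` taint; both are hypothetical conventions, as are the names and image.

```yaml
# Sketch only: label, taint key, names, and image are hypothetical.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: batch-worker
spec:
  replicas: 4
  selector:
    matchLabels:
      app: batch-worker
  template:
    metadata:
      labels:
        app: batch-worker
    spec:
      # Only schedule onto nodes labeled as spot capacity.
      nodeSelector:
        lifecycle: spot
      # Tolerate the taint that keeps other pods off spot nodes.
      tolerations:
        - key: spot
          operator: Equal
          value: "true"
          effect: NoSchedule
      containers:
        - name: worker
          image: nginx:1.27
          resources:
            requests:
              cpu: 250m
              memory: 256Mi
```

Critical workloads simply omit the toleration, so the taint keeps them on on-demand nodes.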

Using Kubectl for Deployment and Scaling Activities

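Kubectl commands make managing deployments and scaling on spot instances efficient. Typical examples include the following (the file, deployment, and node names are placeholders):

- kubectl apply -f deployment.yaml: create or update the resources defined in a manifest.

- kubectl scale deployment example-deployment --replicas=5: change the number of pod replicas.

- kubectl get nodes -l eks.amazonaws.com/capacityType=SPOT: list spot nodes, using the label that EKS managed node groups apply.

- kubectl drain <node-name> --ignore-daemonsets --delete-emptydir-data: evict pods from a node ahead of maintenance or removal.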

Monitoring and Adjusting Scaling Strategies with Sedai

Sedai’s platform enhances application scaling by dynamically adjusting scaling strategies based on real-time performance and usage. This means applications maintain stability, even during rapid scaling, without additional manual intervention.

Comparison of Cloud Providers’ Spot Instance Offerings

Here is a comparison of the top cloud providers’ spot instance offerings:

Amazon Elastic Compute Cloud (EC2)

Amazon EC2 Spot Instances advertise discounts of up to 90%. In reality, the discounts vary significantly per region, type, and even instance size and end up being much more modest if you require a specific instance type/size. They can also change quickly (their pricing tool is updated every 5 minutes), and they allow you to set a maximum price you are willing to pay (with your VM terminated if the market price exceeds that). In general, the discounts that are obtained for the tested instances are 50% or less.

Even though the discounts are not as spectacular as other providers, Amazon offers a very generous 2-minute preemption warning, which should allow most tasks to gracefully end (and perhaps even time for a replacement instance to spin up). In addition, AWS makes it easy to set up their Auto Scaling with Spot instances, requiring less work to include Spot instances in your setup.

Google Compute Engine (GCE)

GCP Spot VMs offer some of the most generous discounts for computing resources among providers. What's more, the prices are not very different from region to region and are stable enough (can only change up to once per month) for them to be on the main price list with no need for special pricing tools. Also, the discounts are only different per type, regardless of instance size. All this allows you to plan ahead and easily figure out what you are paying.

From the table, you can see some amazing savings, with the C3 being a big standout. That's the latest Sapphire Rapids type, so it would seem that Google's customers have not yet started using it en masse, and there is a lot of spare capacity. At full price, we saw that the C3 was not great value, but at a 90% discount, this should be a whole different discussion!

The GCP Spot VMs we are using seem to have a lifetime measured often in hours and sometimes in days, depending on the type and region. However, the preemption warning Google offers is 30 seconds, which is less generous than AWS.

Microsoft Azure

Azure Spot Virtual Machines behave like Spot Instances on AWS, with prices that can vary over time and preemption when the market price rises above your set maximum. However, the actual discounts are much deeper than on AWS for most types. The preemption warning is 30 seconds, the same as GCP's, so the overall pattern is quite similar to GCP's.

Multi-Cloud Spot Instance Strategies

While AWS Spot Instances are a great solution, consider expanding your spot instance strategy to include other cloud providers for added resilience:

- Google Cloud Spot VMs: Use a hybrid approach with Google Cloud’s Spot VMs (the successor to Preemptible VMs) alongside AWS spot instances for workload distribution.

- Azure Spot VMs: If you’re using a multi-cloud Kubernetes environment, consider Azure’s spot VMs as a complementary cost-saving strategy.

- Cross-Cloud Autoscaling: Tools like Kubernetes Federation or multi-cloud Kubernetes environments can help you manage resources and scaling across providers.

Real World Case Studies

Here are two notable case studies:

- Case Study 1: Delivery Hero, one of the world's largest food delivery networks, successfully transitioned their entire Kubernetes infrastructure to spot instances, demonstrating that spot instances can work at a massive scale. Visit here to know more.

- Case Study 2: ITV, the UK's largest commercial broadcaster, implemented spot instances to handle growing viewership while optimizing costs during the pandemic. Visit here to know more.

Maximizing Efficiency with Kubernetes Spot Instances

Spot instances provide a powerful solution for Kubernetes clusters, combining cost savings with scalable resources. By following best practices, setting up proper node groups, and implementing autoscaling, you can effectively manage your Kubernetes workloads on spot instances.

Sedai’s Autonomous Optimization Platform brings an extra layer of resilience, enabling you to predict interruptions, optimize configurations, and maintain stability even with the unpredictable nature of spot instances. If you're looking to scale efficiently while minimizing costs, adopting advanced scheduling and scaling practices with Sedai is the key to success.

Schedule a demo today to see how Sedai can transform your Kubernetes operations and help you achieve a cost-effective, reliable environment with minimal manual intervention.


FAQs

What are spot instances in Kubernetes, and why are they cost-effective?

Spot instances are discounted excess capacity offered by cloud providers like AWS, which makes them significantly cheaper than on-demand instances. In Kubernetes, they let you scale affordably, but AWS can reclaim them with minimal notice. Learn more about maximizing cost-efficiency with spot instances on Sedai’s blog.

How can Sedai’s platform improve the management of spot instances in Kubernetes?

Sedai’s platform uses real-time monitoring and AI-driven optimization to predict interruptions, adjust configurations, and maintain stability across clusters using spot instances. For an in-depth look at Sedai’s autonomous optimization, check out this blog post.

What are the prerequisites for setting up Kubernetes on spot instances?

Setting up Kubernetes on spot instances requires AWS IAM roles, permissions, and Kubernetes tools like kubectl and eksctl. Proper access to EC2 spot instances is essential for node group configuration. For more setup guidance, read through Sedai’s blog on Kubernetes configurations.

How does the Cluster Autoscaler help manage spot instances in Kubernetes?

The Cluster Autoscaler automatically scales your cluster based on demand, allowing you to prioritize spot instances for cost savings. Sedai’s platform further enhances this by dynamically adjusting instance types to match real-time demand. Explore Sedai’s insights on autoscaling in their blog.

How can I handle spot instance interruptions in Kubernetes?

Spot instance interruptions are managed by tools like the AWS Node Termination Handler, which drains nodes when an interruption notice arrives so that pods are rescheduled before the instances are terminated. Sedai’s predictive analytics can help anticipate and manage these interruptions more proactively. Learn about handling interruptions on Sedai’s blog.

What are best practices for using spot instances in Kubernetes?

Best practices include diversifying instance types, using multiple availability zones, and balancing spot and on-demand instances. Sedai’s platform helps automate these practices by optimizing node group settings. Discover more best practices on Sedai’s blog.

How does Sedai’s platform optimize pod scheduling for spot instances?

Sedai’s platform manages pod scheduling by adjusting affinity and toleration settings, ensuring an even distribution of workloads. This helps minimize disruption during interruptions. For a detailed guide on Sedai’s scheduling solutions, visit their blog.

What is the role of tolerations and node affinity in managing spot instances?

Tolerations and node affinity allow you to prioritize certain workloads on spot nodes while keeping critical workloads on more stable instances. Read more about managing workloads effectively on Sedai’s blog.

How can I scale applications effectively on spot instances in Kubernetes?

Use tools like kubectl for manual scaling or rely on Sedai’s platform, which dynamically scales applications based on real-time demand. This approach ensures stability without excessive costs. Explore Sedai’s scaling strategies in their blog.

How does Sedai ensure cost savings and reliability with spot instances?

Sedai’s platform continuously monitors, predicts, and optimizes configurations, balancing cost savings with reliability by minimizing manual interventions and optimizing for cost-efficiency. For more insights, check out Sedai’s blog.