Autoscaling in EMR is key to optimizing performance and cost efficiency for dynamic workloads. By adjusting the number of EC2 instances based on demand, you allocate resources only when they are needed and avoid over-provisioning. Properly configured scaling policies can improve cost predictability and maintain consistent performance across large data processing tasks. Regular monitoring and fine-tuning of scaling thresholds are crucial for continuous optimization.

Managing resource allocation in an EMR cluster can quickly become a challenge when you’re dealing with fluctuating data processing workloads.

Without proper autoscaling, teams often experience performance slowdowns, longer job completion times, or unnecessary costs due to idle resources.

AWS has highlighted this gap too, noting that EMR Managed Scaling can lower cluster costs by up to 19% and improve utilization by around 15% by adjusting capacity every minute instead of relying on a fixed cluster size.

Many clusters end up either over-provisioned during quieter periods or completely stretched during peak demand, which ultimately leads to inefficiencies.

Autoscaling in EMR helps solve this by automatically adjusting the number of EC2 instances based on real-time metrics. This ensures your cluster stays responsive, cost-effective, and ready to handle sudden increases in workload without manual intervention.

In this blog, you’ll get a clear understanding of how EMR autoscaling works and learn practical setup steps, best practices, and optimization strategies to help you use resources more efficiently while keeping costs under control.

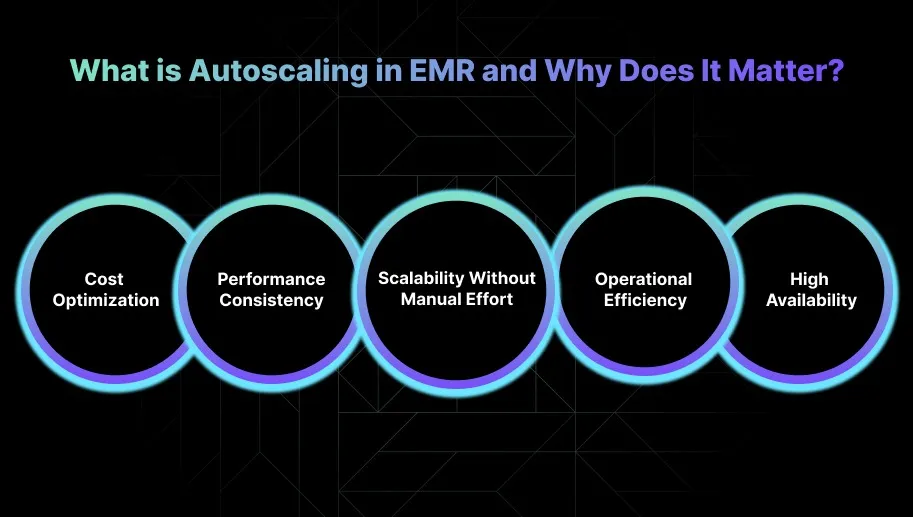

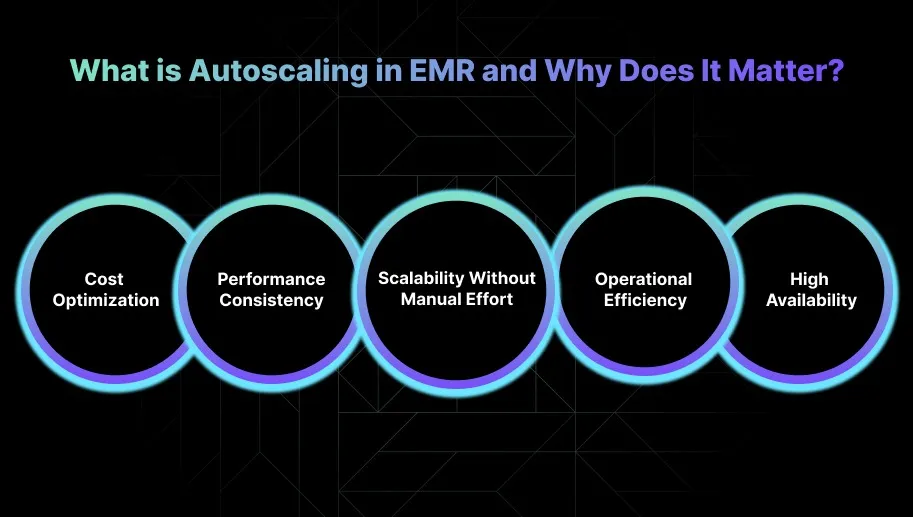

What is Autoscaling in EMR and Why Does It Matter?

Autoscaling in Amazon Elastic MapReduce (EMR) automatically adjusts the number of EC2 instances in your cluster based on workload demand. It helps the cluster scale resources up or down in real time, so you always have enough compute power to process large datasets.

This makes the cluster more efficient and keeps costs under control by preventing unnecessary over-provisioning. Here’s why autoscaling in EMR matters:

1. Cost Optimization

Autoscaling in EMR helps manage cloud spend by adjusting the number of EC2 instances based on actual workload demand.

It ensures you only pay for the resources you need at any moment, which is especially important for large data workloads where compute usage can change quickly.

2. Performance Consistency

For data-heavy jobs, limited resources can slow down tasks or cause failures. Autoscaling adds instances during peak demand so your workloads run smoothly without hitting resource limits. This is essential in production environments where performance matters.

3. Scalability Without Manual Effort

Managing large EMR clusters manually becomes challenging as data volumes grow. Autoscaling handles capacity adjustments through predefined policies, allowing you to focus on improving algorithms and infrastructure rather than managing cluster size.

4. Operational Efficiency

In traditional setups, engineers spend time monitoring resources and resizing clusters. Autoscaling simplifies this by adjusting capacity automatically, reducing operational overhead, and giving teams more time to work on higher-value improvements.

5. High Availability

Autoscaling also helps maintain availability during traffic or processing spikes. It ensures the cluster can handle unexpected demand without performance drops, which is critical for mission-critical applications that require consistent reliability.

Once you understand why autoscaling matters, it becomes easier to see where it delivers the most value.

Popular Use Cases of Autoscaling in EMR

Autoscaling in EMR plays an essential role in managing dynamic, data-driven workloads efficiently. By adjusting resources automatically based on actual demand, it helps maintain strong performance while keeping costs under control.

Below are the most common scenarios where autoscaling provides meaningful value for engineering teams.

1. Big Data Processing

EMR is widely used for large-scale data processing tasks such as ETL, batch processing, and analytics. Autoscaling manages fluctuating workloads by adjusting cluster size based on data volume.

It allows the cluster to expand for heavy processing and contract when demand drops, improving both cost efficiency and throughput.

2. Real-Time Data Streams

Workloads that involve real-time data ingestion and processing, such as log analysis or stream processing with Kafka or Spark Streaming, benefit from autoscaling as the cluster grows during high data velocity and shrinks when traffic slows.

This ensures resources are available when needed and avoids paying for excess capacity during quieter periods.

3. Machine Learning and Data Science Workflows

Machine learning and AI workloads often require variable compute resources to handle large datasets or run compute-intensive algorithms.

Autoscaling ensures that the necessary compute power is available for model training or inference, and scales down once processing completes, improving both performance and cost control.

4. Log and Event Data Processing

For log aggregation and event-driven pipelines, autoscaling helps EMR adapt to spikes in data ingestion while reducing capacity during low activity. This is especially valuable in continuous logging environments where data volume can shift from hour to hour.

5. Data Warehousing with Apache Hive

When using Apache Hive for large-scale data warehousing, autoscaling ensures the cluster has enough capacity to handle complex SQL queries and heavy data transformations across sizable datasets.

After workloads finish, scaling in helps reduce costs without compromising performance.

6. Ad-Hoc Data Analysis

Ad-hoc analysis often requires short bursts of compute power for complex queries on large datasets. Autoscaling makes this smooth by allocating the resources needed on demand and scaling back afterward, preventing idle capacity and unnecessary spend.

7. Backup and Disaster Recovery

Autoscaling also supports backup and disaster recovery workflows by providing additional resources during full backup operations or recovery tasks.

Once these tasks are completed, the cluster scales down again, ensuring efficiency without interrupting critical processes.

Once the use cases are clear, the process of setting up autoscaling becomes much easier to follow.

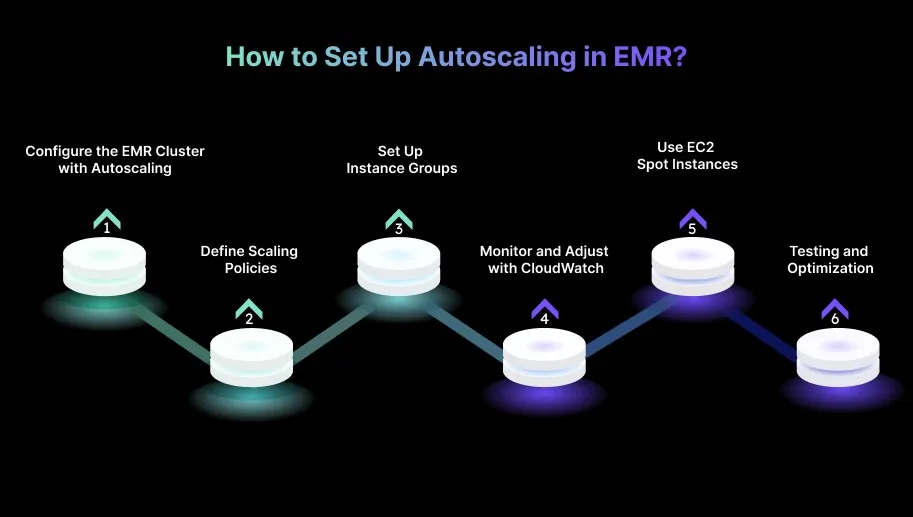

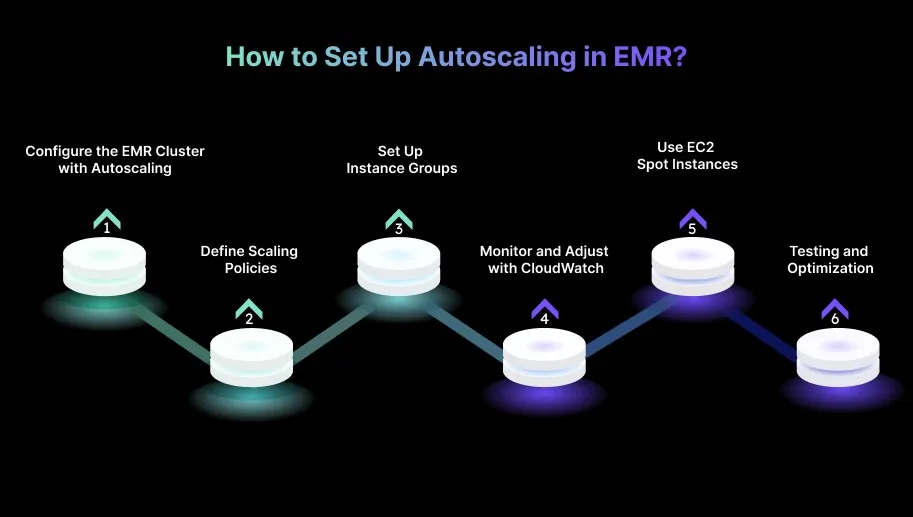

How to Set Up Autoscaling in EMR?

Setting up autoscaling in EMR is an important part of optimizing resource usage for big data workloads. The steps below will help you configure autoscaling effectively so your EMR cluster adapts to demand without manual effort.

1. Configure the EMR Cluster with Autoscaling

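Launch your EMR cluster on Amazon EC2 instances and choose a scaling option during setup: EMR managed scaling, which adjusts capacity automatically within the limits you set, or a custom automatic scaling policy attached to individual instance groups. Choose the right instance types and define the minimum and maximum capacity based on your workload needs.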
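To show what "minimum and maximum" look like in practice, the sketch below builds the payload for EMR managed scaling as a plain Python dictionary, following the shape of the `ManagedScalingPolicy` parameter of the EMR `PutManagedScalingPolicy` API. The helper name `managed_scaling_policy`, its sanity checks, and the example limits are illustrative assumptions, not AWS defaults or the full set of rules EMR enforces.

```python
from typing import Optional

# Unit types accepted by the EMR ComputeLimits structure.
VALID_UNIT_TYPES = ("Instances", "InstanceFleetUnits", "VCPU")


def managed_scaling_policy(min_units: int, max_units: int,
                           max_on_demand: Optional[int] = None,
                           max_core: Optional[int] = None,
                           unit_type: str = "Instances") -> dict:
    """Build a dict shaped like the ManagedScalingPolicy argument of the
    EMR PutManagedScalingPolicy API, after basic (not exhaustive) checks."""
    if unit_type not in VALID_UNIT_TYPES:
        raise ValueError(f"unsupported unit type: {unit_type}")
    if not 1 <= min_units <= max_units:
        raise ValueError("expected 1 <= min_units <= max_units")
    limits = {
        "UnitType": unit_type,
        "MinimumCapacityUnits": min_units,
        "MaximumCapacityUnits": max_units,
    }
    # Optional caps: On-Demand capacity and core-node capacity are each kept
    # at or below these limits, and both must fit inside the overall maximum.
    if max_on_demand is not None:
        if not 0 <= max_on_demand <= max_units:
            raise ValueError("max_on_demand must be between 0 and max_units")
        limits["MaximumOnDemandCapacityUnits"] = max_on_demand
    if max_core is not None:
        if not 1 <= max_core <= max_units:
            raise ValueError("max_core must be between 1 and max_units")
        limits["MaximumCoreCapacityUnits"] = max_core
    return {"ComputeLimits": limits}


# Illustrative limits: 2 to 20 instances, at most 8 On-Demand and 4 core nodes.
policy = managed_scaling_policy(min_units=2, max_units=20,
                                max_on_demand=8, max_core=4)

# With boto3 installed and AWS credentials configured, the payload could be
# applied to an existing cluster (the cluster ID is a placeholder):
# import boto3
# boto3.client("emr").put_managed_scaling_policy(
#     ClusterId="j-EXAMPLE", ManagedScalingPolicy=policy)
```

Keeping the core and On-Demand caps below the overall maximum means the remaining headroom is filled by task nodes, which are the easiest nodes to add and remove.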

2. Define Scaling Policies

- Scale-out Policy: Set the conditions for adding more nodes, such as CPU or memory thresholds or custom CloudWatch metrics. For example, you can scale out when CPU usage stays above 80% for five minutes.

- Scale-in Policy: Specify when to remove nodes. A common approach is to scale in when CPU or memory usage remains below a lower threshold, such as 20 percent, for a set duration.
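The scale-out and scale-in rules above can be sketched as an EMR custom automatic scaling policy. This sketch uses `YARNMemoryAvailablePercentage`, a memory metric EMR publishes to CloudWatch, as the utilization signal, so "less than 20% free" stands in for "over 80% used". The helper `alarm_rule`, the rule names, thresholds, step sizes, and capacity limits are illustrative assumptions to be tuned to your own workload.

```python
def alarm_rule(name: str, comparison: str, threshold: float, adjustment: int,
               evaluation_periods: int, cooldown_s: int = 300,
               metric: str = "YARNMemoryAvailablePercentage") -> dict:
    """One rule in the shape EMR custom automatic scaling expects:
    a CloudWatch alarm (Trigger) plus a simple scaling action (Action)."""
    return {
        "Name": name,
        "Action": {
            "SimpleScalingPolicyConfiguration": {
                "AdjustmentType": "CHANGE_IN_CAPACITY",
                "ScalingAdjustment": adjustment,  # positive adds nodes, negative removes
                "CoolDown": cooldown_s,           # seconds before another action
            }
        },
        "Trigger": {
            "CloudWatchAlarmDefinition": {
                "ComparisonOperator": comparison,
                "EvaluationPeriods": evaluation_periods,
                "MetricName": metric,
                "Namespace": "AWS/ElasticMapReduce",
                "Period": 300,  # one evaluation period = 5 minutes
                "Statistic": "AVERAGE",
                "Threshold": float(threshold),
                "Unit": "PERCENT",
                "Dimensions": [{"Key": "JobFlowId", "Value": "${emr.clusterId}"}],
            }
        },
    }


# Scale out by 2 when under 20% of YARN memory is free (roughly "over 80% used")
# for one 5-minute period; scale in by 1 when over 80% is free (under 20% used)
# for three consecutive periods (15 minutes).
scaling_policy = {
    "Constraints": {"MinCapacity": 2, "MaxCapacity": 10},
    "Rules": [
        alarm_rule("ScaleOutLowFreeMemory", "LESS_THAN", 20, 2, evaluation_periods=1),
        alarm_rule("ScaleInHighFreeMemory", "GREATER_THAN", 80, -1, evaluation_periods=3),
    ],
}
```

With boto3, the dictionary would be passed as the `AutoScalingPolicy` argument of `put_auto_scaling_policy` together with the cluster and instance group IDs; the cluster also needs an IAM role for automatic scaling (for example `EMR_AutoScaling_DefaultRole`). Note the asymmetry: scale-out reacts after one period, while scale-in waits for three, so the cluster does not shrink during a short lull.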

3. Set Up Instance Groups

- Instance Groups: Create groups for core nodes and task nodes depending on how your cluster is structured.

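- Task Node Autoscaling: Use autoscaling for task nodes when handling temporary or batch workloads. Task nodes run processing but store no HDFS data, so they can be added and removed quickly; scale them based on workload size, such as the number of pending YARN containers.

- Core Node Autoscaling: Core nodes run HDFS as well as processing tasks, and removing one means moving its HDFS data first, so scale them more conservatively, based on performance or storage requirements.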
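A minimal sketch of this split, assuming an instance-group (rather than instance-fleet) configuration and a single instance type (`m5.xlarge`, chosen only for illustration). The dictionaries follow the `InstanceGroups` shape used by the EMR `RunJobFlow` API; the helper functions `instance_groups` and `scalable_groups` are hypothetical.

```python
def instance_groups(instance_type: str = "m5.xlarge",
                    core_count: int = 2, task_count: int = 2) -> list:
    """Primary, core, and task groups in the shape of the InstanceGroups
    list accepted by the EMR RunJobFlow API (Instances parameter)."""
    return [
        # A single primary node coordinates the cluster; it is not scaled.
        {"Name": "Primary", "InstanceRole": "MASTER", "Market": "ON_DEMAND",
         "InstanceType": instance_type, "InstanceCount": 1},
        # Core nodes hold HDFS blocks: keep them On-Demand and resize cautiously.
        {"Name": "Core", "InstanceRole": "CORE", "Market": "ON_DEMAND",
         "InstanceType": instance_type, "InstanceCount": core_count},
        # Task nodes only run compute, so they suit aggressive scaling and Spot.
        {"Name": "Task", "InstanceRole": "TASK", "Market": "SPOT",
         "InstanceType": instance_type, "InstanceCount": task_count},
    ]


def scalable_groups(groups: list) -> list:
    """Names of the groups an autoscaling policy would usually target."""
    return [g["Name"] for g in groups if g["InstanceRole"] in ("CORE", "TASK")]


groups = instance_groups()
print(scalable_groups(groups))
```

The list would go into the `Instances` argument of `run_job_flow` (for example `Instances={"InstanceGroups": groups, ...}`), with the more aggressive scaling policy attached to the task group.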

4. Monitor and Adjust with CloudWatch

- Set CloudWatch Alarms: Use CloudWatch to track CPU, memory, disk usage and other performance signals. Create alarms that trigger scaling actions when specific thresholds are reached.

- Metrics to Watch: Focus on CPU usage, disk I/O, and memory utilization. Update your scaling policies as you learn more about real workload behavior.

- Review and Refine: Review CloudWatch metrics and the cluster's scaling activity regularly to make sure scaling actions match workload patterns, and tune your thresholds when necessary.
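One way to review thresholds is to replay exported metric history (for example, datapoints fetched with CloudWatch `GetMetricData`) and count how often a rule would have fired. The sketch below is a simplified model of alarm evaluation, assuming that N consecutive breaching datapoints put the alarm in the ALARM state; real CloudWatch alarms also handle missing data and "M out of N" datapoint settings. The sample values are hypothetical.

```python
import operator

# Map EMR/CloudWatch comparison operator names to Python comparisons.
COMPARATORS = {
    "GREATER_THAN": operator.gt,
    "GREATER_THAN_OR_EQUAL": operator.ge,
    "LESS_THAN": operator.lt,
    "LESS_THAN_OR_EQUAL": operator.le,
}


def alarm_states(history, threshold, comparison, evaluation_periods):
    """Replay metric datapoints (oldest first) and report, for each one,
    whether the last `evaluation_periods` datapoints all breached the
    threshold. Simplified: ignores missing data and M-of-N alarms."""
    breaches = COMPARATORS[comparison]
    states, streak = [], 0
    for value in history:
        streak = streak + 1 if breaches(value, threshold) else 0
        states.append(streak >= evaluation_periods)
    return states


# Hypothetical 5-minute averages of a utilization metric, in percent.
history = [70, 85, 90, 60, 82, 88, 91]
states = alarm_states(history, 80, "GREATER_THAN", evaluation_periods=2)
print(sum(states), "of", len(states), "periods in ALARM")
```

Here the rule would be in the ALARM state for 3 of 7 periods; if that looks too frequent for the real workload, raise the threshold or the number of evaluation periods and replay again.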

5. Use EC2 Spot Instances (Optional)

You can reduce costs by adding Spot Instances to your autoscaling configuration, especially for flexible task node workloads. Make sure your policies include a fallback to On-Demand instances to avoid disruptions if Spot capacity becomes unavailable.
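A minimal sketch of a Spot task setup with a fallback, assuming the cluster uses instance fleets (an alternative to instance groups; a cluster uses one or the other). The dictionary follows the `InstanceFleetConfig` shape of the EMR API; the helper `spot_task_fleet`, the instance types, target capacity, and 10-minute timeout are illustrative assumptions. As we understand it, this timeout governs provisioning when the fleet is launched, so check the EMR documentation for how later resizes and Spot interruptions are handled.

```python
def spot_task_fleet(instance_types, target_spot_units=8,
                    target_on_demand_units=0, timeout_minutes=10):
    """Task instance fleet (EMR InstanceFleetConfig shape) that prefers Spot
    capacity and switches to On-Demand if Spot cannot be provisioned in time."""
    if not instance_types:
        raise ValueError("list at least one instance type")
    if not 5 <= timeout_minutes <= 1440:
        raise ValueError("timeout must be between 5 and 1440 minutes")
    return {
        "Name": "SpotTaskFleet",
        "InstanceFleetType": "TASK",
        "TargetOnDemandCapacity": target_on_demand_units,
        "TargetSpotCapacity": target_spot_units,
        # Several similar instance types give EC2 more Spot pools to draw from.
        "InstanceTypeConfigs": [
            {"InstanceType": t, "WeightedCapacity": 1} for t in instance_types
        ],
        "LaunchSpecifications": {
            "SpotSpecification": {
                "TimeoutDurationMinutes": timeout_minutes,
                # Fall back to On-Demand if Spot capacity isn't available in time.
                "TimeoutAction": "SWITCH_TO_ON_DEMAND",
                "AllocationStrategy": "capacity-optimized",
            }
        },
    }


fleet = spot_task_fleet(["m5.xlarge", "m5a.xlarge", "m6i.xlarge"])
```

Diversifying across several similar instance types, rather than depending on one, is the main lever for keeping Spot capacity available.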

6. Testing and Optimization

- Test Scaling Behavior: Run high-demand simulations, such as large batch jobs, to see how the cluster scales in practice. Confirm that scaling actions occur smoothly without interrupting processing.

- Optimize for Cost: Adjust scaling thresholds and instance counts based on observed usage patterns. Fine-tune CloudWatch alarms to eliminate unnecessary scaling and keep resource usage efficient.
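Before testing against a live cluster, a toy simulation can show how thresholds and cooldowns interact. The sketch below is not EMR's scaling algorithm: it assumes utilization is simply demand divided by node count, that new nodes are available instantly, and that the demand trace (in node-equivalents per period) is hypothetical. `Policy` and `simulate` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    min_nodes: int
    max_nodes: int
    scale_out_at: float  # utilization above this triggers scale-out
    scale_in_at: float   # utilization below this triggers scale-in
    step: int = 1        # nodes added or removed per action
    cooldown: int = 0    # periods to wait after any scaling action


def simulate(demand, policy, start_nodes):
    """Replay a demand trace (node-equivalents per period) against a
    threshold policy; return the node count per period and the number
    of scaling actions taken. A toy model: no launch delay, no failures."""
    nodes, wait, actions, timeline = start_nodes, 0, 0, []
    for d in demand:
        utilization = d / nodes
        if wait > 0:
            wait -= 1  # still cooling down from the last action
        elif utilization > policy.scale_out_at and nodes < policy.max_nodes:
            nodes = min(policy.max_nodes, nodes + policy.step)
            actions, wait = actions + 1, policy.cooldown
        elif utilization < policy.scale_in_at and nodes > policy.min_nodes:
            nodes = max(policy.min_nodes, nodes - policy.step)
            actions, wait = actions + 1, policy.cooldown
        timeline.append(nodes)
    return timeline, actions


# An oscillating trace: the same thresholds with and without a cooldown.
trace = [2, 9, 2, 9, 2, 9]
print(simulate(trace, Policy(2, 10, 0.8, 0.4, step=2), start_nodes=4))
# → ([4, 6, 4, 6, 4, 6], 5)
print(simulate(trace, Policy(2, 10, 0.8, 0.4, step=2, cooldown=2), start_nodes=4))
# → ([4, 6, 6, 6, 4, 4], 2)
```

On this trace the cooldown cuts scaling actions from five to two, at the cost of lagging behind demand for a couple of periods. That is the trade-off threshold and cooldown tuning has to balance.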

Smart Practices to Optimize Autoscaling in EMR for Efficiency

Optimizing autoscaling in EMR is essential for managing resources efficiently while keeping costs in check.

By applying the right strategies, you can ensure scaling reacts accurately to workload demands and delivers consistent performance without unnecessary spend.

1. Optimize Autoscaling for Mixed Workloads

For clusters that handle both batch jobs and real-time data streams, configure separate scaling strategies for each.

Use faster scale-out policies for real-time workloads to allocate resources quickly, and apply more conservative scale-in policies for batch jobs to stay efficient during fixed processing windows.

2. Prioritize Efficient Storage Scaling with Autoscaling

You can use storage-based scaling policies that add core nodes, which provide HDFS storage, as HDFS utilization grows. Balancing compute and storage helps maintain performance during heavy processing tasks while avoiding unnecessary costs.

Track storage usage closely and refine your scaling thresholds to match workload patterns.
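EMR's automatic scaling adds and removes instances rather than resizing EBS volumes, so one way to express a storage-based policy is a rule on the `HDFSUtilization` metric attached to the core instance group. The sketch below pairs such a rule with a back-of-envelope sizing function; the 75% threshold, replication factor, and per-node capacity are illustrative assumptions, and `hdfs_scale_out_rule` and `core_nodes_needed` are hypothetical helpers.

```python
import math


def hdfs_scale_out_rule(threshold_pct: float = 75.0, add_nodes: int = 1,
                        cooldown_s: int = 600) -> dict:
    """Custom automatic scaling rule (EMR AutoScalingPolicy shape) that adds
    core nodes when HDFS utilization stays high for two 5-minute periods."""
    return {
        "Name": "ScaleOutOnHdfsUtilization",
        "Action": {
            "SimpleScalingPolicyConfiguration": {
                "AdjustmentType": "CHANGE_IN_CAPACITY",
                "ScalingAdjustment": add_nodes,
                "CoolDown": cooldown_s,
            }
        },
        "Trigger": {
            "CloudWatchAlarmDefinition": {
                "ComparisonOperator": "GREATER_THAN_OR_EQUAL",
                "EvaluationPeriods": 2,
                "MetricName": "HDFSUtilization",
                "Namespace": "AWS/ElasticMapReduce",
                "Period": 300,
                "Statistic": "AVERAGE",
                "Threshold": threshold_pct,
                "Unit": "PERCENT",
                "Dimensions": [{"Key": "JobFlowId", "Value": "${emr.clusterId}"}],
            }
        },
    }


def core_nodes_needed(data_tb: float, replication: int,
                      hdfs_tb_per_node: float, target_utilization: float) -> int:
    """Back-of-envelope core node count so replicated data stays below a
    target HDFS utilization (for example 0.75 for 75%)."""
    raw_tb = data_tb * replication
    return math.ceil(raw_tb / (hdfs_tb_per_node * target_utilization))


core_policy = {
    "Constraints": {"MinCapacity": 3, "MaxCapacity": 8},
    "Rules": [hdfs_scale_out_rule()],
}
# 10 TB stored with 3 replicas on nodes with 4 TB of HDFS each, kept under 75%.
print(core_nodes_needed(10, 3, 4, 0.75))  # → 10
```

The policy only scales out: removing core nodes means decommissioning HDFS DataNodes and re-replicating their blocks, so scale-in on core nodes is usually handled more cautiously.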

3. Enable Autoscaling for Machine Learning Jobs

For ML workloads, configure autoscaling to match compute resources with dataset size and model complexity.

Spot Instances can reduce training costs for non-critical stages as long as autoscaling is prepared to handle interruptions. This setup delivers the compute power needed for training without over-provisioning.

4. Set Up Autoscaling for High-Performance Computing (HPC) Workloads

For HPC applications, tune autoscaling to prioritize compute during high-demand periods and scale out quickly when workloads spike.

Once jobs finish or activity slows, scale down to avoid idle resources. This keeps HPC jobs running efficiently without waste.

5. Use EMR Autoscaling for Data Transfer Bottlenecks

During data-heavy operations such as shuffling, configure autoscaling to add nodes to prevent slowdowns and keep processing moving.

This is especially useful for large joins and aggregations where shuffle pressure can increase sharply. You can also create shuffle-specific node groups that scale based on data volume.

6. Use Autoscaling for Cost Management Across AWS Regions

For cost control across multiple regions, factor regional pricing and Spot availability into your scaling limits. Each EMR cluster runs and scales within a single region, so place flexible workloads on clusters in lower-cost regions and set tighter maximum limits on clusters in higher-priced regions.

This balances performance with spend and gives teams running variable workloads across multiple regions more flexibility.

7. Enable Auto-Tuning for Spark Jobs

Enable auto-tuning for Spark jobs so executors and memory are adjusted as the job progresses.

This prevents smaller jobs from consuming unnecessary resources and ensures larger jobs receive enough capacity to complete efficiently. Dynamic tuning improves both performance and cost-effectiveness.
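The section doesn't name a specific auto-tuning feature, so the sketch below assumes two widely available Spark mechanisms: dynamic allocation, which adds and releases executors as a job runs, and adaptive query execution, which re-plans shuffle partitions and joins from runtime statistics. Both are set through EMR's `spark-defaults` configuration classification. The helper `spark_autotune_config` and the executor limits are illustrative, and recent EMR releases may already enable some of these settings by default.

```python
import json


def spark_autotune_config(min_executors: int = 1, max_executors: int = 50) -> list:
    """EMR configuration classification that lets Spark grow and shrink its
    executor count during a job and re-plan shuffles at runtime."""
    if not 0 <= min_executors <= max_executors:
        raise ValueError("expected 0 <= min_executors <= max_executors")
    return [{
        "Classification": "spark-defaults",
        "Properties": {
            # Request executors as tasks queue up; release idle ones.
            "spark.dynamicAllocation.enabled": "true",
            "spark.dynamicAllocation.minExecutors": str(min_executors),
            "spark.dynamicAllocation.maxExecutors": str(max_executors),
            # Adaptive query execution adjusts shuffle partitions and join
            # strategies using statistics collected while the job runs.
            "spark.sql.adaptive.enabled": "true",
        },
    }]


config = spark_autotune_config(max_executors=40)
print(json.dumps(config, indent=2))
```

The printed JSON is the format EMR accepts for cluster configurations, for example in the `Configurations` argument of `run_job_flow`. Capping `maxExecutors` keeps a single large job from claiming the whole cluster.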

How Sedai Improves Autoscaling for Dynamic Workloads (Kubernetes, Lambda, ECS, and More)

Most autoscaling tools still depend on static thresholds, which don’t reflect how workloads actually behave. This often leads to over-provisioning, resource waste, or performance issues.

Sedai takes a very different approach with autonomous autoscaling powered by reinforcement learning. It continuously analyzes real-time workload behavior and adjusts resources across Kubernetes, Lambda, and ECS environments to match true demand.

What Sedai delivers for dynamic autoscaling:

- Pod-Level Rightsizing (CPU & Memory): Sedai automatically tunes pod resource requests and limits based on live usage. This prevents over-allocation, improves performance, and typically cuts cloud costs by more than 30%.

- Node Pool & Instance-Type Optimization: By studying cluster-wide trends, Sedai picks the most efficient instance types for your node pools. This reduces idle capacity, improves performance by up to 75%, and keeps spending under control.

- Autonomous Scaling Decisions: Instead of reacting to static triggers, Sedai scales pods and nodes based on workload behavior. This ensures the right resources are available at the right time and reduces failed customer interactions by up to 70%.

- Automatic Remediation: When Sedai detects resource pressure, instability, or performance drops, it fixes the issue before it impacts users. Teams see up to 6× higher productivity because they spend far less time on manual fixes.

- Full-Stack Cost & Performance Optimization: Sedai optimizes compute, storage, networking, and cloud commitments. This end-to-end tuning delivers up to 50% cost savings while strengthening overall infrastructure performance.

- Multi-Cloud & Multi-Cluster Support: Sedai works across GKE, EKS, AKS, and on-prem clusters, offering consistent optimization at scale. It already manages more than $3.5 million in cloud spend across multi-cloud setups.

- SLO-Driven Scaling: Scaling decisions align with your SLOs and SLIs, helping maintain performance and reliability even during sudden traffic changes.

Final Thoughts

Autoscaling in EMR is a powerful way to keep your cloud resources aligned with workload demand. However, it’s still essential to continuously monitor and adjust your configurations as your environment changes.

One area that often gets overlooked is predictive scaling: using past data and trends to identify upcoming demand spikes so your cluster can scale ahead of time.

By tapping into these insights, you lower the chances of under-provisioning during busy periods and avoid overspending during quieter ones.
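To make the idea concrete, here is a deliberately simple predictive sketch: a seasonal-naive forecast that averages demand at the same hour on previous days, adds headroom, and clamps the result to the cluster's limits. It is an illustrative assumption, not how Sedai or AWS forecast demand; the demand history, 20% headroom, node limits, and the helpers `predict_next_hour` and `target_nodes` are hypothetical.

```python
import math
from statistics import mean


def predict_next_hour(history, season=24, seasons=3):
    """Seasonal-naive forecast: average demand at the same hour on up to
    `seasons` previous days. `history` is hourly demand, oldest first."""
    t = len(history)
    samples = [history[t - season * k] for k in range(1, seasons + 1)
               if t - season * k >= 0]
    if not samples:
        raise ValueError("need at least one full season of history")
    return mean(samples)


def target_nodes(predicted_demand, headroom=1.2, min_nodes=2, max_nodes=50):
    """Turn forecast demand (in node-equivalents) into a node count with
    some headroom, clamped to the cluster's scaling limits."""
    wanted = math.ceil(predicted_demand * headroom)
    return max(min_nodes, min(max_nodes, wanted))


# Hypothetical two days of hourly demand with a daily spike at hour 0.
history = ([10] + [2] * 23) * 2
forecast = predict_next_hour(history)
print(forecast, target_nodes(forecast))
```

One way to act on the forecast is to raise the managed scaling minimum to the predicted node count shortly before the expected spike, then restore the normal minimum afterward.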

You can take this a step further with Sedai. It helps automate this process by analyzing how your workloads behave over time and forecasting future resource needs.

With Sedai, your autoscaling setup becomes more of a self-optimizing system, adjusting smoothly to workload changes and maintaining efficiency with minimal effort on your part.

Achieve complete visibility into your EMR environment and instantly reduce unnecessary costs by optimizing autoscaling and resource management with Sedai.

FAQs

Q1. How can I prevent autoscaling from over-allocating resources in my EMR cluster?

A1. Review and fine-tune your scaling policies regularly so they reflect actual workload patterns. Use granular thresholds and cooldown periods to avoid unnecessary or rapid scale-out actions.

Q2. Can autoscaling in EMR help with both batch and real-time workloads simultaneously?

A2. Yes, EMR autoscaling can be tuned to support both types by applying different scaling strategies. Real-time workloads benefit from aggressive scale-out policies, while batch jobs work well with more predictable, conservative policies.

Q3. What are the best practices for using Spot Instances with autoscaling in EMR?

A3. Use Spot Instances for flexible task nodes and configure fallback policies to On-Demand in case of interruptions. Make sure your scaling rules account for potential Spot unavailability so the cluster can adjust automatically.

Q4. How do I optimize autoscaling for high-velocity data streams in EMR?

A4. Set faster scale-out policies that respond quickly to spikes in data ingestion. Base scaling decisions on metrics like input rates or memory pressure to match real-time demand without overshooting.

Q5. Can I use autoscaling to improve the performance of Apache Hive queries on EMR?

A5. Yes, autoscaling ensures your cluster has enough compute during heavy or complex Hive queries. Scale up for intensive transformations and scale down afterward to control costs.