The 3 Primary Cloud Providers: AWS, Azure, Google Cloud Platform (GCP)

When a business evaluates cloud infrastructure, it is typically inundated with options. Each provider has its own set of virtual machine (VM) options, pricing models, and features, so deciding on one can be difficult.

AWS, Azure, and GCP dominate the cloud market, yet 87% of enterprises use multiple cloud providers, highlighting the rise of multi-cloud strategies. This comparison of AWS, GCP, and Azure VMs analyzes the key features of each provider's VM offering to help businesses evaluate cloud solutions on cost-effectiveness, performance, scale, and flexibility.

Choosing among these three platforms requires a clear understanding of their strengths and subtle differences. AWS is best known for its breadth of services and geographic reach, Azure shines in its integration with Microsoft’s ecosystem, and GCP is a leader in areas like machine learning and Kubernetes.

In this article, we look at the features and pricing of AWS EC2, Azure Virtual Machines, and Google Compute Engine side by side to help you choose a platform for your next cloud infrastructure deployment.

Virtual Machine Features Comparison: AWS EC2 vs Azure Virtual Machines vs Google Compute Engine

It is important to know what differentiates each provider when choosing between AWS EC2, Azure Virtual Machines, and Google Compute Engine (GCE). All three are effective options for cloud computing, but they differ greatly in flexibility, scalability, integrations, and the instance types they offer.

Below is a detailed comparison of the key features across these three major cloud platforms, highlighting their distinct advantages and use cases.

Key Takeaways From Comparison:

- AWS EC2 offers unmatched flexibility and scalability, with a wide variety of instance types and services tailored for different workloads. Its extensive integration with other AWS services like S3 and RDS makes it a robust option for teams already entrenched in the AWS ecosystem.

- Azure Virtual Machines excels in hybrid cloud capabilities, making it ideal for enterprises using Microsoft-based tools and solutions. With strong scalability through VM Scale Sets and integration with Microsoft’s suite of enterprise software, Azure is a top choice for businesses looking to integrate cloud infrastructure with on-premises resources.

- Google Compute Engine stands out for its ability to provide highly customizable virtual machine types, offering tailored configurations for specific workloads. Google’s strength in dynamic instance scaling and integration with cutting-edge tools like Kubernetes and AI services makes it a strong contender, particularly for businesses focused on innovation and cost-effective cloud solutions.

Each of these cloud providers offers distinct features that can greatly benefit different business needs. Whether your priority is flexibility, hybrid cloud capabilities, or innovative configurations, understanding these differences will help you make the most informed decision.

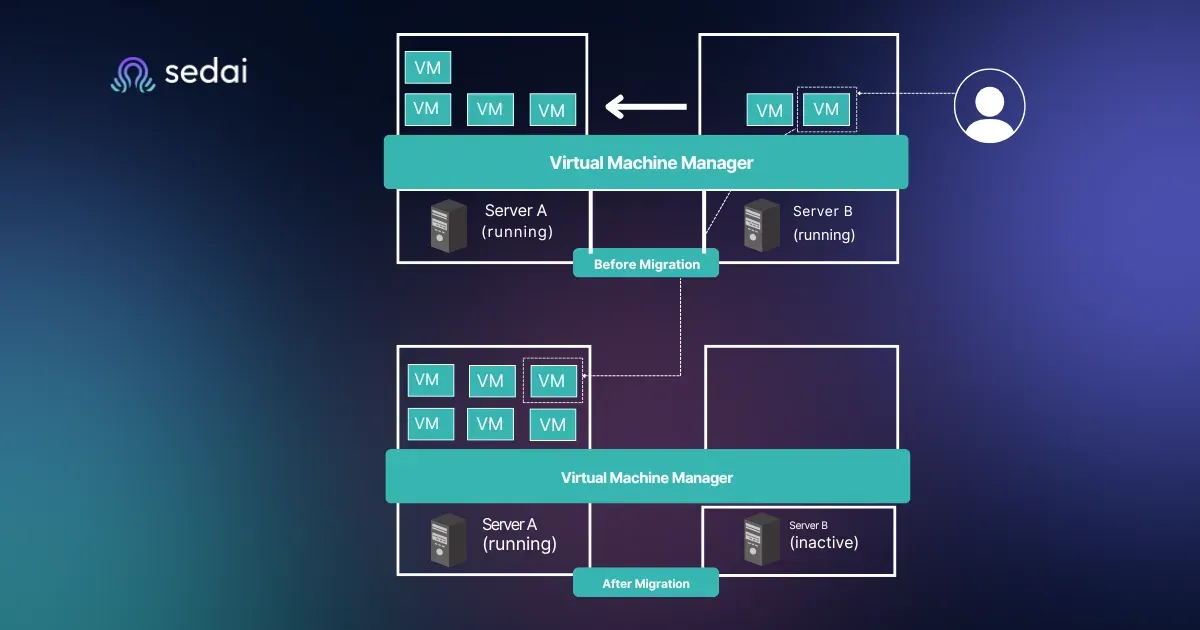

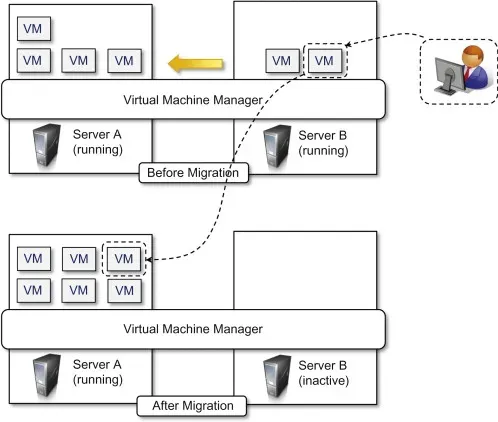

Virtual Machine Instance Access

Source: https://www.sciencedirect.com/topics/computer-science/virtual-machine-instance

Access control and security are critical components when managing cloud-based virtual machines. Each cloud provider offers unique methods for managing virtual machine (VM) access, ensuring that users can securely interact with their instances while maintaining compliance and performance standards. Below, we’ll compare how AWS EC2, Azure Virtual Machines, and Google Compute Engine handle VM access and security:

- AWS EC2 offers robust access control through IAM, allowing fine-grained security for every aspect of cloud resource management. EC2 Instance Connect simplifies SSH access management without needing to store SSH keys, which is beneficial for teams focused on minimizing administrative overhead.

- Azure Virtual Machines provides role-based access control (RBAC) built on Microsoft Entra ID (formerly Azure Active Directory), which can be connected to an on-premises Active Directory. This makes it especially valuable for enterprises with existing Microsoft-based environments, allowing for a unified access management approach across on-premises and cloud resources.

- Google Compute Engine offers flexibility with IAM roles and OS Login, giving users more control over SSH access to instances. GCP also integrates with Identity-Aware Proxy for more advanced identity and access management, which is particularly beneficial for dynamic, highly scalable workloads.

Ultimately, the best approach to VM access depends on your organization’s existing infrastructure and security needs. Whether you prioritize tight IAM control, hybrid integration with Active Directory, or flexible key management, each provider offers powerful tools to securely manage virtual machine access.

Automatic Instance Scaling: AWS EC2 vs Azure Virtual Machines vs Google Compute Engine

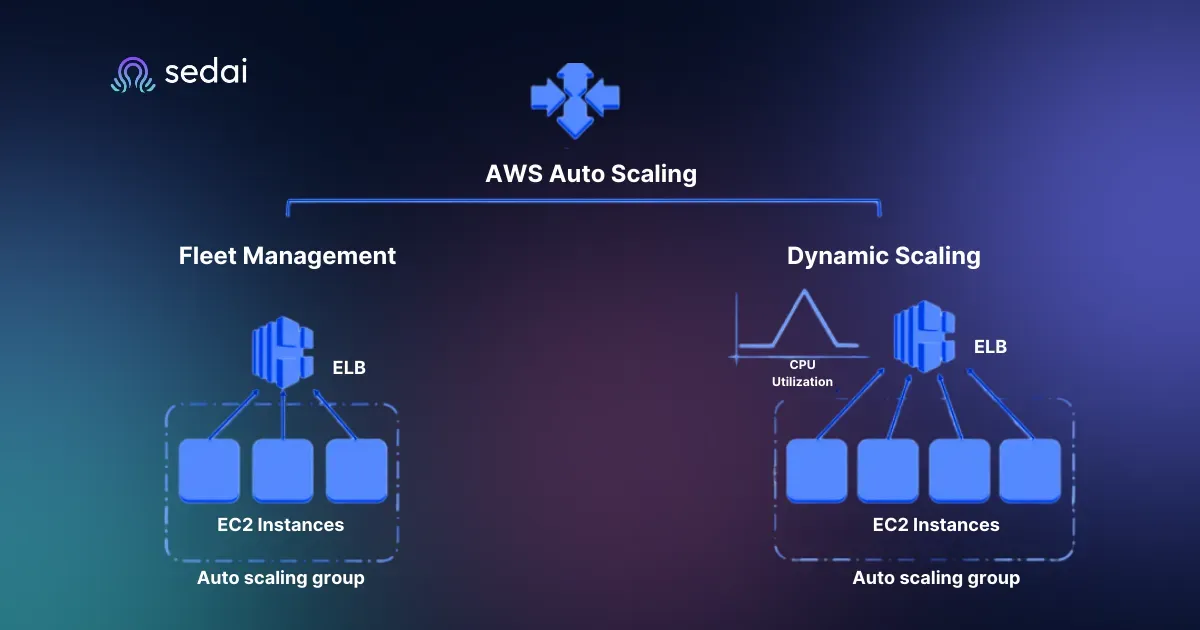

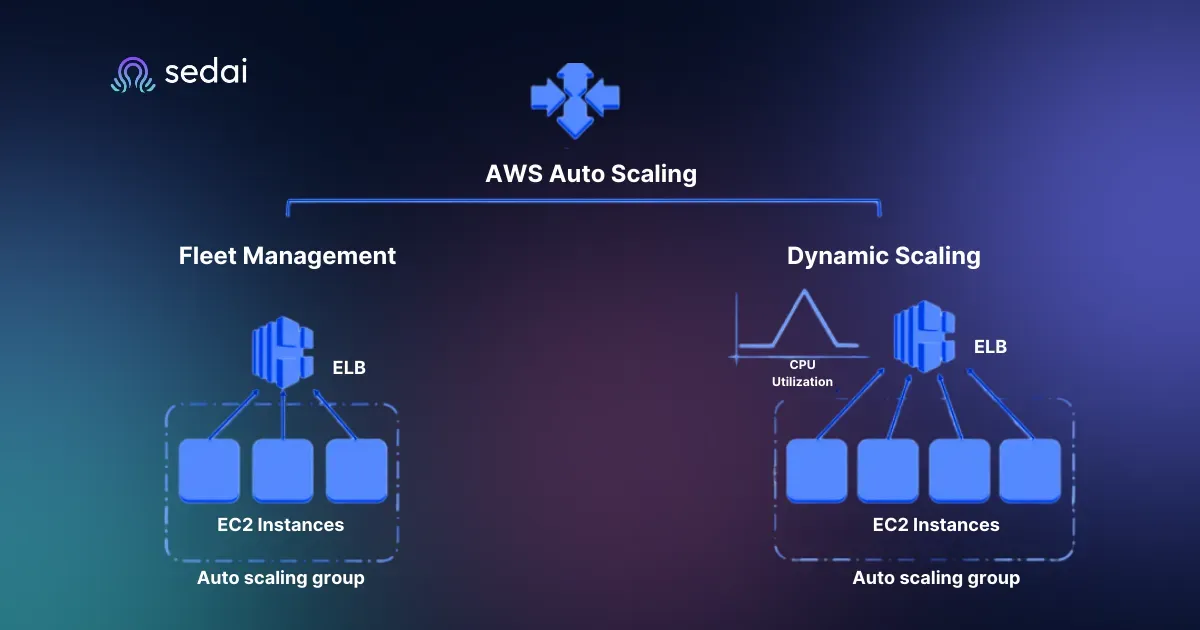

Source Link: Getting Started with Auto Scaling

Automatic instance scaling is a crucial feature for cloud users aiming to optimize performance while managing costs efficiently. All three cloud providers—AWS EC2, Azure Virtual Machines, and Google Compute Engine (GCE)—offer robust automatic scaling capabilities. However, each platform approaches it in slightly different ways, catering to various workload needs and business requirements.

AWS EC2: Auto Scaling Groups and Load Balancing Capabilities

AWS EC2 provides automatic scaling through Auto Scaling Groups (ASG), which allow users to automatically adjust the number of instances based on demand. This is particularly useful for businesses experiencing fluctuating traffic patterns, as it ensures that resources are efficiently allocated without the need for manual intervention.

- Auto Scaling Groups (ASG): ASGs allow users to define a minimum, maximum, and desired number of EC2 instances. AWS automatically scales out or in based on metrics such as CPU utilization, or memory usage when it is published as a custom CloudWatch metric. This flexibility allows businesses to match their resources to real-time demand.

- Load Balancing: AWS integrates Elastic Load Balancer (ELB) with Auto Scaling, enabling the distribution of incoming traffic across multiple instances. This ensures high availability and fault tolerance for applications, even during high traffic periods.

Example: A retail business using AWS EC2 can configure Auto Scaling for its application servers during peak shopping seasons like Black Friday. If traffic spikes due to increased demand, the Auto Scaling feature will add more instances automatically, and when the traffic returns to normal levels, it will scale down, reducing costs.
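
The relationship between the minimum, maximum, and desired counts can be sketched in a few lines of Python. This is a simplified approximation of target-tracking behaviour, not AWS's actual algorithm; the function name `desired_capacity` and all of the numbers are assumptions chosen for illustration.

```python
import math


def desired_capacity(current: int, metric: float, target: float,
                     min_size: int, max_size: int) -> int:
    """Estimate the instance count that brings the average metric back to target."""
    if current <= 0 or target <= 0:
        raise ValueError("current and target must be positive")
    # Total load is roughly current * metric; divide by the per-instance target.
    wanted = math.ceil(current * metric / target)
    # Never leave the bounds configured on the group.
    return max(min_size, min(max_size, wanted))


# Traffic spike: 4 instances averaging 90% CPU against a 50% target.
print(desired_capacity(4, 90.0, 50.0, min_size=2, max_size=20))  # 8
# Quiet period: 8 instances averaging 10% CPU; the minimum of 2 applies.
print(desired_capacity(8, 10.0, 50.0, min_size=2, max_size=20))  # 2
```

The clamp at the end is what keeps a runaway metric from scaling the group past its configured maximum.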

Azure Virtual Machines: Virtual Machine Scale Sets for Easy Scaling

Azure Virtual Machines takes a slightly different approach with Virtual Machine Scale Sets (VMSS). This service allows for the management and scaling of a group of identical VMs, making it easy to deploy, manage, and automatically scale applications. VMSS is integrated with Azure Load Balancer for distributing traffic across multiple VM instances, ensuring that your application can handle varying workloads efficiently.

- Automatic Scaling with VMSS: Azure’s VMSS supports automatic scaling based on metrics such as CPU usage, memory usage, or custom metrics. You can set up scaling policies to automatically increase or decrease the number of VMs in response to changes in application demand, ensuring consistent performance and minimizing costs.

- Integration with Azure Load Balancer: Like AWS, Azure provides built-in integration with the Azure Load Balancer, which ensures that incoming traffic is distributed evenly across the scaled VMs.

Example: For a financial services company utilizing Azure Virtual Machines, VMSS can automatically scale the infrastructure during busy periods like end-of-quarter financial closings. With Azure’s load balancing, the system can ensure that the service remains responsive and reliable, even under heavy traffic.
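
Rule-based scaling policies of this kind can be modelled as a list of threshold rules evaluated against the current average metric. The sketch below is illustrative only: `ScaleRule`, `apply_rules`, and the thresholds are hypothetical names and values, not part of any Azure SDK.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ScaleRule:
    threshold: float  # average CPU percent that triggers the rule
    direction: str    # "out" adds VMs, "in" removes them
    step: int         # how many VMs to add or remove


def apply_rules(count: int, avg_cpu: float, rules: List[ScaleRule],
                minimum: int, maximum: int) -> int:
    """Return the new VM count after applying the first matching rule."""
    for rule in rules:
        if rule.direction == "out" and avg_cpu > rule.threshold:
            return min(maximum, count + rule.step)
        if rule.direction == "in" and avg_cpu < rule.threshold:
            return max(minimum, count - rule.step)
    return count  # no rule matched, keep the current size


# Hypothetical policy: add 2 VMs above 75% CPU, remove 1 below 25%.
RULES = [ScaleRule(75.0, "out", 2), ScaleRule(25.0, "in", 1)]

print(apply_rules(3, 80.0, RULES, minimum=2, maximum=10))  # 5
print(apply_rules(3, 50.0, RULES, minimum=2, maximum=10))  # 3
print(apply_rules(2, 10.0, RULES, minimum=2, maximum=10))  # 2
```

Leaving a gap between the scale-out and scale-in thresholds, as in this policy, helps avoid adding and removing VMs in rapid succession.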

Google Compute Engine: Managed Instance Groups with Dynamic Scaling

Google Compute Engine (GCE) leverages Managed Instance Groups (MIGs) for automatic scaling. MIGs allow users to create a group of instances with identical configurations, and they are designed to scale dynamically based on demand. This makes GCE an excellent option for applications with unpredictable or highly variable workloads.

- Dynamic Scaling: GCE provides dynamic scaling where instances in a MIG are added or removed automatically based on utilization. This means that as your application experiences higher demand, Google Cloud will spin up more instances, and when demand decreases, it will scale back down to save costs.

- Integration with Google Cloud Load Balancing: GCE’s scaling integrates seamlessly with Google Cloud Load Balancing, ensuring that traffic is distributed efficiently across your instances, and providing global scalability with minimal latency.

Example: A startup running a video streaming service on Google Compute Engine can configure MIGs to automatically scale during periods of high demand (such as when a popular show is released) and scale back down during off-peak hours, minimizing costs and ensuring performance.
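
For highly variable workloads like this one, scaling in too eagerly can cause instances to be removed just before the next spike. One common damping technique is to scale out immediately but scale in only to the highest recommendation seen in a recent window. The sketch below illustrates that generic idea; the class `DampedAutoscaler` and its parameters are assumptions, and it is not a description of Google's implementation.

```python
import math
from collections import deque


class DampedAutoscaler:
    """Scale out at once; scale in only to the peak of recent recommendations."""

    def __init__(self, target_utilization: int, window: int):
        self.target = target_utilization    # percent, e.g. 60
        self.recent = deque(maxlen=window)  # last `window` raw recommendations

    def recommend(self, instances: int, avg_utilization: int) -> int:
        raw = max(1, math.ceil(instances * avg_utilization / self.target))
        self.recent.append(raw)
        return max(self.recent)


scaler = DampedAutoscaler(target_utilization=60, window=3)
print(scaler.recommend(4, 90))  # 6: demand rose, scale out immediately
print(scaler.recommend(6, 30))  # 6: demand fell, but the window still holds the peak
print(scaler.recommend(6, 30))  # 6
print(scaler.recommend(6, 30))  # 3: the peak has aged out of the window
```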

Sedai can be incredibly helpful when managing cloud resources and optimizing automatic instance scaling across AWS EC2, Azure Virtual Machines, and Google Compute Engine (GCE). As a cloud optimization platform, Sedai uses AI-driven automation to monitor cloud environments in real time and adjust instances dynamically based on usage patterns.

By integrating Sedai’s autonomous cloud optimization platform with AWS, GCP, and Azure VMs, organizations can enhance their instance scaling strategies by automatically identifying inefficiencies, optimizing resource allocation, and minimizing costs without manual intervention.

Whether managing compute power during peak loads or scaling down during off-peak hours, Sedai ensures that your cloud environment runs smoothly and cost-effectively, helping businesses save on cloud resources while maintaining optimal performance.

Temporary Instance Pricing and Usage

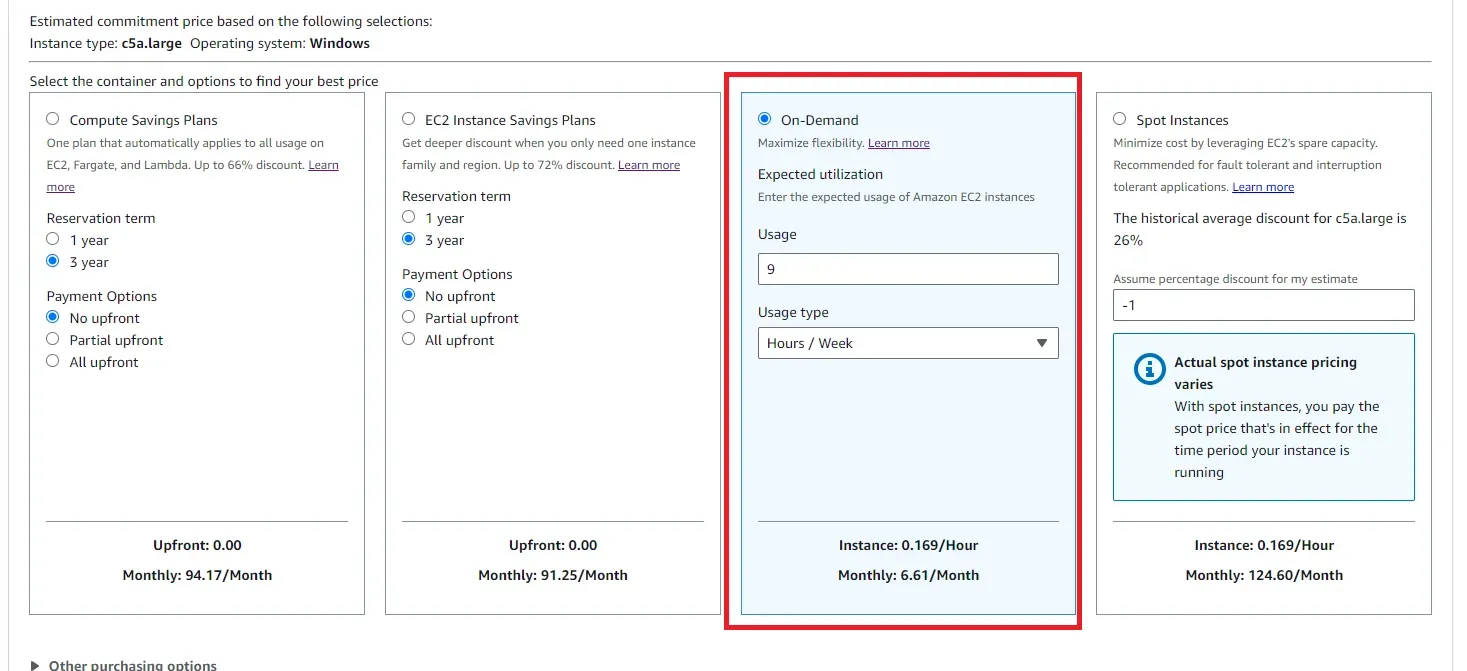

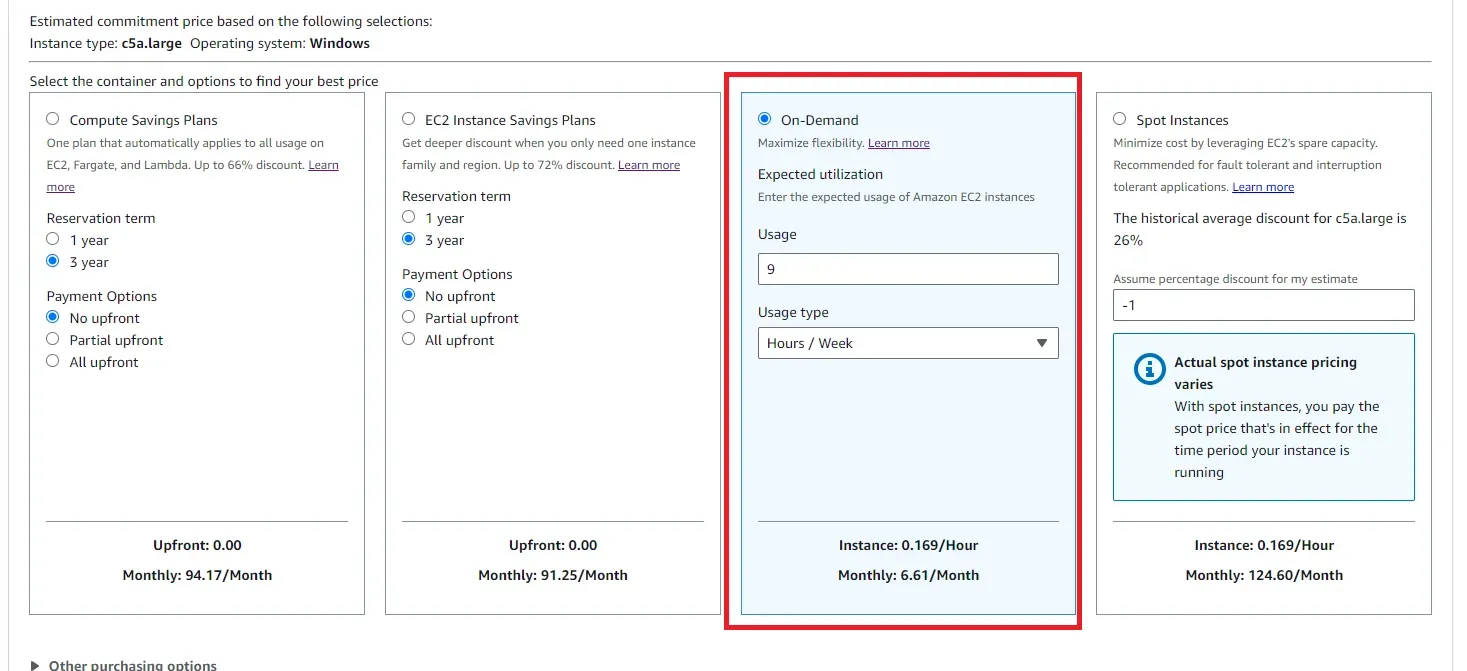

Source Link: Instance pricing calculation

When it comes to temporary cloud instances, all three major cloud providers—AWS, Azure, and Google Cloud—offer flexible pricing models designed to help businesses optimize costs for non-mission-critical workloads.

These instances are designed to be interruptible, meaning the provider can terminate them with little notice. That makes them a good fit for tasks that can handle sudden interruptions, such as batch processing, stateless web servers, or temporary data analysis tasks.

1. AWS EC2 Spot Instances

AWS EC2 Spot Instances are a great way to save on computing costs by utilizing excess capacity available in AWS's data centers. The pricing is market-driven and can offer discounts of up to 90% compared to the standard on-demand pricing. Spot Instances are particularly beneficial for tasks that are flexible and can tolerate interruptions, such as data processing, CI/CD workloads, and running background jobs. However, the biggest challenge with Spot Instances is that they can be terminated by AWS with just a 2-minute notice if the capacity is needed by other users.

2. Azure Low-Priority VMs

Azure offers Low-Priority VMs, a model Microsoft now delivers mainly as Azure Spot Virtual Machines. They are similar to AWS Spot Instances in that they are priced lower but can be evicted by Azure at any time. These instances are great for workloads that are not time-sensitive and can be interrupted without significant impact. Azure's Low-Priority VMs offer up to 80% savings compared to regular on-demand VMs, making them an attractive option for running large compute-intensive tasks like big data processing, rendering, or testing.

3. Google Preemptible VMs

Google Cloud's Preemptible VMs offer an affordable and flexible solution for temporary workloads. Like AWS Spot Instances and Azure Low-Priority VMs, Preemptible VMs are priced at a significant discount (up to 80%) compared to regular on-demand pricing.

These VMs can be preempted (terminated) with 30 seconds of notice and run for at most 24 hours, so they are ideal for stateless workloads such as batch jobs, video rendering, or scientific computations. Preemptible VMs in Google Cloud are especially useful when there is a need for cost-effective compute power without the expectation of long uptime.
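
Workloads on interruptible instances of any provider usually need to save progress so that a replacement VM can pick up where the reclaimed one stopped. The sketch below simulates that pattern with an in-memory dictionary; in practice the checkpoint would live in durable storage. The function `run_batch` and the `interrupted_at` parameter are hypothetical and exist only to simulate a preemption.

```python
def run_batch(items, checkpoint, interrupted_at=None):
    """Process items from the saved position; return False if interrupted."""
    results = checkpoint.setdefault("results", [])
    start = checkpoint.get("next", 0)
    for index in range(start, len(items)):
        if interrupted_at is not None and index == interrupted_at:
            return False  # simulated preemption; progress is already saved
        results.append(items[index] ** 2)  # placeholder unit of work
        checkpoint["next"] = index + 1     # record progress after each item
    return True


checkpoint = {}
items = [1, 2, 3, 4, 5]

first = run_batch(items, checkpoint, interrupted_at=3)  # VM reclaimed mid-run
second = run_batch(items, checkpoint)                   # replacement VM resumes

print(first, second)          # False True
print(checkpoint["results"])  # [1, 4, 9, 16, 25]
```

Because progress is recorded after every item, no work is repeated or lost when the second run starts.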

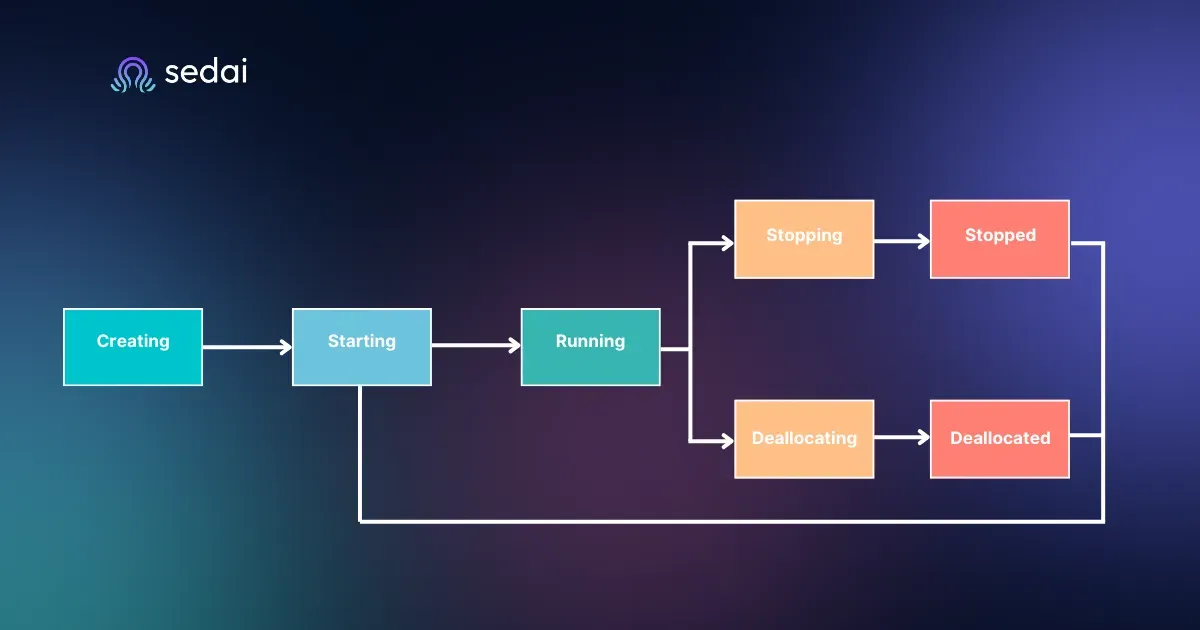

Billing Models for Virtual Machines

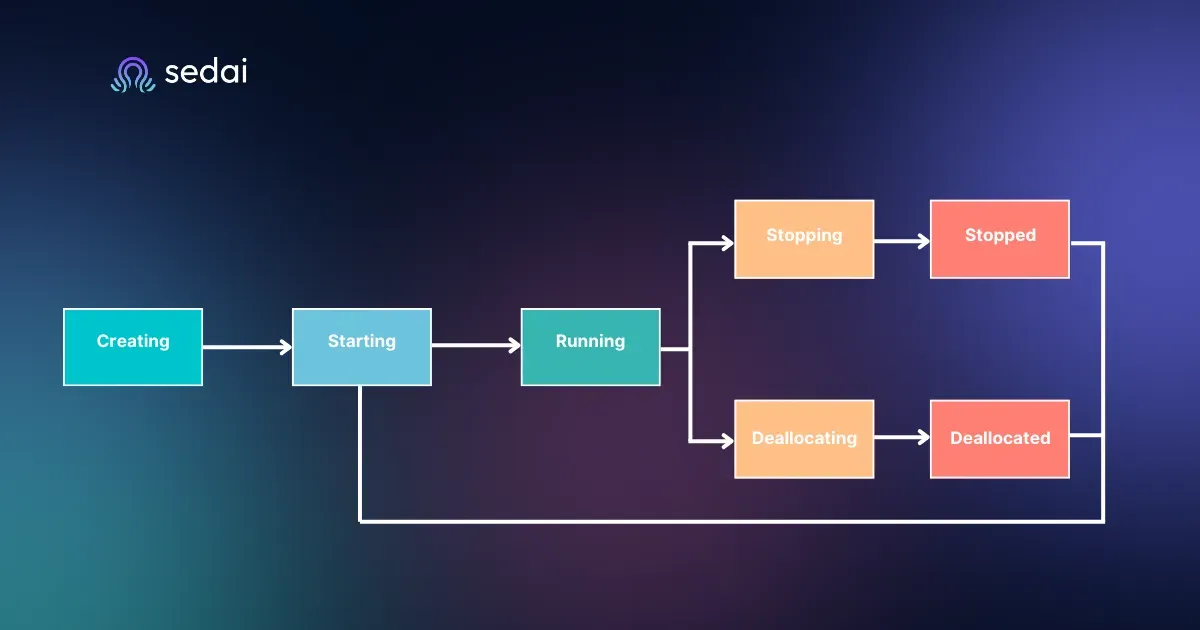

Source Link: States and billing status of Azure Virtual Machines

Each cloud provider offers different billing models designed to provide flexibility based on workload needs, which can help organizations optimize their cloud expenditures. Below is a detailed comparison of billing models across AWS, Azure, and Google Cloud for virtual machines.

1. AWS

- Pay-as-you-go: AWS offers flexible, pay-as-you-go pricing for EC2 instances, where you only pay for the resources you use, with no long-term commitments. This model is ideal for workloads with unpredictable usage patterns.

- Reserved Instances: AWS allows you to reserve instances for a 1-year or 3-year term, offering discounts of up to 72% compared to on-demand pricing. Reserved Instances are suitable for steady-state workloads that require consistent compute power.

- Savings Plans: AWS provides Savings Plans as a flexible option, where customers can commit to a certain amount of usage for 1 or 3 years. This model offers similar savings to Reserved Instances, but with more flexibility around instance types and regions.

2. Azure

- Consumption-based Billing: Azure's default billing model is consumption-based, where you pay for the virtual machines based on the resources you use (per-second billing). This model suits workloads with fluctuating demands.

- Reserved VM Instances: Azure offers Reserved VM Instances for 1-year or 3-year commitments, allowing significant savings of up to 72%. This model works well for predictable workloads that require consistent resources over time.

3. Google

- Pay-as-you-go: Google Cloud follows a pay-as-you-go pricing model where users only pay for the computing resources they use, billed on a per-second basis.

- Committed Use Discounts: Google offers Committed Use Discounts for customers who commit to using certain resources for 1 or 3 years. This model provides savings similar to AWS's Reserved Instances, typically up to 57% for compute resources.

- Sustained Use Discounts: Google also applies sustained use discounts automatically to eligible long-running workloads. This helps customers reduce costs further without making a commitment.
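
Whether a commitment pays off depends on how many hours the VM actually runs. A commitment is paid for every hour of the term, while pay-as-you-go charges only for hours used, so the break-even utilization is simply one minus the discount. The sketch below works through that arithmetic; the hourly rate of 0.10 is a made-up placeholder rather than a real price, and the discount is the "up to 72%" ceiling quoted above, which real commitments may not reach.

```python
HOURS_PER_MONTH = 730  # common approximation for monthly billing estimates


def monthly_on_demand(rate: float, utilization: float) -> float:
    """Pay-as-you-go cost: billed only for the fraction of hours used."""
    return rate * HOURS_PER_MONTH * utilization


def monthly_committed(rate: float, discount: float) -> float:
    """Committed cost: billed for every hour, at the discounted rate."""
    return rate * (1 - discount) * HOURS_PER_MONTH


def break_even_utilization(discount: float) -> float:
    """Utilization above which the commitment is cheaper than on-demand."""
    return 1 - discount


rate = 0.10      # hypothetical on-demand price per hour
discount = 0.72  # "up to" figure from the text, used here as an assumption

print(f"on-demand at 50% use: {monthly_on_demand(rate, 0.5):.2f}")
print(f"committed:            {monthly_committed(rate, discount):.2f}")
print(f"break-even use:       {break_even_utilization(discount):.0%}")
```

With a smaller discount the break-even point rises, which is why commitments suit steady-state workloads rather than bursty ones.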

Pricing Comparison Table: Temporary Instance Pricing and Billing Models

VM Billing Models Across Providers

Pricing Comparison Table: Virtual Machines

Comparing VM prices between cloud providers can be complex because of the variety of options, instance types, and regions. Below is a practical comparison based on two common scenarios to provide a clearer understanding of pricing differences across AWS, Azure, and Google Cloud.

Scenario 1: One On-Demand VM

This table compares the on-demand (pay-as-you-go) monthly cost of an average, general-purpose VM used as a web server, running Linux.

Scenario 2: Five Compute-Optimized Reserved VMs

This table compares the monthly cost for five compute-optimized instances running Linux, with a reservation term of 3 years.

As mentioned earlier, various factors like data transfer, licensing, and software can affect the total cost, and these tables present the base cost for these two example scenarios only.

Here’s What These Actually Cost

Let’s consider a common use case where we need to run a medium-sized application that requires 5 vCPUs and 10 GB of memory. This could be a web server, database, or any workload that needs a reasonable balance between compute power and memory. To understand the actual cost of such a setup, we will compare the prices of the major cloud providers (AWS, Azure, and Google Cloud) across their on-demand, spot, and reserved instance options.

Below is a detailed table showing the best instance type for each cloud provider, with similar specifications and the cost across different billing models (on-demand, spot, and reserved).

Scenario: 5 vCPU, 10 GB Memory - Instance Comparison

This scenario shows a typical application requiring 5 vCPUs and 10 GB of memory. Pricing varies significantly between cloud providers, especially when spot instances are used for cost optimization. AWS offers a significant discount through spot pricing, but the trade-off is that instances can be terminated with only a two-minute notice.
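
Because predefined instance sizes rarely match a requirement like 5 vCPUs and 10 GB exactly, choosing the "best" instance usually means picking the smallest shape that covers both dimensions. The sketch below shows that selection logic; the catalog entries are hypothetical shapes invented for illustration and do not correspond to any provider's real instance types or prices.

```python
from typing import List, NamedTuple, Optional


class Shape(NamedTuple):
    name: str
    vcpus: int
    memory_gb: int


def smallest_fit(catalog: List[Shape], vcpus: int, memory_gb: int) -> Optional[Shape]:
    """Return the smallest shape meeting both requirements, or None."""
    fits = [s for s in catalog if s.vcpus >= vcpus and s.memory_gb >= memory_gb]
    # Prefer fewer vCPUs first, then less memory.
    return min(fits, key=lambda s: (s.vcpus, s.memory_gb), default=None)


# Hypothetical catalog; names and sizes are placeholders.
CATALOG = [
    Shape("example-2x4", 2, 4),
    Shape("example-4x16", 4, 16),
    Shape("example-8x16", 8, 16),
    Shape("example-8x32", 8, 32),
]

choice = smallest_fit(CATALOG, vcpus=5, memory_gb=10)
print(choice.name)  # example-8x16
```

In this made-up catalog the workload lands on an 8 vCPU shape, so part of the price paid covers capacity the application does not need.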

Cost Optimization Approach

Cloud providers offer several cost optimization features, but they are often not enough for businesses that need to optimize costs at scale, especially when operating thousands of vCPUs or large workloads. While cloud providers offer tools like visibility into costs, auto-scaling, and right-sizing recommendations, the need for intelligent, autonomous cloud optimization at scale is paramount for achieving long-term savings.

Below is a comparison of the optimization features available across AWS vs Azure vs Google Cloud, highlighting their capabilities in cost visibility, right-sizing, autoscaling, and autonomous operations.

While all three cloud providers offer basic tools for cost visibility and some level of autoscaling, there are significant gaps when it comes to intelligent, autonomous cloud optimization. For large-scale operations, such as those running thousands of vCPUs or handling large workloads, manually adjusting autoscaling configurations or selecting the appropriate instance type can become cumbersome and inefficient.
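
To make the right-sizing idea concrete, the sketch below derives a vCPU recommendation from observed CPU samples, sizing for the 95th percentile rather than the average so that short peaks are still covered. It is a deliberately simple heuristic under stated assumptions: the function names, the 60% target, and the sample data are all hypothetical, and it does not represent how any provider's tool or Sedai computes recommendations.

```python
import math


def percentile(samples, pct: int):
    """Nearest-rank percentile using integer arithmetic (pct is a whole number)."""
    ordered = sorted(samples)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # ceil(pct * n / 100)
    return ordered[rank - 1]


def recommend_vcpus(current_vcpus: int, cpu_samples, target_pct: int = 60) -> int:
    """Suggest a vCPU count that puts p95 CPU usage near the target percent."""
    p95 = percentile(cpu_samples, 95)
    needed = current_vcpus * p95 / target_pct
    return max(1, math.ceil(needed))


# Hypothetical utilization samples (percent) for an 8 vCPU VM.
samples = [10] * 18 + [15, 40]

print(percentile(samples, 95))      # 15
print(recommend_vcpus(8, samples))  # 2
```

Doing this by hand for a few VMs is manageable; repeating it continuously across thousands of vCPUs is where manual approaches become cumbersome.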

This is where Sedai can add immense value. Sedai’s autonomous cloud optimization platform goes beyond what traditional cloud providers offer. It continuously adjusts autoscale parameters, recommends instance types based on performance and cost, and even executes those changes automatically to ensure your cloud resources are always optimized for both cost and performance.

For early-stage companies, basic automation and visibility into costs may be enough, but as your cloud infrastructure grows, having Sedai’s intelligent optimization becomes essential for maintaining control over costs and resource efficiency at scale.

If you operate at scale and manage numerous VMs, relying solely on cloud providers' native optimization tools will limit your ability to fully optimize costs. Sedai’s autonomous platform takes cloud cost optimization to the next level, enabling you to scale efficiently while reducing unnecessary costs.

To know more: Strategies to Improve Cloud Efficiency and Optimize Resource Allocation

Conclusion

As cloud usage rises, selecting the appropriate virtual machine service becomes critical for performance and cost-effectiveness. Whether it is AWS EC2, Azure Virtual Machines, or Google Compute Engine, each has its own strengths and weaknesses, which you should weigh against your organisation, workloads, and budget.

While many cloud providers offer basic visibility, autoscaling, and right-sizing recommendations, these tools are not adequate for the growing complexity of managing the cloud at scale. This is where Sedai’s autonomous cloud optimization platform comes in. It constantly fine-tunes instance types and autoscaling parameters and keeps businesses running on the most cost-effective configurations possible.

Working with Sedai can help businesses stay ahead in their cloud cost optimization workflow, providing a seamless and efficient solution for large-scale environments. Start your journey with Sedai today to unlock smarter, real-time cloud optimization.

FAQs

1. What are the key differences between AWS, Azure, and Google Cloud VM offerings?

AWS, Azure, and Google Cloud offer similar functionalities but differ in instance types, pricing models, and regional availability. AWS provides a broad range of instance types and services, Azure is known for its hybrid cloud solutions, and Google Cloud specializes in machine learning and Kubernetes-based services.

2. What are the pricing models for cloud VMs?

Cloud VM pricing models typically include pay-as-you-go (on-demand), reserved instances, and spot instances. Each provider offers variations on these models with additional discounts for long-term commitments or unused capacity.

3. Which cloud provider is the cheapest for on-demand VMs?

Google Cloud often provides the most competitive prices for on-demand instances, followed by AWS and Azure. Pricing can vary by region and instance type, so it's important to compare before making a decision.

4. How do AWS Spot Instances help with cost savings?

AWS Spot Instances let you run workloads on unused EC2 capacity at significant discounts (up to 90%) compared to on-demand pricing. You pay the current Spot price rather than placing bids. However, these instances can be interrupted with only a two-minute notice, so they are best for workloads that are fault-tolerant.

5. Can I optimize cloud costs without changing my provider?

Yes, by using the native tools available from each cloud provider for cost visibility, right-sizing, and autoscaling, you can optimize cloud costs. However, the optimization features vary and may not always be sufficient at scale.

6. What is the role of autoscaling in cloud cost optimization?

Autoscaling automatically adjusts the number of virtual machines based on traffic or load, ensuring that you're only paying for the resources you need. This helps avoid over-provisioning and reduces overall cloud expenses.

7. How can Sedai help with cloud cost optimization?

Sedai goes beyond traditional cloud providers' offerings by autonomously adjusting instance types and scaling configurations based on real-time data, ensuring continuous cost optimization, especially for large-scale workloads.

8. What are the benefits of using Reserved Instances for cost optimization?

Reserved Instances offer significant discounts (up to 72%) compared to on-demand pricing in exchange for committing to a 1-year or 3-year term. They are ideal for predictable workloads with consistent resource needs.

9. How does cloud cost management work with multiple regions?

Pricing for VMs and services can vary by region due to factors like local demand and availability. Using multiple regions can help balance load, reduce latency, and optimize costs, but careful planning is necessary to manage costs effectively.

10. Why should I consider using Sedai for cloud optimization instead of relying on native tools?

Sedai offers an advanced, autonomous approach to cloud optimization, continuously adjusting resources based on real-time data. Unlike native cloud provider tools, Sedai's platform optimizes performance and cost at scale, making it ideal for businesses managing large workloads.