Unlocking Efficiency: Workload Rightsizing in Amazon EKS

In today’s cloud-centric world, optimizing resource usage is essential for cost control and performance. Amazon EKS (Elastic Kubernetes Service) provides a powerful environment for running Kubernetes at scale, but without proper resource allocation, clusters can waste resources and drive up costs. Workload rightsizing in Amazon EKS helps businesses align Kubernetes resource allocations, such as CPU and memory, with actual application demands, maximizing efficiency and minimizing costs. With the right approach, organizations can optimize their EKS environments for peak performance, adapt to changing workload demands, and contribute to a more sustainable cloud strategy.

Definition and Importance of Rightsizing in Kubernetes

What is Workload Rightsizing in Amazon EKS?

Workload rightsizing in Kubernetes involves adjusting resource allocations, such as CPU, memory, and storage, to meet the actual needs of applications. In Amazon EKS, this adjustment process is crucial for aligning cloud resources with workload demands to avoid unnecessary costs and inefficiencies. Rightsizing not only ensures smoother operations but also optimizes cluster performance by enabling better use of available resources.

How Rightsizing Optimizes Resource Utilization in Amazon EKS

Rightsizing in Amazon EKS mitigates common issues of over-provisioning, which leads to wasted resources, and under-provisioning, which can cause performance bottlenecks. By accurately matching resources to workload requirements, EKS clusters can efficiently handle fluctuating workloads. Leveraging rightsizing ensures applications maintain high performance even as demand scales, ultimately leading to a more balanced and efficient environment.

Sedai’s platform enhances rightsizing by offering autonomous, real-time adjustments to Kubernetes resources in Amazon EKS. Through machine learning and predictive analytics, Sedai continuously assesses workload needs and allocates resources dynamically, which helps businesses avoid manual configuration errors and achieve consistent performance improvements.

Benefits of Rightsizing for Cost and Performance Optimization

Dual Benefits of Cost Savings and Performance Improvements

The strategic benefits of rightsizing in Amazon EKS are twofold:

- Cost Savings: Rightsizing reduces cloud spending by eliminating the overhead of over-provisioned resources. Studies show that rightsizing can reduce cloud costs by up to 30%, enabling businesses to reinvest savings into growth and innovation.

- Enhanced Application Performance: With properly sized resources, workloads achieve greater responsiveness, reduced latency, and fewer bottlenecks. This improved performance elevates user experience, which can lead to higher customer satisfaction and loyalty.

Leveraging Autonomous Optimization Tools for Dynamic Workload Management

Modern autonomous cloud optimization tools, like Sedai, streamline the rightsizing process by automating real-time resource adjustments. These platforms integrate with EKS to continually evaluate workload demands and adjust resources proactively, providing organizations with reliable performance without manual intervention. This dynamic management empowers teams to focus on scaling applications rather than tuning resource parameters, leading to improved efficiency across operations.

Sedai's autonomous platform excels in enabling fully autonomous, continuous workload management in Amazon EKS. With Sedai, organizations can achieve both cost control and optimal performance, as it intelligently rightsizes based on historical data and real-time workload metrics.

Configuring Resource Requests and Limits in Amazon EKS

Setting CPU and Memory Requests and Limits

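Configuring resource requests and limits is a critical step in optimizing workload performance in Amazon EKS. A request is the amount of CPU or memory the scheduler reserves for a container when placing its pod on a node; a limit is the maximum the container is allowed to consume.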
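As a rough illustration of how requests and limits fit together, the sketch below builds the `resources` section of a container spec and checks that each request does not exceed its limit. The helper names, the container name, the image, and the values are all hypothetical, and the quantity parsing covers only the common suffixes.

```python
import json

# Multipliers for a subset of the memory suffixes Kubernetes accepts.
_MEMORY_SUFFIXES = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
    "k": 10**3, "M": 10**6, "G": 10**9,
}


def cpu_to_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as '250m' or '0.5' to millicores."""
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '256Mi' or '1G' to bytes."""
    for suffix, multiplier in _MEMORY_SUFFIXES.items():
        if quantity.endswith(suffix):
            return int(float(quantity[: -len(suffix)]) * multiplier)
    return int(quantity)  # plain byte count


def build_resources(cpu_request: str, cpu_limit: str,
                    memory_request: str, memory_limit: str) -> dict:
    """Return a container 'resources' section, rejecting request > limit."""
    if cpu_to_millicores(cpu_request) > cpu_to_millicores(cpu_limit):
        raise ValueError("CPU request exceeds CPU limit")
    if memory_to_bytes(memory_request) > memory_to_bytes(memory_limit):
        raise ValueError("memory request exceeds memory limit")
    return {
        "requests": {"cpu": cpu_request, "memory": memory_request},
        "limits": {"cpu": cpu_limit, "memory": memory_limit},
    }


# Hypothetical container; the name, image, and values are illustrative only.
container = {
    "name": "web",
    "image": "example.com/web:1.0",
    "resources": build_resources("250m", "500m", "256Mi", "512Mi"),
}
print(json.dumps(container, indent=2))
```

The printed structure mirrors what would appear under `spec.containers[]` in a pod or deployment manifest. The right values depend on the usage you observe for each workload.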

Common Pitfalls to Avoid:

- Pod Evictions: Pods are evicted when a node runs short of resources such as memory. Properly defined requests mitigate this, because pods whose usage stays within their requests are less likely to be evicted, helping critical workloads remain operational.

- Resource Throttling: When CPU limits are set too low, containers are throttled and slow down, impacting performance. Setting limits that reflect observed peak usage helps maintain performance under varying conditions.

- Wasted Capacity: Misconfigured requests and limits can lead to unused resources, inflating costs. Recognizing and rectifying these misconfigurations is essential for maximizing cloud investments.

Sedai’s platform continuously monitors configurations, adjusting requests and limits dynamically, ensuring each pod operates within optimal parameters. By leveraging real-time data, Sedai optimizes resource management, thus preventing potential pitfalls in resource allocation.

Best Practices to Prevent Resource Waste

To maximize efficiency in resource allocation, adhere to these best practices:

- Match CPU and Memory with Actual Workload Needs: Analyze historical data and performance metrics to set realistic resource limits. This analysis helps in understanding peak usage times and setting accurate thresholds accordingly.

- Conduct Periodic Reviews: Regular assessments of resource usage and workload demands help maintain alignment with actual needs. Scheduling these reviews can help ensure that resource allocations evolve with changing business requirements.

- Leverage Sedai for Dynamic Rightsizing: By integrating Sedai’s autonomous platform, organizations can utilize AI-driven insights for real-time adjustments, ensuring optimal performance while minimizing waste. Sedai’s machine learning algorithms analyze patterns and trends, making rightsizing more precise.
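One common way to turn historical metrics into a request value is to take a high percentile of observed usage and add some headroom. The sketch below assumes a 95th percentile and 15% headroom; both numbers, the function names, and the sample data are illustrative assumptions, not a method prescribed by any of the tools mentioned here.

```python
import math


def percentile(samples: list[int], pct: int) -> int:
    """Nearest-rank percentile of a non-empty list of usage samples."""
    if not samples:
        raise ValueError("no samples provided")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def recommend_request(samples: list[int], pct: int = 95,
                      headroom_pct: int = 15) -> int:
    """Suggest a request: the chosen percentile plus a headroom margin."""
    base = percentile(samples, pct)
    return math.ceil(base * (100 + headroom_pct) / 100)


# Hypothetical CPU usage samples in millicores, e.g. exported from monitoring.
cpu_samples = [120, 135, 150, 160, 180, 210, 240, 260, 300, 420]
print(f"median usage: {percentile(cpu_samples, 50)}m")
print(f"suggested CPU request: {recommend_request(cpu_samples)}m")
```

A higher percentile or larger headroom trades cost for safety. Latency-sensitive services usually justify a more conservative setting than batch jobs.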

As an AWS partner, Sedai provides tailored solutions that integrate seamlessly with AWS services, including Amazon EKS. This partnership ensures organizations have access to best practices and advanced tools for optimizing their cloud environments. Explore Sedai's partnership with AWS.

Tools and Solutions for Workload Rightsizing in Amazon EKS

Popular Rightsizing Tools for Amazon EKS

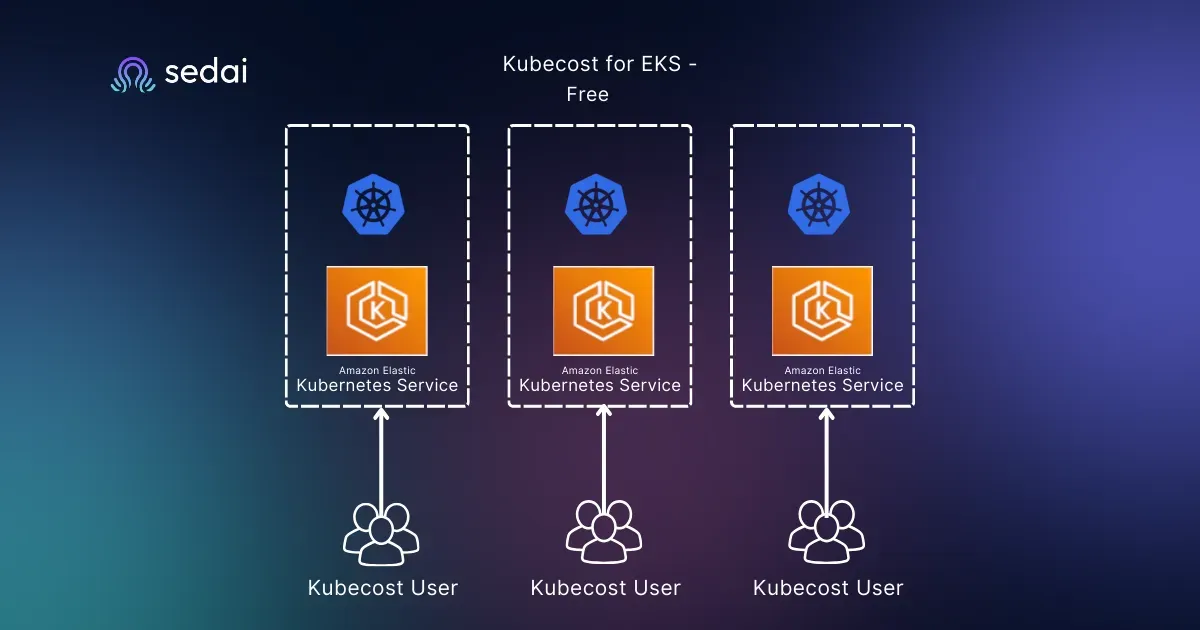

Several tools assist in rightsizing EKS workloads effectively. Here’s an overview of popular options:

Sedai, in contrast, offers an integrated solution that combines monitoring and rightsizing into one cohesive platform. Sedai’s AI-driven capabilities continuously analyze workload requirements, autonomously applying adjustments in real time, which minimizes the need for manual configurations and human error. This level of automation enhances overall operational efficiency.

Manual vs Automated Rightsizing in EKS

Manual rightsizing requires ongoing monitoring and is prone to inaccuracies due to human error. Sedai’s autonomous cloud optimization platform eliminates these challenges, providing a fully dynamic rightsizing solution. This approach ensures that EKS clusters are constantly tuned to meet live workload demands, significantly reducing operational costs and enhancing efficiency.

- Efficiency Gains: Organizations that adopt autonomous rightsizing see improvements in operational efficiency, with a reduction in configuration errors by up to 50%. This improvement not only leads to cost savings but also allows teams to focus on innovation and development.

Using Autoscalers for Optimal Resource Utilization

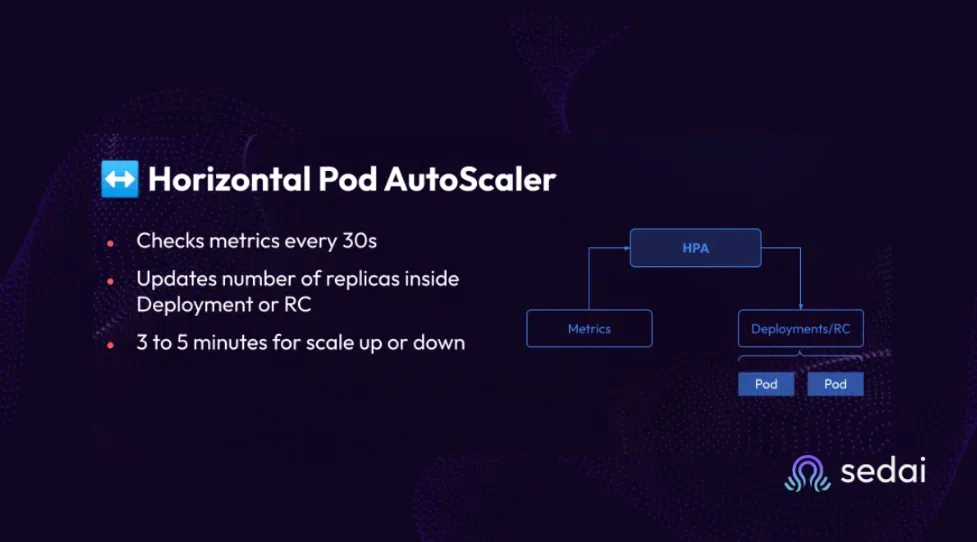

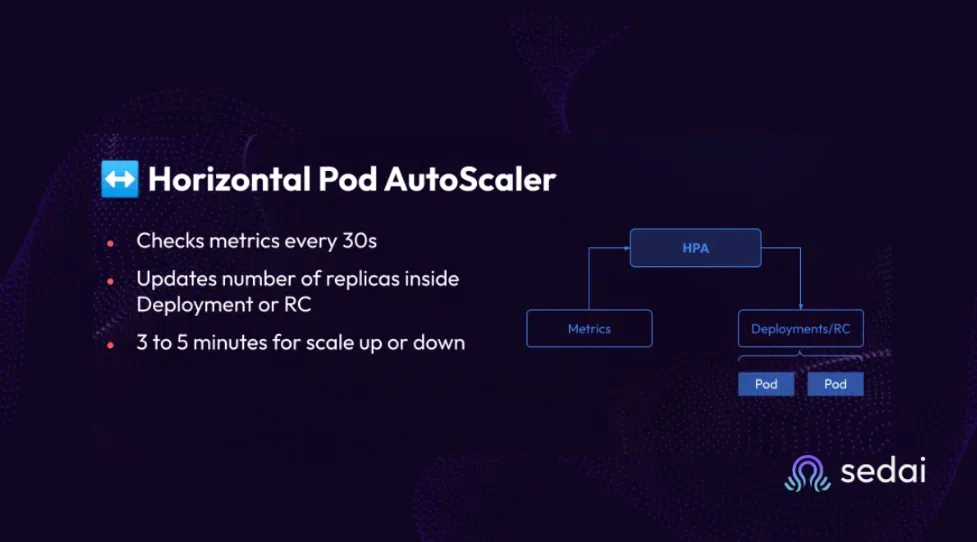

Horizontal Pod Autoscaler (HPA)

HPA is a Kubernetes component that adjusts the number of pod replicas based on CPU utilization or other select metrics. This functionality enables applications to scale seamlessly according to load, thus maintaining performance without unnecessary over-provisioning of resources.

- Use Cases: HPA is particularly effective in scenarios where applications experience variable workloads, such as during peak traffic times or during data processing tasks. Its ability to dynamically adjust helps ensure that applications remain responsive under different load conditions.
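The Kubernetes documentation describes HPA's core calculation as the current replica count multiplied by the ratio of the current metric value to the target value, rounded up, with no change when that ratio is within a tolerance (0.1 by default). The sketch below reproduces that arithmetic. The function name and the replica bounds are illustrative, and the real controller also handles details such as missing metrics and stabilization windows that are left out here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, tolerance: float = 0.1,
                     min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Approximate the HPA replica calculation for a single metric."""
    ratio = current_metric / target_metric
    # Within tolerance of the target: leave the replica count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured replica bounds.
    return max(min_replicas, min(max_replicas, desired))


print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
print(desired_replicas(4, current_metric=63, target_metric=60))  # 4
print(desired_replicas(4, current_metric=20, target_metric=60))  # 2
```

Because utilization is measured relative to each pod's CPU request, the accuracy of those requests directly affects how well HPA scales.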

Integrating Sedai with HPA allows for enhanced performance management, where Sedai automatically optimizes pod resource requests based on real-time analytics, ensuring that applications scale smoothly while maintaining resource efficiency.

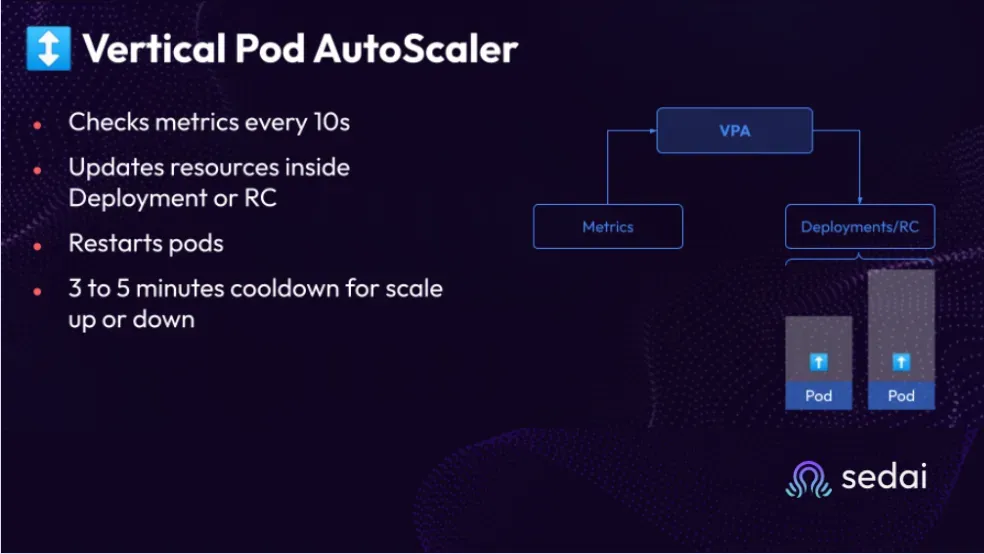

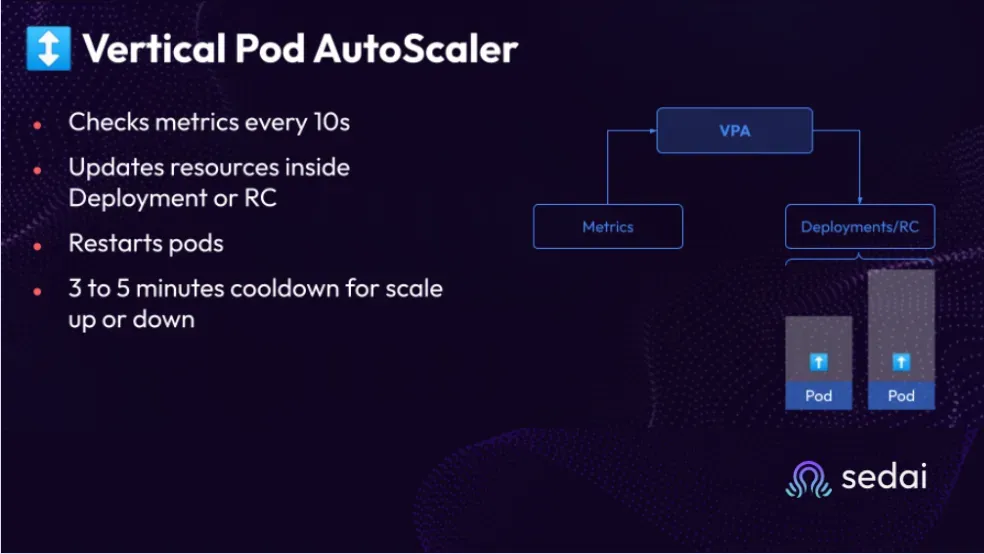

Vertical Pod Autoscaler (VPA)

VPA modifies the resource requests for pods based on historical usage and trends. It continuously monitors workloads and suggests adjustments to ensure optimal resource allocation over time.

- Advantages: VPA is essential for applications that experience growth or fluctuating demands, as it helps avoid resource exhaustion while allowing for gradual resource adjustments. This flexibility is crucial for maintaining consistent performance.
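VPA is an add-on that has to be installed in the cluster, and a cautious way to start is in recommendation-only mode. As a minimal sketch, the snippet below builds a `VerticalPodAutoscaler` manifest with `updateMode` set to `Off`, so that VPA publishes recommendations without restarting pods. The object name, namespace, and target deployment are hypothetical.

```python
import json

# Long-standing VPA update modes; newer releases may accept others.
_UPDATE_MODES = {"Off", "Initial", "Recreate", "Auto"}


def build_vpa(name: str, namespace: str, deployment: str,
              update_mode: str = "Off") -> dict:
    """Build a VerticalPodAutoscaler manifest targeting a Deployment."""
    if update_mode not in _UPDATE_MODES:
        raise ValueError(f"unsupported update mode: {update_mode}")
    return {
        "apiVersion": "autoscaling.k8s.io/v1",
        "kind": "VerticalPodAutoscaler",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "targetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            # "Off" means recommend only; pods are never evicted by VPA.
            "updatePolicy": {"updateMode": update_mode},
        },
    }


# Hypothetical names; the JSON output can be saved and applied with kubectl.
print(json.dumps(build_vpa("web-vpa", "default", "web"), indent=2))
```

Once the recommendations look sensible, teams can apply them manually or switch to a mode that lets VPA update pods itself.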

When paired with Sedai’s platform, VPA benefits from enhanced predictive analytics, providing proactive adjustments that lead to up to a 25% increase in resource utilization efficiency. This ensures that workloads are not only responsive but also cost-effective.

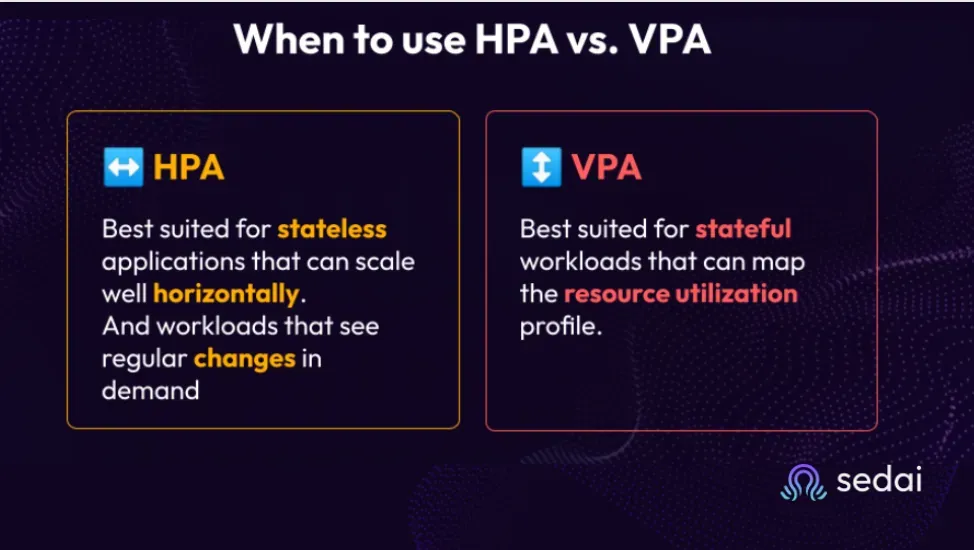

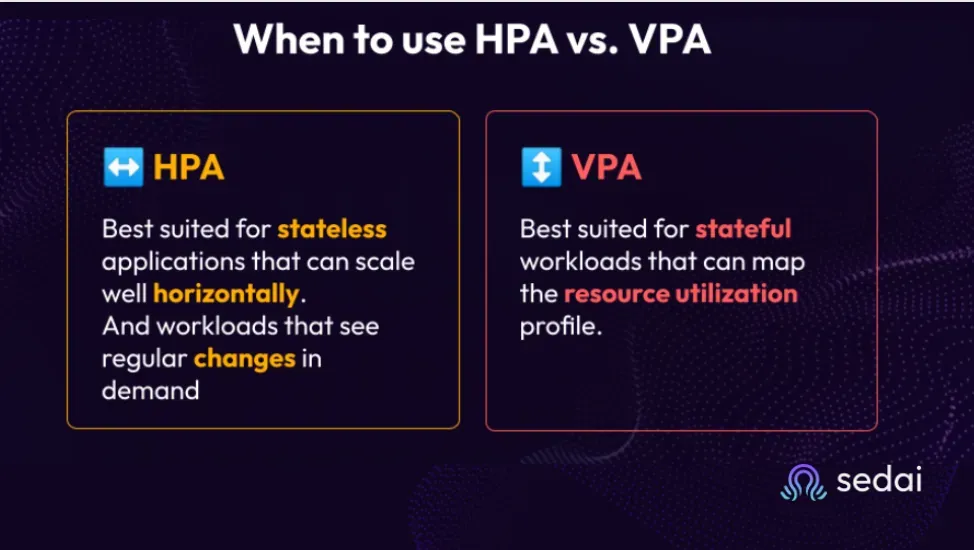

When to Use HPA vs. VPA in EKS

Organizations can gain the most value by using both HPA and VPA in tandem, provided the two do not act on the same metric: if HPA scales on CPU utilization while VPA also changes CPU requests, the autoscalers can work against each other. HPA excels in adjusting replica counts dynamically, while VPA focuses on resource adjustments based on usage. Sedai’s platform facilitates the integration of both autoscalers, providing a comprehensive solution that maximizes resource utilization while minimizing costs.

Strategic Optimization Practices in Amazon EKS

Aligning Requests with Actual Utilization

Aligning resource requests with actual utilization is crucial for maintaining optimal performance in EKS clusters.

- Utilization Tracking: By using observability tools such as Prometheus and Grafana, organizations can gain insights into resource usage patterns, enabling them to make informed adjustments. These insights are essential for understanding how workloads behave over time and identifying potential areas for optimization.
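Once usage data is available, a simple first pass is to compare each workload's observed usage with its request. The sketch below classifies workloads by that ratio. The 40% and 90% thresholds, the workload names, and the figures are assumptions chosen for illustration, not recommended values.

```python
def classify(usage: float, request: float,
             low: float = 0.4, high: float = 0.9) -> str:
    """Label a workload by how much of its request it actually uses."""
    if request <= 0:
        raise ValueError("request must be positive")
    ratio = usage / request
    if ratio < low:
        return "over-provisioned"
    if ratio > high:
        return "under-provisioned"
    return "right-sized"


# Hypothetical (average CPU usage, CPU request) pairs in millicores.
workloads = {
    "checkout": (100, 1000),
    "search": (950, 1000),
    "catalog": (600, 1000),
}
for name, (usage, request) in workloads.items():
    print(f"{name}: {usage / request:.0%} of request -> {classify(usage, request)}")
```

In practice the usage figures would come from a metrics backend such as Prometheus, and peak usage deserves as much attention as the average.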

Sedai enhances this process by combining monitoring data with proactive resource management, allowing for real-time adjustments that ensure EKS workloads are continuously aligned with business demands and resource capabilities.

Leveraging Observability for Cost Efficiency

Effective observability can significantly impact cost management. Monitoring tools help track key performance metrics, allowing teams to identify areas for optimization.

Companies leveraging observability in their cloud environments have reported up to 60% reductions in operational costs due to improved visibility into resource usage and waste. This highlights the critical role observability plays in driving efficiency.

Incorporating Sedai’s AI-driven insights allows businesses to gain deeper visibility into their workloads, leading to more precise rightsizing and cost management strategies. By continuously monitoring and analyzing usage patterns, Sedai ensures organizations can adapt quickly to changing conditions without incurring unnecessary costs.

For organizations looking to enhance their resource optimization strategies, Sedai's AI-powered rightsizing solutions for AWS EC2 VMs offer valuable insights into automated resource management, which can be similarly beneficial in the context of Amazon EKS. Learn more about AI-powered rightsizing for AWS EC2 VMs.

Implementing Rightsizing Recommendations

Collecting and Applying Rightsizing Recommendations

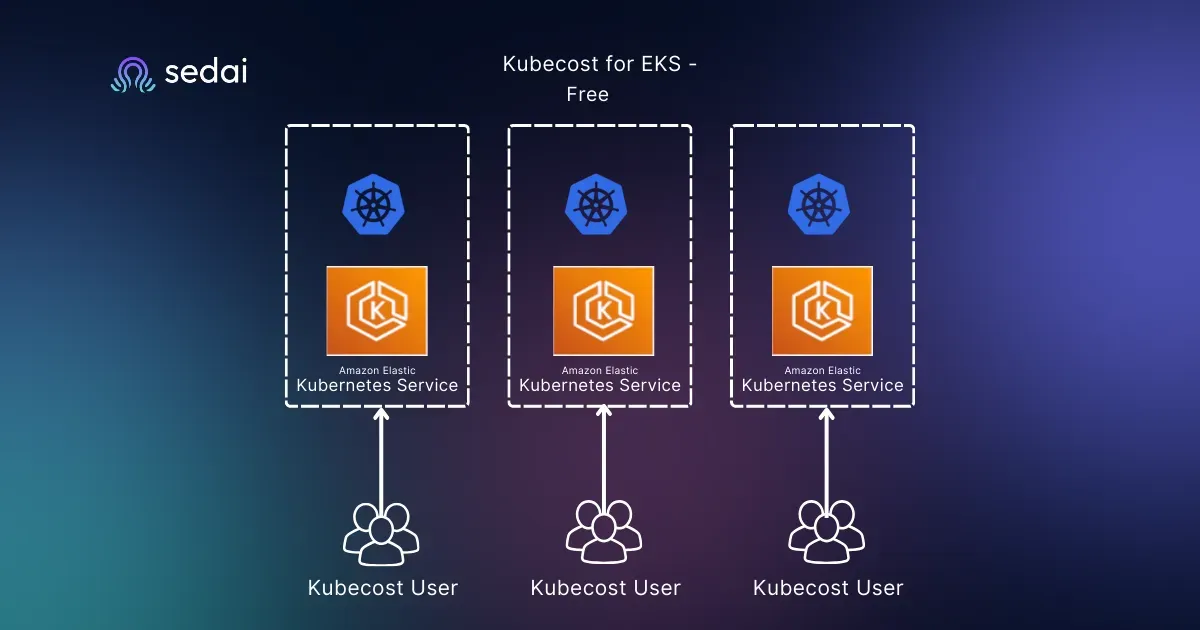

Tools like Kubecost and Goldilocks are effective for gathering rightsizing recommendations, helping organizations identify underutilized or over-committed resources. Applying these insights helps optimize workload performance in real time, ensuring clusters operate efficiently. For instance, Sedai's AI-driven platform integrates these recommendations into its autonomous system, reducing manual oversight requirements.

Practical Scenarios for Rightsizing in Amazon EKS

Rightsizing in EKS can provide substantial cost savings. For example, consider a workload initially configured for peak demands. By rightsizing, companies can save up to 30% on monthly cloud costs by reducing overallocated CPU resources. However, as demands evolve, rightsizing should be revisited to adjust to new requirements and prevent inefficiencies, a process that Sedai's platform can automate.
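To make the arithmetic behind such a scenario concrete, the sketch below estimates the monthly value of CPU freed by lowering requests. Every number is hypothetical, including the price per vCPU-hour and the replica count, and the savings only materialize if the freed capacity lets the cluster run fewer or smaller nodes.

```python
def monthly_cpu_savings(replicas: int, requested_millicores: int,
                        rightsized_millicores: int,
                        price_per_vcpu_hour: float,
                        hours_per_month: int = 730) -> float:
    """Estimate the monthly cost of the vCPU capacity freed by rightsizing."""
    if rightsized_millicores > requested_millicores:
        raise ValueError("rightsized request is larger than the original")
    freed_vcpu = replicas * (requested_millicores - rightsized_millicores) / 1000
    return freed_vcpu * price_per_vcpu_hour * hours_per_month


# Hypothetical workload: 20 replicas reduced from 2000m to 1400m each,
# priced at an assumed 0.04 USD per vCPU-hour.
savings = monthly_cpu_savings(20, 2000, 1400, price_per_vcpu_hour=0.04)
print(f"estimated monthly savings: ${savings:.2f}")
```

Here the request drops by 30% per replica, which frees 12 vCPUs across the deployment. Memory can be estimated the same way with a per-GiB price.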

Addressing Node Optimization in Amazon EKS

Node Selection Best Practices

Selecting the right node instance types for each workload ensures balanced costs and optimal performance. EKS supports a wide range of Amazon EC2 instance types for worker nodes, each suited to specific workloads, and aligning node types with workload requirements minimizes unnecessary overhead. Node groups, for instance, help achieve efficient resource allocation by grouping similar instances.

Node Bin-Packing for Efficient Utilization

Node bin-packing maximizes node utilization by grouping workloads efficiently, reducing unused resources. For EKS clusters, efficient bin-packing can minimize the number of nodes required, saving costs. Additionally, Sedai’s autonomous optimization can assist by dynamically redistributing workloads across nodes, maintaining efficiency as cluster demands shift.
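The idea behind bin-packing can be illustrated with the classic first-fit decreasing heuristic: sort pods by request size, then place each one on the first node with enough room. This is a simplified sketch that considers CPU only and is not how the Kubernetes scheduler or any particular tool actually places pods. The pod names and sizes are hypothetical.

```python
def first_fit_decreasing(pod_requests: dict[str, int],
                         node_capacity: int) -> list[list[str]]:
    """Pack pods onto as few equal-sized nodes as the heuristic finds."""
    nodes: list[list[str]] = []   # pod names placed on each node
    free: list[int] = []          # remaining capacity of each node
    # Largest pods first, so big requests are not stranded at the end.
    ordered = sorted(pod_requests.items(), key=lambda kv: kv[1], reverse=True)
    for pod, request in ordered:
        if request > node_capacity:
            raise ValueError(f"{pod} does not fit on any node")
        for i, remaining in enumerate(free):
            if request <= remaining:
                nodes[i].append(pod)
                free[i] -= request
                break
        else:
            # No existing node has room: start a new one.
            nodes.append([pod])
            free.append(node_capacity - request)
    return nodes


# Hypothetical CPU requests in millicores, on nodes with 2000m allocatable.
pods = {"api": 1500, "worker": 1200, "cache": 800,
        "cron": 500, "proxy": 400, "agent": 300}
for index, placed in enumerate(first_fit_decreasing(pods, 2000)):
    print(f"node {index}: {placed}")
```

With these numbers the six pods fit on three nodes. Inflated requests would push the same pods onto more nodes, which is why rightsizing and bin-packing reinforce each other.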

Autonomous Optimization for EKS Workloads

Integrating Autonomous Optimization in EKS

Autonomous optimization tools like Sedai significantly enhance rightsizing by providing intelligent, AI-driven decision-making. This system allows real-time scaling adjustments without manual intervention, optimizing performance while minimizing costs. Sedai’s platform continuously monitors metrics, ensuring resources remain aligned with fluctuating demands.

Case Example of Autonomous Optimization in Action

For example, a media company with unpredictable traffic peaks adopted Sedai, which dynamically adjusted resources based on real-time usage, resulting in 40% cost savings during peak hours. By autonomously handling these fluctuations, Sedai enabled the company to meet demand without excessive provisioning or performance drops.

Ensuring Efficient Resource Utilization in Amazon EKS

Workload rightsizing in Amazon EKS offers a powerful strategy for balancing cost efficiency with optimal performance. By closely matching resource allocations to actual workload demands, organizations can significantly reduce cloud costs, prevent waste, and maintain high application performance. The key benefits of rightsizing include:

- Optimized Cost Efficiency: By rightsizing workloads, businesses avoid over-provisioning and underutilization, leading to reduced operational costs without sacrificing performance.

- Enhanced Cluster Performance: Rightsizing ensures that applications receive the exact resources they need to function effectively, thereby improving response times and stability across the EKS environment.

Incorporating a blend of autoscalers, like the Horizontal and Vertical Pod Autoscalers, along with rightsizing tools such as Kubecost and Goldilocks, further strengthens this approach. Manual best practices, when paired with these automated tools, enable a well-rounded strategy for maintaining a cost-effective, high-performance Kubernetes environment. Yet, fully autonomous platforms like Sedai take these benefits a step further by continuously monitoring and adjusting workloads, ensuring optimal resource usage in real time.

Understanding the pricing model of Amazon EKS is crucial for effective rightsizing and cost management. Sedai’s insights into AWS EKS pricing help organizations strategize their resource allocations better. Delve into AWS EKS pricing and costs here.

How Autonomous Optimization Can Help

In today’s cloud environment, manual monitoring and rightsizing may not be enough to achieve peak efficiency, especially with fluctuating demands and dynamic workloads. This is where autonomous optimization solutions, like Sedai, bring transformative value.

Sedai's platform leverages advanced AI to provide fully autonomous rightsizing and optimization, making it an invaluable asset for EKS clusters. With Sedai, businesses can offload the responsibility of constant monitoring and manual adjustments, as the platform’s AI continuously evaluates workloads and aligns resources to current needs. Sedai’s automated approach not only boosts performance but also maximizes resource efficiency by preventing common issues such as over-provisioning and under-utilization.

Unlocking Real-Time, Intelligent Decision-Making

With Sedai’s real-time intelligence, organizations benefit from rapid, data-driven adjustments that align with fluctuating application demands. For example, Sedai’s platform detects patterns in usage metrics, automatically reallocating resources to maintain cost-efficiency without sacrificing application stability.

Sedai empowers companies to optimize their EKS environments autonomously, allowing for scalability, adaptability, and enhanced performance without manual intervention. For those interested in experiencing Sedai's impact, we recommend that you schedule a demo. This hands-on look at Sedai’s capabilities can help you understand the full scope of benefits, from minimizing cloud costs to sustaining consistent performance in demanding, dynamic workloads.

By integrating Sedai’s autonomous optimization with rightsizing efforts in Amazon EKS, businesses can ensure they’re getting the best value from their cloud investment, paving the way for efficient and scalable Kubernetes operations.

Implementing rightsizing practices in development and test environments can lead to substantial cost savings. Sedai’s case studies demonstrate how organizations have saved significantly by optimizing their Kubernetes workloads. Read about rightsizing in Kubernetes dev/test environments.

FAQs

1. What is workload rightsizing in Amazon EKS?

Workload rightsizing involves adjusting the CPU, memory, and storage allocations of workloads in Amazon EKS based on actual requirements to optimize performance and cost efficiency. This practice is crucial in ensuring that resources are effectively allocated to meet workload demands.

2. Why is rightsizing important for my EKS workloads?

Rightsizing helps prevent over-provisioning, leading to significant cost savings (up to 30%) while enhancing performance and operational efficiency. By ensuring that workloads only use what they need, organizations can avoid unnecessary expenses and allocate resources more effectively.

3. How can I set resource requests and limits for my EKS pods?

Resource requests and limits can be defined in your pod specifications. Use monitoring tools to assess usage patterns and adjust these values accordingly. This iterative process helps fine-tune resource allocations for optimal workload performance over time.

4. What tools are available for rightsizing in Amazon EKS?

Popular tools include Kubecost, Goldilocks, and Sedai, which provide visibility and recommendations for optimizing resource allocations in EKS. Each tool offers unique features that cater to different aspects of rightsizing, enabling organizations to choose the right solution for their needs.

5. What are the benefits of using Sedai for rightsizing?

Sedai offers autonomous optimization, continuously analyzing workloads and making real-time adjustments to ensure optimal resource allocation and performance. By reducing the need for manual intervention, Sedai helps organizations achieve greater efficiency and responsiveness.

6. How does horizontal pod autoscaler (HPA) differ from vertical pod autoscaler (VPA)?

HPA adjusts the number of pod replicas based on metrics like CPU usage, while VPA modifies resource requests for existing pods based on usage trends. Each autoscaler serves a unique purpose, making it important to understand their functionalities to optimize workload performance effectively.

7. What role does observability play in workload rightsizing?

Observability provides insights into resource usage patterns, enabling teams to make informed adjustments for cost efficiency and performance improvements. This visibility is critical in identifying potential bottlenecks and resource inefficiencies, leading to better decision-making.

8. How can I ensure ongoing rightsizing in my EKS cluster?

By integrating monitoring tools and employing Sedai’s autonomous capabilities, organizations can maintain optimal resource allocations as workload demands change. Continuous monitoring allows for proactive adjustments, ensuring resources are always aligned with current needs.

9. What are the potential risks of not rightsizing my EKS workloads?

Failure to rightsize can lead to resource waste, inflated costs, and degraded application performance due to inadequate resource allocations. These risks can hinder business operations and lead to customer dissatisfaction if workloads do not perform as expected.

10. Can Sedai help with rightsizing in other cloud environments?

Yes, Sedai is designed to support rightsizing across various cloud platforms, making it a versatile solution for organizations with multi-cloud strategies. This flexibility allows businesses to maintain consistency in resource management practices regardless of their cloud infrastructure.
