Introduction

This article is a summary of an insightful panel discussion on the topic of Serverless versus Kubernetes at Sedai's autocon conference. You can watch the full video here.

The panel brought together technical and investment professionals with a wealth of experience. Salil Deshpande, General Partner of Uncorrelated Ventures, moderated the panel. Rachit Lohani, formerly the CTO and SVP of Engineering at Paylocity, has a track record at leading organizations such as Atlassian, Intuit, and Netflix. Siddharth Ram, the current CTO and SVP of Engineering at Inflection, has extensive experience from his roles at Intuit and Qualcomm. Shridhar Pandey, a Senior Product Manager for Lambda, added his expertise to the conversation. Lastly, Kenneth Nguyen, Co-Founder at Tasq, brought insights from both his startup experience and his enterprise background at BP and Shell.

Should All Apps be Serverless?

Salil Deshpande opened the discussion by laying out a chain of logic suggesting that most Kubernetes apps should be stateless. That reasoning prompts the question: “Why not opt for the simplicity of serverless, specifically Lambda?” The aim was to explore whether serverless architectures can streamline operations and help achieve autonomy.

Salil first discussed the intricacies of state management in Kubernetes, drawing on insights from a prominent database company. Three approaches were highlighted:

1. Self-managed state outside of Kubernetes - Maximum Choice, Maximum Effort

It’s simple enough to connect applications and databases declaratively. The benefit of running the database outside Kubernetes on a traditional VM is that you maximize choice. The downside is that you’re creating infrastructure redundancy and additional Ops work. Instead of relying on automation, you’ll have to manually manage at least five capabilities that Kubernetes already provides (monitoring, load balancing, configuration, service discovery, and logging).

2. Cloud data services outside of Kubernetes - Less Choice, Less Effort

It’s also possible to leverage cloud services to run your database outside of Kubernetes. Choosing the DBaaS route eliminates the need for Ops to spin up, scale, and manage the database, and they’re not responsible for a redundant infrastructure stack. It’s an external service, but it doesn’t add the redundancy of a full database stack.

The downside is that you’re stuck with the DBaaS as offered by your cloud services provider, which makes even less sense for those running things in-house or on-prem. And since you don’t have direct access to the infrastructure running the database, fine-tuning performance and managing compliance can be an issue.

3. Asking Kubernetes to manage state - Cloud-Native Simplicity, DevOps Agility

Since its inception, the Kubernetes community has worked to solve some of the existential challenges of achieving maximum fluidity in a world that still mostly runs on persistent storage. How do you maintain the seamless flexibility of distributed pods when state demands that an application and its database stay connected even as pods are added, removed, or restarted? Kubernetes provides two native paths to get there: StatefulSets and DaemonSets. StatefulSets assign each pod a unique, stable ID that keeps application and database containers connected through automation. DaemonSets create a 1:1 relationship between pods and nodes, so that one copy of the pod runs on each node of the cluster. When a node is added to or removed from the cluster, the corresponding pod is automatically added or removed as well.
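The difference between the two controllers can be sketched with a toy model. This is a plain Python illustration, not the Kubernetes API: the class names, the `pods_by_node` helper, and the `name@node` labels are hypothetical. The one detail taken from Kubernetes itself is that StatefulSet pods are named `<name>-<ordinal>`, starting at 0.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class StatefulSetModel:
    """Toy model: each replica gets a stable, ordinal-based identity."""
    name: str
    replicas: int

    def pod_names(self) -> list[str]:
        # Kubernetes names StatefulSet pods <name>-<ordinal>, starting at 0,
        # so a restarted or re-created replica comes back under the same name.
        return [f"{self.name}-{i}" for i in range(self.replicas)]


@dataclass
class DaemonSetModel:
    """Toy model: exactly one pod per node currently in the cluster."""
    name: str
    nodes: set[str] = field(default_factory=set)

    def add_node(self, node: str) -> None:
        self.nodes.add(node)

    def remove_node(self, node: str) -> None:
        self.nodes.discard(node)

    def pods_by_node(self) -> dict[str, str]:
        # The "name@node" label is a hypothetical stand-in for a real pod name.
        return {node: f"{self.name}@{node}" for node in sorted(self.nodes)}


if __name__ == "__main__":
    db = StatefulSetModel("db", replicas=3)
    print(db.pod_names())

    agent = DaemonSetModel("log-agent")
    agent.add_node("node-a")
    agent.add_node("node-b")
    agent.remove_node("node-a")
    print(agent.pods_by_node())
```

In this model, scaling the StatefulSet down and back up yields the same pod names again, which is what lets a database replica find its storage, while the DaemonSet's pod list simply follows the set of nodes.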

The argument in favor of the first two options is that they keep state outside the cluster, which leaves the Kubernetes apps themselves stateless. This led to the critical question: why not move to serverless solutions like Lambda?

The central theme of the panel was the relationship between serverless and Kubernetes, and whether it makes sense to use Kubernetes for stateless applications. Salil Deshpande's position was that if most Kubernetes apps are stateless, it would be simpler to use serverless (specifically Lambda) instead. In his view, running stateless systems on Kubernetes adds unnecessary complexity, while serverless architectures such as Lambda offer a more streamlined solution.
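As a minimal sketch of what "stateless" means here, the handler below follows the `(event, context)` signature that Lambda uses for Python functions and keeps nothing in memory between invocations. All state lives in an external store. `InMemoryStore` and `make_handler` are hypothetical names introduced for illustration; in practice the store would be a managed data service rather than a local dictionary.

```python
import json


class InMemoryStore:
    """Hypothetical stand-in for an external data service (e.g. a DBaaS)."""

    def __init__(self):
        self._counts = {}

    def increment(self, key):
        # Record one more visit for this key and return the new total.
        self._counts[key] = self._counts.get(key, 0) + 1
        return self._counts[key]


def make_handler(store):
    """Build a Lambda-style handler that holds no state of its own."""

    def handler(event, context):
        user = event.get("user", "anonymous")
        visits = store.increment(user)  # all state lives in the external store
        return {
            "statusCode": 200,
            "body": json.dumps({"user": user, "visits": visits}),
        }

    return handler


if __name__ == "__main__":
    store = InMemoryStore()
    # Two independently created handlers model two separate function instances.
    first = make_handler(store)
    second = make_handler(store)
    print(first({"user": "ada"}, None))
    print(second({"user": "ada"}, None))
```

Because the handler itself holds no data, any instance can serve any request, and instances can be created or discarded freely. That property is the same one that makes options 1 and 2 above leave the Kubernetes app stateless.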

Reconsidering Kubernetes: Advocating for Serverless for Streamlined Business Focus

Siddharth Ram opened with skepticism about taking Kubernetes advice from a database company, questioning how well such a company understands the intricacies of Kubernetes.

Siddharth highlighted his experience at Inflection, where they initially considered moving everything to Kubernetes but eventually decided to switch to serverless using Lambda.

He explained that while they wanted to be cloud-native, they found that using Kubernetes introduced a range of complexities and specific knowledge requirements. They had engineers dedicated to managing the Kubernetes cluster, and the risk of losing an engineer meant finding a replacement with similar expertise. Siddharth didn't want his company to become a container management company.

Based on their needs as an eCommerce company, Siddharth believes that serverless, specifically Lambda, is a better fit for most use cases. He emphasizes the simplicity and value of offloading infrastructure management to a service provider like AWS. By adopting Lambda, they were able to leverage the benefits of serverless architecture and integrate it with their autonomous systems.

Regarding concerns about vendor lock-in, Siddharth doesn't view it as a significant issue. He compares it to other technology choices and sees being locked into AWS as an acceptable trade-off for the advantages gained.

Overall, Siddharth's point is that for most cases, serverless (Lambda) makes sense, and he recommends it to others considering the direction to take. He highlights the value of offloading infrastructure management and focusing on core business logic.

Choosing Between Serverless (Lambda) and Kubernetes: Factors to Consider

Rachit Lohani's main point, on the other hand, is that the choice between serverless (specifically Lambda) and Kubernetes depends on the specific problem being solved and the desired outcomes.

He agrees that most Kubernetes apps should be stateless and that serverless architectures like Lambda are simpler and provide autonomy. However, he argues that Kubernetes can also be autonomous and states that both serverless and Kubernetes require similar efforts to achieve autonomy. According to Lohani, the decision to use Kubernetes or serverless depends on factors such as the need for high throughput or low latency, the level of engineering investment required, and the ability to abstract complexity from developers. While his organization has mostly converted to serverless, there are still applications running on Kubernetes due to the existing engineering investment and the satisfactory performance of those applications.

Serverless vs. Kubernetes: Simplicity, Autonomy, and Infrastructure Management in Focus

Shridhar Pandey, the Senior Product Manager of Lambda, shares his perspective on the topic of Serverless versus Kubernetes. He supports the idea that most Kubernetes applications should be stateless and argues that if that is the case, it is simpler to use Lambda or Serverless functions instead. He highlights that the first two options presented in the white paper of a popular database company, where the state is managed outside of Kubernetes or using a cloud data service, align with the stateless approach. According to Shridhar, moving to Serverless, particularly Lambda, allows for greater autonomy and offloading the infrastructure management to a cloud provider like AWS.

Overall, Shridhar Pandey's point in the panel is that for stateless applications, using Serverless options like Lambda can provide simplicity, autonomy, and a reduction in infrastructure management, while acknowledging that there may be exceptions and engineering considerations that influence the choice between Serverless and Kubernetes.

Startups: Benefits, Use Cases, and Vendor Lock-in

Kenneth Nguyen believes that most Kubernetes apps should be stateless and that using serverless technologies like Lambda would be simpler and more autonomous. He supports this by referencing a popular database company's white paper, which suggests running databases outside of Kubernetes or using a cloud data service, either of which makes the Kubernetes app stateless. Kenneth argues that the complexity of managing stateful applications in Kubernetes can be avoided by adopting serverless architectures. He described the experience of moving to serverless at his own company, Tasq, and the benefits it brought, including increased autonomy.

He also addresses concerns about vendor lock-in, stating that being locked into a specific cloud provider like AWS is acceptable given the other technology choices made in software development.

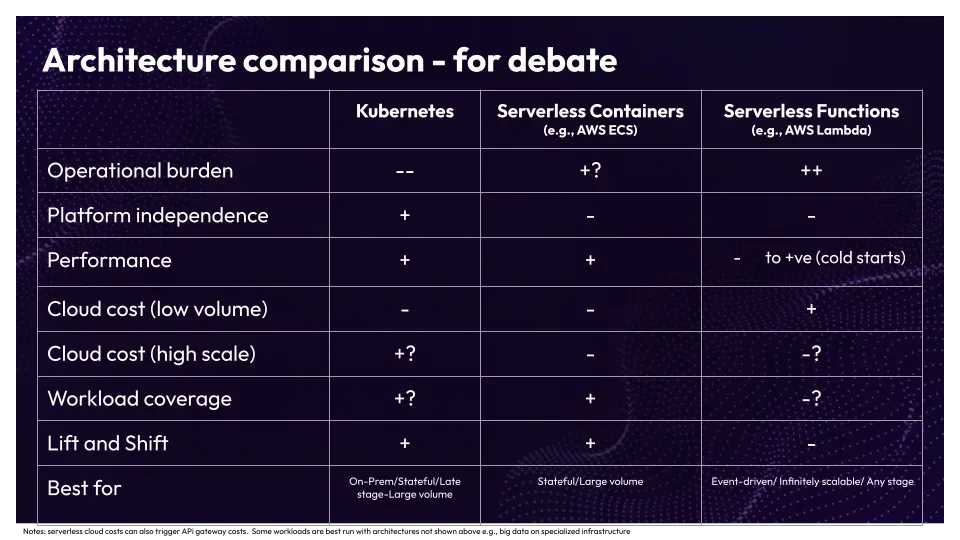

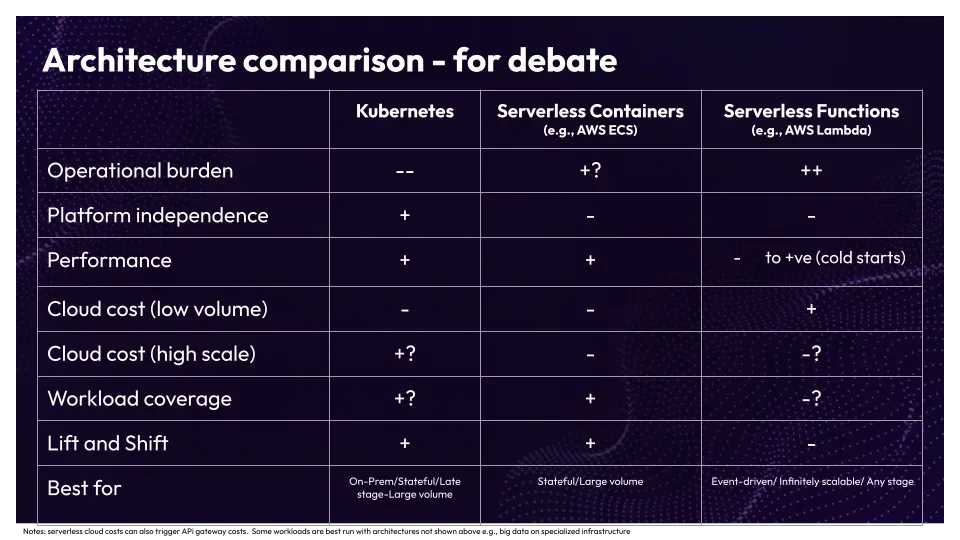

Architecture Comparison: Kubernetes vs Serverless Containers vs Serverless Functions

Conclusion

The panelists explored a comparison chart that assessed the operational burden of Kubernetes versus serverless functions. While there was some disagreement on the specific weights assigned to each factor, the panelists generally agreed that minimizing operational burden is crucial. They highlighted the importance of considering the value-to-cost ratio, with operational burden taking precedence over cloud cost. The consensus was that technologies like AWS Lambda provide significant value by reducing operational burden, particularly for startups and ventures focused on product-market fit. As businesses scale, Kubernetes becomes a more viable choice due to increased complexity and the need for dedicated teams to manage workloads.