Identifying unused EC2 resources is crucial for optimizing AWS costs and maintaining a lean infrastructure. Idle EC2 instances, unattached Elastic IPs, and unused EBS volumes continue to accumulate charges without providing any value. By monitoring key metrics like CPU utilization, network traffic, and disk I/O, you can spot underutilized resources before they drain your budget. Tools like Sedai help automate this process, identifying and shutting down idle instances, ensuring your infrastructure remains cost-efficient.

When AWS EC2 costs rise while workloads remain stable, it is often the first sign that hidden waste is building.

Teams usually focus their optimization efforts on active services, while unused instances, idle Elastic IPs, and forgotten volumes quietly continue to accumulate charges in the background.

This problem is more common than most teams realize. Idle or stopped resources can account for 10-15% of an AWS bill, which can mean thousands of dollars spent each month without delivering any value.

That level of silent spend shows an opportunity to tighten cloud efficiency. This is where EC2 cleanup and optimization make a real impact.

By identifying orphaned resources, rightsizing instances, and reclaiming unused capacity, you keep your environment lean, cost-efficient, and aligned with actual usage.

In this blog, you’ll explore how to spot these hidden charges and clean them up, so your AWS setup stays efficient, lean, and easier on the budget.

What is an Idle EC2 Resource?

An idle EC2 resource refers to an EC2 instance that’s running but not doing any meaningful work.

Even though it’s powered on and consuming compute, memory, storage, and networking resources, it isn’t actively processing requests or supporting your application in any real way.

These instances quietly accumulate costs in the background, charging you for compute time, attached storage, and even minimal network activity, all without delivering any value in return.

The key indicators of idle EC2 resources include:

- Low CPU Utilization: Instances that consistently operate below 10% CPU usage, showing they aren’t performing any meaningful compute activity.

- No Network Traffic: Instances with little to no inbound or outbound traffic, which suggests they aren’t serving requests or transferring data across systems.

- Minimal or No Disk I/O: Instances that aren’t interacting with their attached EBS volumes (no reads, writes, or other storage operations), which indicates a lack of active workloads.

- Inactive Applications: EC2 instances originally set up for a task or service that’s no longer in use, or workloads that have been shifted to other environments such as containers or serverless platforms.
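
The indicators above can be combined into a single check. The following is a minimal sketch, not a definitive rule: the 10% CPU threshold comes from the list above, while the network and disk thresholds, the `InstanceMetrics` type, and the `is_idle` function are illustrative assumptions that you would tune for your own workloads.

```python
from dataclasses import dataclass


@dataclass
class InstanceMetrics:
    """Values summarized over a review window (for example, the last 14 days)."""
    instance_id: str
    avg_cpu_percent: float  # average of the CloudWatch CPUUtilization metric
    network_bytes: float    # NetworkIn + NetworkOut over the window
    disk_ops: float         # EBSReadOps + EBSWriteOps over the window


def is_idle(m: InstanceMetrics,
            cpu_threshold: float = 10.0,            # from the indicator list
            network_threshold: float = 5_000_000,   # assumed value
            disk_ops_threshold: float = 100) -> bool:  # assumed value
    """Flag an instance only when every signal is below its threshold."""
    return (m.avg_cpu_percent < cpu_threshold
            and m.network_bytes < network_threshold
            and m.disk_ops < disk_ops_threshold)
```

Requiring all three signals to be low is a deliberately conservative choice: an instance with quiet CPU but steady network traffic may still be doing useful work, so it is not flagged.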

Knowing what an idle EC2 resource is makes it clear why identifying them matters for managing costs and performance.

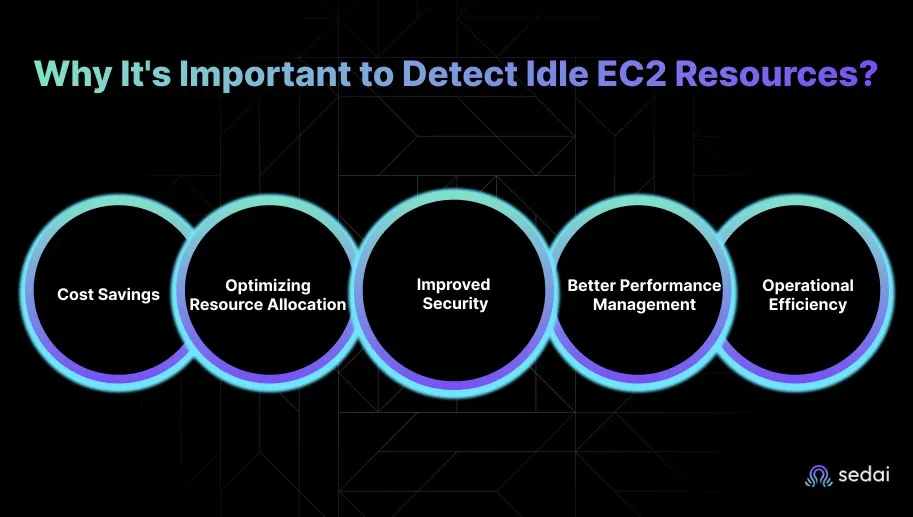

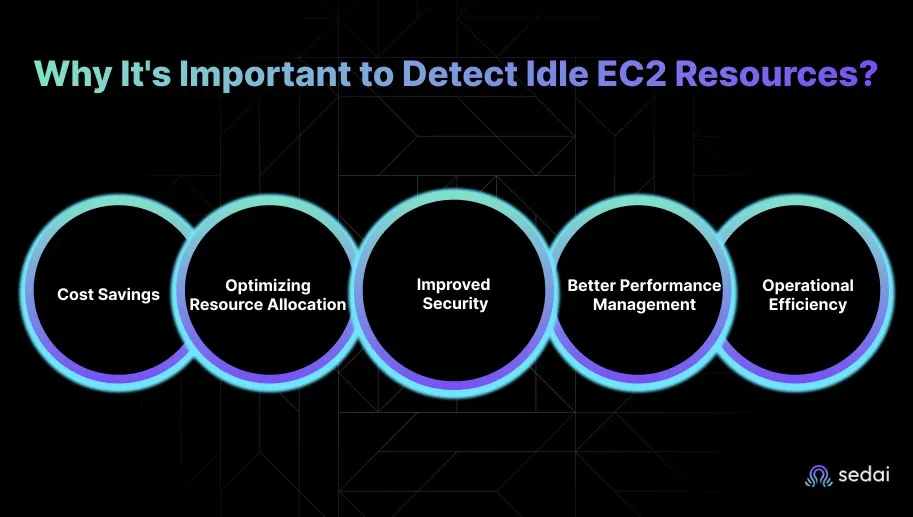

Why Is It Important to Detect Idle EC2 Resources?

Detecting idle EC2 resources is a crucial part of keeping your cloud environment efficient and cost-effective. When an EC2 instance sits running without doing any real work, it still racks up compute, storage, and networking charges.

Below are the key reasons to detect idle EC2 resources.

1. Cost Savings

Idle EC2 instances continue to generate full charges for compute, storage, and network usage even when they’re not doing meaningful work.

Identifying and shutting down these instances can significantly reduce your cloud bill, in some environments by as much as 30%, depending on how many underutilized resources are running.
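
To get a rough sense of scale, the arithmetic can be sketched in a few lines. The hourly rate and instance count below are hypothetical placeholders, not real AWS prices, and the function name `monthly_idle_cost` is made up for this example.

```python
HOURS_PER_MONTH = 730  # commonly used average number of hours in a month


def monthly_idle_cost(hourly_rate_usd: float,
                      instance_count: int,
                      hours: int = HOURS_PER_MONTH) -> float:
    """Estimate the monthly compute cost of instances that run but do no work."""
    return round(hourly_rate_usd * instance_count * hours, 2)


# Hypothetical example: five idle instances at $0.10 per hour
print(monthly_idle_cost(0.10, 5))  # 365.0
```

This counts compute only; attached storage and public IP addresses would add to the figure.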

2. Optimizing Resource Allocation

When EC2 instances sit idle, it’s usually a sign of overprovisioning. Detecting these underused resources helps you right-size your infrastructure and match capacity to actual demand.

This ensures you’re only paying for the resources you truly need, rather than maintaining excess compute power that doesn’t contribute to your workloads.

3. Improved Security

Idle instances can unintentionally create security gaps. Even when they’re not in use, these instances may still have open ports, outdated configurations, or unnecessary access permissions.

Removing or shutting down idle resources reduces your attack surface and strengthens your cloud environment's overall security posture.

4. Better Performance Management

Idle EC2 resources often contribute to cloud sprawl, leaving unnecessary instances running across accounts, regions, or VPCs.

Regularly auditing and identifying these instances helps you simplify environments, reduce clutter, and maintain better control over performance management.

5. Operational Efficiency

Automating the detection of idle resources helps reduce manual overhead for engineering teams. By setting up monitoring, alerts, and automated policies, your team can proactively manage idle EC2 instances and focus their time on strategic initiatives.

Once you understand why detecting idle EC2 resources is essential, it’s helpful to know which types of resources are most likely to become idle.


Which EC2 Resources Are Most Likely to Become Idle?

Certain EC2 resources tend to become idle more often simply because of how they’re provisioned or used within cloud environments. Spotting them early helps you avoid unnecessary cloud spend and keeps infrastructure clean and efficient.

Below is a list of EC2 resources that are most likely to become idle.

1. EC2 Instances with Low-Traffic Workloads

Instances that support applications with inconsistent or low traffic are among the most common sources of idle resources.

Dev and test environments, staging setups, and infrequent batch-processing jobs often stay powered on even when no one is using them. Since these workloads don’t run continuously, the instances spend much of their time idle.

2. EC2 Instances in Auto Scaling Groups (ASGs)

Auto Scaling Groups spin up extra instances during traffic spikes, but those instances can linger after demand drops.

If scaling policies aren’t configured well, the extra capacity remains active longer than needed, leading to idle instances that continue generating charges.

3. Unattached Elastic IPs (EIPs)

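Elastic IPs might seem harmless, but AWS keeps charging for them when they’re not attached to a running instance. Since February 2024, AWS bills every public IPv4 address by the hour, so an unattached EIP is pure cost with no workload behind it. It’s easy to forget about EIPs after terminating instances, making them a common and unnecessary source of idle cost.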

4. Unused EBS Volumes

Detached or unused EBS volumes keep accruing storage costs even after the instances they were attached to are gone.

These volumes often remain from past tests, temporary environments, or forgotten deployments, quietly adding to overall spend while providing zero value.

5. Underutilized Reserved Instances (RIs)

Reserved Instances are meant to save money, but when they’re mismatched with actual usage, they end up becoming idle.

For example, reserving capacity for a larger instance type than your workload needs results in underutilization, which is essentially another form of idle resource.

6. Load Balancers with Low or No Traffic

Elastic Load Balancers that no longer serve meaningful traffic can also fall into the idle category. Since ELBs incur hourly charges regardless of usage, an unused load balancer can inflate costs without improving performance or availability.

7. EC2 Spot Instances

Spot Instances offer great savings, but if they remain running without handling any workload, they become idle just like any other instance. Without proper lifecycle management, unused Spot Instances can sit unnoticed, adding up to avoidable costs.

Once you know which EC2 resources are prone to idling, the next step is learning how to identify them effectively.

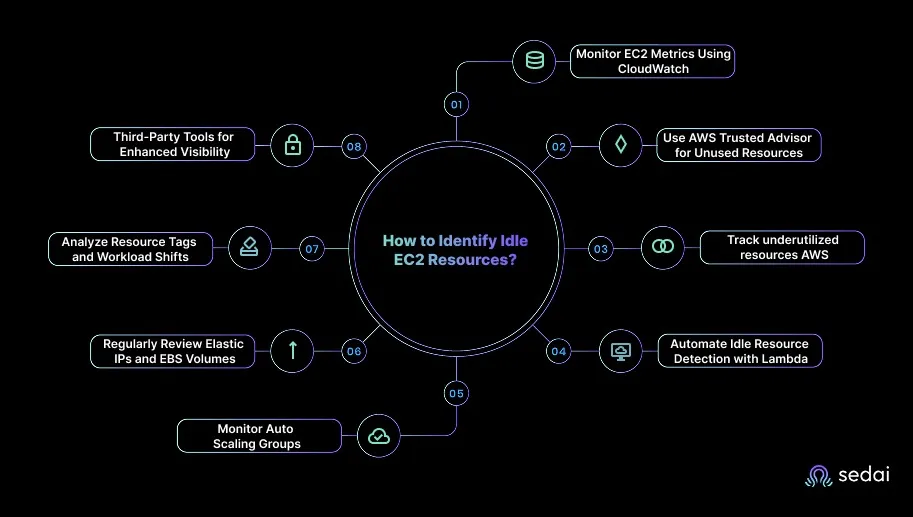

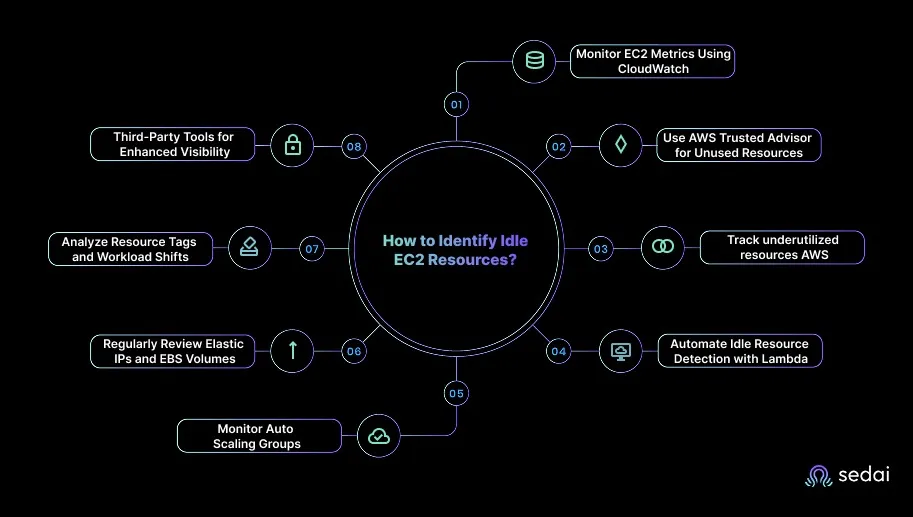

How to Identify Idle EC2 Resources?

Identifying idle EC2 resources requires a mix of intelligent monitoring, automated checks, and proactive engineering practices.

You can rely on a combination of AWS-native services and third-party optimization tools to accurately spot underutilized instances before they inflate cloud bills.

Here’s how you can effectively detect idle EC2 resources:

1. Monitor EC2 Metrics Using CloudWatch

- Use CloudWatch Metrics Insights to create custom queries that identify EC2 instances running with low resource utilization across your AWS environment.

- Configure CloudWatch to trigger notifications when CPU utilization falls below a specific threshold for a defined time period, allowing you to review and act on potential idle resources.
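
As one way to set up such a notification, the sketch below builds the arguments for a CloudWatch alarm that fires when average CPU stays under a threshold for 24 consecutive hourly periods. The parameter names are those accepted by boto3's `put_metric_alarm`; the helper name `low_cpu_alarm_params`, the alarm naming scheme, the SNS topic ARN, and the default threshold and window are assumptions for illustration.

```python
def low_cpu_alarm_params(instance_id: str,
                         sns_topic_arn: str,
                         threshold_percent: float = 10.0,
                         hours: int = 24) -> dict:
    """Build keyword arguments for cloudwatch.put_metric_alarm()."""
    return {
        "AlarmName": f"idle-ec2-{instance_id}",  # hypothetical naming scheme
        "Namespace": "AWS/EC2",
        "MetricName": "CPUUtilization",
        "Dimensions": [{"Name": "InstanceId", "Value": instance_id}],
        "Statistic": "Average",
        "Period": 3600,                  # one-hour data points
        "EvaluationPeriods": hours,      # all of them must breach
        "Threshold": threshold_percent,
        "ComparisonOperator": "LessThanThreshold",
        "TreatMissingData": "notBreaching",  # a stopped instance is not "idle"
        "AlarmActions": [sns_topic_arn],
    }


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   params = low_cpu_alarm_params("i-0123456789abcdef0", topic_arn)
#   boto3.client("cloudwatch").put_metric_alarm(**params)
```

Routing the alarm to an SNS topic keeps a human in the loop: the alarm prompts a review rather than stopping anything on its own.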

2. Use AWS Trusted Advisor for Unused Resources

- AWS Trusted Advisor provides recommendations on underutilized EC2 instances by analyzing historical data. It offers insights into instances with low CPU utilization, excessive reserved capacity, or over-provisioning.

- Regularly review Trusted Advisor’s "Cost Optimization" checks to identify instances that are not performing optimally.

3. Use AWS Cost Explorer to Track Underutilized Resources

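- AWS Cost Explorer helps track and analyze EC2 cost and usage patterns over time, and its rightsizing recommendations highlight instances that look idle or oversized.

- Filter and group by service, usage type, instance type, or tag to identify spend that stays constant regardless of demand or shows irregular spikes.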
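
Cost Explorer's rightsizing recommendations can also be read programmatically. The sketch below assumes a response shaped like the one boto3's `get_rightsizing_recommendation` returns (a `RightsizingRecommendations` list whose entries carry `RightsizingType`, `CurrentInstance`, and `TerminateRecommendationDetail`); the helper name `terminate_candidates` is hypothetical.

```python
from typing import Dict, List


def terminate_candidates(response: Dict) -> List[Dict]:
    """Pick out instances Cost Explorer suggests terminating, largest savings first."""
    candidates = []
    for rec in response.get("RightsizingRecommendations", []):
        if rec.get("RightsizingType") != "TERMINATE":
            continue  # MODIFY recommendations are resize suggestions, not idle instances
        detail = rec.get("TerminateRecommendationDetail", {})
        candidates.append({
            "instance_id": rec["CurrentInstance"]["ResourceId"],
            # The API returns amounts as strings, so convert for sorting
            "estimated_monthly_savings": float(detail.get("EstimatedMonthlySavings", 0)),
        })
    return sorted(candidates,
                  key=lambda c: c["estimated_monthly_savings"],
                  reverse=True)


# Usage, assuming boto3 is installed and rightsizing recommendations are enabled:
#   import boto3
#   resp = boto3.client("ce").get_rightsizing_recommendation(Service="AmazonEC2")
#   for c in terminate_candidates(resp):
#       print(c)
```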

4. Automate Idle Resource Detection with Lambda

- Use AWS Lambda to automate the detection of idle EC2 instances based on CPU, network, or disk activity. For instance, Lambda functions can check CloudWatch metrics and automatically stop idle instances after a defined period.

- Create Lambda scripts that periodically check for idle EC2 instances and notify you or automatically stop instances with no activity for 24+ hours, especially for non-production or test environments.
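
A Lambda function along these lines might look like the sketch below. It is one possible shape, not a reference implementation: the `Environment` tag with `dev` and `test` values, the 10% threshold, and the `stop` flag in the event payload are all assumptions, and the function only stops instances when that flag is set, so the default behavior is report-only.

```python
from datetime import datetime, timedelta, timezone


def average_cpu(cloudwatch, instance_id, hours=24):
    """Return average CPUUtilization over the window, or None if there is no data."""
    end = datetime.now(timezone.utc)
    resp = cloudwatch.get_metric_statistics(
        Namespace="AWS/EC2",
        MetricName="CPUUtilization",
        Dimensions=[{"Name": "InstanceId", "Value": instance_id}],
        StartTime=end - timedelta(hours=hours),
        EndTime=end,
        Period=3600,
        Statistics=["Average"],
    )
    points = [p["Average"] for p in resp["Datapoints"]]
    return sum(points) / len(points) if points else None


def find_idle_instances(ec2, cloudwatch, cpu_threshold=10.0, hours=24):
    """List running non-production instances whose average CPU is below the threshold."""
    idle = []
    paginator = ec2.get_paginator("describe_instances")
    pages = paginator.paginate(Filters=[
        {"Name": "instance-state-name", "Values": ["running"]},
        {"Name": "tag:Environment", "Values": ["dev", "test"]},  # assumed tag scheme
    ])
    for page in pages:
        for reservation in page["Reservations"]:
            for inst in reservation["Instances"]:
                cpu = average_cpu(cloudwatch, inst["InstanceId"], hours)
                # Instances without data points (e.g. just launched) are skipped
                if cpu is not None and cpu < cpu_threshold:
                    idle.append(inst["InstanceId"])
    return idle


def lambda_handler(event, context):
    import boto3  # provided by the Lambda Python runtime

    ec2 = boto3.client("ec2")
    idle = find_idle_instances(ec2, boto3.client("cloudwatch"))
    if idle and event.get("stop", False):
        ec2.stop_instances(InstanceIds=idle)
    return {"idle_instances": idle}
```

Passing the clients into `find_idle_instances` keeps the detection logic testable without AWS access. Triggering the handler on a schedule (for example, with an EventBridge rule) gives the periodic check described above.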

5. Monitor Auto Scaling Groups

- EC2 instances in Auto Scaling Groups (ASGs) can often remain idle when demand drops. Review your ASG settings to ensure scaling policies match actual traffic and workloads.

- Audit Auto Scaling Group metrics in CloudWatch to ensure scaling actions align with usage patterns, and adjust policies to prevent overprovisioning of idle EC2 instances during low-demand periods.

6. Regularly Review Elastic IPs and EBS Volumes

- Elastic IPs (EIPs) that are not associated with any running EC2 instance still incur costs. Similarly, detached EBS volumes accumulate storage costs even when not in use.

- Set up periodic reviews using AWS Lambda to identify and release unattached EIPs and unused EBS volumes that are no longer required.
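
The review itself comes down to two filters over the EC2 API responses. The sketch below works on dictionaries shaped like the output of boto3's `describe_addresses` and `describe_volumes`; the function names are hypothetical. Note that it only catches Elastic IPs with no association at all, so an address attached to a stopped instance would need a separate check.

```python
from typing import Dict, List


def unassociated_addresses(describe_addresses_response: Dict) -> List[str]:
    """Elastic IPs with no AssociationId are not attached to anything."""
    return [
        addr.get("AllocationId", addr.get("PublicIp"))
        for addr in describe_addresses_response.get("Addresses", [])
        if "AssociationId" not in addr
    ]


def unattached_volumes(describe_volumes_response: Dict) -> List[str]:
    """EBS volumes in the 'available' state are not attached to any instance."""
    return [
        vol["VolumeId"]
        for vol in describe_volumes_response.get("Volumes", [])
        if vol.get("State") == "available"
    ]


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   ec2 = boto3.client("ec2")
#   print(unassociated_addresses(ec2.describe_addresses()))
#   print(unattached_volumes(ec2.describe_volumes()))
```

Reporting the IDs first, and releasing addresses or deleting volumes only after a review (and a snapshot, where the data might matter), avoids removing something that is detached on purpose.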

7. Analyze Resource Tags and Workload Shifts

- Tagging EC2 instances and other resources by environment, owner, or project makes it easier to identify idle or obsolete instances. Look for tags indicating that resources are no longer required or have been superseded by newer, more optimized configurations.

- Use AWS Config rules to flag questionable instances automatically: the managed rule required-tags reports instances missing the tags that tie them to an active project or workload, and ec2-stopped-instance reports instances that have stayed stopped longer than an allowed number of days.
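
A tag audit can also be scripted directly. The sketch below checks instances from a `describe_instances` response against a set of required tag keys; the keys in `REQUIRED_TAGS` and the function name are assumptions standing in for whatever tagging policy your team uses.

```python
from typing import Dict, Iterable, List

REQUIRED_TAGS = frozenset({"Environment", "Owner", "Project"})  # hypothetical policy


def instances_missing_tags(reservations: Iterable[Dict],
                           required=REQUIRED_TAGS) -> Dict[str, List[str]]:
    """Map each instance ID to the required tag keys it lacks."""
    result = {}
    for reservation in reservations:
        for inst in reservation.get("Instances", []):
            present = {tag["Key"] for tag in inst.get("Tags", [])}
            missing = set(required) - present
            if missing:
                result[inst["InstanceId"]] = sorted(missing)
    return result


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   resp = boto3.client("ec2").describe_instances()
#   print(instances_missing_tags(resp["Reservations"]))
```

Untagged instances are not necessarily idle, but they are the ones nobody can vouch for, which makes them a sensible starting point for a review.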

8. Third-Party Tools for Enhanced Visibility

- Tools like Sedai offer deeper insights and advanced analytics on resource usage. These tools identify idle instances by aggregating and correlating EC2 metrics, cost data, and usage patterns across your AWS environment.

- Use third-party cost management tools to get a consolidated view of idle resources and optimize cloud infrastructure in real time.

Once idle EC2 resources are identified, it’s important to follow smart practices to manage them and reduce waste.


Smart Practices to Manage Idle EC2 Resources and Reduce Waste

Managing idle EC2 resources is essential for optimizing cloud costs and maintaining efficient operations. When done correctly, it helps you eliminate waste, improve utilization, and ensure that infrastructure aligns with real workload demands rather than assumptions.

Below are advanced, practical strategies to help you manage idle EC2 instances and keep your environment running efficiently.

1. Automate EC2 Instance Shutdown During Off-Hours

Cut unnecessary costs by using Instance Scheduler on AWS to automatically start and stop EC2 instances according to predefined schedules, ensuring they run only when needed.

Apply scheduled shutdowns for dev or test environments so they aren’t active during non-critical hours.

Tip: Consider combining scheduled shutdowns with alert notifications to catch any instances that must stay active unexpectedly. Reviewing these schedules monthly ensures savings without impacting business operations.
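
The core of any off-hours schedule is a simple time-window check. The sketch below is not how Instance Scheduler is implemented; it only illustrates the decision, with assumed working hours (08:00 to 19:00, Monday to Friday) and a hypothetical function name.

```python
from datetime import datetime, time


def should_be_running(now: datetime,
                      start: time = time(8, 0),      # assumed start of working day
                      end: time = time(19, 0),       # assumed end of working day
                      workdays=(0, 1, 2, 3, 4)) -> bool:  # Monday=0 ... Friday=4
    """Return True if a dev/test instance should be up at the given local time."""
    return now.weekday() in workdays and start <= now.time() < end


# A Monday morning falls inside the window; a Saturday does not
print(should_be_running(datetime(2024, 1, 8, 10, 0)))  # True
print(should_be_running(datetime(2024, 1, 6, 10, 0)))  # False
```

With these assumed hours an instance runs 55 of the 168 hours in a week, so roughly two thirds of its compute hours are avoided.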

2. Use EC2 Auto Scaling with Proper Scaling Policies

Configure Auto Scaling policies that adjust EC2 capacity in response to real-time demand, ensuring you use only the resources required at any given time.

Review your scaling thresholds regularly so they reflect actual usage patterns and prevent overprovisioning.

Tip: Analyze historical traffic trends to fine-tune scaling triggers for better efficiency. Incorporate predictive scaling to proactively handle peak loads while minimizing idle resources.
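
One common way to tie capacity to demand is a target tracking policy, which scales out and back in to hold a metric near a target value. The sketch below builds the arguments for boto3's `put_scaling_policy`; the 50% CPU target, the policy naming scheme, and the helper name are assumptions to adjust for your workload.

```python
def target_tracking_policy(asg_name: str, target_cpu: float = 50.0) -> dict:
    """Build keyword arguments for autoscaling.put_scaling_policy()."""
    return {
        "AutoScalingGroupName": asg_name,
        "PolicyName": f"{asg_name}-cpu-target",  # hypothetical naming scheme
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingConfiguration": {
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ASGAverageCPUUtilization",
            },
            "TargetValue": target_cpu,
            # Keep scale-in enabled so extra instances are removed after a spike
            "DisableScaleIn": False,
        },
    }


# Usage, assuming boto3 is installed and credentials are configured:
#   import boto3
#   boto3.client("autoscaling").put_scaling_policy(**target_tracking_policy("web-asg"))
```

Leaving scale-in enabled is the part that matters for idle capacity: a policy that only scales out will keep the instances it added long after demand drops.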

3. Utilize EC2 Spot Instances for Non-Critical Workloads

For flexible or fault-tolerant workloads, switch to EC2 Spot Instances to significantly reduce compute costs. Integrate Spot capacity into your Auto Scaling groups so your application stays efficient and adaptable.

Just ensure you have interruption handling in place to preserve progress and fail over to on-demand instances when needed.

Tip: Track Spot interruption rates and maintain a small pool of on-demand instances as fallback. Automating workload migration between Spot and on-demand ensures continuous operation without overspending.

4. Implement EC2 Hibernation for Temporary Workloads

Enable EC2 Hibernation for workloads that run intermittently, such as temporary testing environments or periodic batch jobs.

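Hibernation saves the instance’s in-memory state to its EBS root volume and stops the instance, so you can resume later where you left off. While an instance is hibernated you don’t pay for instance usage, but you still pay for the attached EBS volumes (including the space that holds the saved memory contents) and any Elastic IPs. Hibernation also has to be enabled when the instance is launched.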

Tip: Use tagging to differentiate hibernating instances from active ones for easier tracking. Combining hibernation with snapshot backups adds an extra layer of data protection.
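
Because only instances launched with hibernation enabled can hibernate, a shutdown script has to treat the two groups differently. The sketch below reads the `HibernationOptions` field that `describe_instances` returns for each instance and builds the matching `stop_instances` arguments; the function name `plan_stop_requests` is hypothetical.

```python
from typing import Dict, Iterable, List


def plan_stop_requests(instances: Iterable[Dict]) -> List[Dict]:
    """Group instances into hibernate and plain-stop calls for ec2.stop_instances()."""
    hibernate, stop = [], []
    for inst in instances:
        if inst.get("HibernationOptions", {}).get("Configured"):
            hibernate.append(inst["InstanceId"])
        else:
            stop.append(inst["InstanceId"])

    requests = []
    if hibernate:
        requests.append({"InstanceIds": hibernate, "Hibernate": True})
    if stop:
        requests.append({"InstanceIds": stop})  # regular stop, memory state is lost
    return requests


# Usage, assuming boto3 is installed and `instances` comes from describe_instances:
#   import boto3
#   ec2 = boto3.client("ec2")
#   for kwargs in plan_stop_requests(instances):
#       ec2.stop_instances(**kwargs)
```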

5. Regularly Review and Clean Up Elastic IPs (EIPs)

Use AWS Lambda to automatically spot Elastic IPs that aren’t linked to running EC2 instances and release them to avoid extra costs.

Make reviewing EIPs a regular practice, especially after workload migrations or instance cleanups. This helps ensure you’re only paying for public IPs you actively use.

Tip: Implement automated reporting for unattached IPs and idle volumes to stay proactive. Periodic cost audits help identify patterns and prevent recurring waste.

6. Optimize Load Balancers Based on Traffic

Monitor Elastic Load Balancers with CloudWatch to find those receiving little or no traffic. Consolidate or remove ELBs that don’t add value, so you maintain only essential load balancers.

Eliminating unused ELBs helps reduce waste and keeps your networking costs focused on active workloads.

Tip: Use CloudWatch metrics such as request counts and healthy host counts to identify underused backend targets that could be consolidated. Scheduling quarterly load balancer reviews keeps networking costs in check and performance optimal.
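
Once the request counts are collected, spotting idle load balancers is a matter of summing them. The sketch below assumes you have already gathered daily `RequestCount` sums per load balancer from CloudWatch; the input format, the 100-request cutoff, and the function name are illustrative assumptions.

```python
from typing import Dict, List


def idle_load_balancers(daily_request_counts: Dict[str, List[float]],
                        min_requests: float = 100) -> List[str]:
    """Return load balancers whose total requests over the window fall below the cutoff."""
    return sorted(
        name
        for name, counts in daily_request_counts.items()
        if sum(counts) < min_requests
    )


# Hypothetical seven-day request totals per load balancer
counts = {
    "prod-web": [12000, 11800, 13050, 12500, 9000, 4000, 4200],
    "old-staging": [0, 0, 3, 0, 0, 0, 0],
    "demo-2022": [],
}
print(idle_load_balancers(counts))  # ['demo-2022', 'old-staging']
```

A load balancer with no data points at all sums to zero and is flagged, which is usually what you want: no metrics over a full week means nothing was routed through it.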

How Sedai Delivers Autonomous Optimization for EC2

Many EC2 optimization efforts depend on periodic reviews, guess-based instance choices, and reactive scaling policies, which often leave gaps in performance and cost control.

Those gaps become expensive, as mis-sized EC2 instances, unused capacity, and delayed tuning decisions quickly inflate cloud bills and affect application performance.

Sedai closes this gap by continuously learning how workloads behave, predicting usage patterns, and autonomously adjusting EC2 configurations as conditions shift.

Instead of relying on static thresholds or scheduled clean-ups, Sedai makes decisions in real time, keeping EC2 environments right-sized, efficient, and stable without manual intervention.

Here’s what Sedai delivers:

- Autonomous rightsizing and commitment optimization: Sedai examines CPU, memory, and I/O patterns, selecting the most efficient instance sizes and types while updating commitments safely. This intelligence drives 30%+ reduced cloud costs while maintaining reliability.

- Performance-driven tuning across instance fleets: Sedai identifies workloads that need more or fewer compute resources. These continuous adjustments result in a 75% improvement in application performance.

- Early anomaly detection and automated remediation: Sedai detects performance drift, such as saturation or inefficient scaling, and resolves it autonomously. It contributes to 70% fewer failed customer interactions (FCIs).

- Self-driving optimization actions at scale: Sedai executes thousands of optimization tasks autonomously, including resizing, instance transitions, scaling adjustments, and lifecycle actions. It helps teams achieve 6× greater engineering productivity.

- Enterprise-grade, multi-cloud proven efficiency: Sedai continuously manages optimization for large EC2 estates alongside Azure, GCP, and Kubernetes workloads, backed by $3B+ cloud spend managed across global environments.

Sedai turns EC2 optimization from reactive cleanup into autonomous, real-time decision making. You gain predictable performance, lower compute spend, and a fleet that continuously aligns with workload behavior, without tuning everything by hand.

If you're addressing unused EC2 resources in AWS with Sedai, use our ROI calculator to estimate the return on investment by modeling the cost savings from identifying and eliminating idle or underutilized resources.


Final Thoughts

Spotting unused resources in EC2 is a good starting point for cutting cloud waste, but the real value comes from creating a long-term, proactive cost strategy. Instead of reacting only when idle resources pile up, automation helps you stay ahead.

Tools like AWS Lambda and Sedai make this possible by monitoring your EC2 environment continuously and adjusting resources as demand shifts.

Sedai goes a step further by automatically optimizing instances, scaling workloads efficiently, and keeping performance steady without manual effort.

Take control of your EC2 setup and start eliminating wasted spend from day one with Sedai.

FAQs

1. How can I ensure that EC2 instances are properly tagged to identify unused resources?

A1. Proper tagging makes it easier to track EC2 usage and quickly spot resources that are sitting idle. By adding clear labels such as “Production,” “Test,” or “Inactive,” you can filter and group instances by purpose or workload.

2. Can I automate the termination of idle EC2 instances without manual intervention?

A2. Yes, you can automate the entire process using AWS Lambda and CloudWatch metrics. Lambda functions can monitor key indicators, such as CPU utilization or network traffic, and automatically stop or terminate instances that remain idle for a specified window.

3. What are the best practices for monitoring EC2 instances to detect idle resources?

A3. The most effective approach is to set up CloudWatch alarms for consistently low CPU usage, minimal network activity, or little to no disk I/O. Reviewing AWS Trusted Advisor and Cost Explorer recommendations also catches underutilized instances early.

4. How can I identify and manage unused EC2 Spot Instances?

A4. Spot Instances are cost-efficient, but they can still run idle if not monitored. Checking CloudWatch metrics for low CPU or network activity can help you identify unused instances.

5. Are there any hidden costs associated with idle EC2 resources like Elastic IPs or unused EBS volumes?

A5. Yes, unused Elastic IPs still incur charges when they aren’t attached to running instances, and detached EBS volumes continue to accumulate storage costs. Automating cleanup with AWS Lambda or regularly reviewing unused EIPs and volumes through AWS Trusted Advisor keeps your cloud environment clean and cost-efficient.