For engineering teams running a mature IaC practice, autonomous optimization introduces an inherent tension: any system that continuously adjusts infrastructure outside of Git creates drift, a gap between what's in your code and what's actually running.

Sedai's answer to that challenge is a set of three concrete integrations, and today we're announcing the latest: Guardrails as Code, now available for Kubernetes workload optimization.

Sedai’s IaC Story So Far

Sedai already offers two mechanisms for keeping your IaC in sync with our autonomous optimizations:

- Loopback Updates open a pull request in your Git repo within 24 hours of every validated optimization, so your IaC reflects what's actually running.

- CI/CD Overwrite Protection prevents your deployment pipeline from reverting Sedai's changes. It works through Sedai Sync for release-based pipelines, or the Live Sync Controller for GitOps environments like Argo CD and Flux.

Together, these handle the "after": what happens once Sedai has acted. But there's a "before" side too, and that's what we've addressed with Guardrails as Code.

Our Solution: Codifying Ranges, Not Fixed Values

Guardrails as Code lets you optimize cloud infrastructure at scale while keeping Git as your source of truth.

Today, resource configuration values — CPU limits, memory requests, replica counts — are almost universally expressed as fixed numbers in IaC. A single value, committed to code, treated as a stable fact about a service. This made sense when infrastructure was static and changes were expensive. It makes less sense in a world where workloads are dynamic, cloud resources are elastic, and optimization systems can act autonomously and continuously.

A better model is already embodied in Kubernetes pod autoscaler configuration: HPAs and VPAs don't require a fixed replica count or a fixed memory value. Instead, we specify ranges (minimums and maximums) and let an intelligent system (Kubernetes) operate within those bounds in real time.

This practice is standard, trusted, and pragmatic within the dynamic context of Kubernetes. As autonomous optimization becomes the new normal in infrastructure management, we must embrace the same solution for the values we entrust to autonomous systems, like CPU and memory allocation. When those systems adjust configurations on the fly to reduce costs and protect performance, static values quickly become stale. As with pod autoscalers, IaC in the context of autonomous optimization should define the envelope of acceptable behavior, and an optimization system like Sedai should find the best value within that envelope, continuously, based on real signals. Guardrails as Code is how we are attempting to live up to this new reality.

Introducing Guardrails as Code

Guardrails as Code lets you define the boundaries within which Sedai is allowed to operate — directly in your Git repository, as versioned YAML files, reviewed and merged like any other configuration change.

Think of it as a policy layer that lives in code. Before Sedai makes any optimization decision, it checks the applicable guardrails and constrains its option space accordingly. You might specify that a particular service should never run with fewer than 8 vCPU or more than 32 vCPU. You might set a minimum memory allocation for a latency-sensitive service that can't tolerate aggressive downsizing. You might define account-wide defaults that apply unless overridden at the group or resource level.
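To make "constrains its option space" concrete, here is a minimal sketch of bounding candidate values by a guardrail range. The `Range` type and the candidate numbers are hypothetical illustrations, not Sedai's implementation; only the 8 to 32 vCPU bounds come from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    """Inclusive lower and upper bound for one dimension (hypothetical type)."""
    minimum: float
    maximum: float

    def clamp(self, value: float) -> float:
        # Pull a candidate value back inside the allowed envelope.
        return max(self.minimum, min(value, self.maximum))


# Bounds from the example above: never fewer than 8 vCPU, never more than 32.
vcpu_guardrail = Range(minimum=8, maximum=32)

# Candidate values an optimizer might consider (made-up numbers).
candidates = [4, 12, 48]
bounded = [vcpu_guardrail.clamp(c) for c in candidates]
print(bounded)  # [8, 12, 32]
```

A candidate already inside the range passes through unchanged; anything outside is pulled to the nearest bound.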

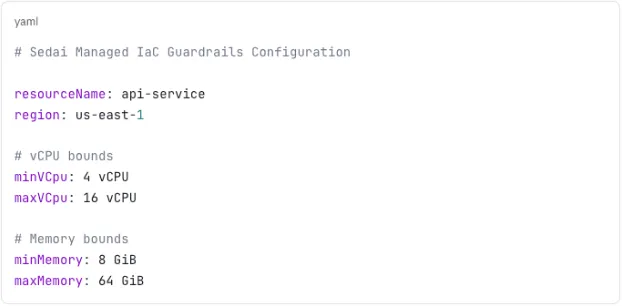

A guardrails file looks like this:

If a guardrails file doesn't exist yet for a resource, Sedai will automatically create one via pull request with a proposed set of initial values, so you're not starting from a blank page. The file still goes through your normal review process before taking effect.

Guardrails can be configured at three levels of granularity:

- Resource level — for services with specific requirements Sedai can't infer from signals alone (specialized hardware, contractual SLAs, etc.)

- Group level — apply consistent policies across a cluster, namespace, or tag-based group of resources

- Account level — set defaults that apply across everything, and override where needed with resource-level and group-level guardrails

A note on scope: Today, Guardrails as Code is available for Kubernetes workload optimization. Support for optimization of VMs and Kubernetes nodes is coming soon.

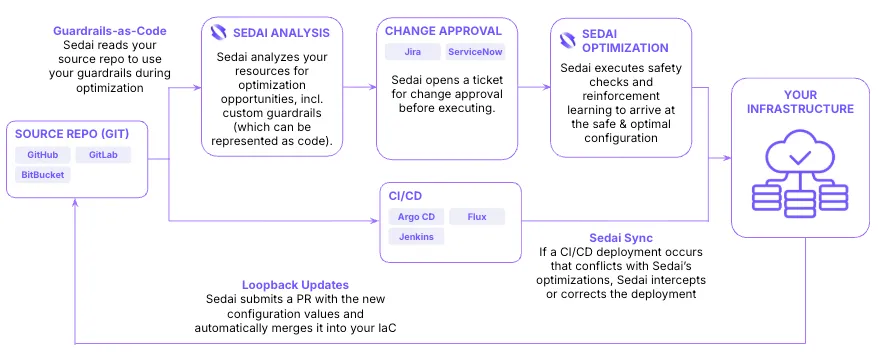

How the Three Mechanisms Work Together

Each mechanism addresses a distinct moment in the lifecycle of an autonomous optimization event:

- Guardrails as Code. Before Sedai acts, it evaluates optimization opportunities against the ranges your team has defined in Git, bounding the decision space from the start.

- Loopback Updates. Once a change is validated, Sedai opens a PR in your repo to bring your IaC in sync.

- CI/CD Overwrite Protection. If your pipeline fires in the window between Sedai's change and that PR being merged, Sedai catches and corrects it.

Throughout all of this, Sedai is doing what it's designed to do: continuously analyzing your workloads, executing changes safely, and learning from the results.

With all three integrations in place, the full lifecycle looks like this:

A Deeper Look: Using Guardrails as Code

Setting Up the Integration

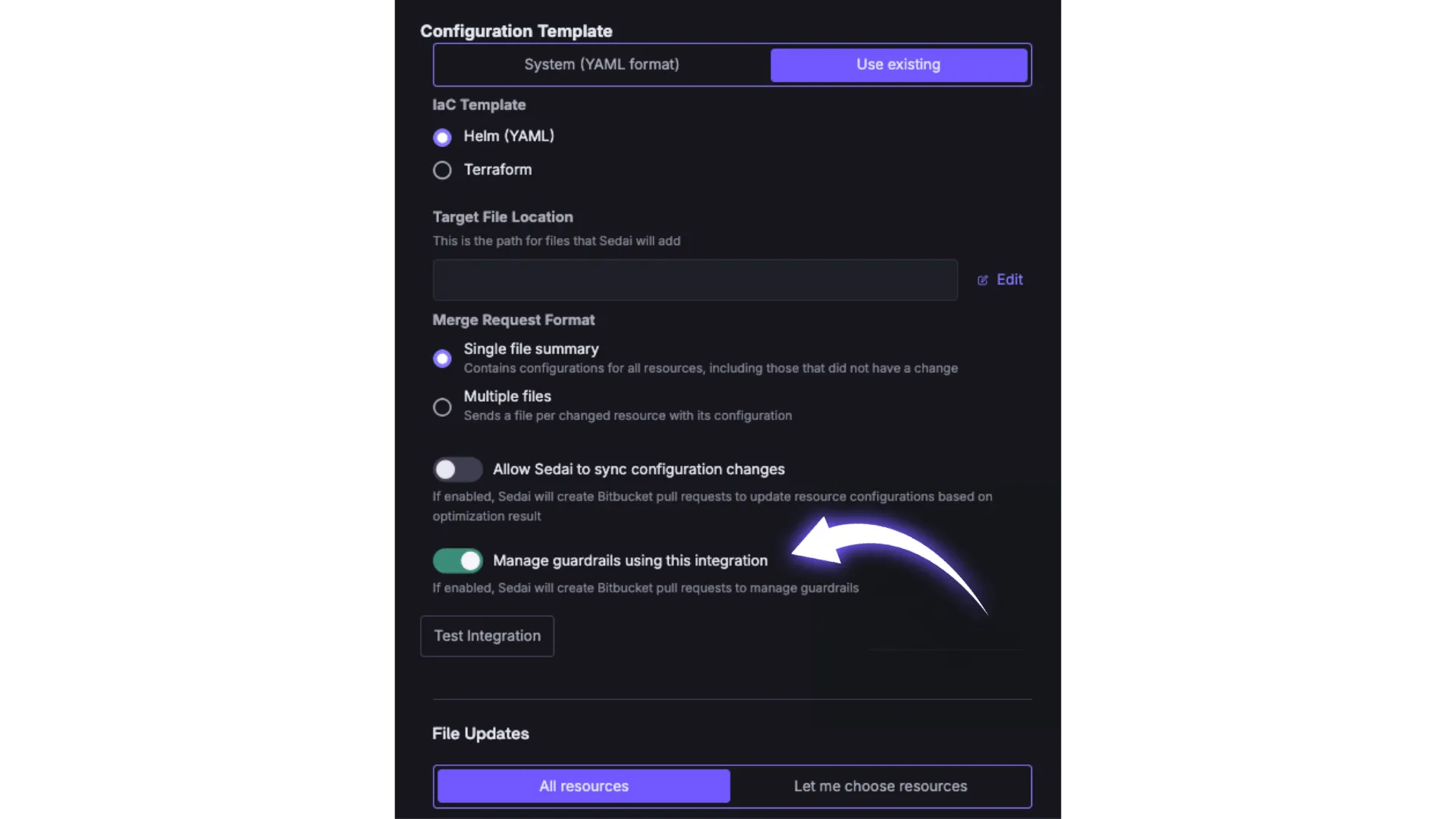

Guardrails as Code requires a Git integration to be configured in Sedai (Settings → Integrations), with the "Manage guardrails using this integration" option enabled. Sedai supports GitHub, GitLab, and Bitbucket Cloud.

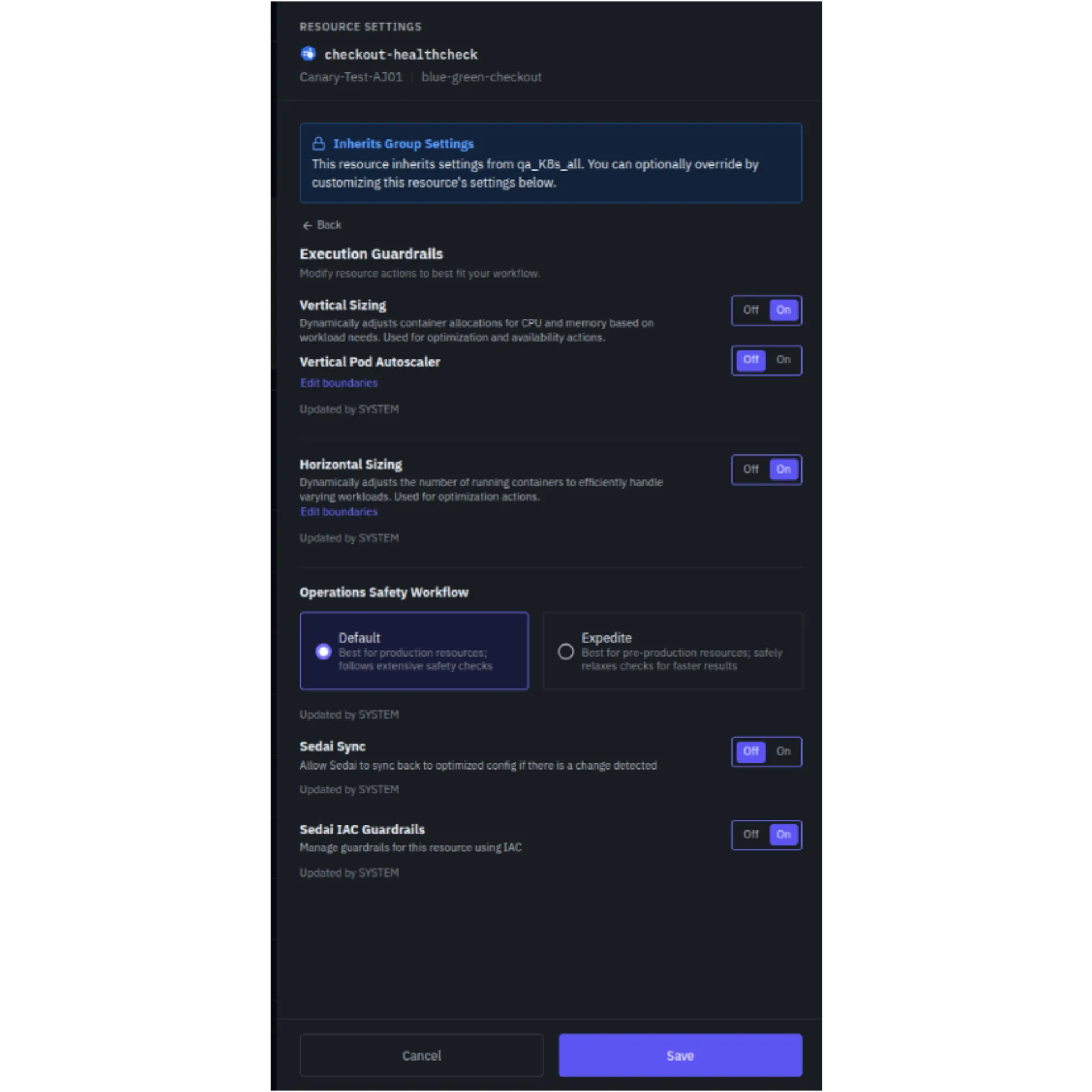

Once the integration is active, you can enable guardrails management for your resources at the resource level, group level, or account level from the Sedai UI. The guardrail values themselves live in Git from that point forward.

Managing guardrails at the resource level

Scope and Precedence

When multiple levels of guardrails apply to the same resource, Sedai resolves conflicts using a precedence hierarchy: resource-level settings override group-level settings, which in turn override account-level defaults. This gives you the flexibility to set sensible defaults broadly while carving out exceptions for individual services or environments that need tighter or looser constraints.
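The precedence hierarchy can be sketched as a layered merge. This is a hypothetical illustration: the `resolve` function, the dimension names, and the assumption that overrides apply per dimension (rather than per file) are not taken from Sedai's documentation.

```python
from typing import Optional

# Each level maps a dimension name to (min, max). All names are hypothetical.
Guardrails = dict[str, tuple[float, float]]


def resolve(
    account: Guardrails,
    group: Optional[Guardrails] = None,
    resource: Optional[Guardrails] = None,
) -> Guardrails:
    """Merge levels so resource overrides group, which overrides account."""
    effective: Guardrails = dict(account)
    for level in (group, resource):  # later levels take precedence
        if level:
            effective.update(level)
    return effective


account_defaults = {"vcpu": (1, 16), "memory_gib": (2, 64)}
group_policy = {"memory_gib": (4, 32)}
resource_override = {"vcpu": (8, 32)}

effective = resolve(account_defaults, group_policy, resource_override)
print(effective)  # {'vcpu': (8, 32), 'memory_gib': (4, 32)}
```

In this sketch the resource keeps the group's memory range because it only overrides vCPU, which mirrors the idea of setting broad defaults and carving out narrow exceptions.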

The Guardrails File

The guardrails YAML format is intentionally simple and human-readable. It specifies the resource name, region, and min/max bounds for the configuration dimensions Sedai can optimize — currently vCPU and memory for Kubernetes workload optimization.
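Because the file is plain data, it lends itself to simple checks during review. The sketch below validates a parsed guardrails document in Python. Every key name (`name`, `region`, `vcpu`, `memory_gib`, `min`, `max`) and the sample values are assumptions made for illustration; the real schema may differ, and the dict stands in for whatever a YAML parser would return.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bounds:
    minimum: float
    maximum: float


@dataclass(frozen=True)
class GuardrailsFile:
    resource_name: str
    region: str
    vcpu: Bounds
    memory_gib: Bounds


def validate(doc: dict) -> GuardrailsFile:
    """Check a parsed guardrails document and return a typed view of it."""
    def bounds(key: str) -> Bounds:
        raw = doc[key]
        lo, hi = raw["min"], raw["max"]
        if lo <= 0 or lo > hi:
            raise ValueError(f"{key}: expected 0 < min <= max, got {lo}..{hi}")
        return Bounds(lo, hi)

    return GuardrailsFile(
        resource_name=doc["name"],
        region=doc["region"],
        vcpu=bounds("vcpu"),
        memory_gib=bounds("memory_gib"),
    )


# A dict standing in for the parsed YAML; every key and value is hypothetical.
parsed = {
    "name": "checkout-service",
    "region": "us-east-1",
    "vcpu": {"min": 8, "max": 32},
    "memory_gib": {"min": 16, "max": 64},
}
print(validate(parsed))
```

A check like this could run in CI on the pull request, so an inverted range (minimum above maximum) is caught before the file is merged.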

When Sedai proposes initial guardrail values, it bases them on observed utilization patterns — so the proposed ranges are grounded in real data, not arbitrary defaults.

Get Started

Guardrails as Code is available now for Kubernetes workload optimization. If you're already a Sedai customer, you can enable it from Settings → Integrations. If you're new to Sedai, book a demo and we'll explain how the full IaC integration story applies to your environment.

Support for guardrails on VM and Kubernetes node optimization is coming soon. Stay tuned.